The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. Unlike GTX 660 Ti, which was a harvested GK104 GPU, GTX 660 is based on the brand-new GK106 GPU, which will have interesting repercussions for power consumption. Scaling down a GPU by disabling functional units often has diminishing returns, so GK106 will effectively “reset” NVIDIA’s position as far as power consumption goes. As a reminder, NVIDIA’s power target here is a mere 115W, while their TDP is 140W.

GeForce GTX 660 Series Voltages

| Ref GTX 660 Ti Load | Ref GTX 660 Ti Idle | Ref GTX 660 Load | Ref GTX 660 Idle |
| --- | --- | --- | --- |
| 1.175v | 0.975v | 1.175v | 0.875v |

Stopping to take a quick look at voltages, even with a new GPU nothing has changed. NVIDIA’s standard voltage remains at 1.175v, the same as we’ve seen with GK104. However idle voltages are much lower, with the GK106 based GTX 660 idling at 0.875v versus 0.975v for the various GK104 desktop cards. As we’ll see later, this is an important distinction for GK106.

Up next, before we jump into our graphs, let’s take a look at the average core clockspeed during our benchmarks. Because of GPU Boost, the boost clock alone doesn’t give us the whole picture, so we’ve recorded the clockspeed of our GTX 660 during each of our benchmarks when running it at 1920x1200 and computed the average clockspeed over the duration of the benchmark.

GeForce GTX 600 Series Average Clockspeeds

| | GTX 670 | GTX 660 Ti | GTX 660 |
| --- | --- | --- | --- |
| Max Boost Clock | 1084MHz | 1058MHz | 1084MHz |
| Crysis | 1057MHz | 1058MHz | 1047MHz |
| Metro | 1042MHz | 1048MHz | 1042MHz |
| DiRT 3 | 1037MHz | 1058MHz | 1054MHz |
| Shogun 2 | 1064MHz | 1035MHz | 1045MHz |
| Batman | 1042MHz | 1051MHz | 1029MHz |
| Portal 2 | 988MHz | 1041MHz | 1033MHz |
| Battlefield 3 | 1055MHz | 1054MHz | 1065MHz |
| Starcraft II | 1084MHz | N/A | 1080MHz |
| Skyrim | 1084MHz | 1045MHz | 1084MHz |
| Civilization V | 1038MHz | 1045MHz | 1067MHz |

With an official boost clock of 1033MHz and a maximum boost of 1084MHz on our GTX 660, we see clockspeeds regularly vary between the two points. For the most part our average clockspeeds are slightly ahead of NVIDIA’s boost clock, while in CPU-heavy workloads (Starcraft II, Skyrim), we can almost sustain the maximum boost clock. Ultimately this means that the GTX 660 is spending most of its time near or above 1050MHz, which will have repercussions when it comes to overclocking.
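As a rough cross-check of the GTX 660 column in the clockspeed table, the short Python sketch below averages the per-game figures and lists any game that falls below the official boost clock. The names `gtx660_avg_mhz` and `summarize` are purely illustrative, and the unweighted mean across games is an assumption made here for simplicity (the benchmarks do not all run for the same length of time), not part of the original test methodology.

```python
# Average GTX 660 clockspeeds (MHz), copied from the clockspeed table.
gtx660_avg_mhz = {
    "Crysis": 1047,
    "Metro": 1042,
    "DiRT 3": 1054,
    "Shogun 2": 1045,
    "Batman": 1029,
    "Portal 2": 1033,
    "Battlefield 3": 1065,
    "Starcraft II": 1080,
    "Skyrim": 1084,
    "Civilization V": 1067,
}

OFFICIAL_BOOST_MHZ = 1033  # NVIDIA's official boost clock, from the text


def summarize(clocks, boost_mhz):
    """Return the unweighted mean clock and the games averaging below boost_mhz."""
    mean_mhz = sum(clocks.values()) / len(clocks)
    below = [game for game, mhz in clocks.items() if mhz < boost_mhz]
    return mean_mhz, below


mean_mhz, below_boost = summarize(gtx660_avg_mhz, OFFICIAL_BOOST_MHZ)
print(f"Mean of per-game averages: {mean_mhz:.1f}MHz")
print(f"Games averaging below the official boost clock: {below_boost}")
```

With the table's figures this works out to a mean of roughly 1055MHz, and Batman is the only title that averages below the 1033MHz boost clock.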

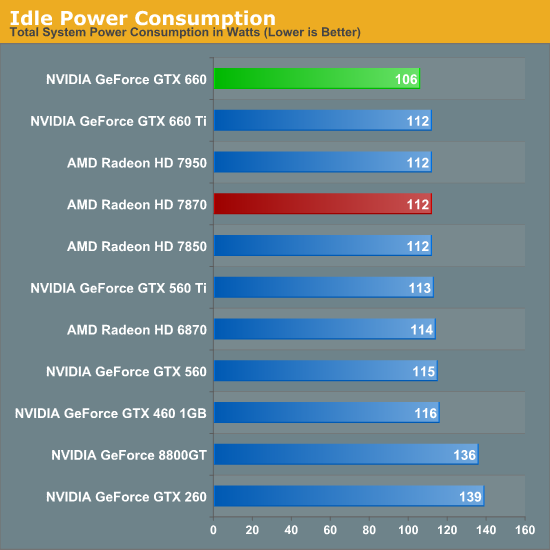

Starting as always with idle power, we immediately see an interesting outcome: GTX 660 has the lowest idle power usage. And it’s not just a one or two watt difference either, but rather a 6W (at the wall) difference between the GTX 660 and both the Radeon HD 7800 series and the rest of the GTX 600 series. All of the current 28nm GPUs have offered refreshingly low idle power usage, but with the GTX 660 we’re seeing NVIDIA cut into what was already a relatively low idle power usage and shrink it even further.

NVIDIA’s claim is that their idle power usage is around 5W, and while our testing methodology doesn’t allow us to isolate the video card, our results corroborate a near-5W value. The biggest factors here seem to be a combination of die size and idle voltage; we naturally see a reduction in idle power usage as we move to smaller GPUs with fewer transistors to power up, but the GTX 660’s idle voltage of 0.875v is also 0.1v below GK104’s 0.975v idle voltage and 0.075v lower than the GT 640 (GK107)’s idle voltage. The combination of these factors has pushed the GTX 660’s idle power usage to the lowest point we’ve ever seen for a GPU of this size, which is quite an accomplishment. Though I suspect the real payoff will be in the mobile space, as even with Optimus, mobile GPUs have to spend some time idling, which is another opportunity to save power.
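To get a feel for how much the voltage drop alone could matter, here is a minimal sketch that assumes the textbook relationship in which dynamic (switching) power scales with the square of voltage, with clockspeed, capacitance, and activity held equal. That is a simplification introduced here, not NVIDIA’s or our methodology: it ignores leakage and the die size difference entirely, so treat the result as an illustration rather than a prediction. The function name `dynamic_power_ratio` is hypothetical.

```python
def dynamic_power_ratio(v_new, v_old):
    """Ratio of dynamic power at v_new versus v_old, assuming power is
    proportional to voltage squared and everything else is held equal."""
    return (v_new / v_old) ** 2


GK106_IDLE_V = 0.875  # GTX 660 idle voltage, from the voltage table
GK104_IDLE_V = 0.975  # GTX 660 Ti (GK104) idle voltage, from the voltage table

ratio = dynamic_power_ratio(GK106_IDLE_V, GK104_IDLE_V)
print(f"Voltage-only dynamic power ratio: {ratio:.3f}")
```

Under that assumption the lower idle voltage by itself would account for a reduction of roughly a fifth in switching power, before the smaller die is taken into account.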

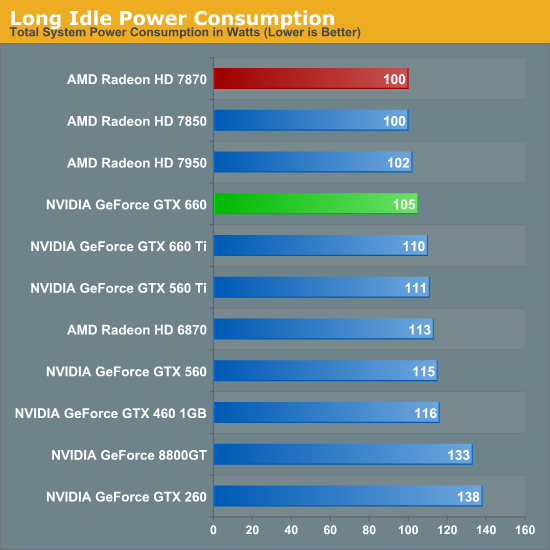

At this point the only area in which NVIDIA doesn’t outperform AMD is in the so-called “long idle” scenario, where AMD’s ZeroCore Power technology gets to kick in. 5W is nice, but next-to-0W is even better.

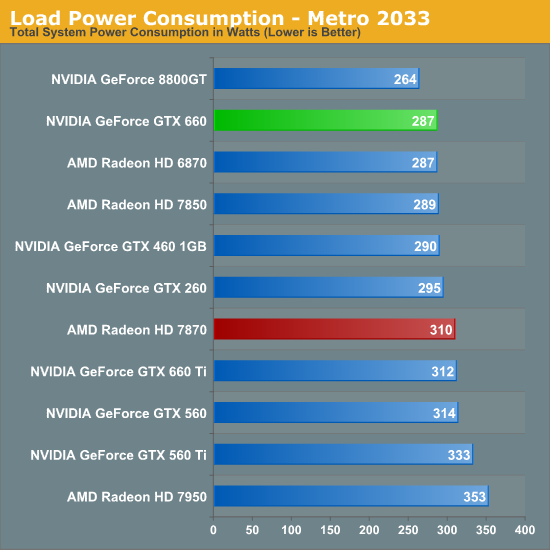

Moving on to load power consumption, given NVIDIA’s focus on efficiency with the Kepler family it comes as no great surprise that NVIDIA continues to hold the lead here. The gap between GTX 660 and 7870 isn’t quite as large as the gap we saw between GTX 680 and 7970, but NVIDIA still has a convincing lead, with the GTX 660 consuming 23W less at the wall than the 7870. This puts the GTX 660 at around the power consumption of the 7850 (a card with a similar TDP) or the GTX 460. On AMD’s part, Pitcairn is a more petite (and less compute-heavy) part than Tahiti, which means AMD doesn’t face nearly the disparity they do at the high end.

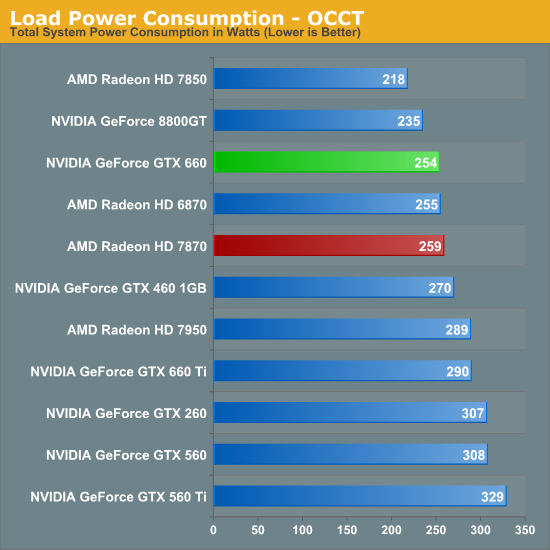

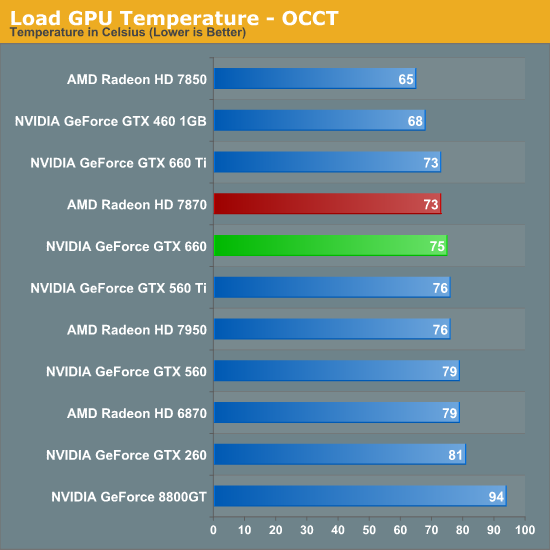

OCCT on the other hand has the GTX 660 and 7870 much closer, thanks to AMD’s much more aggressive throttling through PowerTune. This is one of the only times where the GTX 660 isn’t competitive with the 7850 in some fashion, though based on our experience our Metro results are more meaningful than our OCCT results right now.

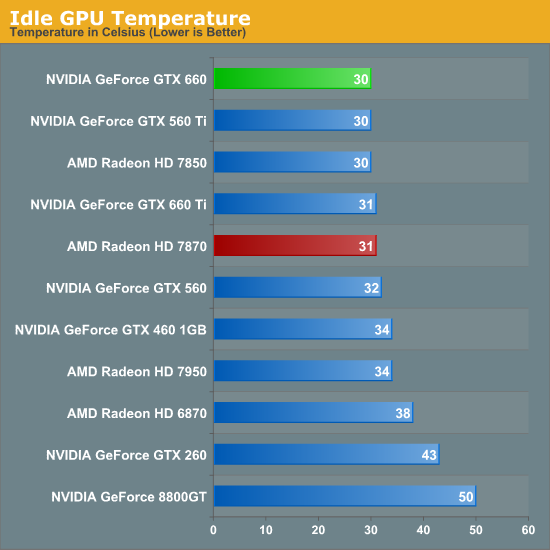

As for idle temperatures, there are no great surprises. A good blower can hit around 30C in our testbed, and that’s exactly what we see.

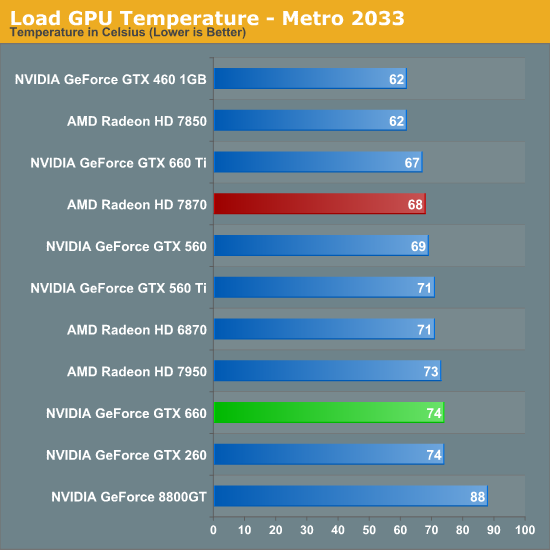

Temperatures under Metro look good enough, though despite their power advantage NVIDIA can’t keep up with the blower-equipped 7800 series. At the risk of spoiling our noise results, the 7800 series doesn’t do significantly worse for noise, so it’s not immediately clear why the GTX 660 is 6C warmer here. Our best guess would be that the GTX 660’s cooler just isn’t quite up to the potential of the 7800 series’ reference cooler.

OCCT actually closes the gap between the 7870 and the GTX 660 rather than widening it, which is the opposite of what we would expect given our earlier temperature data. Reaching the mid-70s, neither card is particularly cool, but both are still well below their thermal limits, meaning there’s plenty of thermal headroom to play with.

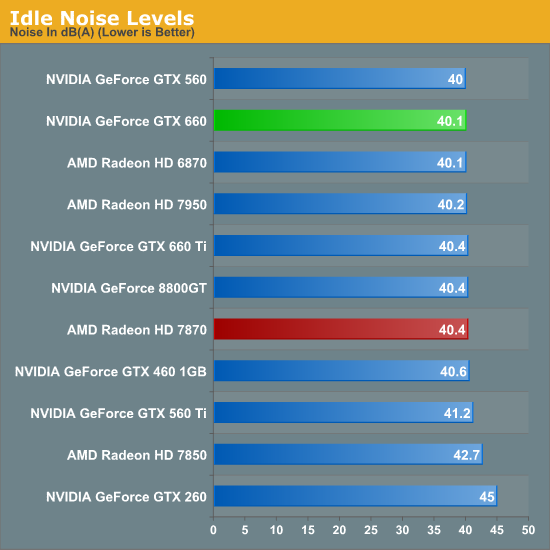

Last but not least we have our noise tests, starting with idle noise. Again there are no surprises here; the GTX 660’s blower is solid, producing no more noise than any other standard blower we’ve seen.

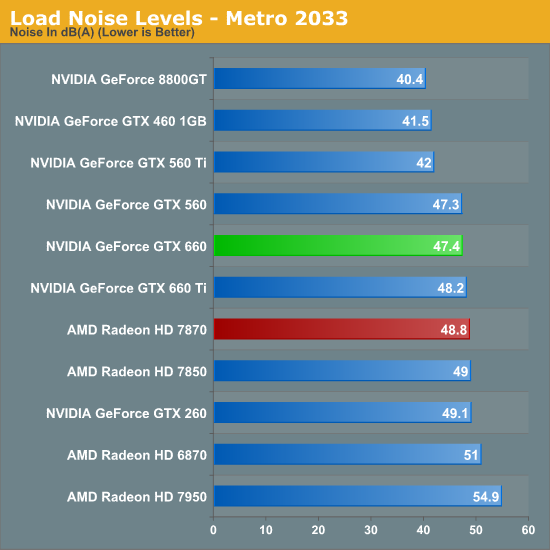

While the GTX 660 couldn’t beat the 7870 on temperatures under Metro, it can certainly beat the 7870 when it comes to noise. The difference isn’t particularly great – just 1.4dB – but every bit adds up, and 47.4dB is historically very good for a blower. However the use of a blower on the GTX 660 means that NVIDIA still can’t match the glory of the GTX 560 Ti or GTX 460; for that we’ll have to take a look at retail cards with open air coolers.

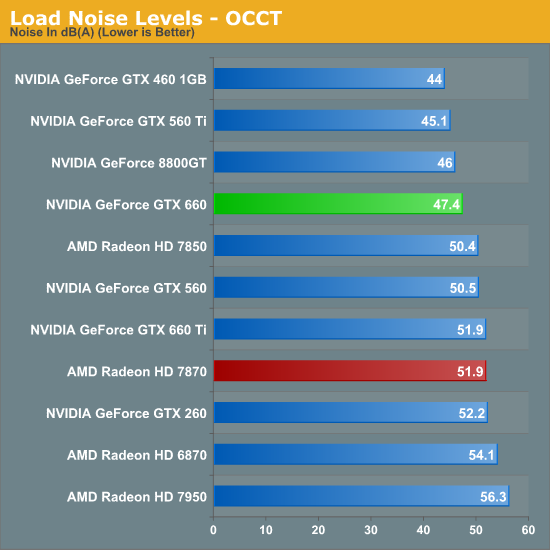

Similar to how AMD’s temperature lead eroded with OCCT, AMD’s slight loss in load noise testing becomes a much larger gap under OCCT. A 4.5dB difference is now solidly in the realm of noticeable, and further reinforces the fact that the GTX 660 is the quieter card under both normal and extreme situations.
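For a sense of scale, the sketch below converts the two noise gaps quoted above into sound power ratios using the standard decibel definition (a difference of 10dB is a tenfold change in power). The helper name `sound_power_ratio` is illustrative, and this assumes the readings can be compared as plain decibel levels; perceived loudness does not scale linearly with sound power, so the ratios are not a statement about how much louder a card seems.

```python
def sound_power_ratio(delta_db):
    """Convert a level difference in decibels to a ratio of sound power."""
    return 10 ** (delta_db / 10)


METRO_GAP_DB = 1.4  # GTX 660 vs. 7870 under Metro, from the text
OCCT_GAP_DB = 4.5   # GTX 660 vs. 7870 under OCCT, from the text

for label, gap in (("Metro", METRO_GAP_DB), ("OCCT", OCCT_GAP_DB)):
    print(f"{label}: a {gap}dB gap is about {sound_power_ratio(gap):.2f}x the sound power")
```

By this measure the 1.4dB gap under Metro is a difference of roughly 1.4x in sound power, while the 4.5dB gap under OCCT is closer to 2.8x.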

We’ll be taking an in-depth look at some retail cards later today with our companion retail card article, but with those results already in hand we can say that despite the use of a blower the “reference” GTX 660 holds up very well. Open air coolers can definitely beat a blower with the usual drawbacks (that heat has to go somewhere), but when a blower is only hitting 47dB, you already have a fairly quiet card. So even a reference GTX 660 (as unlikely as it is to appear in North America) looks good all things considered.

147 Comments


rarson - Friday, September 14, 2012 - link

chizow doesn't understand the concept of early adoption. He only mentioned rebates because that Nvidia rebate debacle has been beaten over his head time and time again.

chizow - Friday, September 14, 2012 - link

Ah just a matter of time before the only idiot on the internet willing to defend AMD's laughable 28nm launch prices arrives to defend their honor.

How do you feel now about those $550, $450, and $350 pricepoints you so vigorously defended when the 7970/7950/7870 launched?

And yes it's important to mention the rebates because revisionists like yourself are so quick to forget the actual rebates, they only mention the price drops.

So just as I asked then, where's AMD's rebates given the floor has completely dropped from under their entire pricing structure just a few months after release, just as I predicted?

Galidou - Sunday, September 16, 2012 - link

We did not vigorously defend the pricing scheme; we just don't see it as being as bad as you do, which is why we answer you. Every time I see people like you speak about AMD the way they do, I just see so much hate. When you start to say things like idiot and try to say we're defending our ''honor'', you're past the point where your arguments are worth even a penny to me.

If you have to disrespect people when speaking about video cards, there's one thing I have to say: you have a chosen side and it hinders your judgement. Stay respectful and I'll give you credit, but now it's too late; you just proved to us that you're not fit to judge well in this discussion.

Anyone knows that a judge couldn't work on a murder case if the victim is in his own family because it... would severely hinder his judgement by putting emotions in the way. Disrespect to me is the worst way of acting when arguing. You lost it all there to me. I'm just sad I replied to your previous messages without reading this one first; I would have realized that it's too late for you.

AMD and Nvidia are both companies trying to make money. Trying to put one on a pedestal as if everything they do is related to god and thus is perfect... AMD's 4870 was a mistake, 7970 was a mistake, gtx 280 is related to god and it's AMD's pricing scheme that is at fault.

Everything AMD does is wrong, everything Nvidia does wrong is AMD's fault.... Like my 6800 gt that never worked properly with that Nforce 3 chipset, AMD's fault, that driver release that fried tons of Nvidia's video cards, AMD's fault, GTX 670's performance so close to GTX 680's performance, AMD's fault, 660 ti 192 bit bus and 24 ROPs, AMD's fault (I heard they stole them during the night and are not willing to give em back unless Nvidia pays a heavy ransom), Why isn't Nvidia making more money than Intel and Microsoft, AMD's fault, my grandfather's cancer, AMD's fault, wow, life is a bag full of surprises. chizow's lack of respect calling us stupid, AMD's fault, we lost our honor because of... AMD's fault.....

chizow - Sunday, September 16, 2012 - link

Uh disrespect? You mean like you questioning very easily referenced facts like price and performance at every turn, or questioning how much I paid for a 2xGTX 670 or even that they existed with 680 PCB? Or questioning numbers only to be rebuked by a link from a widely respected website, only to question that website, then get provided with more benchmarks from one of the site you linked and question that one too?

There comes a point you can't reason with people like you, so if you want to argue about emotional attachment leading to irrational behavior, you should really look in the mirror.

But what should I care, as you said everyone must look themselves in the mirror and be at peace with their own decisions in life, I can for a fact say I'm good with my buying decision this round, do you think one can say the same about buying AMD 28nm parts under their ridiculous asking prices, especially given all of the recent price drops?

Also, I have been critical of Nvidia as well with 28nm, so to say I think they can do no wrong is downright dishonest on your part. There's a reason I waited to buy my 670s instead of snatching them up at launch for $400, but then again, I've been at this long enough to make an informed decision.

Galidou - Sunday, September 16, 2012 - link

''Uh disrespect? You mean like you questioning very easily referenced facts like price and performance at every turn, or questioning how much I paid for a 2xGTX 670 or even that they existed with 680 PCB''

Nope, I mean calling others idiots: ''Ah just a matter of time before the only idiot on the internet willing to defend AMD's laughable 28nm launch prices arrives to defend their honor.''

End of the discussion, you're a disrespectful Nvidia fanboy, I doubted for the gtx 670 price you said because I was vigorously looking for a 670 but not a reference fan design, something with an aftermarket fan that will stay cool for a nice and quiet overclock.

As for what you call facts, life revolves around perception as interpreted by the brain. Women tend to dislike it when their boyfriend cheats on them, while in some countries it's normal to have many wives, know what I mean? Perception is something personal; something might be bad and abnormal and seem like a fact from someone's standpoint, but for another human being it might be just normal, depending on their chosen side, past experiences and emotions. If someone totally believes 2+2 makes 5 and no one can convince him of anything else, then to him it's the truth. If the only truth to you is your truth, you will disagree all of your life with other people because they have a different point of view.

All I was discussing with you isn't that AMD is perfect and that their pricing is perfect, I was just defending my point of view, the way I saw things while saying ''TO ME IT SEEMS LOGICAL'' while all you had to say was ''REFERENCED FACTS, FACTS, FACTS, FACTS'' not caring about how I perceived things, I just hoped you could understand why I see things this way, I understand the way you see things because I can tell it seems logical to me but that isn't the way I see IT.

chizow - Monday, September 17, 2012 - link

"End of the discussion, you're a disrespectful Nvidia fanboy."

Please don't talk about respect when you can't even adhere to your own standards.

If you claim "TO ME SEEMS LOGICAL" while questioning my conclusions but ignoring facts and historical data that are relevant to the industry in general and graphics cards in particular, that suggests to me that your thought processes are not logical at all, but born of ignorance or subnormal intelligence.

After all, a simpleton can believe Unicorns and Fairies exist, but that does not make it so.

You brought up the GTX 280 again, yet once again you can't seem to understand the very key differences with the GTX 280 vs. 7970 launch prices. I've already outlined them, do I need to do so again?

Simple question, do you think Nvidia's pricing was worse at launch with the 280 than AMD's pricing with the 7970?

Galidou - Monday, September 17, 2012 - link

''You brought up the GTX 280 again, yet once again you can't seem to understand the very key differences with the GTX 280 vs. 7970 launch prices. I've already outlined them, do I need to do so again?''

I brought that up?? You have to read back to realize you started it all again speaking of rebates and such which was the only reason why I answered to you to show that not everyone sees the way you do.

Maybe we could just state that Nvidia made a mistake by pricing the gtx 680 at 500$ because it was stronger than a 600$ card and then they are the faulty one as you usually see things.

''I've already outlined them, do I need to do so again?''

Well if you outlined them, if it was so different, why did you bring up the past of the gtx 280 to compare to this different story in the first place?

Calling you an Nvidia fanboy isn't disrespectful to me the way calling others idiots defending their honor is. It means you have a chosen side. Maybe it might seem like it's an attack but it's not, sorry if the hat fits your head.

Galidou - Monday, September 17, 2012 - link

Oh and I was wondering, for someone so informed about your purchases and everything, did you say you owned a gtx 280? For someone buying 330$ gtx 670 that's quite a fun ''fact'' considering you whined about the TOO LOW price of the radeon 4870, but no, you didn't get to buy one of those for 250$ on special, you got the gtx 280.... Fun stuff when the 4850/4870 were at the TOP of Performance/dollar charts, which was something we don't see often.....(fanboyism?)

I'm an informed buyer which is why the only video cards I bought brand new at launch for me, my wife or friends are: Geforce ti 4200, radeon hd 9500 flashed to 9700 pro, 8800 gt, radeon 4850/4870, gtx 460 ti, radeon 6850/6870, gtx 660 ti and the radeon 7950 I super overclocked for my 3 monitors and skyrim :)

And yes there are ATI cards included in my buying decision which doesn't make me a Fanboy and helps my OPINION being undistorted by emotions thus the reason I'm not calling others idiots when speaking in forums related to VIDEO CARDS.

Galidou - Monday, September 17, 2012 - link

''And yes there are ATI cards included in my buying decision''

I meant Nvidia cards :)

chizow - Monday, September 17, 2012 - link

Since you love to make this about me, when it really isn't....

Yes I bought a GTX 280 at launch but I waited a few days and got lucky on a Bing cash back promotion on Ebay. 35% Bing Cash Back, quite a few others got it as well (feel free to google it), brought the total to $420ish. Then Nvidia issued their big rebate after the 4870 launch, so I had the option of $120 check or $150 EVGA bucks, I took the cash.

So $300, minus the $220 I got for my 8800GTX on Ebay and I paid a whopping total of $80 out of pocket for the fastest single GPU. Early 2009 I got a 2nd 280 for $230 in a Dell deal after the economic crash that dropped prices on all GPUs....

Obviously not everyone would have been so fortunate, but if I didn't get 280s, I might very well have gotten 260s or waited for 285s.