The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

Just What Is NVIDIA’s Competition & The Test

Every now and then it’s productive to dissect NVIDIA’s press presentation to get an idea of what NVIDIA is thinking. NVIDIA’s marketing machine is generally laser-focused, but even so it’s not unusual for them to have their eye on more than one thing at a time.

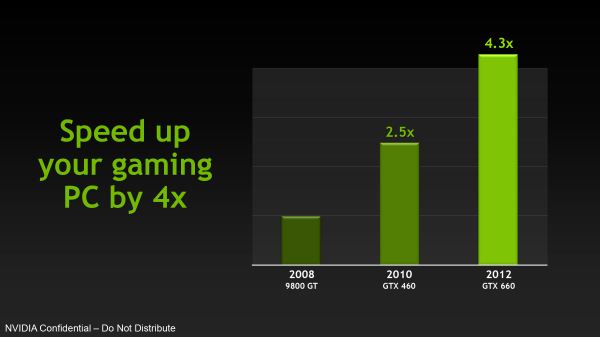

In this case, ostensibly NVIDIA’s competition for the GTX 660 is the Radeon HD 7800 series. But if we actually dig through NVIDIA’s press deck we see that they only spend a single page comparing the GTX 660 to a 7800 series card (and it’s a 7850 at that). Meanwhile they spend 4 pages comparing the GTX 660 to prior generation NVIDIA products like the GTX 460 and/or the 9800GT.

The most immediate conclusion is that while NVIDIA is of course worried about stiff competition from AMD, they’re even more worried about competition from themselves right now. The entire computer industry has been facing declining revenues as upgrade cycles drag out, with older hardware remaining “good enough” for longer periods of time, and NVIDIA is not immune to that. To even be in competition with AMD, NVIDIA needs to convince its core gaming user base to upgrade in the first place, which, it seems, is no easy task.

NVIDIA has spent a lot of time in the past couple of years worrying about the 8800GT/9800GT in particular. “The only card that matters” was a massive hit for the company straight through 2010, which has made it difficult to get users to upgrade even 4 years later. As a result, what was once a 2-year upgrade cycle has slowly stretched out to become a 4-year upgrade cycle, which means NVIDIA only gets to sell half as many cards in that timeframe. Which leads us back to NVIDIA’s press presentation: even though the GTX 460/560 has long since supplanted the 9800GT’s install base, NVIDIA is still in competition with themselves 4 years later, trying to drive their single greatest DX10 card into the sunset.
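The "half as many cards" point is simple arithmetic. As a rough sketch, assuming a fixed install base where every user buys exactly one card per upgrade cycle (real buyers are far less uniform), doubling the cycle length halves the number of cards sold over any window both cycle lengths divide evenly. The function name `cards_sold`, the 1,000,000-user install base, and the 8-year window are hypothetical illustrations, not NVIDIA figures.

```python
def cards_sold(users: int, window_years: int, cycle_years: int) -> int:
    """Cards sold over a window if each user upgrades once per cycle.

    Hypothetical, simplified model: a fixed install base, one card per
    user per cycle, and partial cycles are not counted.
    """
    upgrades_per_user = window_years // cycle_years
    return users * upgrades_per_user


users = 1_000_000  # hypothetical install base, not a real figure
window = 8         # years; evenly divisible by both cycle lengths

two_year = cards_sold(users, window, 2)   # 4 upgrades per user
four_year = cards_sold(users, window, 4)  # 2 upgrades per user
print(two_year, four_year, four_year / two_year)  # prints: 4000000 2000000 0.5
```

Under these assumptions the ratio comes out to 0.5 regardless of the install-base size, which is the halving described above.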

The Test

The official launch drivers for the GTX 660 are 306.23, which are the latest iteration of NVIDIA’s R304 branch of drivers. Besides adding support for the GTX 660, these drivers are performance-identical to earlier R304 drivers in our tests.

Also, we'd like to give a quick thank you to Antec, who rushed out a replacement True Power Quattro 1200 PSU on very short notice after the fan went bad on our existing unit. Thanks guys!

| Component | Configuration |
|---|---|
| CPU | Intel Core i7-3960X @ 4.3GHz |
| Motherboard | EVGA X79 SLI |
| Chipset Drivers | Intel 9.2.3.1022 |
| Power Supply | Antec True Power Quattro 1200 |
| Hard Disk | Samsung 470 (256GB) |
| Memory | G.Skill Ripjaws DDR3-1867 4 x 4GB (8-10-9-26) |
| Case | Thermaltake Spedo Advance |
| Monitor | Samsung 305T |
| Video Cards | AMD Radeon HD 6870, AMD Radeon HD 7850, AMD Radeon HD 7870, AMD Radeon HD 7950, NVIDIA GeForce 8800GT, NVIDIA GeForce GTX 260, NVIDIA GeForce GTX 460 1GB, NVIDIA GeForce GTX 560, NVIDIA GeForce GTX 560 Ti, NVIDIA GeForce GTX 660 Ti |
| Video Drivers | NVIDIA ForceWare 304.79 Beta, NVIDIA ForceWare 305.37, NVIDIA ForceWare 306.23 Beta, AMD Catalyst 12.8 |
| OS | Windows 7 Ultimate 64-bit |

147 Comments

Margalus - Thursday, September 13, 2012

you say the stock 660 looks bad when compared to an overclocked 7870? what a shock that is! I guess it's always fair to say an nvidia card is bad when comparing the stock reference nv card to overclocked versions of its nearest amd competitor.

Patflute - Friday, September 14, 2012

Be fair and overclock both...

poohbear - Thursday, September 13, 2012

well after reading this im still have with my Gigabyte OC gtx 670 i got 2 months ago for $388. I will NOT be upgrading for 3 years & im confident my GTX 670 will still be in the upper segment in 3 years (like my 5870 that i upgraded from), so @ $130/yr it's a great deal.

poohbear - Thursday, September 13, 2012

erm, i meant i'm still happy*. sucks that u can't edit on these comments. :p

KineticHummus - Friday, September 14, 2012

i had no idea what you meant with your "im still happy" edit until I went back to read your original statement again. somehow I mentally replaced the "have" with "happy" lol. reading fail for me...

distinctively - Thursday, September 13, 2012

Looks like the 660 is getting a nasty little spanking from the 7870 when you look around at all the reviews. GK106 appears to lose in just about every metric compared to Pitcairn.

Locateneil - Thursday, September 13, 2012

I just built a PC with a 3770K and an Asus Z77-V Pro. I was thinking of buying a GTX 670 for my system, but now I'm confused: would it be better to go with 2 GTX 660s in SLI?

Ryan Smith - Friday, September 14, 2012

Our advice has always been to prefer a single more powerful card over a pair of weaker cards in SLI. SLI is a great mechanism to extend performance beyond what a single card can provide, but its inconsistent performance and inherent drawbacks (the need for SLI profiles, and microstuttering) mean that it's not a good solution when you can have a single, more powerful GPU.

knghtwhosaysni - Thursday, September 13, 2012

Do you guys think you could show frametimes like techreport does in your reviews? It can show some deficiencies in rendering that average FPS doesn't, like with Crysis 2: http://techreport.com/review/23527/nvidia-geforce-...

It's nice that techreport does it, but I think Anandtech is the first stop for a lot of people who are looking for benchmarks, and I think if you guys showed this data in your own reviews then it would really push AMD and Nvidia to iron out their latency spike problems.

Ryan Smith - Friday, September 14, 2012

We get asked this a lot. I really like Scott's methodology there, so if we were to do this I'd want to do more than just copy him; I'd want to find some way to improve on it (which is no easy task).

To that end, I find FRAPS to be at a higher level than I'd like. It measures when frames are handed off to the GPU rather than when the GPU actually finishes each frame. These times are strongly correlated, but I'd rather have more definitive low-level data from the GPU itself. If we could pull that off, then frametimes are definitely something we'd look into.
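The commenter's point, that average FPS can hide latency spikes, can be illustrated with a few lines of Python. This is a minimal sketch in the spirit of the frame-time analysis discussed above, not Tech Report's or AnandTech's actual tooling. It assumes you already have per-frame times in milliseconds (for instance from a FRAPS frametimes log; parsing that format is not shown), and the function name `frame_time_summary`, the nearest-rank 99th percentile, the 50 ms threshold, and both sample series are hypothetical choices for illustration. Because FRAPS-style timestamps record when frames are handed off rather than when the GPU finishes them, numbers computed this way inherit that limitation.

```python
import math


def frame_time_summary(frame_times_ms: list[float]) -> tuple[float, float, float]:
    """Return (average FPS, 99th-percentile frame time in ms, ms spent beyond 50 ms).

    frame_times_ms holds per-frame durations in milliseconds. The 50 ms
    cutoff is an illustrative threshold, not a standard.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_ms = sum(frame_times_ms)
    avg_fps = len(frame_times_ms) * 1000.0 / total_ms
    ordered = sorted(frame_times_ms)
    # Nearest-rank 99th percentile: the smallest value with >= 99% of frames at or below it.
    rank = math.ceil(99 * len(ordered) / 100)
    p99_ms = ordered[rank - 1]
    # Total time accumulated past the threshold, so long hitches weigh more than short ones.
    beyond_50_ms = sum(t - 50.0 for t in frame_times_ms if t > 50.0)
    return avg_fps, p99_ms, beyond_50_ms


# Hypothetical sample data, not measurements from this review.
steady = [20.0] * 100              # evenly paced frames
spiky = [15.0] * 95 + [100.0] * 5  # faster on average, but with hitches

for name, series in (("steady", steady), ("spiky", spiky)):
    fps, p99, beyond = frame_time_summary(series)
    print(f"{name}: {fps:.1f} FPS avg, 99th pct {p99:.0f} ms, {beyond:.0f} ms beyond 50 ms")
# steady: 50.0 FPS avg, 99th pct 20 ms, 0 ms beyond 50 ms
# spiky: 51.9 FPS avg, 99th pct 100 ms, 250 ms beyond 50 ms
```

The spiky series wins on average FPS yet has a 99th-percentile frame time five times worse, which is exactly the kind of stutter an average alone would hide.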