The Ivy Bridge Preview: Core i7 3770K Tested

by Anand Lal Shimpi on March 6, 2012 8:16 PM EST - Posted in

- CPUs

- Intel

- Core i7

- Ivy Bridge

General Performance

SYSMark 2007 & 2012

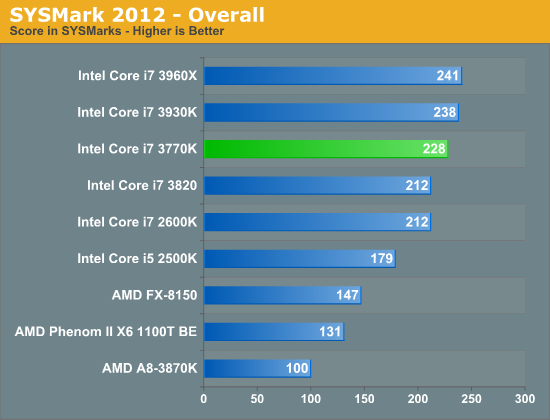

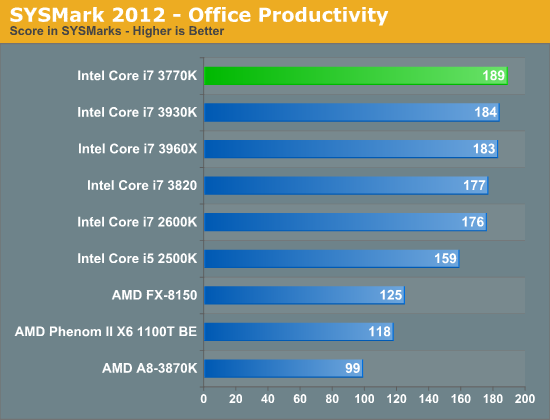

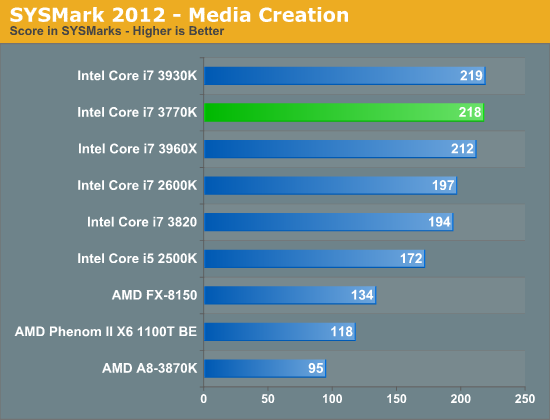

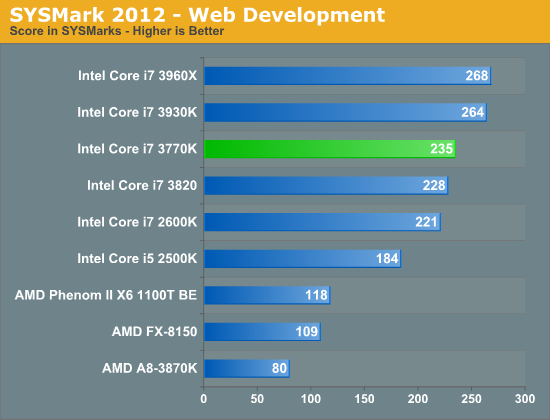

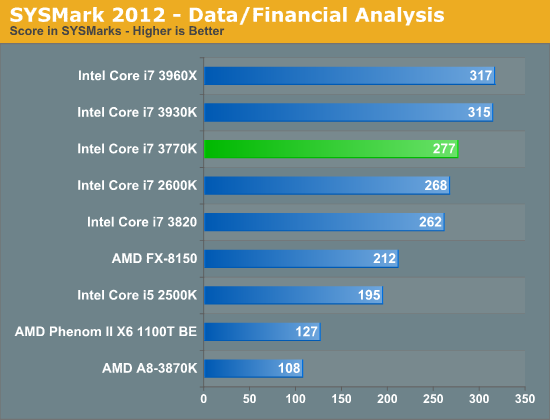

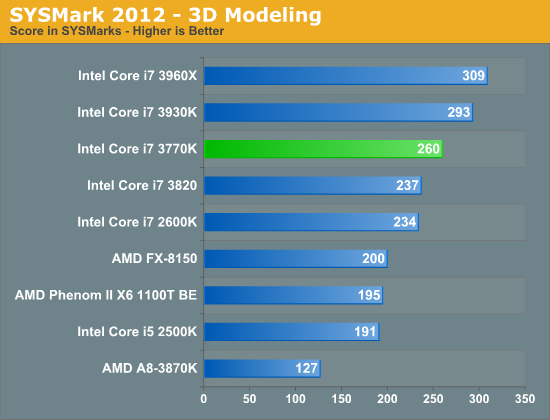

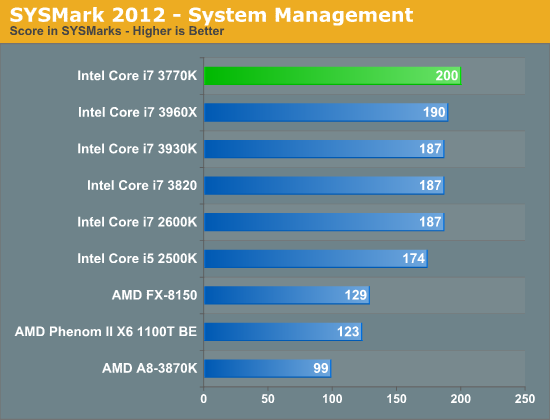

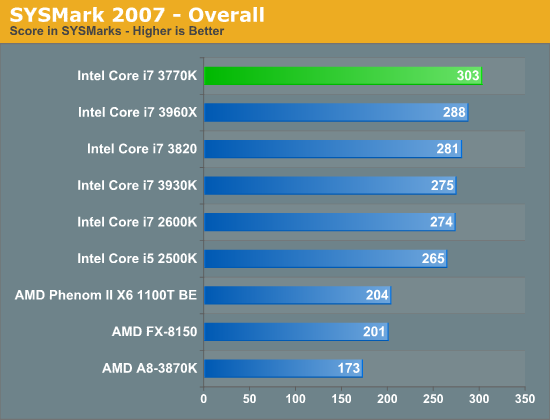

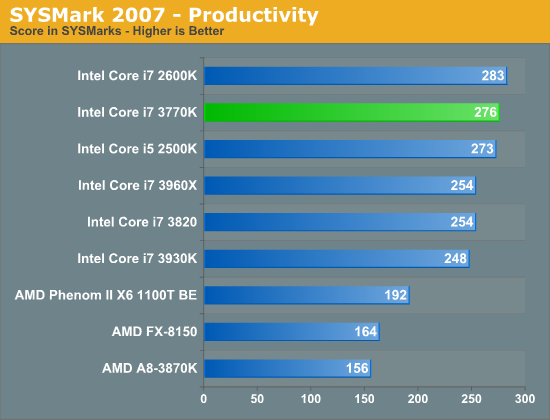

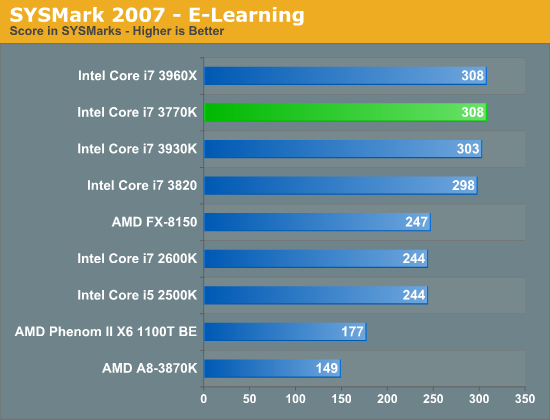

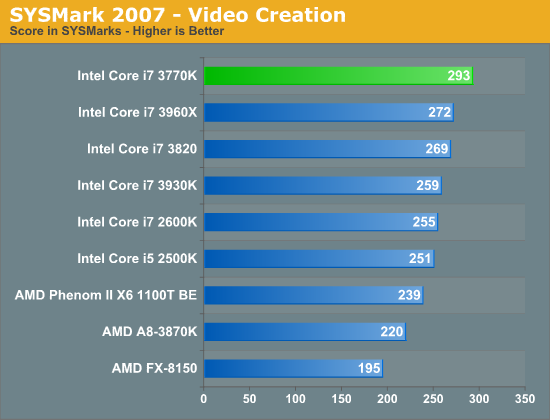

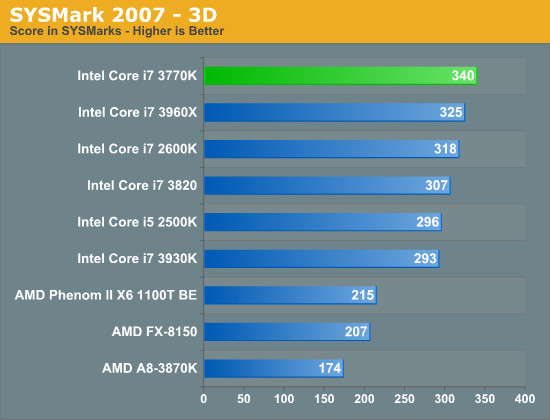

Although they are not the best indication of overall system performance, the SYSMark suites do give us a good idea of performance in lighter workloads than the ones we usually test. SYSMark 2007 is a better indication of low thread count performance, although 2012 isn't tremendously better in that regard.

As the SYSMark suites aren't particularly thread heavy, the 6-core Sandy Bridge E CPUs have little advantage here. The 3770K, however, manages to slot in above all of the other Sandy Bridge parts, running 5 - 20% faster than the 2600K. The biggest advantages show up in either the lightly threaded tests or the FP-heavy benchmarks. Given what we know about Ivy's enhancements, this is exactly what we'd expect.

195 Comments


tipoo - Wednesday, March 7, 2012

For any future readers: thankfully the comments of a certain troll were removed, so mine no longer makes sense.

Articuno - Tuesday, March 6, 2012

Just like how overclocking a Pentium 4 resulted in it beating an Athlon 64 and having lower power consumption to boot... oh wait.

SteelCity1981 - Tuesday, March 6, 2012

That's a stupid comment only a stupid fanboy would make. AMD is way ahead of Intel in the graphics department and is very competitive with Intel in the mobile segment now.

tipoo - Tuesday, March 6, 2012

Your comments would do nothing to inform regular readers of sites like this; we already know more. So please, can it.

tipoo - Tuesday, March 6, 2012

Not what I asked, little troll. Give a source that says Apple will get a special HD4000 like no other.

Operandi - Tuesday, March 6, 2012

What are you talking about? As long as AMD has a better iGPU, there is plenty of reason for them to be a viable choice today. And if their iGPU gaming performance holds up against Intel's, there is more than just hope of them getting back in the game in terms of high-performance computing tomorrow.

tipoo - Tuesday, March 6, 2012

I'm pretty sure even 16x AF has a sub-2% performance hit on even the lowest end of today's GPUs. Is it different with the HD Graphics? If not, why not just enable it like most people would? Even on something like a 4670 I max out AF without thinking twice about it, though AA still hurts performance.

IntelUser2000 - Tuesday, March 6, 2012

AF has a greater performance impact on low-end GPUs. Typically it's about 10-15%. It's less on the HD Graphics 3000 only because their 16x AF really only works at much lower levels. It's akin to having an option for 1280x1024 resolution but getting the performance of 1024x768, because it looks like the latter.

If Ivy Bridge improved AF quality to be on par with AMD/Nvidia, the performance loss should be similar as well.
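As a rough illustration of why a lower effective AF level costs less, here is a minimal sketch of the probe-count approximation described in the OpenGL EXT_texture_filter_anisotropic extension spec. The number of filtering probes grows with the footprint's anisotropy ratio up to the selected maximum (2x, 4x, 16x, and so on), and a lower cap trades fewer probes for a blurrier mip level. Real hardware, including Intel's, may use different and often angle-dependent approximations. Modeling the HD Graphics 3000 behavior described above as a lower effective cap is purely an assumption for illustration, and the function name `aniso_samples` is hypothetical.

```python
import math


def aniso_samples(dudx, dvdx, dudy, dvdy, max_aniso):
    """Return (probes, lod) for one pixel's texture footprint.

    Follows the approximation in the EXT_texture_filter_anisotropic spec.
    Derivatives are in texel units; assumes a non-degenerate footprint
    (both screen-axis lengths greater than zero).
    """
    px = math.hypot(dudx, dvdx)  # footprint length along screen x
    py = math.hypot(dudy, dvdy)  # footprint length along screen y
    pmax, pmin = max(px, py), min(px, py)
    if pmin <= 0:
        raise ValueError("degenerate texture footprint")
    # Probe count: anisotropy ratio rounded up, clamped to the AF setting.
    probes = min(math.ceil(pmax / pmin), max_aniso)
    # Mip LOD is picked from the major axis split across the probes, so a
    # lower cap means fewer probes but a coarser (blurrier) mip level.
    lod = math.log2(pmax / probes)
    return probes, lod


# A surface seen at a grazing angle: 8:1 anisotropic footprint.
for cap in (1, 4, 16):
    print(cap, aniso_samples(1, 0, 0, 8, cap))
# cap 1  -> (1, 3.0)  one probe, three mip levels blurrier
# cap 4  -> (4, 1.0)
# cap 16 -> (8, 0.0)  only 8 probes needed, full detail
```

Note that a "16x" setting only spends 16 probes where the footprint is actually that stretched. An implementation that effectively computes a lower ratio does less texture work and produces a blurrier result.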

tipoo - Wednesday, March 7, 2012

Hmm, I did not know that. What component of the GPU is involved in that performance hit (shaders, ROPs, etc.)? My card is fairly low end, and 16x AF performs almost no differently from 0x.

Exophase - Wednesday, March 7, 2012

AF requires more samples in cases of high anisotropy, so I guess the TMU load increases, which may also increase bandwidth requirements since it could force a higher LOD in these cases. You'll only see a performance difference if the AF causes the scene to be TMU/bandwidth limited instead of, say, ALU limited. I'd expect this to happen more as you move up in performance, not down, since the ALU:TEX ratio tends to go up at the higher end... but APUs can be more bandwidth sensitive, and I think Intel's IGPs never had a lot of TMUs.

Of course it's also very scene dependent. And maybe an inferior AF implementation could end up sampling more than a better one.
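The point that AF only shows up when it makes the scene TMU/bandwidth limited instead of ALU limited can be sketched with a toy bottleneck model: frame time is set by whichever unit is busiest, and extra AF probes only inflate the texture side. The function names and all input numbers below are hypothetical, not measurements of any real GPU. Real pipelines overlap work imperfectly, so actual costs fall somewhere between this `max()` bound and a simple sum.

```python
def frame_time_ms(alu_ms: float, tex_ms_base: float, avg_probes: float) -> float:
    """Toy model: the busiest unit sets the frame time.

    avg_probes is a hypothetical average number of AF probes per textured
    pixel; texture time is assumed to scale linearly with it.
    """
    tex_ms = tex_ms_base * avg_probes
    return max(alu_ms, tex_ms)


def af_cost_percent(alu_ms: float, tex_ms_base: float, avg_probes: float) -> float:
    """Percent slowdown from enabling AF versus a single probe per pixel."""
    base = frame_time_ms(alu_ms, tex_ms_base, 1.0)
    with_af = frame_time_ms(alu_ms, tex_ms_base, avg_probes)
    return 100.0 * (with_af - base) / base


# Shader-heavy (ALU-limited) case: the extra texture work hides under ALU time.
print(af_cost_percent(10.0, 2.0, 3.0))  # 0.0
# Texture-limited case: the same AF setting now costs frame time.
print(af_cost_percent(10.0, 5.0, 3.0))  # 50.0
```

The same AF setting is "free" in one case and expensive in the other, which is consistent with the observation that the hit depends heavily on the scene and on the GPU's ALU:TEX balance.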