Intel SSD 520 Review: Cherryville Brings Reliability to SandForce

by Anand Lal Shimpi on February 6, 2012 11:00 AM EST

AnandTech Storage Bench 2011

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
| --- | --- |
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
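To put the operation counts and the IO size breakdown in perspective, here is a minimal Python sketch of the arithmetic. It only uses figures quoted above; the names (`READ_OPS`, `IO_SIZE_SHARE`, `summarize`) are made up for illustration and are not part of any benchmark tooling.

```python
# Figures quoted in the text; nothing here is measured by this script.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447

# Percentage of total operations per IO size, as listed in the breakdown.
IO_SIZE_SHARE = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}


def summarize():
    """Derive a few totals and shares from the quoted workload figures."""
    total_ops = READ_OPS + WRITE_OPS
    listed_pct = sum(IO_SIZE_SHARE.values())
    return {
        "total_ops": total_ops,
        # Share of all operations that are writes, in percent.
        "write_share_pct": round(100 * WRITE_OPS / total_ops, 1),
        # Share covered by the four listed IO sizes, and what is left over.
        "listed_sizes_pct": listed_pct,
        "other_sizes_pct": 100 - listed_pct,
    }


if __name__ == "__main__":
    for key, value in summarize().items():
        print(f"{key}: {value}")
```

The four listed sizes account for just over half of all operations, so the remainder is spread across IO sizes the breakdown does not list.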

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
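As a rough illustration of how the two metrics relate, the sketch below computes disk busy time as the total time during which at least one IO is outstanding, then divides bytes transferred by that time. This is an assumption about the calculation, not a description of the actual tooling: the record layout `(start, end, bytes)`, the function names, and the choice of 1 MB = 10^6 bytes are all hypothetical.

```python
from typing import List, Tuple

# One IO record: (start_seconds, end_seconds, bytes_transferred).
# This layout is hypothetical, chosen only for the example.
IORecord = Tuple[float, float, int]


def busy_time(ios: List[IORecord]) -> float:
    """Seconds during which at least one IO was in flight (idle gaps excluded)."""
    busy = 0.0
    cur_start = cur_end = None
    for start, end in sorted((s, e) for s, e, _ in ios):
        if cur_end is None or start > cur_end:
            # A gap: close the previous busy interval and open a new one.
            if cur_end is not None:
                busy += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlapping IO: extend the current busy interval.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        busy += cur_end - cur_start
    return busy


def average_mb_per_s(ios: List[IORecord]) -> float:
    """Total bytes moved divided by busy time, in MB/s (1 MB = 10**6 bytes, assumed)."""
    seconds = busy_time(ios)
    if seconds == 0:
        return 0.0
    return sum(size for _, _, size in ios) / 1_000_000 / seconds


if __name__ == "__main__":
    trace = [
        (0.0, 1.0, 100_000_000),  # overlaps with the next IO
        (0.5, 2.0, 200_000_000),
        (5.0, 6.0, 100_000_000),  # follows a 3 second idle gap
    ]
    print(busy_time(trace), average_mb_per_s(trace))
```

In the sample trace the drive is busy for 3 seconds out of a 6 second window, so the idle gap does not drag the average data rate down. Under this definition a faster drive shows up both as a higher MB/s figure and as a shorter busy time.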

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011 - Heavy Workload

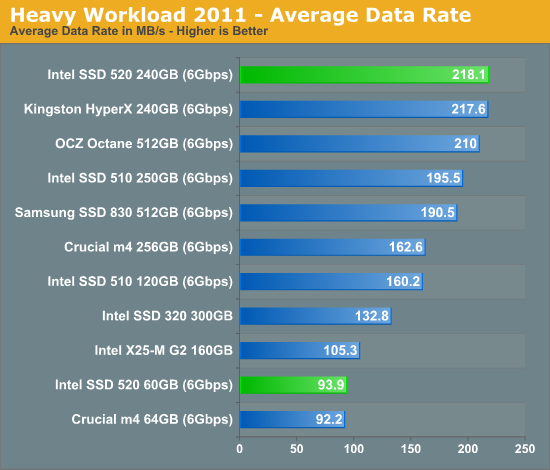

We'll start out by looking at average data rate throughout our new heavy workload test:

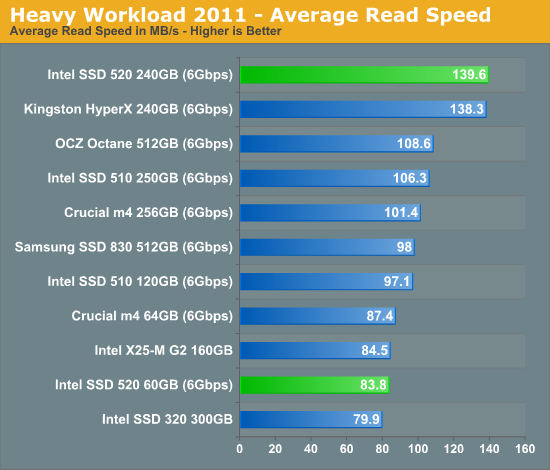

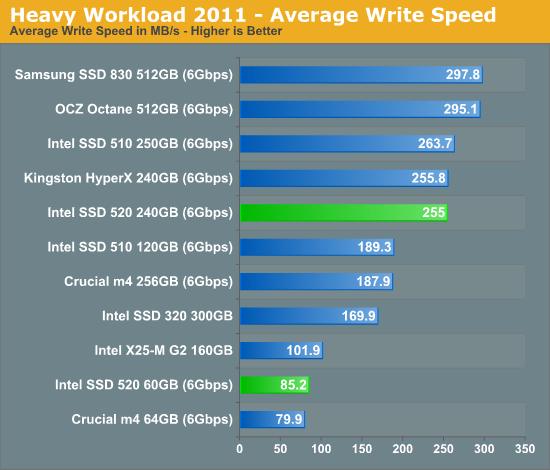

SandForce has always done well in our Heavy Workload test, and the 520 is no different. For heavy multitasking workloads, the 520 is the fastest SSD money can buy. Note that its only hindrance is incompressible write speed, which we do get a hint of in our breakdown of read/write performance below.

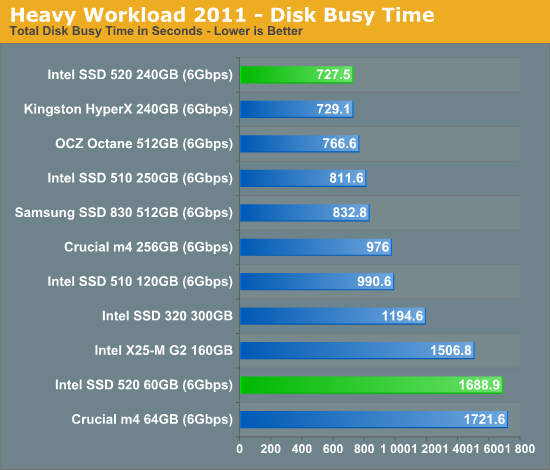

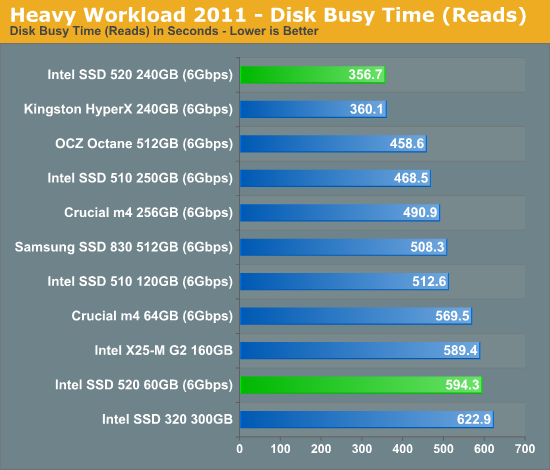

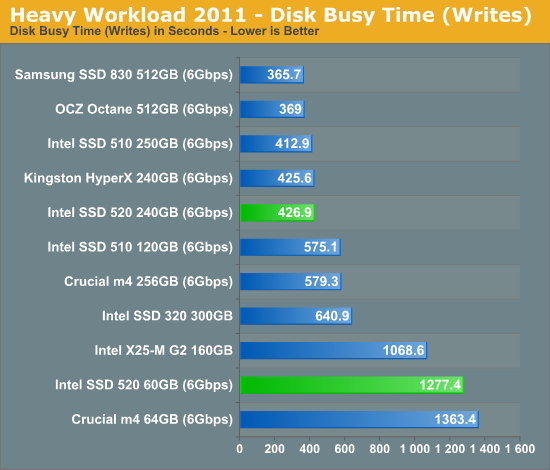

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

138 Comments


hugh2323 - Monday, February 6, 2012

Some posters are missing the reason why this drive has a high premium. It is intended for the market that values reliability over price. This market considers the price of lost data to be higher than the premium of the drive. If you are a business, and a computer goes down with its data, the lost hours of productivity and cost of data loss can easily add up. Paying 20% extra for the drive initially (or whatever it is) is chump change compared to that.

And then there is the consumer market that doesn't have time to f*** around with blue screen of death and whose purse strings perhaps aren't so tight.

So if you don't want to pay the premium, then you're not in the target market. Simple as that.

neotiger - Monday, February 6, 2012

... except this SSD doesn't give you reliability.

It doesn't have any capacitors, which means after a computer crash or a power outage you will lose your data.

Not exactly reliable.

eman17j - Wednesday, February 8, 2012

SSDs are nonvolatile memory, how are you going to lose all your data?

eman17j - Wednesday, February 8, 2012

Oops, I spoke too soon. I know what you mean: it wouldn't have the power to finish any write operation if there was a crash or power outage, thereby losing your data.

bji - Wednesday, February 8, 2012

Irrelevant. Any application can make a sync call to ensure the data is written to the flash as necessary. Any application which does not make this sync call is risking the data at multiple levels of write cache before it actually makes it to the flash, so a capacitor would reduce the window of opportunity for data loss only slightly. And if you care that much about data loss, you are using sync anyway at that point of the application.

eman17j - Wednesday, February 8, 2012

buy a UPS

Jediron - Tuesday, February 7, 2012

Since when are MLC based SSDs more reliable than SLC based SSDs?

Sorry, if they intended to put these SSDs on the market for endurance and reliability they made a mistake.

FunBunny2 - Tuesday, February 7, 2012

bingo. but they last used SLC in the X25-E, and even Texas Memory is switching to MLC. The vendors are convinced they can get through warranty period with MLC. Unlike a HDD, which can last pretty much forever if it makes it through infant mortality, an SSD will die when its time is up (think "Blade Runner").

bji - Wednesday, February 8, 2012

Theoretically, the failure mode for completely worn out flash should be that the drive can no longer be written to, but every existing block can still be read from. Thus you would not lose any data, you'd simply have to buy a new drive and clone the old one to it.

In practice, it seems like either most SSD failures are not in the flash (maybe they are the result of firmware bugs that wedge the on-disk structures into an unrecoverable state?), or that if they are in the flash most firmware does not handle such failures gracefully and instead of putting the device into a read-only recoverable mode just gives up and dies. This is after reading many, many reports of SSD failures where the device became completely inoperable instead of going into read-only mode.

Also I've had plenty of platter HDD failures over the years, I always found them to be the least reliable component of any computer (ok, I guess fans are less reliable, but fan failure usually isn't catastrophic and is easy to fix; also power supplies die pretty frequently and finally for some reason CD/DVD drives also seem to fail disturbingly often).

beginner99 - Tuesday, February 7, 2012

I disagree. It is too late to the market. The Crucial m4 has proven its reliability in the real world and the Intel drive has no special reliability features. And IMHO real world usage beats any validation tests Intel can do.

If you value your data you would have to back it up anyway, regardless of which drive you use.

m4: never heard of BSOD issues.

While I agree that SandForce drives have issues, there are others that do not and are also cheaper. The m4 is by far the best value. It's similar to CPUs. It is basically impossible to recommend any desktop AMD CPU in any price or performance category. Same for SSDs, but here it is not possible to recommend Intel anymore.

Neither for price, performance nor reliability.