NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST - Posted in GPUs

Compute

Update 3/30/2010: After hearing word after the launch that NVIDIA had artificially capped the GTX 400 series' double precision (FP64) performance, we asked NVIDIA for confirmation. NVIDIA has confirmed it - the GTX 400 series' FP64 performance is capped at 1/8th (12.5%) of its FP32 performance, as opposed to the 1/2 (50%) of FP32 performance the hardware can natively deliver. This is a market segmentation choice - Tesla of course will not be handicapped in this manner. All of our compute benchmarks are FP32 based, so they remain unaffected by this cap.
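To put the cap in concrete terms, here is a back-of-the-envelope sketch of peak throughput. It assumes NVIDIA's published GTX 480 specifications of 480 CUDA cores at a 1401MHz shader clock (figures not taken from our testing), and counts one fused multiply-add per core per clock as two floating point operations.

```python
# Back-of-the-envelope peak throughput for the GTX 480.
# Assumption: 480 CUDA cores at a 1401MHz shader clock (published spec,
# not measured here), each retiring one FMA (2 FLOPs) per clock.
CORES = 480
SHADER_CLOCK_MHZ = 1401
FLOPS_PER_CORE_PER_CLOCK = 2  # a fused multiply-add counts as 2 FLOPs


def peak_mflops(cores: int, clock_mhz: int, flops_per_clock: int) -> int:
    """Peak throughput in MFLOPS (integer math avoids rounding noise)."""
    return cores * clock_mhz * flops_per_clock


fp32 = peak_mflops(CORES, SHADER_CLOCK_MHZ, FLOPS_PER_CORE_PER_CLOCK)
fp64_native = fp32 // 2  # what the hardware can natively do: 1/2 of FP32
fp64_capped = fp32 // 8  # GeForce cap confirmed by NVIDIA: 1/8 of FP32

for label, mflops in [("FP32", fp32),
                      ("FP64 native", fp64_native),
                      ("FP64 capped", fp64_capped)]:
    print(f"{label:12s} {mflops / 1000:8.2f} GFLOPS")
```

Under these assumptions the cap takes FP64 from roughly 672 GFLOPS down to roughly 168 GFLOPS, while FP32 stays at roughly 1345 GFLOPS.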

Continuing our look at compute performance, we’re moving on to more generalized compute tasks. GPGPU has long been heralded as the next big thing for GPUs, as in the right hands and at the right task they can be much faster than a CPU. Fermi in turn is a serious bet on GPGPU/HPC use of the GPU, as a number of architectural tweaks went into Fermi to get the most out of it as a compute platform. The GTX 480 may be targeted as a gaming product, but it has the capability to be a GPGPU powerhouse when given the right task.

The downside to GPGPU use, however, is that a great deal of GPGPU applications are specialized number-crunching programs for business use. The consumer side of GPGPU continues to be underrepresented, both due to a lack of obvious, high-profile tasks that would be well-suited for GPGPU use, and due to marketplace fragmentation caused by competing APIs. OpenCL and DirectCompute will slowly solve the API issue, but there is still the matter of getting consumer-oriented GPGPU applications out in the first place.

With the introduction of OpenCL last year, we were hoping that by the time Fermi launched we would see some suitable consumer applications that would help us evaluate the compute capabilities of both AMD and NVIDIA’s cards. That has yet to come to pass, so at this point we’re basically left with synthetic benchmarks for doing cross-GPU comparisons. With that in mind we’ve run a couple of different things, but the results should be taken with a grain of salt, as they don’t represent any single truth about compute performance on NVIDIA or AMD’s cards.

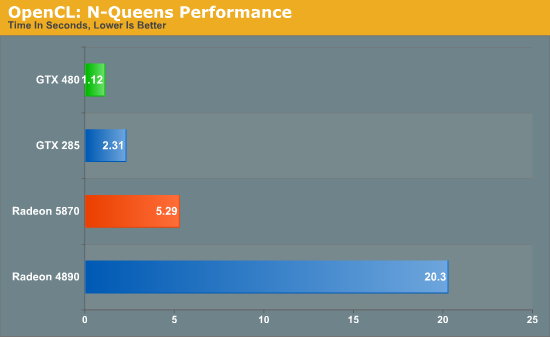

Of our two OpenCL benchmarks, we’ll start with an OpenCL implementation of an N-Queens solver from PCChen of Beyond3D. This benchmark uses OpenCL to find the number of solutions to the N-Queens problem for a board of a given size, with the time measured in seconds. For this test we use a 17x17 board, and measure the time it takes to generate all of the solutions.
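PCChen's OpenCL source isn't reproduced here, so the following is only a sketch of the general technique under our own assumptions: a common way to count N-Queens solutions is bitmask backtracking, and a common way to parallelize it is to split the search by where the queen sits in the first row, giving independent subproblems whose counts are summed. A real GPU version would likely split deeper (first two or three rows) to create thousands of work-items. The function names below are hypothetical, and this pure-Python version is only practical for small boards, not 17x17.

```python
def count_from(n: int, cols: int, diag_l: int, diag_r: int) -> int:
    """Count completions of a partial board using bitmasks.

    cols, diag_l and diag_r mark the columns and diagonals that are
    already attacked in the current row.
    """
    full = (1 << n) - 1
    if cols == full:  # a queen in every column: one complete solution
        return 1
    total = 0
    free = full & ~(cols | diag_l | diag_r)
    while free:
        bit = free & -free  # lowest free square in this row
        free ^= bit
        total += count_from(n, cols | bit,
                            ((diag_l | bit) << 1) & full,
                            (diag_r | bit) >> 1)
    return total


def count_solutions(n: int) -> int:
    """Split the search by first-row column (one independent subproblem
    each, as a GPU version might hand to separate work-items), then sum."""
    full = (1 << n) - 1
    partial_counts = [count_from(n, 1 << c, ((1 << c) << 1) & full, (1 << c) >> 1)
                      for c in range(n)]
    return sum(partial_counts)


print(count_solutions(8))  # 92 solutions on the classic 8x8 board
```

Because each first-row subproblem never reads another's state, the only coordination needed is the final sum, which is what makes this kind of search attractive for GPUs.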

This benchmark offers a distinct advantage to NVIDIA GPUs, with the GTX cards not only beating their AMD counterparts, but the GTX 285 also beating the Radeon 5870. Due to the significant underlying differences between AMD and NVIDIA’s shaders, even with a common API like OpenCL the nature of the algorithm still plays a big part in the performance of the resulting code, so that may be what we’re seeing here. In any case, the GTX 480 is the fastest of the GPUs by far, taking less than half as long as the GTX 285 and coming in nearly 5 times faster than the Radeon 5870.

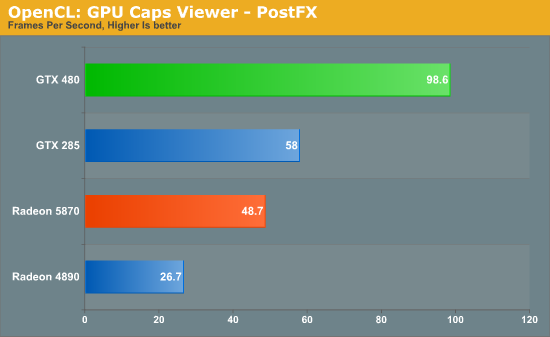

Our second OpenCL benchmark is a post-processing benchmark from the GPU Caps Viewer utility. Here a torus is drawn using OpenGL, and then an OpenCL shader is used to apply post-processing to the image. We measure the framerate of the combined process.
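GPU Caps Viewer's exact filter isn't described here, so purely as an illustration: a post-processing pass applies the same small function independently to every pixel of the rendered frame, which is why it maps naturally onto thousands of GPU threads. The sketch below assumes a 3x3 box blur with clamp-to-edge sampling on a grayscale frame; the filter choice and the function name are our own.

```python
def box_blur(frame):
    """Apply a 3x3 box blur to a grayscale frame (list of rows),
    clamping sample coordinates at the edges.

    Each output pixel depends only on the input frame, so in an OpenCL
    kernel every (y, x) could be computed by a separate work-item.
    """
    h, w = len(frame), len(frame[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    sy = min(max(y + dy, 0), h - 1)  # clamp row index
                    sx = min(max(x + dx, 0), w - 1)  # clamp column index
                    acc += frame[sy][sx]
            out[y][x] = acc / 9.0
    return out


frame = [[0.0, 0.0, 0.0],
         [0.0, 9.0, 0.0],
         [0.0, 0.0, 0.0]]
print(box_blur(frame))  # the bright centre pixel is spread evenly: all 1.0
```

On a GPU the two outer loops disappear: the runtime launches one work-item per pixel, and the framerate reflects how quickly the card can chew through all of them every frame.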

Once again the NVIDIA cards do exceptionally well here. The GTX 480 is the clear winner, while even the GTX 285 beats out both Radeon cards. This could once again be the nature of the algorithm, or it could be that the GeForce cards really are that much better at OpenCL processing. These results are going to be worth keeping in mind as real OpenCL applications eventually start arriving.

Moving on from cross-GPU benchmarks, we turn our attention to CUDA benchmarks. Better established than OpenCL, CUDA has several real GPGPU applications, with the limitation being that we can’t bring the Radeons into the fold here. So we can see how much faster the GTX 480 is than the GTX 285, but not how it compares to AMD’s cards.

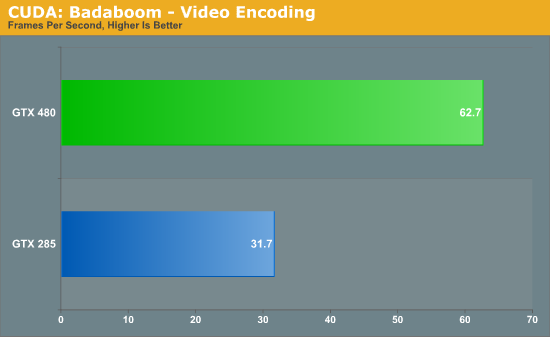

We’ll start with Badaboom, Elemental Technologies’ GPU-accelerated video encoder for CUDA. Here we are encoding a 2-minute 1080i clip and measuring the framerate of the encoding process.

The performance difference with Badaboom is rather straightforward. We have twice the shaders running at similar clockspeeds, and as a result we get twice the performance. The GTX 480 encodes our test clip in a little over half the time it took the GTX 280.
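The scaling argument above can be sketched as simple arithmetic: if an encoder is limited purely by shader throughput (an assumption), its speed scales with shader count times shader clock, and encode time falls in proportion. The clip length, framerate, and equal clocks below are hypothetical round numbers, not our measurements.

```python
def expected_speedup(cores_new: int, clock_new: float,
                     cores_old: int, clock_old: float) -> float:
    """Speedup if the workload is purely shader-throughput bound (assumed)."""
    return (cores_new * clock_new) / (cores_old * clock_old)


def encode_seconds(frames: int, fps: float) -> float:
    """Time to encode a clip from its frame count and encoding framerate."""
    return frames / fps


# Hypothetical figures: 480 vs 240 shaders at identical clocks,
# and a 3,600-frame clip encoded at 30 fps on the older card.
speedup = expected_speedup(480, 1.0, 240, 1.0)
old_time = encode_seconds(3600, 30.0)
new_time = encode_seconds(3600, 30.0 * speedup)
print(speedup, old_time, new_time)  # 2.0 120.0 60.0
```

Real cards don't run at identical clocks, and an encoder also spends some time on work that isn't shader-bound, so a measured result of a little over half the time sits close to, but not exactly at, this ideal.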

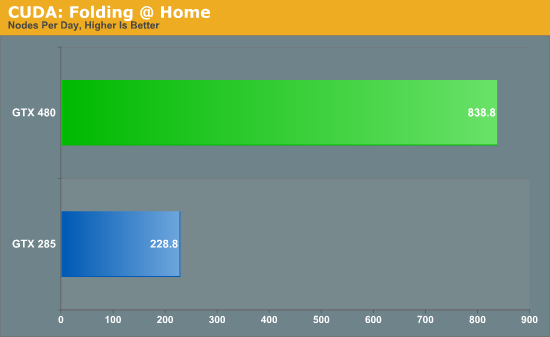

Up next is a special benchmark version of Folding@Home that has added Fermi compatibility. Folding@Home is a Stanford research project that simulates protein folding in order to better understand how misfolded proteins lead to diseases. It has been a poster child of GPGPU use, having been made available on GPUs as early as 2006 as a Close-To-Metal application for AMD’s X1K series of GPUs. Here we’re measuring the time it takes to fully process a sample work unit so that we can project how many nodes (units of work) a GPU could complete per day when running Folding@Home.
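The per-day projection is a simple reciprocal of the measured time per work unit. A minimal sketch, with hypothetical timings rather than our results (the function names are our own):

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def nodes_per_day(seconds_per_node: float) -> float:
    """Project daily throughput from the time taken to finish one work unit."""
    return SECONDS_PER_DAY / seconds_per_node


def relative_throughput(seconds_fast: float, seconds_slow: float) -> float:
    """How many times more work per day the faster GPU completes.

    Since nodes per day is 86,400 / time, the ratio of daily output is
    simply the inverse ratio of the per-node times.
    """
    return seconds_slow / seconds_fast


# Hypothetical timings, not measured results:
print(nodes_per_day(3600))              # 24.0 nodes per day at one hour per node
print(relative_throughput(1000, 3500))  # 3.5
```

So a GPU that gets 3.5x as much work done per day is one that finishes each work unit in about 1/3.5 of the slower card's time.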

Folding@Home is the first benchmark we’ve seen that really showcases the compute potential of Fermi. Unlike everything else, where the GTX 480 runs twice as fast as the GTX 285, here the GTX 480 is several times faster than the GTX 285: a GTX 480 would get roughly 3.5x as much work done per day as a GTX 285. And while this is admittedly more of a business/science application than it is a home user application (even if it’s home users running it), it gives us a glimpse of what Fermi is capable of when it comes to compute.

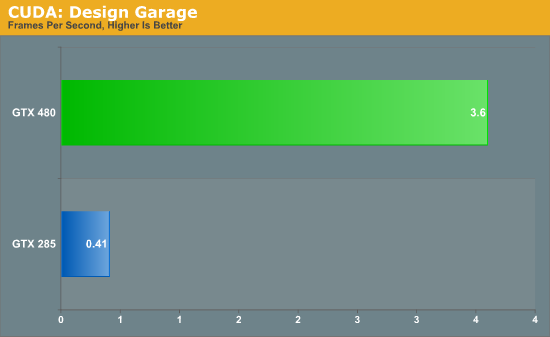

Last, but not least, for our look at compute we have another tech demo from NVIDIA. This one is called Design Garage, and it’s a ray tracing tech demo that we first saw at CES. Ray tracing has come into popularity as of late thanks in large part to Intel, which has been pushing the concept both as part of its CPU showcases and as part of its Larrabee project.

In turn, Design Garage is a GPU-powered ray tracing demo, which uses ray tracing to draw and illuminate a variety of cars. If you’ve never seen ray tracing before, it looks quite good, but it’s also quite resource intensive. Even with a GTX 480, in the high quality rendering mode we only get a couple of frames per second.
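Design Garage's renderer isn't examined in this review, so the following is a generic illustration of why ray tracing is expensive, not NVIDIA's code: every pixel fires at least one ray that must be tested against the scene geometry, and each reflection or shadow adds more rays. The sketch shows the basic ray-sphere intersection test (solving a quadratic for the hit distance t) and counts primary-ray hits for a tiny hypothetical scene with a camera at the origin looking down +z.

```python
import math


def hit_sphere(origin, direction, center, radius):
    """Return the nearest positive distance t along the ray, or None.

    Solves |origin + t*direction - center|^2 = radius^2 for t.
    """
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(o * d for o, d in zip(oc, direction))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None  # the ray misses the sphere entirely
    sqrt_disc = math.sqrt(disc)
    for t in ((-b - sqrt_disc) / (2 * a), (-b + sqrt_disc) / (2 * a)):
        if t > 1e-6:
            return t  # nearest intersection in front of the ray origin
    return None


def count_primary_hits(width, height, center, radius):
    """Cast one primary ray per pixel and count how many hit the sphere."""
    hits = 0
    for j in range(height):
        for i in range(width):
            # Map the pixel centre onto an image plane at z = 1.
            x = (i + 0.5) / width * 2 - 1
            y = (j + 0.5) / height * 2 - 1
            if hit_sphere((0.0, 0.0, 0.0), (x, y, 1.0), center, radius) is not None:
                hits += 1
    return hits


print(hit_sphere((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 0.0, 5.0), 1.0))  # 4.0
```

Even this toy does width × height intersection tests per frame for a single object; a full-resolution frame with many objects, reflections, and shadow rays multiplies that many times over, which is why a couple of frames per second is still an achievement.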

On a competitive note, it’s interesting to see NVIDIA try to go after ray tracing since that has been Intel’s thing. Certainly they don’t want to let Intel run around unchecked in case ray tracing and Larrabee do take off, but at the same time it’s rasterization and not ray tracing that is Intel’s weak spot. At this point in time it wouldn’t necessarily be a good thing for NVIDIA if ray tracing suddenly took off.

Much like the Folding@Home demo, this is one of the best compute demos for Fermi. Compared to our GTX 285, the GTX 480 is eight times faster at the task. A lot of this comes down to Fermi’s redesigned cache, as ray tracing has a high rate of cache hits, which helps avoid hitting up the GPU’s main memory any more than necessary. Programs that benefit from Fermi’s optimizations to cache, concurrency, and fast task switching apparently stand to gain the most in the move from GT200 to Fermi.
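The cache argument can be made concrete with the textbook average-memory-access-time formula, AMAT = hit time + miss rate × miss penalty. The latencies below are hypothetical round numbers chosen for illustration, not measured Fermi or GT200 figures.

```python
def amat(hit_time: float, miss_rate: float, miss_penalty: float) -> float:
    """Average memory access time: every access pays hit_time, and the
    fraction that misses additionally pays the trip to main memory."""
    return hit_time + miss_rate * miss_penalty


# Hypothetical latencies in cycles: 20-cycle cache hit, 400-cycle DRAM trip.
for miss_rate in (0.5, 0.1, 0.02):
    print(f"miss rate {miss_rate:.0%}: {amat(20, miss_rate, 400):.0f} cycles")
```

With these made-up numbers, cutting the miss rate from 50% to 2% shrinks the average access from 220 to 28 cycles, which is the kind of gain a workload with a high cache hit rate can collect once a cache is there to serve it.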

196 Comments

kc77 - Saturday, March 27, 2010

Yeah, I mentioned it too. ATI got reamed for almost an entire page for something that didn't really happen, while this review mentions it in passing almost like it's a feature.

gigahertz20 - Friday, March 26, 2010

"The price gap between it and the Radeon 5870 is well above the current performance gap"

Bingo. Nvidia may have the fastest single GPU out now, but not by much, and there are tons of trade-offs for just a little bit more FPS over the Radeon 5870. High heat/noise/power for what? Over 90% of gamers play at 1920 X 1200 resolution or less, so even just a Radeon 5850 or Crossfired 5770s are the best bang for the buck.

If all you're going to play at is 1920 X 1200 or less, I see no reason why educated people would want to buy a GTX 470/480 after reading all the reviews for Fermi today. Way too expensive and way too hot for not much of a performance gain; maybe it's time to sell my Nvidia stock before it goes down any further over the next year or so.

ImSpartacus - Friday, March 26, 2010

"with a 5th one saying within the card" (Page 2, Paragraph 2)

Aside from minor typos, this is a great article.

cordis - Friday, March 26, 2010

Hey, thanks for the folding data, very much appreciated. Although, if there's any way you can translate it into something that folders are a little more used to, like PPD (points per day), that would be even better. I'm not sure what the benchmarking program you used is like, but if it folds things and produces log files, it should be possible to get PPD. From the ratios, it looks like above 30k PPD, but it would be great to get hard numbers on it. Any chance of that getting added?

Ryan Smith - Friday, March 26, 2010

I can post the log files if you want, but there's no PPD data in them. It only tells me nodes.

cordis - Tuesday, March 30, 2010

Eh, that's OK. If you want to, that's fine, but don't worry about it too much; it sounds like it was an artificial NVIDIA thing. We'll have to wait for people to really start folding on them to see how they work out.

ciparis - Friday, March 26, 2010

I had a weird malware warning pop up when I hit page 2: "The website at anandtech.com contains elements from the site googleanalyticz.com"

I'm using Safari (I also saw someone with Chrome report it). I wonder what that was all about...

Despoiler - Friday, March 26, 2010

I'd like to see some overclocking benchmarks given the small die vs. big die design decisions each company made.

All in all, ATI has this round in the business sense. The performance crown is not where the money is. ATI out-executed Nvidia in a huge way. I cannot wait to see the financial results for each company.

LuxZg - Saturday, March 27, 2010

Agreed. No overclocking at all; it feels like a big part of the review is missing. With the GTX 480 having such high power consumption and temperatures, I doubt it would go much further, at least on air. On the other hand, there are already many overclocked HD 58xx cards out there, and even those can easily be overclocked further. With that much of a power advantage, I think AMD could easily catch up with the GTX 480 and still be a bit cooler and less power hungry, and less noisy as a consequence, of course.

randfee - Friday, March 26, 2010

Very thorough test, as expected from you guys, thanks... BUT:

Why on earth do you keep using an arguably outdated Core i7 920 for benchmarking the newest GPUs? Even at 3.33GHz it's no match for an overclocked 860, a common high-end gaming-rig CPU these days. I got mine at 4.2GHz air cooled?!

sorry... don't get it. On any GPU review I'd try to eliminate any possible bottleneck so the GPU gets limited more, why use an old cpu like this?!

anyone?