The RV870 Story: AMD Showing up to the Fight

by Anand Lal Shimpi on February 14, 2010 12:00 AM EST - Posted in GPUs

The Cost of Jumping to 40nm

This part of the story could almost stand on its own, but it so directly impacts much of what happened with Cypress and the entire Evergreen stack that it’s worth talking about here.

By now you’ve most likely heard about issues with TSMC’s 40nm process. While the word is that the issues are finally over, poor yields and a slower-than-expected ramp led to Cypress shortages last year and contributed to NVIDIA’s Fermi/GF100 delay. For the next couple of pages I want to talk about the move to 40nm and why it’s been so difficult.

The biggest issue with being a fabless semiconductor company is that you have one more vendor to deal with when you’re trying to get out a new product. On top of dealing with memory companies, component manufacturers and folks who have IP you need, you also have to deal with a third party that’s going to actually make your chip. To make matters worse, every year or so, your foundry partner comes to you with a brand new process to use.

The pitch always goes the same way. This new process is usually a lot smaller, can run faster and uses less power. As with any company whose job it is to sell something, your foundry partner wants you to buy its latest and greatest as soon as possible. And as is usually the case in the PC industry, they want you to buy it before it's actually ready.

But have no fear. What normally happens is your foundry company will come to you with a list of design rules and hints. If you follow all of the guidelines, the foundry will guarantee that they can produce your chip and that it will work. In other words, do what we tell you to do, and your chip will yield.

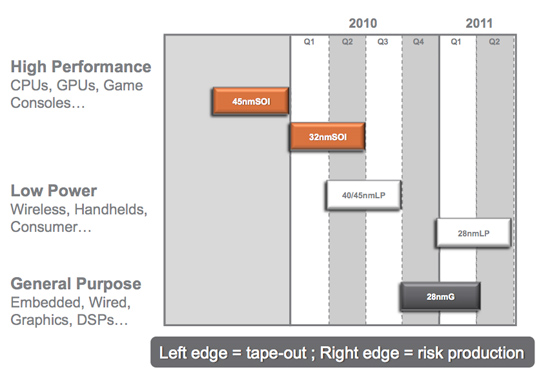

Global Foundries' 2010 - 2011 Manufacturing Roadmap

The problem is that if you follow every last one of these design rules and hints, your chip won’t be any faster than it was on the older manufacturing process. Your yield will be about the same, but your cost will be higher since you’ll bloat your design taking these “hints” into account.

Generally, the size of the wafer doesn’t change between process nodes. We were at 200mm wafers for a while, and now modern fabs use 300mm wafers. The transistor size does shrink, however, so in theory you could fit more die on a wafer with each process shrink.

The problem is that with any new process, the cost per wafer goes up. It’s a new process, most likely more complex, and thus the wafer cost is higher. If the wafer costs are 50% higher, then you need to fit at least 50% more die on each wafer in order to break even with your costs on the old process. In reality you actually need to fit more than 50% more die per wafer on the new process because yields usually suck at the start. But if you follow the foundry’s guidelines to guarantee yield, you won’t even be close to breaking even.
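To put rough numbers on that break-even argument, here is a minimal sketch in Python. The function names and every figure in it (wafer prices, die counts, yields) are assumptions made up for illustration, not actual TSMC or AMD data. It only encodes the idea that the cost of a working die is the wafer cost spread over the die that actually yield.

```python
# Illustrative sketch only: all names and numbers are hypothetical,
# not real foundry pricing or yield data.

def cost_per_good_die(wafer_cost, dies_per_wafer, yield_fraction):
    """Cost of one working die: wafer cost spread over the die that yield."""
    return wafer_cost / (dies_per_wafer * yield_fraction)


def break_even_die_ratio(wafer_cost_ratio, old_yield, new_yield):
    """Factor by which die per wafer must grow on the new process so that
    cost per good die matches the old process.

    Setting old and new cost per good die equal gives:
        ratio = wafer_cost_ratio * old_yield / new_yield
    """
    return wafer_cost_ratio * old_yield / new_yield


if __name__ == "__main__":
    # Wafer is 50% more expensive and yields are unchanged:
    # you need 1.5x the die per wafer just to break even.
    print(break_even_die_ratio(1.5, old_yield=0.8, new_yield=0.8))

    # Same wafer price increase, but early yields on the new process are
    # worse (assumed 60% vs. 80%): the required ratio climbs well past 1.5x.
    print(break_even_die_ratio(1.5, old_yield=0.8, new_yield=0.6))
```

With these assumed yields, the second case works out to roughly twice as many die per wafer rather than 1.5x, which is the gap the paragraph above is describing.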

The end result is you get zero benefit from moving to the new process. That’s not an option for anyone looking to actually use Moore’s Law to their advantage. Definitely not for a GPU company.

The solution is to have some very smart people in your company who can take these design rules and hints the foundry provides, and figure out which ones can be ignored, and ways to work around the others. This is an area where ATI and NVIDIA differ greatly.

132 Comments


Dudler - Sunday, February 14, 2010 - link

Your reasoning is wrong. The 57xx is a performance segment down from the 48xx segment. By your reasoning the 5450 should be quicker than the last gen 4870X2. The 5870 should be compared to the 4870, the 5850 to 4850 and so on.

Regarding price, the article sure covers it: the 40nm process was more expensive than TSMC told AMD, and the yield problems factored in too. Can't blame AMD for that, can we?

And finally, don't count out that Fermi is absent from the party. AMD can charge higher prices when there is no competition. At the moment, the 5-series has more or less a monopoly in the market. Considering this, I find their prices quite fair. Don't forget nVidia launched their GTX 280 at $637....

JimmiG - Friday, February 19, 2010 - link

"Your reasoning is wrong. The 57xx is a performance segment down from the 48xx segment."

Well, I compared the cards across generations based on price both at launch and how the price developed over time. The 5850 and 4850 are not in the same price segment of their respective generation. The 4850 launched at $199, the 5850 at $259 but quickly climbed to $299.

The 5770 launched at $159 and is now at $169, which is about the same as a 1GB 4870, which will perform better in DX9 and DX10. Model numbers are arbitrary and at the very best only useful for comparing cards within the same generation.

The 5k-series provides a lot of things, but certainly not value. This is the generation to skip unless you badly want to be the first to get DX11 or you're running a really old GPU.

just4U - Tuesday, February 16, 2010 - link

and they are still selling the 275, 285, etc. for a hefty chunk of change. I've often considered purchasing one, but the price has never been right, and mail-in rebates are a "PASS" or "NO THANKS" for many of us rather than an incentive.

I haven't seen the mail-ins for AMD products much. I hope they're reading this and shy away from that sort of sales format. Too many of us get the shaft and never receive our rebates anyway.

BelardA - Monday, February 15, 2010 - link

Also remind people... the current GTX 285 is about $400 and usually slower than the $300 5850. So ATI is NOT ripping people off with their new DX11 products. And looking at the die-size drawings, the RV870 is a bit smaller than the GT200... and we all know that FERMI is going to be another HUGE chip.

The only disappointment is that the 5750 & 5770 are not faster than the 4850/70 which used to cost about $100~120 when inventory was good. Considering that the 5700 series GPUs are smaller... Hell, even the 4770 is faster than the 5670 and costs less. Hopefully this is just the cost of production.

But I think once the 5670 is down to $80~90 and the 5700s are $100~125 - they will be more popular.

coldpower27 - Monday, February 15, 2010 - link

nVidia made a conscious decision not to fight the 5800 Series with the GTX 200 in terms of a price war. Hence why their prices remain poor value. They won't win using a large GT200b die vs the 5800's smaller die.

Another note to keep in mind is that the 5700 Series also has the detriment of being higher in price due to ATi moving the pricing scale back up a bit with the 5800 Series.

I guess a card that draws much less power than the 4800's, and is close to the performance of those cards, is a decent win, just not completely amazing.

MonkeyPaw - Sunday, February 14, 2010 - link

Actually, the 4770 series was meant to be a suitable performance replacement for the 3870. By that scheme, the 5770 should have been comparable to the 4870. I think we just hit some diminishing returns from the 128bit GDDR5 bus.

nafhan - Sunday, February 14, 2010 - link

It's the shaders, not the bus width. Bandwidth is bandwidth however you accomplish it. The rv8xx shaders are slightly less powerful on a one-to-one basis than the rv7xx shaders are.

LtGoonRush - Sunday, February 14, 2010 - link

The point is that an R5770 has 128-bit GDDR5, compared to an R4870's 256-bit GDDR5. Memory clock speeds can't scale to make up the difference from cutting the memory bus in half, so overall the card is slower, even though it has higher compute performance on the GPU. The GPU just isn't getting data from the memory fast enough.

Targon - Monday, February 15, 2010 - link

But you still have the issue of the 4870 being the high end from its generation, and trying to compare it to a mid-range card in the current generation. It generally takes more than one generation before the mid range of the new generation is able to beat the high end cards from a previous generation in terms of overall performance.

At this point, I think the 5830 is what competes with the 4870, or is it the 4890? In either case, it will take until the 6000 or 7000 series before we see a $100 card able to beat a 4890.

coldpower27 - Monday, February 15, 2010 - link

Yeah, we haven't seen ATi/nVidia make the current gen mainstream faster than the last gen high end. Bandwidth issues are finally becoming apparent.

6600 GT > 5950 Ultra.

7600 GT > 6800 Ultra.

But the 8600 GTS was only marginally faster than the 7600 GT and nowhere near the 7900 GTX. It took the 8800 GTS 640 to beat the 7900 GTX completely, and the 8800 GTS 320 beat it in the large majority of scenarios, due to frame buffer limitation.

The 4770 slots somewhere between the 4830/4850. However, that card was way later than most of the 4000 series, making it a decent bit faster than the 3870, which made more sense since the jump from 3870 to 4870 was huge; sometimes nearly 2.5x could be seen, given the right conditions.

The 4670 was the mainstream variant, and it had most of the performance of the 3800 Series; it doesn't beat it though, more like trades blows or is slower in general.