NVIDIA's Bumpy Ride: A Q4 2009 Update

by Anand Lal Shimpi on October 14, 2009 12:00 AM EST - Posted in GPUs

Blhaflhvfa.

There’s a lot to talk about with regard to NVIDIA and no time for a long intro, so let’s get right to it.

At the end of our Radeon HD 5850 Review we included this update:

“Update: We went window shopping again this afternoon to see if there were any GTX 285 price changes. There weren't. In fact GTX 285 supply seems pretty low; MWave, ZipZoomFly, and Newegg only have a few models in stock. We asked NVIDIA about this, but all they had to say was "demand remains strong". Given the timing, we're still suspicious that something may be afoot.”

Less than a week later, there were stories everywhere about NVIDIA’s GT200b shortages. Fudo said that NVIDIA was unwilling to drop prices low enough to make the cards competitive. Charlie said that NVIDIA was going to abandon the high end and upper mid range graphics card markets completely.

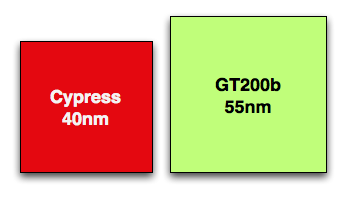

Let’s look at what we do know. GT200b has around 1.4 billion transistors and is made at TSMC on a 55nm process. Wikipedia lists the die at 470mm^2, which is roughly 80% the size of the original 65nm GT200 die. In either case it’s a lot bigger and still more expensive than Cypress’ 334mm^2 40nm die.

Cypress vs. GT200b die sizes to scale

NVIDIA could get into a price war with AMD, but given that both companies make their chips at the same place and NVIDIA’s costs are higher, it’s not a war that makes sense to fight.
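To put rough numbers on the die-size argument, here is a minimal sketch using the two areas quoted above. Everything else is an assumption on my part rather than something NVIDIA, AMD or TSMC confirmed: a 300mm wafer, and the usual first-order gross-die approximation, which ignores yield, scribe lines and the fact that 55nm and 40nm wafers are priced differently.

```python
import math

# Die areas in mm^2, as quoted in the article.
GT200B_AREA_MM2 = 470.0
CYPRESS_AREA_MM2 = 334.0

# Assumption (not from the article): both chips are built on 300 mm wafers.
WAFER_DIAMETER_MM = 300.0


def gross_dies_per_wafer(die_area_mm2, wafer_diameter_mm=WAFER_DIAMETER_MM):
    """First-order estimate of candidate dies per wafer.

    Wafer area divided by die area, minus a correction for partial dies
    lost at the wafer edge. Yield and scribe lines are ignored.
    """
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(wafer_area / die_area_mm2 - edge_loss)


# How much larger GT200b is than Cypress, by area.
area_ratio = GT200B_AREA_MM2 / CYPRESS_AREA_MM2

gt200b_dies = gross_dies_per_wafer(GT200B_AREA_MM2)
cypress_dies = gross_dies_per_wafer(CYPRESS_AREA_MM2)

print(f"GT200b is {area_ratio:.2f}x the area of Cypress")
print(f"Gross dies per wafer: GT200b ~{gt200b_dies}, Cypress ~{cypress_dies}")
```

Under those assumptions GT200b is about 1.4x the area of Cypress and gets noticeably fewer candidate dies out of each wafer. It's a back-of-the-envelope estimate, not a cost model, but it shows why a bigger die is a handicap in a price war.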

NVIDIA told me two things. One, that they have shared with some OEMs that they will no longer be making GT200b-based products. That’s the GTX 260 all the way up to the GTX 285. The EOL (end of life) notices went out recently, and they request that the OEMs submit their allocation requests ASAP; otherwise they risk not getting any cards.

The second was that despite the EOL notices, end users should be able to purchase GeForce GTX 260, 275 and 285 cards all the way up through February of next year.

If you look carefully, neither of these statements directly supports or refutes the two articles above. NVIDIA is very clever.

NVIDIA’s explanation to me was that current GPU supplies were decided on months ago, and in light of the economy, the number of chips NVIDIA ordered from TSMC was low. Demand ended up being stronger than expected and thus you can expect supplies to be tight in the remaining months of the year and into 2010.

Board vendors have been telling us that they can’t get allocations from NVIDIA. Some are even wondering whether it makes sense to build more GTX cards for the end of this year.

If you want my opinion, it goes something like this. While RV770 caught NVIDIA off guard, Cypress did not. AMD used the extra area (and then some) allowed by the move to 40nm to double RV770, not an unpredictable move. NVIDIA knew they were going to be late with Fermi, knew how competitive Cypress would be, and made a conscious decision to cut back supply months ago rather than enter a price war with AMD.

While NVIDIA won’t publicly admit defeat, AMD clearly won this round. Obviously it makes sense to ramp down the old product in expectation of Fermi, but I don’t see Fermi with any real availability this year. We may see a launch with performance data in 2009, but I’d expect availability in 2010.

While NVIDIA just launched its first 40nm DX10.1 parts, AMD just launched $120 DX11 cards

Regardless of how you want to phrase it, there will be lower than normal supplies of GT200 cards in the market this quarter. With higher costs than AMD per card and better performance from AMD’s DX11 parts, would you expect things to be any different?

Things Get Better Next Year

NVIDIA launched GT200 on too old of a process (65nm) and they were thus too late to move to 55nm. Bumpgate happened. Then we had the issues with 40nm at TSMC and Fermi’s delays. In short, it hasn’t been the best 12 months for NVIDIA. Next year, there’s reason to be optimistic though.

When Fermi does launch, everything from that point should theoretically be smooth sailing. There aren’t any process transitions in 2010; it’s all about execution at that point and how quickly NVIDIA can get Fermi derivatives out the door. AMD will have virtually its entire product stack out by the time NVIDIA ships Fermi in quantities, but NVIDIA should have competitive product out in 2010. AMD wins the first half of the DX11 race; the second half will be a bit more challenging.

If anything, NVIDIA has proved to be a resilient company. Other than Intel, I don’t know of any company that could’ve recovered from NV30. The real question is: how strong will Fermi 2 be? Stumble twice and you’re shaken; do it a third time and you’re likely to fall.

106 Comments

Zool - Thursday, October 15, 2009

And what is such a novelty about Nvidia that others don't have? Oh wait, maybe the PR team or the shiny flashy Nvidia page that lets you believe that even the most useless product is the customer's never-ending dream.

I need to admit that AMD is way behind in those areas.

I wouldn't say it's novelty, but it seems it's working for the shareholders.

jasperjones - Wednesday, October 14, 2009

Anand, while I generally agree with your comment, I believe there is one area where Nvidia has a competitive advantage: drivers and software.

Two examples:

- the GPGPU market. On the business side, double-precision (DP) arithmetic is of tremendous importance to scientists. GT200 is vastly inferior in DP arithmetic to R700, yet people bought Nvidia due to better software (ATI is also better in DP performance if you compare its enterprise GPUs to similarly-priced Nvidia GPUs). On the consumer side, look at the sheer number of apps that use CUDA vs the tiny number of apps that use Stream. If I look at other things (OpenCL or C/C++/Fortran support), I also see Nvidia ahead of AMD/ATI.

- Linux drivers. AMD has stepped things up but Nvidia drivers are still vastly superior to ATI drivers.

I know they're trading blows in some other areas closely related to software (Nvidia has PhysX, AMD DX11) but my own experience still is that Nvidia has the better software.

medi01 - Friday, October 16, 2009

Pardon me, but isn't like 95+% of the gaming market Windows, not Linux?

AtwaterFS - Wednesday, October 14, 2009

Where is Silicon Doc? Is he busy shoving a remote up his a$$ as Nvidia takes it up theirs? MUAHAHAHAHA!

Seriously tho, I need Nvidia to drop price on 58xx cards so I can buy one for TES 5 / Fallout 4 - WTF!

tamalero - Sunday, October 18, 2009

he was banned, you forgot?

Transisto - Wednesday, October 14, 2009

I never play games but spend a lot of money (to me) and time into a folding farm.

Winter is setting in and I plan on heating the whole house with these: 9600, 9800 and 260. So the timing is now!

To me this is a bad time to invest in folding GPUs because the decisions are taking too much precious brainwidth.

My worries are:

Will there still be demand for low end 9600 and GTS 250 cards, or even G200 cards, once the G300 comes out?

And more importantly, would the performance of these antique G92 and G200 parts still be efficient at research computing (price wise)?

I was actually purchasing more GTX 260s because these could still sell after I upgrade to something better. But these days I am more into buying used G92s on the cheap.

Thank You.

The0ne - Wednesday, October 14, 2009

This doesn't come as a shock to the "few" of us that had believed NVidia was in trouble, won't have competition and will most likely leave the high end business video market. I've even posted a link on a Dailytech article, although from an ironic website name.

What I'm concerned about is why there aren't reports on the issues around NVidia's business/management? They have been undergoing restructuring for at least a year now and workers have been laid off here and there. Does Anandtech have any info on this matter? I haven't checked lately but the CA based has been struggling.

Personally, I think some "body" has gone Financial on the business and NVidia is suffering. Planning and strategies are either nonexistent, poor, or poorly communicated/executed. I think they're going to fail and go the way of Matrox. It's too bad since they were doing well. I've been around too many companies like this to not know that upper management just got greedy and lazy. Again, that's just personal opinion from what I've read and know.

Does anyone have any direct info regarding my concerns?

iwodo - Wednesday, October 14, 2009

Where did you hear they have laid people off? As far as I am concerned, they are actually hiring MORE engineers to work on their products.

frozentundra123456 - Wednesday, October 14, 2009

I have to say that I am somewhat disappointed with AMD's new lineup, even though I would prefer AMD to do well. They sure need it.

If nVidia could switch to GDDR5 and keep a larger bus, it seems like they could really outperform AMD. Even the current generation of cards from nVidia is relatively competitive with AMD's new cards, except of course for DX11. The "problem" is that nVidia seems to be trying to do too much with the GPU instead of just making good graphics performance.

Anyway, let's hope that the new generation from nVidia at least is competitive enough to push down prices on AMD's new lineup, which seems overpriced for the performance, especially the 5770 and to a lesser extent the 5750.

MonkeyPaw - Wednesday, October 14, 2009

The problem is, it's all about making a profit. The GT200 was in theory a great product, but AMD essentially matched it with RV770, vastly eroding nVidia's profits on their entire top line. GT300 might be fantastic, but let's be realistic. The economy kinda sucks, and people are worried about becoming unemployed (if they aren't already). Being the best at all costs only works in prosperous times, but being good enough at the best price means much more in times like these. And considering that it takes 3 monitors to stress a 5870 on today's titles, how much better does the GT300 need to be?