NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

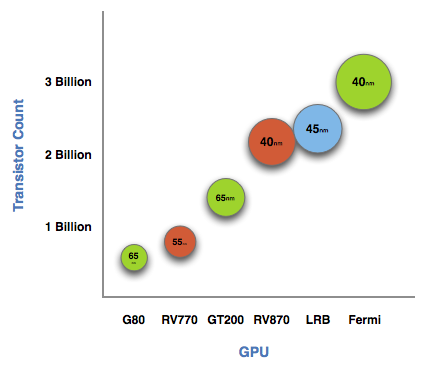

The graph below is one of transistor count, not die size. Inevitably, on the same manufacturing process, a significantly higher transistor count translates into a larger die size. But for the purposes of this article, all I need to show you is a representation of transistor count.

See that big circle on the right? That's Fermi. NVIDIA's next-generation architecture.

NVIDIA astonished us with GT200 tipping the scales at 1.4 billion transistors. Fermi is more than twice that at 3 billion. And literally, that's what Fermi is - more than twice a GT200.

At a high level the specs are simple. Fermi has a 384-bit GDDR5 memory interface and 512 cores. That's more than twice the processing power of GT200 but, just like RV870 (Cypress), it's not twice the memory bandwidth.
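To put the bandwidth point in numbers, here is a rough sketch. The GT200 figures (512-bit bus, 2.214 GT/s GDDR3, as shipped on the GeForce GTX 280) come from NVIDIA's published specs rather than from this article, and the Fermi data rate is purely a placeholder, since clock speeds have yet to be finalized.

```python
def bandwidth_gb_s(bus_width_bits, data_rate_gt_s):
    """Peak bandwidth in GB/s: bytes per transfer times transfers per second."""
    return bus_width_bits / 8 * data_rate_gt_s

# Assumption: GeForce GTX 280 (GT200) shipping specs, not taken from this article.
gt200 = bandwidth_gb_s(512, 2.214)

# Hypothetical: Fermi memory clocks are not final; 4.0 GT/s is only a placeholder.
fermi_guess = bandwidth_gb_s(384, 4.0)

# Data rate a 384-bit bus would need in order to double GT200's bandwidth.
rate_for_2x = 2 * gt200 * 8 / 384

print(f"GT200: {gt200:.1f} GB/s")
print(f"Fermi at 4.0 GT/s (placeholder): {fermi_guess:.1f} GB/s, "
      f"{fermi_guess / gt200:.2f}x GT200")
print(f"Data rate needed for 2x GT200: {rate_for_2x:.2f} GT/s")
```

With the narrower 384-bit bus, the memory would have to run at roughly 5.9 GT/s just to double GT200, so whatever clocks NVIDIA settles on, a doubling of bandwidth looks unlikely.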

The architecture goes much further than that, but NVIDIA believes that AMD has shown its cards (literally) and is very confident that Fermi will be faster. The questions are at what price and when.

The price is a valid concern. Fermi is a 40nm GPU just like RV870 but it has a 40% higher transistor count. Both are built at TSMC, so you can expect that Fermi will cost NVIDIA more to make than ATI's Radeon HD 5870.
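The transistor comparisons are easy to check with a few lines of arithmetic. The GT200 and Fermi counts are the ones quoted above; the 2.15 billion figure for RV870 is AMD's published number and is an assumption here, since this article doesn't state it.

```python
GT200_TRANSISTORS = 1.4e9   # from the article
FERMI_TRANSISTORS = 3.0e9   # from the article
RV870_TRANSISTORS = 2.15e9  # assumption: AMD's published count, not stated in this article

# How many GT200s' worth of transistors fit in one Fermi.
fermi_vs_gt200 = FERMI_TRANSISTORS / GT200_TRANSISTORS

# Fractional increase in transistor count over RV870.
fermi_over_rv870 = FERMI_TRANSISTORS / RV870_TRANSISTORS - 1

print(f"Fermi vs GT200: {fermi_vs_gt200:.2f}x")
print(f"Fermi vs RV870: {fermi_over_rv870:.0%} more transistors")
```

That works out to about 2.14x GT200 and roughly 40% more transistors than RV870. On the same 40nm process, more transistors means a larger die, fewer candidate dies per wafer and, all else equal, a higher cost per chip.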

The timing is just as valid a concern, because while Fermi currently exists on paper, it's not a product yet. Fermi is late. Clock speeds, configurations and price points have yet to be finalized. NVIDIA just recently got working chips back and it's going to be at least two months before I see the first samples. Widespread availability won't be until at least Q1 2010.

I asked two people at NVIDIA why Fermi is late: NVIDIA's VP of Product Marketing, Ujesh Desai, and NVIDIA's VP of GPU Engineering, Jonah Alben. Ujesh responded: because designing GPUs this big is "fucking hard".

Jonah elaborated, as I will attempt to do here today.

415 Comments

PorscheRacer - Thursday, October 1, 2009 - link

I have no clue what the red rooster thing implies, and I never understood why people called nVIDIA the green goblin. Until now. You, sir, have made it clear to me. They are called the green goblin, because that's where the trolls come from. Like wow. Your partisan and righteous thinking has no merit, no basis except conjecture and criticism. Save a keyboard, chill out and let's see if you can post anything in here without using the words, nVIDIA, ATI, red rooster, green goblin, and anything with ALL CAPS.

It's fine to be passionate about something. But to excessive extents that push everyone else away and leave people ashamed, discouraged and embarrassed; that's not how to win hearts and minds. I can already see you getting riled up over this post telling you to chill out....

SiliconDoc - Friday, October 2, 2009 - link

Hmmmm, that's very interesting. First you go into a pretend place where you assume green goblin is something "they call" nVIDIA, but just earlier, you'd never seen it in print before in your life.

Along with that little fib problem, you make the rest of the paragraph a whining attack. One might think you need to settle down and take your own medicine.

And speaking of advice, your next paragraph talks about what you did in your first that you claim no one should, so I guess you're exempt in your own mind.

kirillian - Thursday, October 1, 2009 - link

Yall...seriously...leave the poor NVidia Fanboy alone. His head is probably throbbing with the fact that he found his first website (other than HardOCP) that isn't extremely NVidia biased.

SiliconDoc - Friday, October 2, 2009 - link

Gee, I find that interesting that you know all about bias at other websites...So that says what again about here ?

silverblue - Thursday, October 1, 2009 - link

The 5870 is but one single GPU. The 295 is two and costs more. The 4870X2/CF is also a case of two GPUs. A 5870X2 would annihilate everything out there right now, and guess what? 5870 CF does just that. If money is no object, that would be the current option, or 5850s in CF to cut down on power usage and a fair amount of the cost without substantially decreasing performance.

By stating "if someone wants to get their next-gen performance now", of course he's going to point in the direction of ATI as they are the only people with a DX11 part, and they currently hold the single GPU speed crown. This will not be the case in a few months, but for now, they do.

SiliconDoc - Friday, October 2, 2009 - link

I kinda doubt the 5870x2 blows away GTX295 quad, don't you ?

--

Now you want to whine cost, too, but then excuse it for the 5870CF. LOL.

Another big fat riotous red rooster.

Really, you people love lies, and what's bad when it's nvidia, is good when it's ati, you just exactly said it !

ROFLMAO

--

Should I go get a 295 quad setup review and show you ?

--

How come you were wrong, whined I should settle down, then came back blowing lies again ?

There's no DX11 ready to speak of, so that's another pale and feckless attempt at the face save, after your excited, out of control, whipped up incorrect initial post, and this follow up fibber.

You need to settle down. "I want you banned"

Finally, you try to pretend you're not full of it, with your spewing caveat of prediction, "this will not be the case in a few months" - LOL

It's NOT the case NOW, but in a few months, it sure looks like it might BE THE CASE NO MATTER WHAT, unless of course ati launches the 5870x2 along with nvidia's SC GT300, which for all I know could happen.

So, even in that, you are NOT correct to any certainty, are you...

LOL

Calm down, and think FIRST, then start on your rampage without lying.

silverblue - Friday, October 2, 2009 - link

My GOD... you're a retard of the highest order.

Why would I want to compare a dual GPU setup with an 8 GPU setup? What numpty would do that when it would logically be far faster? Even a quad 5870 setup wouldn't beat a quad 295 setup, and you know what? WE KNOW! 8 cores versus 4 is no contest. Core for core, RV870 is noticeably faster than the GT200 series, but you're the only person attempting to compare a single GPU card to a dual GPU card and saying the single GPU card sucks because it doesn't win.

And where did I say "I want you banned"? As someone once said, "lay off the crack".

SiliconDoc - Friday, October 2, 2009 - link

Aren't you the one who claimed only ati for the next gen performance ?

Well, you really blew it, and no face save is possible. A single NVIDIA card beats the best single ati card. PERIOD.

It's true right now, and may or may not change within two months.

PERIOD.

silverblue - Friday, October 2, 2009 - link

No, I said that ATI currently has the single GPU crown. Not card - GPU. In a couple of months, ATI may have the 5870X2 out, and that WILL send the 295 the way of the dodo if it's priced correctly.

No face saving necessary on my part.

Zaitsev - Wednesday, September 30, 2009 - link

^^LOL. I don't see what all the bickering is about. If you're willing to wait a few more months, then you can buy a faster card. If you want to buy now, there are also some nice options available. Currently there are 5 brands of 5870's and 1 5850 at the egg.