NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in: GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the efforts required to build any multi-billion transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different than a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one op per clock per core philosophy comes from a basic desire to execute single threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

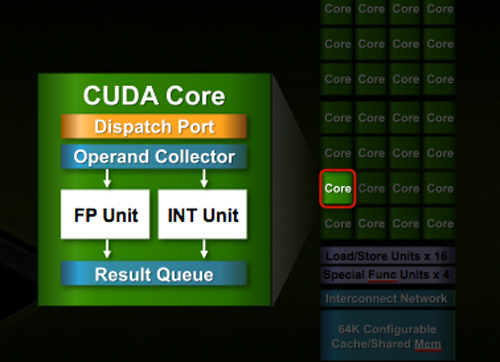

Despite the similarities, large parts of the architecture have evolved. The redesign happened as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I’m going to call them cores.

All of the processing done at the core level is now to IEEE spec. That’s IEEE 754-2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add (FMA) is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry standards compliant and give you the results that you’d expect.
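To make the old limitation concrete, here is a minimal Python sketch of how a 32-bit multiply can be built out of a multiplier that only looks at the low 24 bits of each operand. The names `mul24` and `mul32_emulated` are hypothetical, and the 16-bit split is one textbook approach, not a description of the instruction sequence NVIDIA's compiler actually emitted.

```python
MASK24 = 0xFFFFFF
MASK32 = 0xFFFFFFFF


def mul24(a, b):
    """Hypothetical 24-bit multiplier: only the low 24 bits of each
    operand are used, and the low 32 bits of the product are returned."""
    return ((a & MASK24) * (b & MASK24)) & MASK32


def mul32_emulated(a, b):
    """Low 32 bits of a 32-bit x 32-bit product, using only mul24.

    Each operand is split into 16-bit halves so that every partial
    product fits comfortably inside the 24-bit multiplier.
    """
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF

    low = mul24(a_lo, b_lo)  # contributes to bits 0..31
    # Cross terms are shifted left by 16, so only their low 16 bits
    # survive in a 32-bit result. The a_hi * b_hi term is shifted by
    # 32 and drops out entirely.
    cross = (mul24(a_hi, b_lo) + mul24(a_lo, b_hi)) & 0xFFFF
    return (low + (cross << 16)) & MASK32


if __name__ == "__main__":
    a, b = 0x12345678, 0x9ABCDEF0
    print(hex(mul32_emulated(a, b)), hex((a * b) & MASK32))
```

The emulated path needs three multiplies plus shifts and adds where native 32-bit hardware needs a single instruction, which is the overhead Fermi removes.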

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of 32-bit FP; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn’t disclosing clock speeds yet, so we don’t know exactly what that rate is.

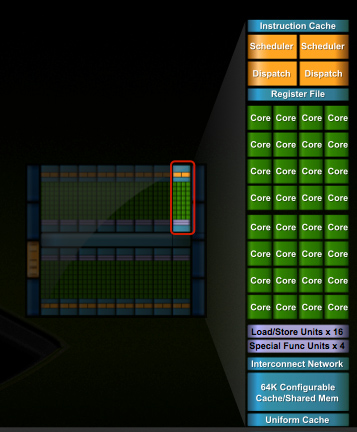

In G80 and GT200 NVIDIA grouped eight cores into what it called an SM. With Fermi, you get 32 cores per SM.

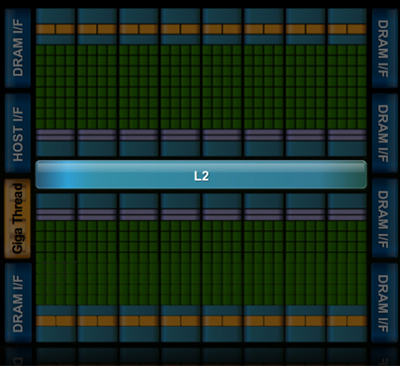

The high end single-GPU Fermi configuration will have 16 SMs. That’s fewer SMs than GT200, but more cores. 512 to be exact. Fermi has more than twice the core count of the GeForce GTX 285.
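With the core count known, the peak rates reduce to simple arithmetic once a clock is chosen. The sketch below assumes each core retires one fused multiply-add per clock and counts it as two floating point operations. The 1.5 GHz clock is a placeholder of mine, not a disclosed figure, and `peak_gflops` is a hypothetical helper.

```python
def peak_gflops(cores, clock_ghz, flops_per_core_per_clock=2):
    """Peak rate in GFLOPS, assuming one FMA (2 FLOPs) per core per clock."""
    return cores * clock_ghz * flops_per_core_per_clock


FERMI_CORES = 512          # from the article
ASSUMED_CLOCK_GHZ = 1.5    # hypothetical: NVIDIA has not disclosed clocks

fp32 = peak_gflops(FERMI_CORES, ASSUMED_CLOCK_GHZ)
fp64_fermi_ratio = fp32 / 2   # Fermi: FP64 runs at 1/2 the FP32 rate
fp64_old_ratio = fp32 / 8     # the same FP32 rate at GT200's 1/8 ratio

if __name__ == "__main__":
    print(f"FP32 peak at assumed clock:  {fp32:.0f} GFLOPS")
    print(f"FP64 peak at 1/2 ratio:      {fp64_fermi_ratio:.0f} GFLOPS")
    print(f"FP64 peak at old 1/8 ratio:  {fp64_old_ratio:.0f} GFLOPS")
```

Whatever the real clock turns out to be, the move from a 1/8 to a 1/2 ratio is a 4x gain in FP64 throughput relative to FP32, before counting the extra cores.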

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
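The per-SM numbers can be cross-checked with a few lines of Python. The sketch uses only figures quoted in this article (total cores, cores per SM, SFU pipelines per SM). The helper names are hypothetical.

```python
def sm_count(total_cores, cores_per_sm):
    """Number of SMs implied by a total core count."""
    sms, leftover = divmod(total_cores, cores_per_sm)
    if leftover:
        raise ValueError("core count is not a multiple of cores per SM")
    return sms


def cores_per_sfu_pipeline(cores_per_sm, sfu_pipelines_per_sm):
    """How many cores share each SFU pipeline inside one SM."""
    return cores_per_sm / sfu_pipelines_per_sm


if __name__ == "__main__":
    print("Fermi SMs:", sm_count(512, 32))
    print("GT200 SMs:", sm_count(240, 8))
    print("G80 SMs:  ", sm_count(128, 8))
    print("GT200 cores per SFU pipeline:", cores_per_sfu_pipeline(8, 2))
    print("Fermi cores per SFU pipeline:", cores_per_sfu_pipeline(32, 4))
```

Fermi ends up with twice as many cores competing for each SFU pipeline as GT200 had, so code that leans heavily on transcendentals should not be expected to scale as well as plain multiply-add code.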

The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote peak single and dual-issue arithmetic rates any longer for NVIDIA GPUs.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn’t being disclosed today. With the launch's Tesla focus we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of the cores. This wasn’t a cache, but rather software managed memory. The application would have to knowingly move data in and out of it. The benefit here is predictability: you always know if something is in shared memory because you put it there. The downside is it doesn’t work so well if the application isn’t very predictable.

Branch heavy applications and many of the general purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
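The partitioning choice described above can be modeled in a few lines. This is only an illustration of the decision, assuming the two splits named in the article are the only options. `pick_partition` is a hypothetical helper, not part of any NVIDIA API.

```python
# (shared memory KB, L1 cache KB) splits of the 64KB per-SM memory
PARTITIONS_KB = [(16, 48), (48, 16)]


def pick_partition(shared_kb_needed, prefer_l1=True):
    """Pick a split that satisfies the kernel's shared memory need.

    Among the splits that fit, favor the larger L1 cache by default,
    or the larger shared memory region when prefer_l1 is False.
    """
    fits = [p for p in PARTITIONS_KB if p[0] >= shared_kb_needed]
    if not fits:
        raise ValueError(f"{shared_kb_needed}KB of shared memory does not fit")
    index = 1 if prefer_l1 else 0  # which field of the tuple to maximize
    return max(fits, key=lambda p: p[index])


if __name__ == "__main__":
    print(pick_partition(16))                   # GT200-era kernel, wants cache
    print(pick_partition(16, prefer_l1=False))  # same kernel, wants shared
    print(pick_partition(20))                   # needs more than 16KB shared
```

A kernel written against GT200's 16KB limit fits either split, which is why the choice comes down to whether its access pattern is predictable enough to manage by hand.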

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for doing an atomic memory op: Fermi is 5 to 20x faster here than GT200.


SiliconDoc - Thursday, October 1, 2009 - link

Good for you, one of 7 billion, and then again one of perhaps 20, as reported for Europe. But, all you see is yourself, because you're just that selfish. And, you're a big enough liar, that you even posted your insane smart aleck stupidity, like a little brat.

That's what you're about. Case closed.

bobvodka - Thursday, October 1, 2009 - link

Ah, I see, you have no facts to refute me with thus you fall back to unfounded insults safe in the knowledge that you are nothing but a troll hiding behind a keyboard. Sorry I wasted my time with you, clearly you aren't able to deal with the world in logical terms.

rennya - Thursday, October 1, 2009 - link

Uhmm... maybe because it is common knowledge that ATI can actually get 5870 launched properly, with multiple manufacturers on board, and get the retail stores stocked up? 20 for the whole Europe? What a joke. If I am a millionaire, I can get 20 of those 5870 GPU thing easily.

SiliconDoc - Thursday, October 1, 2009 - link

This is October 1st, not September 23rd, so for being a millionaire, you certainly are one ding dang dumb dumb.

gx80050 - Friday, October 2, 2009 - link

Isn't the internet great. It allows shitheads like yourself to say shit that would, in real life

get your head cracked open.

Hopefully you'll suffer the same fate fucking cunt.

Please turn to the loaded gun in your drawer, put it in your mouth, and pull the trigger,

blowing your brains out. You'll be doing the whole world a favor. Shitbag.

rennya - Friday, October 2, 2009 - link

Hahahaha.... even that today is already 1 October, you are still claiming that 5870 GPU is paper launch, when it is definitely not.

rennya - Thursday, October 1, 2009 - link

What paper launch? Is Newegg the only place to get one? Here somewhere in SE Asia getting one of these 5870 GPUs is as easy as going to a store, flashing your wad of cash at the cashier and then returning home with a box with pre-rendered 3D objects/characters on it (and of course an ATI 5870 GPU in it). In fact, after a week from the release date, there is a glut of them here already, mainly from Powercolor and HIS.

SiliconDoc - Thursday, October 1, 2009 - link

LOL - roflmao - So announce in the foreign tongue, and move to the next continent when ready, you dummy. They didn't do that. They LIED, again, and failed. A week late is better than several or a month or two for the 4870.

You can't buy quantity yet either, but for peons, who cares.

rennya - Thursday, October 1, 2009 - link

Uhmm... the second language in SE Asia is English. What, just because I can prove to you that 5870 launch is real, you started to deny it? Are you the typical American that thinks the rest of the world doesn't exist?

SiliconDoc - Thursday, October 1, 2009 - link

You can't prove anything to me, since you won't be proving the GT300 LAUNCHED like the author claimed. Instead, none of you quacking loons have anything but "foreign nation", no links and it's too late, and strangely none of you type in the Asian fashion.

LOL

So who the heck knows what you liars are doing anyway.

The paper standard was set by this site and its authors, and the 4870 was paper, the 4770 was paper, and this 5870 was paper, PERIOD, and as of this morning the 5850 was also PAPER LAUNCHED.

What's funny is only you morons deny it.

All the other IT channels admit it.

--

Good for you red roosters here, you're the only ones correct in the world. ( no, you're not really, and I had to say that because you'll believe anything )