AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs

Power, Temperature, & Noise

As we have mentioned previously, one of AMD’s big design goals for the 5800 series was to get the idle power load significantly lower than that of the 4800 series. Officially the 4870 does 90W, the 4890 60W, and the 5870 should do 27W.

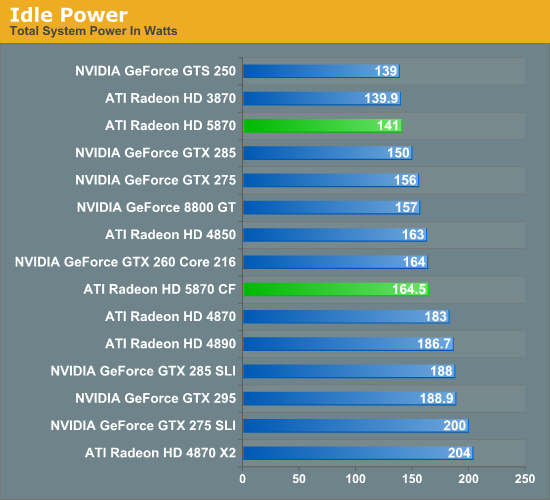

On our test bench, the idle power load of the system comes in at 141W, a good 42W lower than either the 4870 or 4890. The difference is even more pronounced when compared to the multi-GPU cards that the 5870 competes with performance-wise, with the gap opening up to as much as 63W when compared to the 4870X2. In fact the only cards that the 5870 can’t beat are some of the slowest cards we have: the GTS 250 and the Radeon HD 3870.

As for the 5870 CF, we see AMD’s CF-specific power savings in play here. They told us they can get the second card down to 20W, and on our rig the power consumption of adding a second card is 23.5W, which after taking power inefficiencies into account is right on the dot.
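As a rough check on that arithmetic, here is a minimal sketch. It assumes the 23.5W figure is measured at the wall and that the power supply is somewhere around 80 to 90 percent efficient. The efficiency values and the function name are illustrative assumptions, not measured figures.

```python
# Assumed PSU efficiency values for illustration; the actual efficiency of
# the test bench power supply is not stated in the article.
ASSUMED_EFFICIENCIES = (0.80, 0.85, 0.90)

# Increase in system power from adding a second 5870 (from the article).
WALL_DELTA_W = 23.5


def dc_power_from_wall(wall_watts: float, efficiency: float) -> float:
    """Estimate power delivered to the components from power drawn at the wall."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return wall_watts * efficiency


if __name__ == "__main__":
    for eff in ASSUMED_EFFICIENCIES:
        card_watts = dc_power_from_wall(WALL_DELTA_W, eff)
        print(f"{eff:.0%} efficient PSU: about {card_watts:.1f} W at the card")
```

With an assumed efficiency of 85 percent, the 23.5W increase works out to just under 20W delivered to the second card, which is in line with AMD's stated figure.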

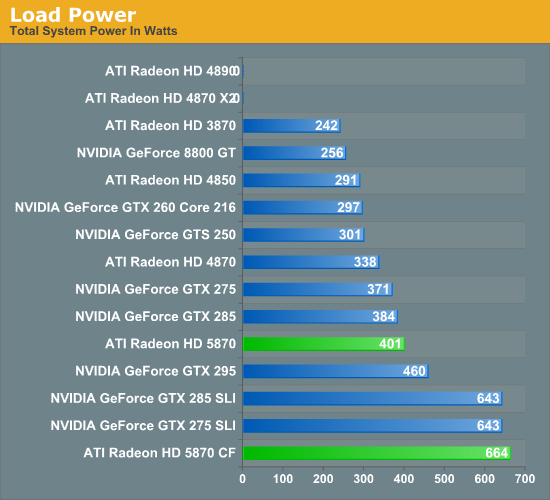

Moving on to load power, we are using the latest version of the OCCT stress testing tool, as we have found that it creates the largest load out of any of the games and programs we have. As we stated in our look at Cypress’ power capabilities, OCCT is being actively throttled by AMD’s drivers on the 4000 and 3000 series hardware. So while this is the largest load we can generate on those cards, it’s not quite the largest load they could ever experience. For the 5000 series, any throttling would be done by the GPU’s own sensors, and only if the VRMs start to overload.

In spite of AMD’s throttling of the 4000 series, right off the bat we have two failures. Our 4870X2 and 4890 both crash the moment OCCT starts. If you ever wanted proof as to why AMD needed to move to hardware based overcurrent protection, you will get no better example of that than here.

For the cards that don’t fail the test, the 5870 ends up being the most power-hungry single-GPU card, at 401W total system power. This puts it slightly ahead of the GTX 285, and well, well behind any of the dual-GPU cards or configurations we are testing. Meanwhile the 5870 CF takes the cake, beating every other configuration for a load power of 664W. If we haven’t mentioned this already we will now: if you want to run multiple 5870s, you’re going to need a good power supply.

Ultimately with the throttling of OCCT it’s difficult to make accurate predictions about all possible cases. But from our tests with it, it looks like it’s fair to say that the 5870 has the capability to be a slightly bigger power hog than any previous single-GPU card.

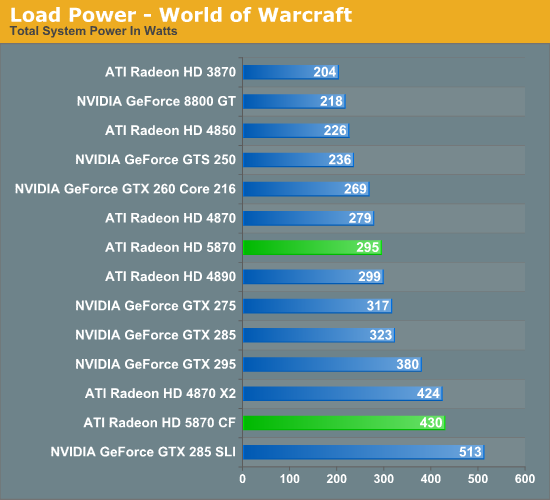

In light of our results with OCCT, we have also taken load power results for our suite of cards when running World of Warcraft. As it’s not a stress-tester it should produce results more in line with what power consumption will look like with a regular game.

Right off the bat, system power consumption is significantly lower. The biggest power hogs are the GTX 285 and GTX 285 SLI for single and dual-GPU configurations respectively. The bulk of the lineup is the same in terms of what cards consume more power, but the 5870 has moved down the ladder, coming in behind the GTX 275 and ahead of the 4870.

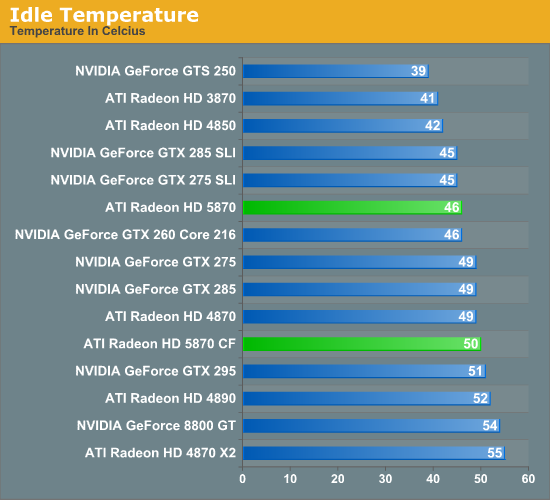

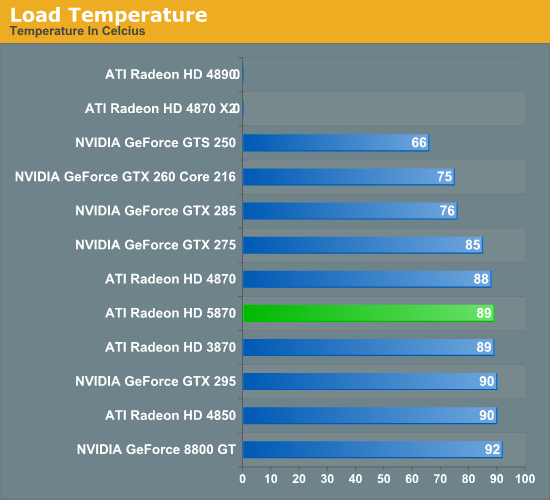

Next up we have card temperatures, measured using the on-board sensors of the card. With a good cooler, lower idle power consumption should lead to lower idle temperatures.

The floor for a good cooler looks to be about 40C, with the GTS 250, 3870, and 4850 all turning in temperatures around here. For the 5870, it comes in at 46C, which is enough to beat the 4870 and the NVIDIA GTX lineup.

Unlike power consumption, load temperatures are all over the place. All of the AMD cards approach 90C, while NVIDIA’s cards are between 92C for an old 8800GT, and a relatively chilly 75C for the GTX 260. As far as the 5870 is concerned, this is solid proof that the half-slot exhaust vent isn’t going to cause any issues with cooling.

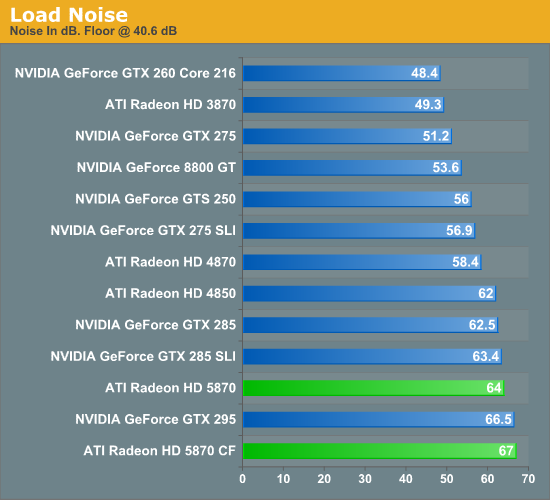

Finally we have fan noise, as measured 6” from the card. The noise floor for our setup is 40.4 dB.

All of the cards, save the GTX 295, generate practically the same amount of noise when idling. Given the lower energy consumption of the 5870 when idling, we had been expecting it to end up a bit quieter, but this was not to be.

At load, the picture changes entirely. The more powerful the card the louder it tends to get, and the 5870 is no exception. At 64 dB it’s louder than everything other than the GTX 295 and a pair of 5870s. Hopefully this is something that the card manufacturers can improve on later on with custom coolers, as while 64 dB at 6" is not egregious it’s still an unwelcome increase in fan noise.

327 Comments

shaolin95 - Wednesday, October 7, 2009 - link

So Eyefinity may use 100 monitors but if we are still gaming on the flat plane then it makes no difference to me.

Come on ATI, go with the real 3D games already..been waiting since the Radeon 64 SE days for you to get on with it.... :-(

GTX 295 for this boy as it is the only way to real 3D on a 60" DLP.

Nice that they have a fast product at good prices to keep the competition going. If either company goes down we all lose so support them both! :-)

Regards

raptorrage - Tuesday, October 6, 2009 - link

wow what a joke this review is but that i mean the reviewer stance on the 5870 sounds like he is a nvidia fan just because it like what 2-3fps off of the gtx 295 doesn't actually mean it can't catch that gpu as the driver updates come out and get the gpu to actually compete against that gpu and if i remember wasn't the GTX 295 the same when it came out .. its was good but it wasn't where we all thought it should have been then BAM a few months go by and it finds the performance it was missing

i don't know if this was a fail on anandtech or the testing practices but i question them as i've read many other review sites and they had a clear view where the 5870 / GTX 295 where neck N neck as i've seen them first hand so i go ahead and state them here head 2 head @ 1920x1200 but at 2560x1600 the dual gpu cards do take the top slot but that is expected but it isn't as big as a margin as i see it.

and clearly he missed the whole point YES the 5870 does compete with the GTX 295 i just believe your testing practices do come into question here because i've seen many sites where they didn't form the opinion that you have here it seems completely dismissive like AMD has failed i just don't see that in my opinion - I'll just take this review with a grain of salt as its completely meaningless

dieselcat18 - Saturday, October 3, 2009 - link

@Silicon Doc

Nvidia fan-boy, troll, loser....take your gforce cards and go home...we can now all see how terrible ATi is thanks you ...so I really don't understand why people are beating down their doors for the 5800 series, just like people did for the 4800 and 3800 cards. I guess Nvidia fan-boy trolls like you have only one thing left to do and that's complain and cry like the itty-bitty babies that some of you are about the competition that's beating you like a drum.....so you just wait for your 300 series cards to be released (can't wait to see how many of those are available) so you can pay the overpriced premiums that Nvidia will be charging AGAIN !...hahaha...just like all that re-badging BS they pulled with the 9800 and 200 cards...what a joke !.. Oh my, I must say you have me in a mood and the ironic thing is I do like Nvidia as much as ATi, I currently own and use both. I just can't stand fools like you who spout nothing but mindless crap while waving your team flag (my card is better than your's..WhaaWhaaWhaa)...just take yourself along with your worthless opinions and slide back under that slimly rock you came from.

Scali - Thursday, October 1, 2009 - link

I have the GPU Computing SDK aswell, and I ran the Ocean test on my 8800GTS320. I got 40 fps, with the card at stock, with 4xAA and 16xAF on. Fullscreen or windowed didn't matter.

How can your score be only 47 fps on the GTX285? And why does the screenshot say 157 fps on a GTX280?

157 fps is more along the lines of what I'd expect than 47 fps, given the performance of my 8800GTS.

Ryan Smith - Thursday, October 1, 2009 - link

Full screen, 2560x1600 with everything cranked up. At that resolution, it can be a very rough benchmark.

The screenshot you're seeing is just something we took in windowed mode with the resolution turned way down so that we could fit a full-sized screenshot of the program into our document engine.

Scali - Friday, October 2, 2009 - link

I've just checked the sourcecode and experimented a bit with changing some constants.

The CS part always uses a dimension of 512, hardcoded, so not related to the screen size.

So the CS load is constant, the larger you make the window, the less you measure the GPGPU-performance, since it will become graphics-limited.

Technically you should make the window as small as possible to get a decent GPGPU-benchmark, not as large as possible.

Scali - Friday, October 2, 2009 - link

Hum, I wonder what you're measuring though.

I'd have to study the code, see if higher resolutions increase only the onscreen polycount, or also the GPGPU-part of generating it.

Scali - Thursday, October 1, 2009 - link

That's 152 fps, not 257, sorry.