The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

Live Long and Prosper: The Logical Page

Computers are all about abstraction. In the early days of computing you had to write assembly code to get your hardware to do anything. Programming languages like C and C++ created a layer of abstraction between the programmer and the hardware, simplifying the development process. The key word there is simplification. You can be more efficient writing directly for the hardware, but it’s far simpler (and much more manageable) to write high level code and let a compiler optimize it.

The same principles apply within SSDs.

The smallest writable location in NAND flash is a page; that doesn’t mean that it’s the largest size a controller can choose to write. Today I’d like to introduce the concept of a logical page, an abstraction of a physical page in NAND flash.

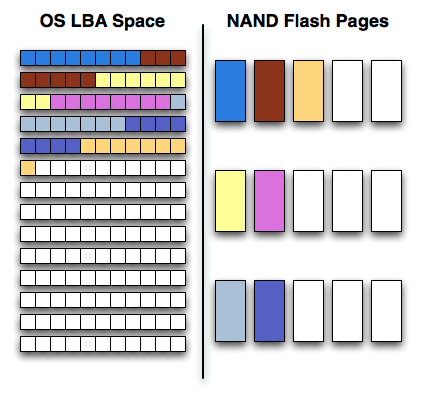

Confused? Let’s start with a (hopefully, I'm no artist) helpful diagram:

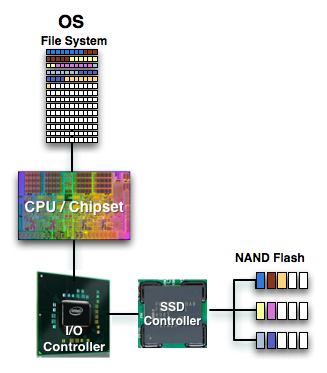

On one side of the fence we have how the software views storage: as a long list of logical block addresses. It’s a bit more complicated than that since a traditional hard drive is faster at certain LBAs than others but to keep things simple we’ll ignore that.

On the other side we have how NAND flash stores data, in groups of cells called pages. These days a 4KB page size is common.

In reality there’s no fence that separates the two, rather a lot of logic, several busses and eventually the SSD controller. The latter determines how the LBAs map to the NAND flash pages.

The most straightforward way for the controller to write to flash is by writing in pages. In that case the logical page size would equal the physical page size.
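To make the mapping a little more concrete, here is a minimal sketch of how a sector address lands in a logical page. It assumes 512-byte LBA sectors, and `logical_page_of` is a hypothetical helper made up for illustration; a real controller also has to translate the logical page into a physical location and track wear, none of which is shown here.

```python
SECTOR_SIZE = 512          # bytes per LBA (assumption; typical for drives of this era)
PHYSICAL_PAGE_SIZE = 4096  # bytes per NAND page, the 4KB figure from the text


def logical_page_of(lba, logical_page_size=PHYSICAL_PAGE_SIZE):
    """Return (logical page index, byte offset inside that page) for an LBA."""
    byte_address = lba * SECTOR_SIZE
    return divmod(byte_address, logical_page_size)


# With page-level mapping, eight consecutive sectors share one logical page.
print(logical_page_of(0))  # (0, 0)
print(logical_page_of(7))  # (0, 3584)
print(logical_page_of(8))  # (1, 0)
```

Passing a larger `logical_page_size` shows how many more sectors end up sharing a single tracked entry.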

Unfortunately, there’s a huge downside to this approach: tracking overhead. If your logical page size is 4KB then an 80GB drive will have no less than twenty million logical pages to keep track of (20,971,520 to be exact). You need a fast controller to sort through and deal with that many pages, a lot of storage to keep tables in and larger caches/buffers.

The benefit of this approach however is very high 4KB write performance. If the majority of your writes are 4KB in size, this approach will yield the best performance.

If you don’t have the expertise, time or support structure to make a big honkin’ controller that can handle page-level mapping, you go to a larger logical page size. One such example would involve making your logical page equal to an erase block (128 x 4KB pages, or 512KB). This significantly reduces the number of pages you need to track and optimize around; instead of roughly 21 million entries, you now have approximately 164 thousand (163,840). All of your controller’s internal structures shrink in size and you don’t need as powerful a microprocessor inside the controller.
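The entry counts quoted above are easy to check. The sketch below assumes that "80GB" means 80 x 2^30 bytes (that is what reproduces the 20,971,520 figure) and, purely for illustration, that each mapping entry costs 4 bytes. The entry size is my assumption, not a number from any controller vendor.

```python
GIB = 1024 ** 3
KIB = 1024
ENTRY_BYTES = 4  # assumption: bytes per mapping-table entry, for illustration only


def mapping_entries(capacity_bytes, logical_page_bytes):
    """Number of logical pages the controller has to keep track of."""
    return capacity_bytes // logical_page_bytes


CAPACITY = 80 * GIB       # the 80GB drive from the text, read as binary gigabytes
PAGE = 4 * KIB            # logical page = one physical page
ERASE_BLOCK = 128 * PAGE  # logical page = one erase block (512KB)

for label, size in (("page-level", PAGE), ("block-level", ERASE_BLOCK)):
    entries = mapping_entries(CAPACITY, size)
    table_kib = entries * ENTRY_BYTES // KIB
    print(f"{label}: {entries:,} entries, ~{table_kib:,} KiB of table")
# page-level: 20,971,520 entries, ~81,920 KiB of table
# block-level: 163,840 entries, ~640 KiB of table
```

Whatever the real entry size is, the 128x gap between the two tables stays the same, which is the point of moving to a larger logical page.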

The benefit of this approach is very high large file sequential write performance. If you’re streaming large chunks of data, having big logical pages will be optimal. You’ll find that most flash controllers that come from the digital camera space are optimized for this sort of access pattern where you’re writing 2MB - 12MB images all the time.

Unfortunately, the sequential write performance comes at the expense of poor small file write speed. Remember that writing to MLC NAND flash already takes 3x as long as reading, but writing small files when your controller needs large ones worsens the penalty. If you want to write an 8KB file, the controller will need to write 512KB (in this case) of data since that’s the smallest size it knows to write. Write amplification goes up considerably.
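Here is a rough sketch of that penalty. It assumes writes are aligned to the start of a logical page and ignores garbage collection and any other background work, so it only captures the rounding-up effect described above. The function names are hypothetical.

```python
KIB = 1024


def bytes_written(request_bytes, logical_page_bytes):
    """Round a write request up to a whole number of logical pages."""
    pages = -(-request_bytes // logical_page_bytes)  # ceiling division
    return pages * logical_page_bytes


def write_amplification(request_bytes, logical_page_bytes):
    """Ratio of bytes the controller writes to bytes the host asked for."""
    return bytes_written(request_bytes, logical_page_bytes) / request_bytes


print(write_amplification(8 * KIB, 512 * KIB))  # 64.0
print(write_amplification(8 * KIB, 4 * KIB))    # 1.0
```

Under these assumptions the 8KB write costs 64x more flash writes with a 512KB logical page than with a 4KB one.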

Remember the first OCZ Vertex drive based on the Indilinx Barefoot controller? Its logical page size was equal to a 512KB block. OCZ asked for a firmware that enabled page level mapping and Indilinx responded. The result was much improved 4KB write performance:

| Iometer 4KB Random Writes, IOqueue=1, 8GB sector space | Logical Block Size = 128 pages | Logical Block Size = 1 Page |
| --- | --- | --- |
| Pre-Release OCZ Vertex | 0.08 MB/s | 8.2 MB/s |

295 Comments

Ardax - Tuesday, September 1, 2009

Installing a non-OEM drive is not going to void the warranty on the rest of the system. And as the other commenter posted, your problem isn't reliability, it's performance. Anand's excellent article shows the performance dropoff of the Samsung drives.

Finally, if you do get another SSD (or still have one currently), definitely disable Prefetching. SuperFetch and ReadyBoost are read-only as far as the SSD is concerned, but Prefetch optimizations do write to the drive. It selectively fragments files so that booting the system and launching the profiled applications do as much sequential reading of the HD as possible. Letting prefetch reorganize all those files is bad on any SSD, and extra bad on one where you're seeing write penalties.

...

And "One More Thing" (apologies to Steve Jobs)! Check out FlashFire (http://flashfire.org/). It's a program designed to help out with low end SSDs. At a very basic level, what it does is use some of your RAM as a massive write-coalescing cache and put that between the OS and your SSD. It collects a series of small random writes from the OS and applications and tries to turn them into a large sequential write for your SSD. It's beta, and I've never attempted to use it, but if it works for you it might be a life-saver.

heulenwolf - Friday, September 4, 2009

Thanks again for the feedback, Ardax. Duly noted about the Dell warranty. They will continue to warrant the rest of the laptop, AFAIK, even if we install a 3rd party drive.

Can you point to your source on the statements about how prefetch fragments files on the drive? Nothing I've read about it describes it as write intensive.

I'd like to point out that this SSD is not a low-performance unit, the kind Flashfire is supposed to help with. It was one of the fastest drives available last year, before Intel's drives came out and set the curve. When it's performing normally, this system boots Vista in ~30 seconds. It uses SLC flash with an order of magnitude more write cycles than comparable MLC-based drives. Were standard Windows installs the cause of these failures, we would have heard about MLC drives failing similarly within the first month.

It's also a business machine so loading alpha rev software on it for performance optimization isn't really an option. The known issues on Flashfire's site make it not worth the risk until it's more mature.

ggathagan - Wednesday, September 16, 2009

One possible work-around:

I know that, for instance, if I buy a drive from Dell for one of my servers, that drive is covered under the Dell warranty for that server.

Dell sells a Kingston re-branded Intel X25-E drive and a Corsair Extreme SSD drive.

The Corsair Extreme series is an Indilinx SSD drive:

http://www.corsair.com/products/ssd_extreme/defaul...

I don't know if this applies to non Dell-branded products that Dell sells, but it might be worth looking into.

TGressus - Wednesday, September 2, 2009

"Disable Defrag, SuperFetch, ReadyBoost, and Application and Boot Prefetching"

This is keen advice, especially for the OP's laptop SSD usage scenario. Definitely disable these services.

I'd also suggest disabling Automatic Restore Points on the SSD Volume(s) from the System Protection task in the "System" Control Panel. When enabled this setting can generate a lot of file I/O and will add to block fragmentation and eventual garbage collection. http://en.wikipedia.org/wiki/System_Restore

Regular external backup and disk imaging should be just as effective, without the resource penalties.

heulenwolf - Friday, September 4, 2009

TGressus, thanks for the feedback. With the exception of defrag, however, I can't find any real-world data about these services that leads me to believe they place undue wear on the SSD. Sure, disabling them may free up marginal system resources for performance operation, but I haven't heard anything leading me to believe they're the cause of the repeated failures I saw. Since none of those features are check-box-disable-able items (again, beyond defrag) - they seem to require custom registry hacks - I'm not comfortable performing them on my business machine purely for performance optimization.

I guess I've narrowed it down to options A or C from my original question:

A) Am I that 1 in a bazillion case of having gotten a bad system followed by a bad drive followed by another bad drive

B) Is there something about Vista - beyond auto defrag - that accelerates the wear and tear on these drives

C) Is there something about Samsung's early SSD controllers that drops them to a lower speed under certain conditions (e.g. poorly implemented SMART diagnostics)

D) Is my IT department right and all SSDs are evil ;)?

gstrickler - Monday, August 31, 2009

Drive reliability does not appear to be a problem in your case. There is nothing in your description that indicates the drives failed, only that the performance dropped to unacceptable levels. That is exactly the situation described by Anand's earlier tests of SSDs, especially with earlier firmware revisions (any brand) or with heavily used Samsung drives.

I presume your swap space (paging file) is on the SSD? The symptoms you describe would occur if writing to the swap space (which Windows will do during boot up/login) is slow. You might be able to regain some performance and/or delay the reappearance of symptoms by simply moving your swap space off the SSD.

For your purposes, it sounds like your best solution would be to switch to an Intel or Indilinx drive, probably an SLC drive, but a larger MLC drive might work well also. Dell won't warranty the new drive, but it won't "void" your warranty either. You'll still have the remainder of the warranty from Dell on everything except the new SSD, which will be under warranty from the company making the drive. If you have a support contract with Dell, they might try to point to the non-Dell SSD as an issue, but at least with the Gold/Enterprise support group, I have not found Dell to do that type of finger pointing.

The Intel drives are now good at automatically cleaning up with repeated writing, while with an Indilinx drive, you may need to occasionally (perhaps every 6 months) run the "Wiper" utility to restore performance.

Also, you indicate your drive is about 3/4 full; if you can reduce that, you may see less of a performance hit. You can do that by removing some data, moving data to a secondary drive (HD or SSD), or buying a larger SSD.

If you're working with large data files that you're not accessing and updating randomly (e.g. you're not primarily working with a large database), then you might benefit from having your OS and applications on the SSD, but use a HD for your data files and/or temp/swap space. Of course, make sure you have sufficient RAM to minimize any swapping regardless of whether you're using a HD or an SSD.

heulenwolf - Tuesday, September 1, 2009

gstrickler - duly noted about the Dell warranty.

I have to disagree that drive reliability is not the issue for two reasons, only the first of which I'd mentioned before:

1) Dell's diagnostics failed on the SSD

2) Anand's test results show major slowdowns, but not from 100 MB/s to 5 MB/s for both read and write operations. No matter what I did, even writing my own scripts to just read files as fast as it could, I couldn't get read access over 10 MB/s peak with average around 5. It's like the drive switched to an old PIO interface or something. The kinds of slowdowns in Anand's results do not lead to 15 minute boot times.

It's a laptop with only one drive bay so, yes, page file is on the SSD and a second drive isn't really an option. According to the Windows 7 engineering blog, linked by Ardax above, SSDs are a great place to store pagefiles. Since the system has 4 GB of RAM, it's not like the system has undue swap writing going on.

I can't imagine Samsung or Dell selling a drive with a 3 year warranty that would have to be replaced every 6 months under relatively normal OS wear and tear (swapping, prefetch). Vista was well past SP1 at the time the system was bought so they'd had plenty of time to qualify the drive for such uses. They'd both be out of business were this the case.

Agree that the best bet would be to switch brands but I'm kinda stuck on what's wrong with this one. Thanks for the feedback.

gstrickler - Thursday, September 3, 2009

That it failed Dell's diagnostics might indicate a problem, but do you know for certain that Dell's diagnostics pass on a properly functioning Samsung SSD? I don't know the answer to that, but it needs to be answered.

While Anand's tests don't show 90% drops in performance, his tests didn't continue to fragment the drive for months, so performance might continue to drop with increasing fragmentation.

More importantly, I've experienced problems with the Windows page file before; it does slow the system dramatically. Furthermore, the Windows page file does get written to as part of the boot process, so any performance problems with the page file will notably slow the boot; 15 minutes with Vista is not difficult to believe. While I haven't verified this on Vista, with NT4/W2k/XP the caching algorithm will allow reads to trigger paging other items out to disk, so even a simple read test can cause writes to the page file if the amount of data read approaches "free RAM". Again, performance problems with the page file could dramatically affect your results, even for a "read-only" test. Don't be so certain of your diagnosis unless you've eliminated these factors.

You should try removing the page file (or setting it to 2MB), then see what happens to performance.

heulenwolf - Friday, September 4, 2009

I had the same thought about Dell's diagnostics. I've run them again on the latest refurb drive and found that it passes all drive-related tests. Unfortunately, Dell's diagnostics simply spit out an error code which only they hold the secret decoder-ring to so I have no idea what the diagnosis was. This result isn't conclusive but it's another data point.

To more fully describe the testing I did, I wrote a script that generated a random data matrix and timed writing it to file. I then read the data back in, timing only the read, and compared the two datasets to ensure no read/write errors. I looped through this process hundreds to thousands of times with file sizes from 4k up to 50 MB. Since I was using an interpreted language, I don't put much stock in the performance times; however, I was using the lowest level read and write functions available. Additionally, my 5-10 MB/s numbers come from watching Vista's Resource Monitor while the test was running, not from the program. No other measured component of the system was taxed so I don't think the CPU or being near the physical memory limit, for example, was holding it up.

donjuancarlos - Monday, August 31, 2009

I have not found many articles on the net about SSDs and this one is even easy to understand.

The only negative part about this article is the Lenovo T400 I am typing on (it has a Samsung drive :( ) And I have to agree, startup times are nothing special.