ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST. Posted in GPUs

New Drivers From NVIDIA Change The Landscape

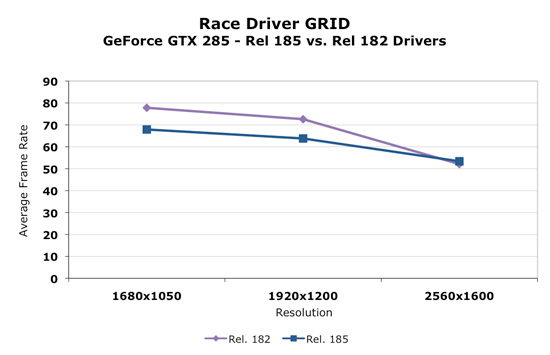

Today, NVIDIA will release its new 185 series driver. This driver not only enables support for the GTX 275, but also affects performance on parts across NVIDIA's lineup in a good number of games. We retested our NVIDIA cards with the 185 driver and saw some very interesting results. For example, take a look at before and after performance with Race Driver: GRID.

As we can clearly see, in the cards we tested, performance decreased at lower resolutions and increased at 2560x1600. This seemed to be the biggest example, but we saw flattened resolution scaling in most of the games we tested. This definitely could affect the competitiveness of the part depending on whether we are looking at low or high resolutions.

Some trade-off was made to improve performance at ultra high resolutions at the expense of performance at lower resolutions. It could be anything from a simple thing like creating more driver overhead (and more CPU limitation) to something much more complex. We haven't been told exactly what creates this situation, though. With higher end hardware, this decision makes sense, as resolutions lower than 2560x1600 tend to perform fine. 2560x1600 is more GPU limited and could benefit from a boost in most games.
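To make the CPU-limited versus GPU-limited reasoning concrete, here is a minimal sketch of a toy frame-time model. It assumes the simplest of the explanations floated above (more driver overhead per frame in exchange for slightly less GPU work per pixel). The function name `fps`, the two driver profiles, and every number in them are invented for illustration; they are not measurements and say nothing about what the 185 driver actually does.

```python
# Toy model: a frame takes as long as the slower of the CPU (driver + game)
# and the GPU, and GPU time grows with the number of pixels drawn.
# All numbers below are hypothetical, chosen only to illustrate the idea.

def fps(width, height, cpu_ms, gpu_ns_per_pixel):
    """Frames per second when frame time is max(CPU time, GPU time)."""
    gpu_ms = width * height * gpu_ns_per_pixel / 1e6
    return 1000.0 / max(cpu_ms, gpu_ms)

# Hypothetical drivers: the "new" one costs more CPU time per frame
# but spends slightly less GPU time per pixel.
OLD_DRIVER = {"cpu_ms": 8.0, "gpu_ns_per_pixel": 5.0}
NEW_DRIVER = {"cpu_ms": 12.0, "gpu_ns_per_pixel": 4.5}

RESOLUTIONS = [(1680, 1050), (1920, 1200), (2560, 1600)]

def compare():
    """Return (width, height, old_fps, new_fps) for each resolution."""
    rows = []
    for w, h in RESOLUTIONS:
        rows.append((w, h, fps(w, h, **OLD_DRIVER), fps(w, h, **NEW_DRIVER)))
    return rows

if __name__ == "__main__":
    for w, h, old, new in compare():
        print(f"{w}x{h}: old {old:.1f} fps, new {new:.1f} fps")
```

With these made-up profiles, the new driver is held back by its CPU cost at the two lower resolutions and only pulls ahead once the GPU becomes the bottleneck at 2560x1600, which is the flattened scaling pattern described above.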

Significantly different resolution scaling characteristics can be appealing to different users. An AMD card might look better at one resolution, while the NVIDIA card could come out on top with another. In general, we think these changes make sense, but it might be nicer if the driver automatically figured out what approach was best based on the hardware and resolution running (and thus didn't degrade performance at lower resolutions).

In addition to the performance changes, we see the addition of a new feature. In the past we've seen the addition of filtering techniques, optimizations, and even dynamic manipulation of geometry to the driver. Some features have stuck and some just faded away. One of the most popular additions to the driver was the ability to force Full Screen Antialiasing (FSAA), enabling smoother edges in games. This feature was more important at a time when most games didn't have an in-game way to enable AA. The driver took over and implemented AA even on games that didn't offer an option to adjust it. Today the opposite is true and most games allow us to enable and adjust AA.

Now we have the ability to enable a feature, which isn't available natively in many games, that could either be loved or hated. You tell us which.

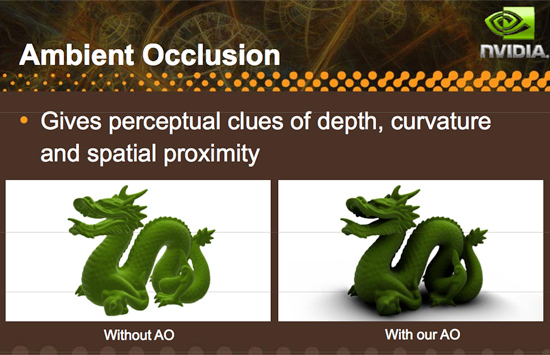

Introducing driver enabled Ambient Occlusion.

What is Ambient Occlusion, you ask? Well, look into a corner or around trim or anywhere that looks concave in general. These areas will be a bit darker than the surrounding areas (depending on the depth and other factors), and NVIDIA has included a way to simulate this effect in its 185 series driver. Here is an example of what AO can do:

Here's an example of what AO generally looks like in games:

This, as with other driver enabled features, significantly impacts performance and might not be able to run on all games or at all resolutions. Ambient Occlusion may be something some gamers like and some do not depending on the visual impact it has on a specific game or if performance remains acceptable. There are already games that make use of ambient occlusion, and some games that NVIDIA hasn't been able to implement AO on.

There are different methods to enable the rendering of an ambient occlusion effect, and NVIDIA implements a technique called Horizon Based Ambient Occlusion (HBAO for short). The advantage is that this method is likely very highly optimized to run well on NVIDIA hardware, but on the down side, developers limit the ultimate quality and technique used for AO if they leave it to NVIDIA to handle. On top of that, if a developer wants to guarantee that the feature works for everyone, they would need to implement it themselves, as AMD doesn't offer a parallel solution in their drivers (in spite of the fact that they are easily capable of running AO shaders).
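For readers who want a feel for how a screen-space AO pass darkens concave areas, here is a minimal sketch in Python. It is deliberately not HBAO and not NVIDIA's driver code: it is a crude depth-comparison approach that darkens a pixel according to how many of its neighbors sit closer to the camera. The function name `ambient_occlusion` and the `radius`, `bias`, and `strength` parameters are assumptions made up for this example.

```python
# Crude screen-space ambient occlusion on a depth buffer.
# Assumption: depth values grow with distance from the camera, so a
# neighbor with a smaller depth is "in front" and may block ambient light.
# This is an illustrative stand-in, not Horizon Based Ambient Occlusion.

def ambient_occlusion(depth, radius=1, bias=0.05, strength=0.5):
    """Return a per-pixel brightness factor in [0, 1] for a 2D depth buffer."""
    h, w = len(depth), len(depth[0])
    shade = [[1.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            occluders = 0
            samples = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if dy == 0 and dx == 0:
                        continue  # skip the pixel itself
                    if not (0 <= ny < h and 0 <= nx < w):
                        continue  # skip samples that fall off the screen
                    samples += 1
                    # A neighbor noticeably closer to the camera occludes us.
                    if depth[ny][nx] < depth[y][x] - bias:
                        occluders += 1
            if samples:
                shade[y][x] = 1.0 - strength * occluders / samples
    return shade

if __name__ == "__main__":
    # A flat surface with a one-pixel pit in the middle.
    pit = [[1.0, 1.0, 1.0],
           [1.0, 2.0, 1.0],
           [1.0, 1.0, 1.0]]
    for row in ambient_occlusion(pit):
        print(row)
```

Multiplying each pixel's color by its factor darkens recessed pixels and leaves flat or exposed ones untouched. Real implementations sample in view space, account for surface normals, and blur the result, all of which this sketch omits.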

We haven't done extensive testing with this feature yet, either looking for quality or performance. Only time will tell if this addition ends up being gimmicky or really hits home with gamers. And if more developers create games that natively support the feature we wouldn't even need the option. But it is always nice to have something new and unique to play around with, and we are happy to see NVIDIA pushing effects in games forward by all means possible even to the point of including effects like this in their driver.

In our opinion, lighting effects like this belong in engine and game code rather than the driver, but until that happens it's always great to have an alternative. We wouldn't think it a bad idea if AMD picked up on this and did it too, but whether it is more worthwhile to do this or to spend that energy encouraging developers to adopt this and comparable techniques for more complex lighting is totally up to AMD. And we wouldn't fault them either way.

294 Comments


sbuckler - Thursday, April 2, 2009

Big difference between Havok Physics and HavokFX physics. With physx you can just turn on hardware acceleration and it works, with havok this is not possible - unlike physx it was never developed to be run on the gpu. Hence havok have had to develop a new physics engine to do that.

No game uses the HavokFX engine - it's not even available to developers yet let alone in shipped games. The ati demo was all we have seen of it for several years. It's not even clear HavokFX is even a fully accelerated hardware physics engine - i.e. the version showed in the past (before intel took over havok) was basically the havok engine with some hw acceleration for effects. i.e. hardware accel could only be used to make it prettier explosions and rippling cloth - it could not be used to do anything game changing.

Hence havok have a way to go before they can even claim to support what physX already does, let alone shipping it to developers and then seeing them use it in games. Like I said the moment that comes close to happening nvidia will just release an OpenCL version of physX and that will be that.

z3R0C00L - Thursday, April 2, 2009

It's integrated in the same way. Many game developers are already familiar with coding for Havok effects.

Not to mention that OpenCL has chosen HavokFX (which is simply using either a CPU or a GPU to render Physics effect as seen here: http://www.youtube.com/watch?v=MCaGb40Bz58).

Again... Physx is dead. OpenCL is HavokFX, it's what the consortium has chosen and it runs on any CPU or GPU including Intel's upcoming Larrabee.

Like I said before (you seem to not understand logic). Physx is dead.. it's proprietary and not as flexible as Havok. Many studios are also familiar with Havok's tools.

C'est Fini as they say in french.

erple2 - Friday, April 3, 2009

I think you're mistaken - OpenCL is analogous to CUDA, not to PhysX. HavokFX is analogous to PhysX. OpenCL is the GPGPU compiler that runs on any GPU (and theoretically, it should run on any CPU too, I think). It's what Apple is now trying to push (curious, given that their laptop base is all nVidia now).

However, if NVidia ports PhysX to OpenCL, that's a win for everyone. Sort of. Except for NVIdia that paid a lot of money for the PhysX IP. I think that the conclusions given are accurate - NVidia is banking on "everyone" (ie Game Developers) coding for PhysX (and by extension, CUDA) rather than HavokFX (and by extension, OpenCL). However, if Developers are smart, they'll go with the actually open format (OpenCL, not CUDA). That means that any physics processing they do will work on ANY GPU, (NVidia and ATI). I personally think that NVidia banked badly this time.

While I do believe that doing physics calculations on unused GPU cycles is a great thing (and the Razor's Edge demo shows some of the interesting things that can be done), I think that NVidia's pushing of PhysX (and therefore CUDA) is like what 3dfx did with pushing GLide. Everyone supported Direct3D and OpenGL, but only 3dfx supported Glide. While Glide was more efficient (it was catering to a single hardware vendor that made Glide, afterall), the fact that Game Developers could instead program for OpenGL (or Direct3D) and get all 3D accelerators supported meant that the days of Glide were ultimately numbered.

I wonder if NVidia is trying to pull the industry to adopting its CUDA as a "standard". I think it's ultimately going to fail, however, given that the industry recognizes now that OpenCL is available.

Is OpenCL as mature as CUDA is? Or are they still kind of finalizing it? Maybe that's the issue - OpenCL isn't complete yet, so NVidia is trying to snatch up support in the Developer community early?

sbuckler - Friday, April 3, 2009

CUDA is in many ways a simplified version of OpenCL - in that CUDA knows what hardware it will run on so has set functions to access it, OpenCL is obviously much more generic as it has to run on any hardware so it's not quite as easy. That part of the reason why CUDA is initially at least more popular then OpenCL - it's easier to work with. That said they are very similar so to port from one to the other won't be hard - hence develop for CUDA now then just port to OpenCL when the market demands it.

All in my opinion Ati want is their hardware to run with whatever physics standard is out there. Right now they are at a growing competitive disadvantage as hardware physics slowly takes off. Hence they demo HavokFX in the hope that either (a) it takes off or (b) nvidia are forced to port PhysX to openCL. I don't think they care which one wins - both products belong to a competitor.

Nvidia who have put a lot of money into PhysX want to maximise their investment so they will keep PhysX closed as long as possible to get people to buy their cards, but in the end I am sure they are fully aware they will have to open it up to everyone - it's just a matter of when. From our standpoint the sooner the better.

erple2 - Friday, April 3, 2009

Sure, but my point was simply that HavokFX and PhysX are physics API's, whereas OpenCL and CUDA are "general" purpose computing languages designed to run on a GPU.

Is CUDA easier to work with? I don't really know, as I've never programmed for either. Is OpenGL harder to program for than Glide was? Again, I don't know, I'm not a developer.

ATI's "CUDA" was "Stream" (I think). I recall ATI abandoning that for (or folding that into) OpenCL. That's a sound strategic decision, I think.

If PhysX is ported to OpenCL, then that's a major win for ATI, and a lesser one for NVidia - the PhysX SDK is already free for any developer that wants it (support costs money, of course). NVidia's position in that market is that PhysX currently only works on NVidia cards. Once it works elsewhere (via OpenCL or Stream), NVidia loses that "edge". However, that's a good thing...

SiliconDoc - Monday, April 6, 2009

I guess you're forgetting that recently NVidia supported a rogue software coder that was porting PhysX to ATI drivers. Those drivers hit the web and the downloads went wild high - and ATI stepped in and slammed the door shut with a lawsuit and threats.

Oh well, ATI didn't want you to enjoy PhysX effects. You got screwed, even as NVidia tried to help you.

So now all you and Derek and anand are left to do is sourpuss and whine PhysX sucks and means nothing.

Then anand tries Mirror's Edge ( because he HAS TO - cause dingo is gone - unavailable ) and falls in love with PhysX and the game. LOL

His conclusion ? He's an ati fabbyboi so cannot recommend it.

tamalero - Monday, April 20, 2009

the ammount of fud you spit is staggering

z3R0C00L - Thursday, April 2, 2009

On one hand you have OpenCL, Havok, ATi, AMD and Intel on the other you have nVIDIA.

Seriously.

z3R0C00L - Thursday, April 2, 2009

I'm an nVIDIA fan.. I'll admit. I like that you added CUDA and Physx.. but are we reading the same results?

The Radeon HD 4890 is the clear winner here. I don't understand how it could be any different.

CrystalBay - Thursday, April 2, 2009

I agree if nV wants to sell more cards they need to include the video software at no charge...