The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST- Posted in

- Storage

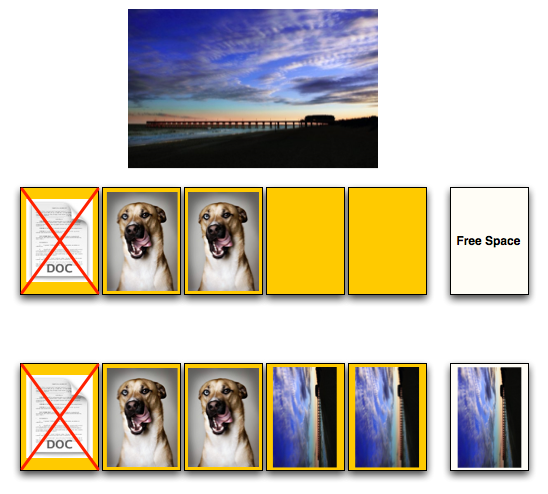

Free Space to the Rescue

There’s not much we can do about the scenario I just described; you can’t erase individual pages, that’s the reality of NAND-flash. There are some things we can do to make it better though.

The most frequently used approach is to under provision the drive. Let’s say we only shipped our drive with 20KB of space to the end user, but we actually had 24KB of flash on the drive. The remaining 4KB could be used by our controller; how, you say?

In the scenario from the last page we had to write 12KB of data to our drive, but we only had 8KB in free pages and a 4KB invalid page. In order to write the 12KB we had to perform a read-modify-write which took over twice as long as a 12KB write should take.

If we had an extra 4KB of space our 12KB write from earlier could’ve proceeded without a problem. Take a look at how it would’ve worked:

We’d write 8KB to the user-facing flash, and then the remaining 4KB would get written to the overflow flash. Our write speed would still be 12KB/s and everything would be right in the world.

Now if we deleted and tried to write 4KB of data however, we’d run into the same problem again. We’re simply delaying the inevitable by shipping our drive with an extra 4KB of space.

The more spare-area we ship with, the longer our performance will remain at its peak level. But again, you have to pay the piper at some point.

Intel ships its X25-M with 7.5 - 8% more area than is actually reported to the OS. The more expensive enterprise version ships with the same amount of flash, but even more spare area. Random writes all over the drive are more likely in a server environment so Intel keeps more of the flash on the X25-E as spare area. You’re able to do this yourself if you own an X25-M; simply perform a secure erase and immediately partition the drive smaller than its actual capacity. The controller will use the unpartitioned space as spare area.

250 Comments

View All Comments

SunSetSupaNova - Wednesday, March 18, 2009 - link

Just wanted to say great job Anand on a great article, it took me a while to read it from start to finish but it was well worth it!FHDelux - Wednesday, March 18, 2009 - link

That was the best review i have read in a long time. I originally bought an OCZ Core drive when they first came out. It was the worst piece of garbage i had ever used. Newegg wouldn't let me send it back and OCZ support forums told me all sorts of junk to get me to fix it but it was just a poorly designed drive. I eventually ended up getting the egg to take it back for credit and i wrote OCZ off as a company blinded by the marketing department. I currently own an Intel SSD and its wonderfull, everytime i see OCZ statements saying their drive competes with the Intel drive i would laugh and think back to the OCZ techs telling me i need to update my bios, or i need to install vista service pack 1 before it would work right.I am thankful that you slapped that OCZ big wig around until they made a good product. All of us out there that wasted our time and money on Pre-vertex generation drives are greatfull to you and the whole industry should be kissing your butt right now.

One thing these companies need to learn is that marketing isn't the answer, creating solid products is. Hopefully OCZ has learned their lesson, and because of your article i will give them another chance.

THANK YOU!

kelstertx - Wednesday, March 18, 2009 - link

I didn't want to worry about eventual failure of the Flash chips of an SSD, and went with an SDRAM based Ramdrive from Acard. These drives have no latency of any kind, since they use SDRAM, and no lifespan of write cycles. I've been using mine for a couple of weeks now, and I like it a lot. I put Ubuntu on mine, and had 2G left for my small home folder. The standard HDD is my long-term storage for data files, music, etc. As SDRAM gets more affordable over time, I can add DIMMs and bump up the size.I know this review was about SSDs strictly, so an SDRAM drive doesn't technically fit, but it would have been interesting to see a 9010 or 9010b in there for comparison. It beat the Intel SSD in almost all the tests. http://techreport.com/articles.x/16255/1">http://techreport.com/articles.x/16255/1

7Enigma - Wednesday, March 18, 2009 - link

I've been eying these guys ever since the announced their first press release. Every time I always was drawn away by the constant need for power (4h max on battery scares the bejeezus out of me if I was to be gone on vacation during a storm), high power usage at all times, and high cost of entry (after factoring in all of the ram modules).I really dislike that article as well, since I think the bottlenecks were much less apparent with such a horribly slow cpu. The majority of that review's data is extremely compressed. I mean a P4, and 1 gig of memory; are you F'ing kidding me? This article was written in Jan of this year!? Why didn't they just use my old 486DX?

tirez321 - Wednesday, March 18, 2009 - link

What would a drive zeroing tool do to write performance, like if you used acronis privacy expert to zero only the "free space" regularly? Would it help write performance due to the drive not having to erase pages before writing?tirez321 - Wednesday, March 18, 2009 - link

I can kinda see that it wouldn't now.Because there would still be states there regardless.

But if you could inform the drive that it is deleted somehow, hmm.

strikeback03 - Wednesday, March 18, 2009 - link

The subjective experiences with stuttering are more important to me than most of the test numbers. Other tests I have found of the G.Skill Titan and similar have looked pretty good, but left out mention of stuttering in use.Too bad, as the 80GB Intel is too small and the ~$300 for a 120GB is about the most I am willing to pay. Maybe sometime this year the OCZ Vertex or similar will get there.

strikeback03 - Tuesday, March 24, 2009 - link

When I wrote that, the Newegg price for the 120GB Vertex was near $400. Now they have it for $339 with a $30 MIR. Now that's progress.kamikaz1k - Wednesday, March 18, 2009 - link

the latency times are switched...incase u wanted to kno.also, first post ^^ hallo!

GourdFreeMan - Wednesday, March 18, 2009 - link

It seems rather premature to assume the ATA TRIM command will significantly improve the SSD experience on the desktop. If you were to use TRIM to rewrite a nonempty physical block, you do not avoid the 2ms erase penalty when more data is written to that block later on and instead simply add the wear of another erase cycle. TRIM, then, is only useful for performance purposes when an entire 512 KiB physical block is free.A well designed operating system would have to keep track of both the physical and logical maps of used space on an SSD, and only issue TRIM when deletion of a logical cluster coincides with the freeing of an entire physical block. Issuing TRIMs at any other time would only hurt performance. This means the OS will have significantly fewer opportunities to issue TRIMs than you assume. Moreover, after significant usage the physical blocks will become fragmented and fewer and fewer TRIMs will be able to be issued.

TRIM works great as long as you only deal with large files, or batches of small files contiguously created and deleted with significant temporal locality. It would greatly aid SSDs in the "used" state Anand artificially creates in this article, but on a real system where months of web browsing, Windows updates and software installing/uninstalling have occurred the effect would be less striking.

TRIM could be mated with periodic internal (not filesystem) defragmentation to mitigate these issues, but that would significantly reduce the lifespan of the SSD...

It seems the real solution to the SSD performance problem would be to decrease the size of the physical block... ideally to 4 KiB, as that is the most common cluster size on modern filesystems. (This assumes, of course, that the erase, read and write latencies could be scaled down linearly.)