NVIDIA GeForce GTX 295: Leading the Pack

by Derek Wilson on January 12, 2009 5:15 PM EST - Posted in GPUs

Now that we have hardware in hand and NVIDIA has formally launched the GeForce GTX 295, we are eager to put it to the test. NVIDIA's bid to reclaim the performance crown is quite an interesting one. As covered in our earlier article on the hardware, the GTX 295 is a dual-GPU card built around two chips that combine aspects of the GTX 280 and the GTX 260. We therefore expect its performance to fall between GTX 280 SLI and GTX 260 Core 216 SLI.

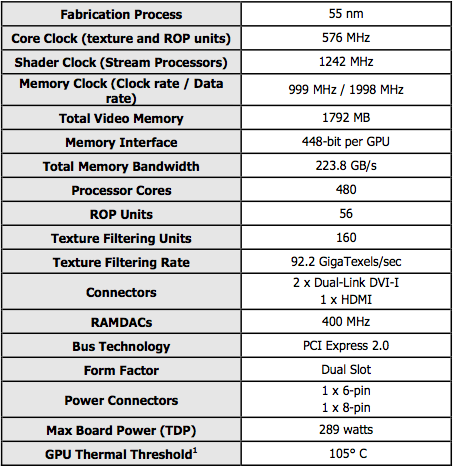

Each of the GTX 295's GPUs pairs the TPCs (shader hardware) of the GTX 280 with the memory and pixel power of the GTX 260. This hybrid design gives the card plenty of shader horsepower but less RAM and raw pixel-pushing capability than GTX 280 SLI, so it should perform better than GTX 260 SLI and worse than GTX 280 SLI. The full specifications are listed in the comparison table below.

Our card looks the same as the one in the NVIDIA-supplied images we posted in December. Notably, the GPUs are built on a 55nm process and run at GTX 260 clock speeds even though each has the shader power of a GTX 280.
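To put rough numbers on the expectation that the GTX 295 lands between GTX 260 Core 216 SLI and GTX 280 SLI, the sketch below multiplies unit counts by clock speeds taken from the spec table. This is a simplifying assumption, not a performance model: it ignores SLI scaling, memory bandwidth, and driver behavior, and it assumes 28 ROPs per GPU on the GTX 295. The `Config` type and its method names are hypothetical helpers for illustration.

```python
from typing import NamedTuple


class Config(NamedTuple):
    """Hypothetical helper: per-GPU unit counts and clocks from the spec table."""
    gpus: int
    sps_per_gpu: int   # stream processors per GPU
    shader_mhz: int
    rops_per_gpu: int  # assumption for the GTX 295: 28 per GPU
    core_mhz: int

    def shader_rate(self) -> int:
        # Stream processors x shader clock, summed over all GPUs.
        return self.gpus * self.sps_per_gpu * self.shader_mhz

    def pixel_rate(self) -> int:
        # ROPs x core clock, summed over all GPUs (Mpixels/s).
        return self.gpus * self.rops_per_gpu * self.core_mhz


CONFIGS = {
    "GTX 260 Core 216 SLI": Config(2, 216, 1242, 28, 576),
    "GTX 295": Config(2, 240, 1242, 28, 576),
    "GTX 280 SLI": Config(2, 240, 1296, 32, 602),
}

# Express each configuration relative to GTX 280 SLI.
baseline = CONFIGS["GTX 280 SLI"]
for name, cfg in CONFIGS.items():
    shader = cfg.shader_rate() / baseline.shader_rate()
    pixels = cfg.pixel_rate() / baseline.pixel_rate()
    print(f"{name:22s} shader {shader:6.1%}  pixel fill {pixels:6.1%}")
```

On these numbers the GTX 295 has about 96% of GTX 280 SLI's theoretical shader rate but the same ROP throughput as GTX 260 Core 216 SLI (roughly 84% of GTX 280 SLI), which matches the "lots of shader horsepower, less pixel-pushing capability" description.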

We've also got another part coming down the pipe from NVIDIA: the GeForce GTX 285, a 55nm part that amounts to an overclocked GTX 280. Although we don't have one in house yet, the card was announced on January 8th and will be available for purchase on January 15th, 2009.

There isn't much more to say about the GTX 285 beyond its clocks, which compare to the GTX 280's as follows:

| | Core Clock Speed (MHz) | Shader Clock Speed (MHz) | Memory Data Rate (MHz) |
|---|---|---|---|
| GTX 280 | 602 | 1296 | 2214 |
| GTX 285 | 648 | 1476 | 2484 |
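The "overclocked GTX 280" description can be quantified with a quick calculation over the clock table. This sketch compares clock speeds only; real-world gains will depend on where a given game is bottlenecked, and the dictionary and function names are illustrative.

```python
# Clock figures for the GTX 280 and GTX 285, in MHz, from the table above.
GTX_280 = {"core": 602, "shader": 1296, "memory_data_rate": 2214}
GTX_285 = {"core": 648, "shader": 1476, "memory_data_rate": 2484}


def percent_increase(old: float, new: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


# Compute the gain for each clock domain.
gains = {domain: percent_increase(GTX_280[domain], GTX_285[domain]) for domain in GTX_280}
for domain, gain in gains.items():
    print(f"{domain:>16}: +{gain:.1f}%")
```

This works out to roughly +7.6% on the core, +13.9% on the shaders, and +12.2% on memory.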

We don't have performance data for the GTX 285 yet, but expect it (like the GTX 280 and GTX 295) to be necessary only with very large displays.

| | GTX 295 | GTX 285 | GTX 280 | GTX 260 Core 216 | GTX 260 | 9800 GTX+ |
|---|---|---|---|---|---|---|
| Stream Processors | 2 x 240 | 240 | 240 | 216 | 192 | 128 |
| Texture Address / Filtering | 2 x 80 / 80 | 80 / 80 | 80 / 80 | 72 / 72 | 64 / 64 | 64 / 64 |
| ROPs | 2 x 28 | 32 | 32 | 28 | 28 | 16 |
| Core Clock | 576MHz | 648MHz | 602MHz | 576MHz | 576MHz | 738MHz |
| Shader Clock | 1242MHz | 1476MHz | 1296MHz | 1242MHz | 1242MHz | 1836MHz |
| Memory Clock | 999MHz | 1242MHz | 1107MHz | 999MHz | 999MHz | 1100MHz |
| Memory Bus Width | 2 x 448-bit | 512-bit | 512-bit | 448-bit | 448-bit | 256-bit |
| Frame Buffer | 2 x 896MB | 1GB | 1GB | 896MB | 896MB | 512MB |
| Transistor Count | 2 x 1.4B | 1.4B | 1.4B | 1.4B | 1.4B | 754M |
| Manufacturing Process | TSMC 55nm | TSMC 55nm | TSMC 65nm | TSMC 65nm | TSMC 65nm | TSMC 55nm |
| Price Point | $500 | $??? | $350 - $400 | $250 - $300 | $250 - $300 | $150 - $200 |
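The memory clocks and bus widths in the table translate into theoretical peak bandwidth. The sketch below assumes two transfers per memory clock (GDDR3 double data rate), which is consistent with the GTX 280's 1107MHz memory clock versus the 2214MHz data rate quoted earlier; the `CARDS` mapping and the helper name are hypothetical. Figures for the GTX 295 are per GPU, since each GPU addresses only its own 896MB.

```python
# name: (memory_clock_mhz, bus_width_bits, gpus), from the spec table.
CARDS = {
    "GTX 295": (999, 448, 2),
    "GTX 285": (1242, 512, 1),
    "GTX 280": (1107, 512, 1),
    "GTX 260 Core 216": (999, 448, 1),
    "GTX 260": (999, 448, 1),
    "9800 GTX+": (1100, 256, 1),
}


def peak_bandwidth_gbs(mem_clock_mhz: int, bus_width_bits: int) -> float:
    """Peak bandwidth of one GPU's memory interface in GB/s (10^9 bytes/s).

    Assumes double data rate: two transfers per memory clock.
    """
    mega_transfers_per_sec = mem_clock_mhz * 2
    bytes_per_transfer = bus_width_bits / 8
    return mega_transfers_per_sec * bytes_per_transfer / 1000


for name, (clock, width, gpus) in CARDS.items():
    per_gpu = peak_bandwidth_gbs(clock, width)
    note = f" per GPU ({gpus} GPUs)" if gpus > 1 else ""
    print(f"{name:18s} {per_gpu:6.1f} GB/s{note}")
```

By this measure each GTX 295 GPU has about 111.9 GB/s to work with, versus about 141.7 GB/s for a GTX 280 and 159.0 GB/s for a GTX 285.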

For this article, we will focus heavily on the performance of the GeForce GTX 295, as we've already covered its basic architecture and specifications. We will recap them and cover the card itself on the next page, but for more detail see our initial article on the subject.

The Test

| Test Setup | |
|---|---|
| CPU | Intel Core i7-965 3.2GHz |
| Motherboard | ASUS Rampage II Extreme X58 |
| Video Cards | ATI Radeon HD 4870 X2, ATI Radeon HD 4870 1GB, NVIDIA GeForce GTX 295, NVIDIA GeForce GTX 280 SLI, NVIDIA GeForce GTX 260 SLI, NVIDIA GeForce GTX 280, NVIDIA GeForce GTX 260 |
| Video Drivers | Catalyst 8.12 hotfix, ForceWare 181.20 |
| Hard Drive | Intel X25-M 80GB SSD |
| RAM | 6 x 1GB DDR3-1066 7-7-7-20 |
| Operating System | Windows Vista Ultimate 64-bit SP1 |
| PSU | PC Power & Cooling Turbo Cool 1200W |

100 Comments


sam187 - Tuesday, January 13, 2009

Does anyone know if the GTX295/285 support Hybrid Power? It doesn't seem so (NVIDIA homepage, product descriptions from shops, ...). To me it looks like NVIDIA is dropping the technology (remember the GeForce 9300/9400 chipset?) :-(

Stonedofmoo - Tuesday, January 13, 2009

Hey, what's happened with this review? AnandTech is my review site of choice because your reviews are usually in-depth and informative. There is more to choosing a graphics card now than frame rates.

I for one would very much like to have seen some information on the power consumption of this card.

Let's hope that your GTX 285 review is better and has more information about the 55nm transition and how it affects power consumption, because for some of us this is becoming a big issue.

I just sent back a GTX 280 because the power consumption was ridiculous: at idle it uses 35-40W more power with 2 monitors connected than with one.

I do agree, though, that it's the midrange parts I'd far rather see using the new G200 process. The 9xxx series is old hat and should be replaced.

danchen - Tuesday, January 13, 2009

How about multiple monitor setups? If I'm planning to power 3 x 24" LCD monitors (1920x1200), which card would be best? (I'm only expecting to use "high"/"very high" settings.)

This card only has 2 DVI outputs, right?

Should I skip this and just get 2 x GTX 285 in SLI (for the ports)?

Which brand performs best for a multi-screen setup?

nubie - Tuesday, January 13, 2009

To game on? As far as I know, NVIDIA is not opening up more than one "screen" for SLI (you can span multiple monitors on a single video card, though). I don't think they can support 3 active DirectX monitors on the GTX295 (or can they?). If they do, then it would be the one to get.

I crammed 3 PCI-e cards into the extra PCI-e x1 slots on my old motherboard a couple of years back, and was a bit disappointed by the state of multi-monitor gaming: http://picasaweb.google.com/nubie07/PCIeX102#51748...

Best to buy a TripleHead2Go (or a DualHead2Go) http://www.matrox.com/graphics/en/products/gxm/th2... , or use SoftTH http://www.kegetys.net/SoftTH/

Sadly, multi-display support (and the VR/3D setups that depend on multiple displays/outputs) is a feature sorely lacking from DirectX and most game engines (the only exception that comes to mind is MS Flight Simulator).

yacoub - Monday, January 12, 2009

Prices seem to be creeping back up again, and that's not good (for consumers, especially in this economy). If the GTX 285 can't MSRP around $349 for 1GB models, we're in trouble. And it needs to see a sub-$300 price point before most gamers will give a crap, even though it appears to be the single-GPU card to get.

nubie - Tuesday, January 13, 2009

It is on a 55nm process, so it can get a price cut. They just need to move out the rest of the 65nm parts first. I wish they had some decent mid-range parts with new tech (like you-know-who), instead of peddling the "GTX-100" series, AKA G92, as the mid-range.

Not that it isn't great tech, but at least the other team is actually trying.

SiliconDoc - Thursday, January 15, 2009

What you really should have said is: it's too bad ATI, with its flagship core, can only match the years-old tech of the 9800 GT, 9800 GTX, or GTX+ unless it uses GDDR5 memory, as it does on the 4870. So the REAL TRUTH IS - all this "new tech" from ATI, the "other one that is trying" according to you, amounts to (to be overly fair to YOU) GDDR5 memory...

If we just go by the GPU core tech - like I said, ATI's latest flagship core, used in the 4850 and 4870 - the former gets knocked around by VERY MUCH OLDER NVIDIA "tech".

Like 2 or 3 years older ....

I guess the whole ding dang "new tech" whine is another twisted, repeatable, babbling fool's errand promoted by the red market managers, and spewed about by the non-thinking sheeple with red wool issues.

If NOT - please, pray tell... do correct me...

Unfortunately, that correction won't be forthcoming...

I saw the 4850 just the other day compared in benchmark to a 9600GSO and it was "very close"...

The question is: how good is that core, really? How much "new tech" is there? It certainly appears it's all core clock speed and GDDR5... if not, why does the 4850 fall below (or barely above) the 9800 GTX/GTX+ series all the time?

Is "new technology" really what you want in the range you claimed you wanted it ? LOL

If NVIDIA puts out new technology in that segment, do you expect several-years-old ATI cores to match or beat it? I bet you don't.

Mithan - Monday, January 12, 2009

Don't worry about it, those prices will come down or be held in check.

Hxx - Monday, January 12, 2009

I wish you guys had discussed power consumption, heat, and noise. Other than that, I enjoyed reading it. As for the card, it's slightly faster than a 4870 X2 in the majority of the games, but that's about it. Nothing innovative, just another sandwich card designed by NVIDIA with a poorly designed cooler that lets hot air into the case... how disappointing.

SiliconDoc - Monday, January 12, 2009

The GTX295 is LOWER in power consumption AND in noise. That's why it wasn't included - you know who really likes a certain team...