Intel's Larrabee Architecture Disclosure: A Calculated First Move

by Anand Lal Shimpi & Derek Wilson on August 4, 2008 12:00 AM EST. Posted in GPUs

Thread and Data Management: It's Time to Blow Your Mind

With both the recent NVIDIA and AMD graphics hardware launches, we spent quite a bit of time talking about thread management. Larrabee is designed to be more of a collection of general purpose scalar and vector processing units, so vertex, primitive and pixel data (along with associated shader programs) are software managed. Although we have discussed what a context is for AMD and NVIDIA graphics hardware, a true context is going to be a different thing altogether on Larrabee.

We do have to make a point of saying before proceeding that NVIDIA and AMD are under no obligation to actually tell us how their architecture is physically implemented. It is entirely possible that many of the attributes we ascribe to the hardware are not actually attributes of the hardware but simply reflections of how hardware resources are used. Recent discussions with both companies about certain realities of their hardware revealed that their belief is that if the system behaves like a specific physical implementation, then it is effectively the same as that physical implementation.

Of course, we disagree. And it is possible that some of this has more similarity with NVIDIA and AMD than they are letting on. But we'll go on what we've got for now, and assume that what Intel is doing is as divergent as it sounds.

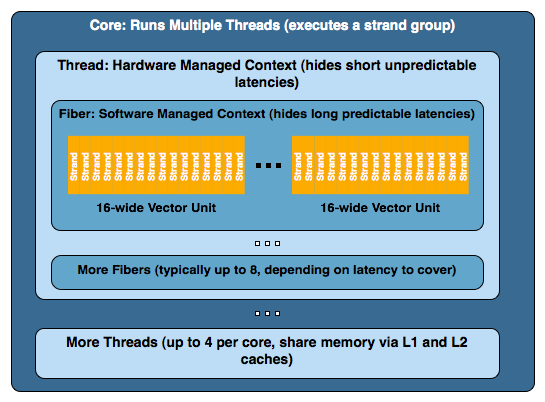

Each Larrabee core on a chip (of which it seems likely there will be some multiple of 8 in the final product) can maintain 4 simultaneous software threads (4 contexts are kept active at a time). This gives software running directly on the hardware the appearance of 4 separate processors, even though all four threads are sharing a single core's resources. It is very likely that the major purpose of this is to hide some of the long latency we hit when going to memory for texture data and the like.
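To get a feel for why four contexts per core would help hide memory latency, here is a toy model in Python. It is only a sketch under loud assumptions: the 10 cycles of work between memory requests and the 30 cycle memory latency are made-up numbers, the core issues one instruction per cycle, and the pick-the-first-ready-thread policy is ours, not anything Intel has disclosed.

```python
def core_utilization(num_threads, compute_cycles=10, memory_latency=30,
                     total_cycles=4000):
    """Fraction of cycles in which a single in-order core issues work.

    Hypothetical model: every thread computes for `compute_cycles`,
    then waits `memory_latency` cycles on a memory request.
    """
    ready_at = [0] * num_threads                 # cycle at which each thread can run again
    work_left = [compute_cycles] * num_threads   # cycles until the next memory request
    busy = 0
    for cycle in range(total_cycles):
        # Issue from the first thread that is not waiting on memory.
        for t in range(num_threads):
            if ready_at[t] <= cycle:
                busy += 1
                work_left[t] -= 1
                if work_left[t] == 0:
                    # Thread stalls on memory; another thread can take over.
                    ready_at[t] = cycle + 1 + memory_latency
                    work_left[t] = compute_cycles
                break
    return busy / total_cycles


if __name__ == "__main__":
    for n in (1, 2, 4):
        print(n, "thread(s):", core_utilization(n))
```

With these assumed numbers, a single thread leaves the core idle most of the time, while four threads are enough to cover the stall completely. Real texture latencies and instruction mixes will look very different; the point is only the shape of the trade-off.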

Now, for the purpose of graphics rendering using Intel's software rendering library or as it emulates DirectX and OpenGL, a thread is set up to manage the resources for a larger group of instructions and data that Intel calls a "fiber". Normally a thread will manage 8 fibers at a time. The hardware thread maintains a context in software for each fiber. The fiber's job is to manage the execution of data parallel kernels on multiple groups of 16 "strands" (because the vector processor is 16-wide). A strand is what we have traditionally called a thread on other graphics hardware. The problem here is that Intel hardware is actually executing threads in a way that emulates hardware features of other architectures.
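The thread, fiber and strand nesting described above can be sketched as a small data structure. This is our own illustration in Python, not Intel's software: the class names, the `build_thread` helper and the default of 2 strand groups per fiber are all hypothetical. Only the 8 fibers per thread and the 16-wide strand groups come from the description.

```python
from dataclasses import dataclass, field
from typing import List

VECTOR_WIDTH = 16  # strands per group, matching the 16-wide vector unit


@dataclass
class StrandGroup:
    """16 strands that execute together, one per vector lane."""
    strand_ids: List[int]


@dataclass
class Fiber:
    """Software-managed context that runs kernels over strand groups."""
    groups: List[StrandGroup] = field(default_factory=list)


@dataclass
class HardwareThread:
    """One of the 4 hardware contexts on a core; manages several fibers."""
    fibers: List[Fiber] = field(default_factory=list)


def build_thread(fibers_per_thread=8, groups_per_fiber=2):
    """Build one thread's worth of fibers (groups_per_fiber is an assumption)."""
    thread = HardwareThread()
    next_id = 0
    for _ in range(fibers_per_thread):
        fiber = Fiber()
        for _ in range(groups_per_fiber):
            ids = list(range(next_id, next_id + VECTOR_WIDTH))
            fiber.groups.append(StrandGroup(ids))
            next_id += VECTOR_WIDTH
        thread.fibers.append(fiber)
    return thread


if __name__ == "__main__":
    t = build_thread()
    strands = sum(len(g.strand_ids) for f in t.fibers for g in f.groups)
    print(len(t.fibers), "fibers,", strands, "strands")
```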

To put it together a little better, imagine one of Intel's threads as one of NVIDIA's TPCs, a fiber as an SM, and a strand as a thread. Okay, so it isn't that simple (simple?). But it is a sort of rough way of looking at it and a quick way of understanding why the naming is different here.

Let's take a deeper look at what goes on. With 4 threads per core (with at least 8 and hopefully something more like 32 cores), 8 fibers per thread, and some multiple of 16 strands per fiber, we could end up with a huge number of strands being managed simultaneously. And these are just the active, running threads. Since Larrabee will be a CPU in the true sense of the term, we can have as many threads as necessary live and waiting for a time slice. In the context of a normal CPU, this would be managed by the operating system, but as Larrabee will see the light of day as a graphics card, the driver will probably be managing the timesharing that an OS would normally perform.
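To put rough numbers on "huge", the multiplication can be written out in a few lines of Python. The core counts are the 8 and 32 mentioned above plus 16 as a midpoint, and `groups_per_fiber` (how many groups of 16 strands a fiber holds) is our own assumed parameter, since the exact multiple has not been stated.

```python
THREADS_PER_CORE = 4
FIBERS_PER_THREAD = 8
STRANDS_PER_GROUP = 16  # one strand per lane of the 16-wide vector unit


def strands_in_flight(cores, groups_per_fiber=1):
    """Strands under management across the chip (groups_per_fiber is assumed)."""
    return (cores * THREADS_PER_CORE * FIBERS_PER_THREAD
            * groups_per_fiber * STRANDS_PER_GROUP)


if __name__ == "__main__":
    for cores in (8, 16, 32):
        print(cores, "cores:", strands_in_flight(cores), "strands")
```

Even at the minimum of one strand group per fiber, an 8 core part would be juggling 4,096 strands and a 32 core part 16,384. Each extra group of 16 per fiber scales that linearly.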

Running ridiculous numbers of threads per core at a time might kill performance, but unlike on current GPUs, resource availability doesn't limit the creation of threads. Six of one, half a dozen of the other? Maybe, and maybe not. Having active threads with data available to context switch to is key to hiding latency in NVIDIA and AMD hardware. If enough threads cannot be actively maintained, stalls happen and kill performance. Similar issues will impact Intel, but keeping dual-issue in-order hardware busy with multiple threads might be more easily managed if it can fall back on traditional CPU thread management paradigms to handle an abundance of threads, each running software that in turn manages data parallel kernels.

101 Comments


phaxmohdem - Monday, August 4, 2008 - link

Can your mom play Crysis? *burn*

JonnyDough - Monday, August 4, 2008 - link

I suppose she could but I don't think she would want to. Why do you care anyway? Have some sort of weird fetish with moms playing video games or are you just looking for another woman to relate to?

Ooooh, burn!

Griswold - Monday, August 4, 2008 - link

He is looking for the one playing his mom, I think.

bigboxes - Monday, August 4, 2008 - link

Yup. He worded it incorrectly. It should have read, "but can it play your mom?" :p

Tilmitt - Monday, August 4, 2008 - link

I'm really disappointed that Intel isn't building a regular GPU. I doubt that bolting a load of unoptimised x86 cores together is going to be able to perform anywhere near as well as a GPU built from the ground up to accelerate graphics, given equal die sizes.

JKflipflop98 - Monday, August 4, 2008 - link

WTF? Did you read the article?

Zoomer - Sunday, August 10, 2008 - link

He had a point. More programmable == more transistors. Can't escape from that fact.

Given equal number of transistors, running the same program, a more programmable solution will always be crushed by fixed function processors.

JonnyDough - Monday, August 4, 2008 - link

I was wondering that too. This is obviously a push towards a smaller Centrino type package. Imagine a powerful CPU that can push graphics too. At some point this will save a lot of battery juice in a notebook computer, along with space. It may not be able to play games, but I'm pretty sure it will make for some great basic laptops someday that can run video. Not all college kids and overseas marines want to play video games. Some just want to watch clips of their family back home.

rudolphna - Monday, August 4, 2008 - link

as interesting and cool as this sounds, this is even more bad news for AMD, who was finally making up for lost ground. granted, its still probably 2 years away, and hopefully AMD will be back to its old self (Athlon64 era) They are finally getting products that can actually compete. Another challenger, especially from its biggest rival-Intel- cannot be good for them.

bigboxes - Monday, August 4, 2008 - link

What are you talking about? It's been nothing but good news for AMD lately. Sure, let Intel sink a lot of $$ into graphics. Sounds like a win for AMD (in a roundabout way). It's like AMD investing into a graphics maker (ATI) instead of concentrating on what makes them great. Most of the Intel supporters were all over AMD for making that decision. Turn this around and watch Intel invest heavily into graphics and it's a grand slam. I guess it's all about perspective. :)