The Radeon HD 4850 & 4870: AMD Wins at $199 and $299

by Anand Lal Shimpi & Derek Wilson on June 25, 2008 12:00 AM EST- Posted in

- GPUs

A Quick Primer on ILP

NVIDIA throws ILP (instruction level parallelism) out the window while AMD tackles it head on.

ILP is parallelism that can be extracted from a single instruction stream. For instance, if i have a lot of math that isn't dependent on previous instructions, it is perfectly reasonable to execute all this math in parallel.

For this example on my imaginary architecture, instruction format is:

LineNumber INSTRUCTION dest-reg, source-reg-1, source-reg-2

This is compiled code for adding 8 numbers together. (i.e. A = B + C + D + E + F + G + H + I;)

1 ADD r2,r0,r1

2 ADD r5,r3,r4

3 ADD r8,r6,r7

4 ADD r11,r9,r10

5 ADD r12,r2,r5

6 ADD r13,r8,r11

7 ADD r14,r12,r13

8 [some totally independent instruction]

...

Lines 1,2,3 and 4 could all be executed in parallel if hardware is available to handle it. Line 5 must wait for lines 1 and 2, line 6 must wait for lines 3 and 4, and line 7 can't execute until all other computation is finished. Line 8 can execute at any point hardware is available.

For the above example, in two wide hardware we can get optimal throughput (and we ignore or assume full speed handling of read-after-write hazards, but that's a whole other issue). If we are looking at AMD's 5 wide hardware, we can't achieve optimal throughput unless the following code offers much more opportunity to extract ILP. Here's why:

From the above block, we can immediately execute 5 operations at once: lines 1,2,3,4 and 8. Next, we can only execute two operations together: lines 5 and 6 (three execution units go unused). Finally, we must execute instruction 7 all by itself leaving 4 execution units unused.

The limitations of extracting ILP are on the program itself (the mix of independent and dependent instructions), the hardware resources (how much can you do at once from the same instruction stream), the compiler (how well does the compiler organize basic blocks into something the hardware can best extract ILP from) and the scheduler (the hardware that takes independent instructions and schedules them to run simultaneously).

Extracting ILP is one of the most heavily researched areas of computing and was the primary focuses of CPU design until the advent of multicore hardware. But it is still an incredibly tough problem to solve and the benefits vary based on the program being executed.

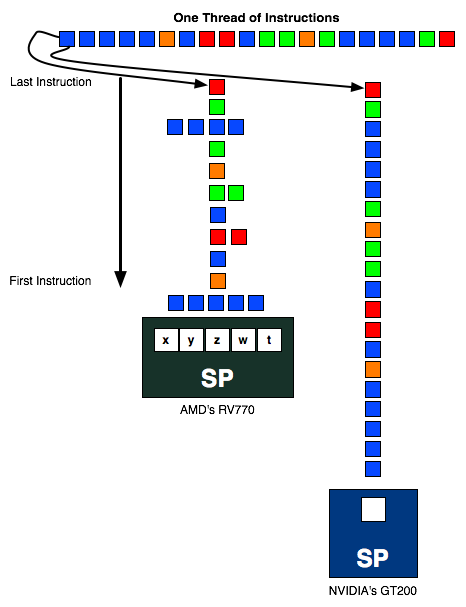

The instruction stream above is sent to an AMD and NVIDIA SP. In the best case scenario, the instruction stream going into AMD's SP should be 1/5th the length of the one going into NVIDIA's SP (as in, AMD should be executing 5 ops per SP vs. 1 per SP for NVIDIA) but as you can see in this exampe, the instruction stream is around half the height of the one in the NVIDIA column. The more ILP AMD can extract from the instruction stream, the better its hardware will do.

AMD's RV770 (And R6xx based hardware) needs to schedule 5 operations per thread every every clock to get the most out of their hardware. This certainly requires a bit of fancy compiler work and internal hardware scheduling, which NVIDIA doesn't need to bother with. We'll explain why in a second.

Instruction Issue Limitations and ILP vs TLP Extraction

Since a great deal of graphics code manipulates vectors like vertex positions (x,y,c,w) or colors (r,g,b,a), lots of things happen in parallel anyway. This is a fine and logical aspect of graphics to exploit, but when it comes down to it the point of extracting parallelism is simply to maximize utilization of hardware (after all, everything in a scene needs to be rendered before it can be drawn) and hide latency. Of course, building a GPU is not all about extracting parallelism, as AMD and NVIDIA both need to worry about things like performance per square millimeter, performance per watt, and suitability to the code that will be running on it.

NVIDIA relies entirely on TLP (thread level parallelism) while AMD exploits both TLP and ILP. Extracting TLP is much much easier than ILP, as the only time you need to worry about any inter-thread conflicts is when sharing data (which happens much less frequently than does dependent code within a single thread). In a graphics architecture, with the necessity of running millions of threads per frame, there are plenty of threads with which to fill the execution units of the hardware, and thus exploiting TLP to fill the width of the hardware is all NVIDIA needs to do to get good utilization.

There are ways in which AMD's architecture offers benefits though. Because AMD doesn't have to context switch wavefronts every chance it gets and is able to extract ILP, it can be less sensitive to the number of active threads running than NVIDIA hardware (however both do require a very large number of threads to be active to hide latency). For NVIDIA we know that to properly hide latency, we must issue 6 warps per SM on G80 (we are not sure of the number for GT200 right now), which would result in a requirement for over 3k threads to be running at a time in order to keep things busy. We don't have similar details from AMD, but if shader programs are sufficiently long and don't stall, AMD can serially execute code from a single program (which NVIDIA cannot do without reducing its throughput by its instruction latency). While AMD hardware can certainly handle a huge number of threads in flight at one time and having multiple threads running will help hide latency, the flexibility to do more efficient work on serial code could be an advantage in some situations.

ILP is completely ignored in NVIDIA's architecture, because only one operation per thread is performed at a time: there is no way to exploit ILP on a scalar single-issue (per context) architecture. Since all operations need to be completed anyway, using TLP to hide instruction and memory latency and to fill available execution units is a much less cumbersome way to go. We are all but guaranteed massive amounts of TLP when executing graphics code (there can be many thousand vertecies and millions of pixels to process per frame, and with many frames per second, that's a ton of threads available for execution). This makes the lack of attention to serial execution and ILP with a stark focus on TLP not a crazy idea, but definitely divergent.

Just from the angle of extracting parallelism, we see NVIDIA's architecture as the more elegant solution. How can we say that? The ratio of realizable to peak theoretical performance. Sure, Radeon HD 4870 has 1.2 TFLOPS of compute potential (800 execution units * 2 flops/unit (for a multiply-add) * 750MHz), but in the vast majority of cases we'll look at, NVIDIA's GeForce GTX 280 with 933.12 GFLOPS ((240 SPs * 2 flops/unit (for multiply-add) + 60 SFUs * 4 flops/unit (when doing 4 scalar muls paired with MADs run on SPs)) * 1296MHz) is the top performer.

But that doesn't mean NVIDIA's architecture is necessarily "better" than AMD's architecture. There are a lot of factors that go into making something better, not the least of which is real world performance and value. But before we get to that, there is another important point to consider. Efficiency.

215 Comments

View All Comments

0g1 - Wednesday, June 25, 2008 - link

In the article it says the GT200 doesn't need to do ILP. It only has 10 threads. Each of those threads needs ILP for each of the SP's. The problem with AMD's approach is each SP has 5 units and is aimed directly at processing x,y,z,w matrix style operations. Doing purely scalar operations on AMD's SP's would be only using 1 out of the 5 units. So, if you want to get the most out of AMD's shaders, you should be doing vector calculations.DerekWilson - Wednesday, June 25, 2008 - link

The GT200 doesn't worry with ILP at all.a single thread doesn't run width wise across all execution units. instead different threads execute the exact same single scalar op on their own unique bit of data (there is only one program counter per SM for a context). this is all TLP (thread level parallelism) and not ILP.

AMD's compiler can pack multiple scalar ops into a 5-wide VLIW operation.

on purely scalar code with many independent ops in a long program, AMD can fill all their units and get close to peak performance. explicit vector instructions are not necessary.

gigahertz20 - Wednesday, June 25, 2008 - link

http://www.hardwarecanucks.com/forum/hardware-canu...">http://www.hardwarecanucks.com/forum/ha...870-512m...The site above mounted an after market cooler on it and got awesome results. Either the Thermalright HR-03 GT is just that great of a GPU cooler, or the standard heatsink/fan on the 4870 is just that horrible. Going from 82C to 43C at load and 55C to 33C at idle, just from an after market cooler is crazy! I was hoping to see some overclocking scores after they mounted the Thermalright on it, but nope :(

Matt Campbell - Wednesday, June 25, 2008 - link

The HR-03GT really is that great. Check it out: http://www.anandtech.com/casecoolingpsus/showdoc.a...">http://www.anandtech.com/casecoolingpsus/showdoc.a...Our 8800GT went from 81 deg. C to 38 deg. C at load, 52 to 32 at idle. That's also with the quietest fan on the market at low speed. And FWIW, I played through all of The Witcher (about 60 hours) with the 8800GT passively cooled in a case with only 1 120mm fan.

-Matt

Clauzii - Wednesday, June 25, 2008 - link

I see no fan on that thing??! PASSIVE?? :O ??jeffreybt2 - Wednesday, June 25, 2008 - link

"Please note that this is with a single Zalman 92MM fan operating at 1600RPM along with Arctic Cooling MX-2 applied to the base."magnusr - Wednesday, June 25, 2008 - link

Does the audio part of the card support PAP? If not all blu-ray audio will be downsampled to 16/48...NullSubroutine - Wednesday, June 25, 2008 - link

I would just like to point out that the 4870 falls behind the 3870 X2 in Oblivion while in every other game it runs circles around it. To me it appears to be a driver problem with Oblivion rather than an indication of the hardware not doing well there. Unless of course the answer lies in the ring bus of the R680?orionmgomg - Wednesday, June 25, 2008 - link

I would love to see more benchmarks with the CPU OCed to at least 4.0All the CPUs you use can hit it NP.

Also, what about at least 2 GTX 280 Cards and their numbers. Noticed that you did have them in SLI cause the power comsumption comparisons had them, but you held back the performance numbers...

Lets see the top 4 cards from ATI and Nvidia compete in dule GPU (no punt intended)on an X48 with DDR3 1600 and a FSB of 400x10!

That would be really nice for the people hoe have performance systems, but may still be rocking out a pair of EVGA 8800Ultras, cause their waiting for real numbers and performance to come out - and their still paying off theye systems lol... :]

Ilmarin - Wednesday, June 25, 2008 - link

You're probably aware of these already, but I'll mention them just in case:* Page 10 (AA comparison) is malformed with no images

* Page 21 (Power, Heat and Noise) is missing the Heat and Noise stuff.

Heat is a big issue with these 4800 cards and their reference coolers, so it would be good to see it covered in detail. My 7800 GTX used to artifact and cause crashes when it hit 79 degrees, before I replaced it with an aftermarket cooler. Apparently the 4870 hits well over 90 degrees at load, and the 4850 isn't much better. Decent aftermarket coolers (HR-03 GT, DuOrb) aren't cheap... and if that's what it takes to prevent heat problems on these cards, some people might consider buying a slower card (like a 9800 GTX+) just because it has better cooling.

Anand, you guys should do a meltdown test... pit the 9800 GTX+ against the 4850, and the 4870 against the GTX 260, all with reference coolers, in a standard air-cooled system at a typical ambient temp. Forget timedemos/benchmarks... play an intensive game like Crysis for an hour or two, and see if you encounter glitches and crashes. If the 4800 cards can somehow remain artifact/crash free at those high temps, then I'd more seriously consider buying one. Heat damage over time may also be a concern, but is hard to test for.

Sure, DAAMIT's partners will eventually put non-reference coolers on some cards, but history tells us that the majority of the market in the first few months will be stock-cooled cards, so this has got be of concern to consumers... especially early adopters.