GeForce 9800 GTX and 3-way SLI: May the nForce Be With You

by Derek Wilson on April 1, 2008 9:00 AM EST

Posted in: GPUs

Scaling and Performance with 3-way SLI

As we’ve explained, we ran into a great number of issues testing 3-way SLI and Quad SLI on our 790i board. We couldn’t even get 8800 Ultra 3-way SLI to work, as it draws so much power in addition to being finicky in the first place. We were, however, able to run some numbers on Crysis and Oblivion.

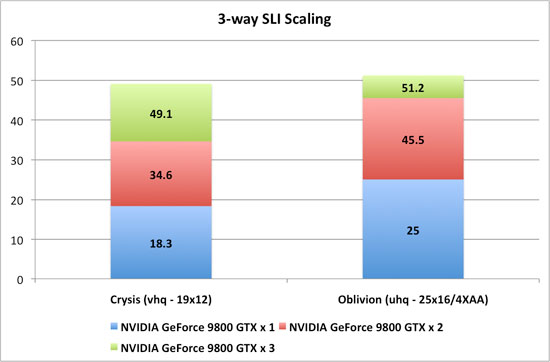

Here is a look at performance scaling in both games; we’ll look at comparative performance further below.

These numbers were run on the 790i system, and we absolutely did leave VSYNC on its default setting. Performance differences between one, two, and three 9800 GTX cards were more compressed when we forced VSYNC off in the control panel.

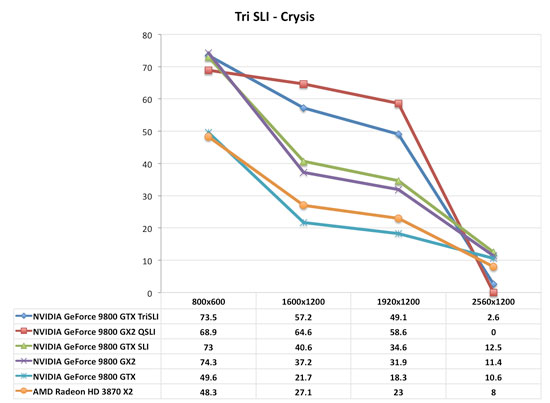

Here is how Crysis stacks up in a direct comparison with the major competition (except for the 8800 Ultra configuration, which we could not run).

9800 GTX 3-way is absolutely playable at 1920x1200 in Crysis with Very High settings. Quad SLI clearly leads the way here, but at $300 less, 3-way 9800 GTX is not a bad deal if what you want to do is play Crysis at 1920x1200.

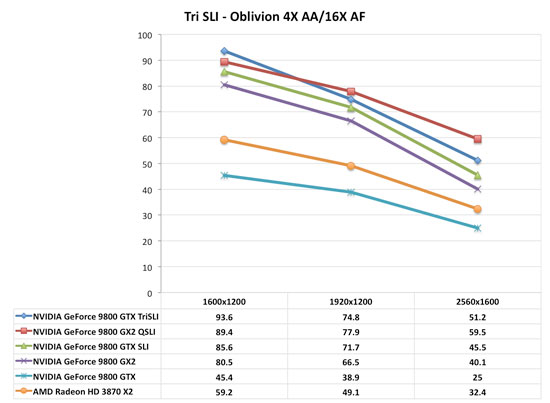

The delta between Tri and Quad is lower here. In both cases, two 9800 GTX cards outperform a single 9800 GX2. While the 9800 GX2 can be plugged into any system, NVIDIA still wants to sell 790i platforms. If you have or want an NVIDIA-based platform, you get higher performance for the exact same price by going with two 9800 GTX cards over a single 9800 GX2.

With the hassle of huge power supplies, cooling, and so on associated with Tri and Quad SLI, our money for maintaining value with a high-end solution would have to go to the 9800 GTX SLI setup. 3-way seems to have some problems at the moment as well, as we ran into one large issue in one of the two games we tested: Oblivion has some graphical issues, which we documented in a video on YouTube.

49 Comments


nubie - Tuesday, April 1, 2008

It is all well and good to bash nVidia for lack of SLi support on other systems, but why can't the AMD cards run on an nVidia motherboard? Kill 2 birds with one stone there, lose your FB-Dimms and test on a single platform.

Apples to apples, you can't say nVidia is at fault when the blame isn't entirely theirs (besides, isn't the capability to run Crossfire and SLi on one system a little out of the needs of most users?).

Is it that AMD allows crossfire on all Except nVidia motherboards? (Do VIA, or SiS make a multi-PCIe board?) If so, then we are talking Crossfire availability on Intel and AMD chipsets, and not nvidia, whereas nVidia allows only their own. That sounds like 30/70% blame AMD vs nVidia.

PeteRoy - Tuesday, April 1, 2008

It is too hard to understand these graphs, use the ones you had in the past, I can't understand how to compare the different systems in these graphs.

Use the graphs from the past with the best on top and the worst on bottom.

araczynski - Tuesday, April 1, 2008

i'm using a 7900gtx right now, and the 9800gtx isn't impressing me enough to warrant $300, i might just pick up an 8800gts512 in a month when they're all well below $200. overclocking one would be more than "close enough" for me.

araczynski - Tuesday, April 1, 2008

... in any case, i'd much prefer to see benchmarks comparing a broader range of cards than seeing this sli/tri/quad crap. your articles are assuming that everyone upgrades their cards everytime nvidia/ati shit something out on a monthly basis.

Denithor - Tuesday, April 1, 2008

...because they set out to accurately compare nVidia's latest high end card to other high end options available.

I'm sure in a few days there will be a followup article showing a broader spectrum of cards at more usable resolutions so we (the common masses) can see whether or not this $300 card really brings any benefit with its high price tag.

Ndel - Tuesday, April 1, 2008

your benchmarks dont even make any sense =/

why use a system 99.9 percent of the people dont have.

this is not even relative to what other enthusiasts currently have, how are we suppose to believe these benchmarks at all.

grain of salt...

SpaceRanger - Tuesday, April 1, 2008

I believe he used the fastest processor out there to eliminate IT as the bottleneck for the benchmark.

Rocket321 - Tuesday, April 1, 2008

Derek -

I enjoyed this article for a few reasons that made it different.

First, the honesty and discussion of problems experienced. This helps to convey the many issues still experienced with multi GPU solutions.

Second the youtube video. This is a neat use of available technology. Could this be useful in other ways? Maybe in the next low/mid GPU roundup it could be used to show a short clip of each card playing a game at the same point.

This could visually show where one card gets choppy and a better card doesn't.

Finally - Using a poll in the forums - really great idea to do this for relevant info and then add to an article.

Thanks for the good article!

chizow - Tuesday, April 1, 2008

Thanks for making AT reviews worth reading again Derek. You addressed many of the problems I've had with the ho-hum reviews of late, like emphasizing major problems encountered during testing and dropping some incredibly insightful discoveries backed by convincing evidence (Vsync issue). Breakthroughs such as this are part of what make PC hardware fun and exciting.

A few things you touched on but didn't really clarify were performance on Skulltrail vs. NV chipsets and memory bandwidth/amount on the 9800 vs. Ultra. I'd like to see a comparison of Skulltrail vs. 780/790i and then just future disclaimers like (Skulltrail is ~20% slower than the fastest NV solutions).

With the 9800 vs Ultra I'm a bit disappointed you didn't really dig into overclocking at all or further investigate how much some of the issues you talked about impacted or benefitted performance, like memory bandwidth. I think it's safe to say the 9800GTX as a refined G92 8800GTS has significant overclocking headroom while the Ultra does not (it's basically an overclocked GTX). It would have been nice to see how much memory overclocks would've benefitted overall performance alone, then max overclocks on both the core/shader and memory.

But again, great review, I'll be reading over it again to pick up on some of the finer details.

lopri - Tuesday, April 1, 2008

How many revisions has the 790i been through already? Major ones at that. Usually minor revisions are like A0->A1->A2, I thought. As a matter of fact I don't even remember if there was any nVidia chip that is 'C' revision, except maybe MCP55 (570 SLI).