SMB NAS Roundup

by Ross Whitehead, Jason Clark, Dave Muysson on December 5, 2006 3:30 AM EST. Posted in IT Computing

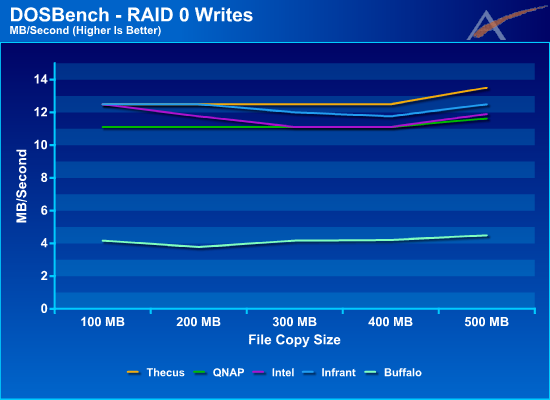

Group DOSBench RAID 0 Results

A different RAID level and a different benchmark, and all of a sudden we have a tight race. The following gains were observed relative to RAID 5: Intel 64%, Thecus 54%, QNAP 34%, Infrant 20%, and Buffalo 8%.

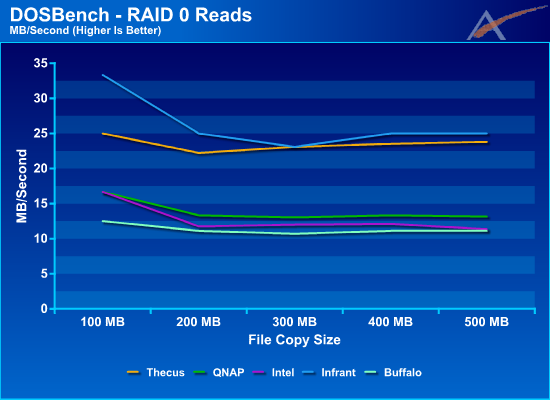

Again, the results of the RAID 0 Read test are similar to those of the RAID 5 Read test and do not show improvements as dramatic as those observed in the Write tests.

23 Comments

yyrkoon - Friday, December 8, 2006

My problem is this: I want redundancy, but I also do not want to be limited to GbE transfer rates. I've been in communication with many people via different channels (email, IRC, forums, etc.), and the best result I've seen anyone get on GbE is around 90MB/s using specific NICs (Intel Pro series, PCI-E).

The options here are rather limited. I like Linux; however, I refuse to use Ethernet channel bonding (thus forcing the use of Linux on all my machines), or possibly a combination of Ethernet channel bonding with a very expensive 802.3ad switch. 10GbE is an option, but is way out of my price range, and 4Gb FC doesn't seem to be much better. From my limited understanding of their product, I think Intel Pro cards come with software for aggregate load balancing, but I'm not 100% sure of this, and unless I used crossover cables from one machine to another, I would again be forced into paying $300 USD or possibly more for an 802.3ad switch. I've looked into all these options, plus 1394b FireWire teaming and SATA port multipliers. Port multiplier technology looks promising, but is dependent on motherboard RAID (unless you shell out for an HBA), and from what I do know about it, you couldn't just plug it into an Areca card and have it work at full performance (someone correct me if I'm wrong please, I'd love to learn otherwise).

My goal is to have a reliable storage solution with minimal wait times when transferring files. At some point, having too much would be overkill, and this also needs to be realized.

peternelson - Tuesday, December 12, 2006

It sounds like your needs would be solved by using a Fibre Channel fabric.

You need an FC NIC (or two) in each of your clients, then one or more FC switches, e.g. from Brocade or OEMs of their switches. Finally, you need drive arrays to connect FC or regular drives onto the FC fabric.

It isn't cheap but gives fantastic redundancy. FC speeds are 1/2/4 Gigabits per second.

yyrkoon - Tuesday, December 5, 2006

I've been giving Areca a lot of thought lately. What I was considering was to use a complete system for storage, with loads of disk space and an Areca RAID controller. The only problem I personally have with my idea is: how do I get a fast link to the desktop PC?

I've been debating back and forth with a friend of mine about using FireWire. From what he says, you can use multiple FireWire links, teamed, along with some "hack" for raising 1394b to 1000Mbit/s, to achieve what seems like outstanding performance. Assuming what my friend says is accurate, you could easily team 4x 1394b ports and get 500MB/s.
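The bandwidth figures quoted in these comments can be sanity-checked with a little arithmetic. The sketch below is only illustrative: the helper name `raw_mb_per_s` is hypothetical, and it assumes raw line rate divided by 8 bits per byte, ignoring protocol and encoding overhead, so real-world numbers would be lower.

```python
def raw_mb_per_s(link_mbit_per_s, links=1):
    """Raw aggregate throughput in MB/s for `links` teamed links.

    Assumption: pure line rate / 8, with no protocol or encoding overhead.
    """
    return link_mbit_per_s * links / 8


# Single Gigabit Ethernet link at its nominal 1000 Mbit/s.
gbe = raw_mb_per_s(1000)

# Four teamed 1394b ports, each assumed to run at the 1000 Mbit/s
# mentioned in the comment (not the standard S800 rate).
firewire_teamed = raw_mb_per_s(1000, links=4)

# The best GbE result reported in the comments, as a share of raw line rate.
observed_gbe = 90
efficiency = observed_gbe / gbe

print(f"GbE raw: {gbe:.0f} MB/s")
print(f"4x 1394b raw: {firewire_teamed:.0f} MB/s")
print(f"90 MB/s on GbE is {efficiency:.0%} of raw line rate")
```

Under these assumptions, the 500MB/s figure for four teamed ports is a raw upper bound, and the 90MB/s GbE result already sits fairly close to what a single gigabit link can carry.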