ATI's Late Response to G70 - Radeon X1800, X1600 and X1300

by Derek Wilson on October 5, 2005 11:05 AM EST - Posted in GPUs

Pipeline Layout and Details

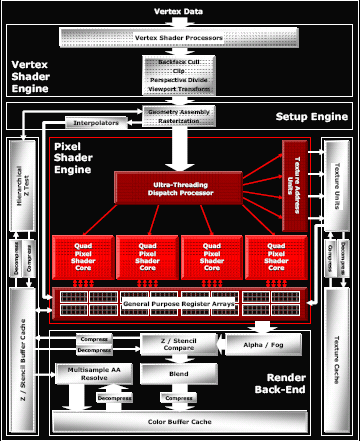

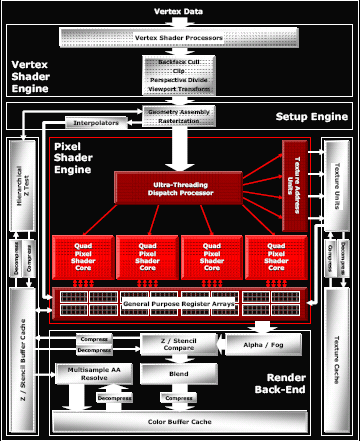

The general layout of the pipeline is very familiar. We have some number of vertex pipelines feeding through a setup engine into a number of pixel pipelines. After fragment processing, data is sent to the back end for things like fog, alpha blending and Z compares. The hardware can easily be scaled down at multiple points; vertex pipes, pixel pipes, Z compare units, texture units, and the like can all be scaled independently. Here's an overview of the high end case.

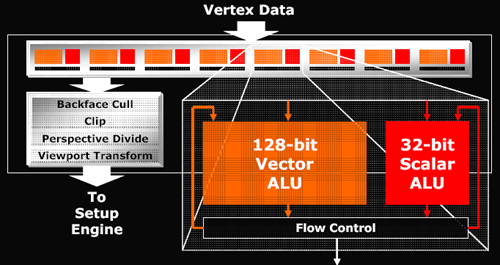

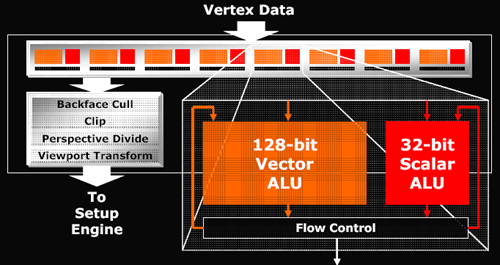

The X1000 series tops out at 8 vertex pipelines; mid-range and budget parts incorporate 5 and 2 vertex units, respectively. Each vertex pipeline is capable of one scalar and one vector operation per clock cycle. The hardware supports shader programs of up to 1024 instructions, but much more can be done with those instructions by using flow control for looping and branching.

After leaving the vertex pipelines and geometry setup hardware, the data makes its way to the "ultra threading" dispatch processor. This block of hardware is responsible for keeping the pixel pipelines fed and managing which threads are active and running at any given time. Since graphics architectures are inherently very parallel, quite a bit of scheduling work within a single thread can easily be done by the compiler. But as shader code is actually running, some instruction may need to wait on data from a texture fetch that hasn't completed or a branch whose outcome is yet to be determined. In these cases, rather than spin the clocks without doing any work, ATI can run the next set of instructions from another "thread" of data.
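To make the latency-hiding idea concrete, here is a minimal toy model in Python. It is not ATI's dispatch logic: the single-issue pipeline, the oldest-ready-first policy, and the names `simulate`, `alu_ops`, `fetch_latency` and `rounds` are all assumptions chosen for illustration. Each "thread" issues a short burst of math instructions, then stalls on a texture fetch; the scheduler simply switches to another ready thread instead of idling.

```python
def simulate(num_threads, alu_ops, fetch_latency, rounds):
    """Toy single-issue pipeline (illustrative assumption, not real hardware).

    Every thread repeats `rounds` times: issue `alu_ops` one-cycle math
    instructions, then wait `fetch_latency` cycles on a texture fetch.
    Returns (busy_cycles, total_cycles).
    """
    ready_at = [0] * num_threads          # cycle at which each thread may issue
    ops_left = [alu_ops] * num_threads    # math ops left in the current burst
    rounds_left = [rounds] * num_threads  # bursts each thread still has to run
    cycle = 0
    busy = 0

    while any(r > 0 for r in rounds_left):
        ready = [t for t in range(num_threads)
                 if rounds_left[t] > 0 and ready_at[t] <= cycle]
        if ready:
            # Hypothetical policy: pick the thread that has waited longest.
            t = min(ready, key=lambda i: ready_at[i])
            busy += 1
            ops_left[t] -= 1
            if ops_left[t] == 0:
                # Burst done: the thread now stalls on its texture fetch.
                rounds_left[t] -= 1
                ops_left[t] = alu_ops
                ready_at[t] = cycle + 1 + fetch_latency
        cycle += 1  # an idle cycle if no thread was ready
    return busy, cycle


for threads in (1, 2, 4, 8):
    busy, total = simulate(threads, alu_ops=4, fetch_latency=20, rounds=10)
    print(f"{threads} thread(s): {busy}/{total} cycles busy ({busy / total:.0%})")
```

With a single thread, most cycles in this model are spent waiting on the fetch. Once enough threads are available to cover the fetch latency, the pipeline stays busy, which is the effect the dispatch processor is meant to achieve.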

Threads are made up of 16 pixels each and up to 512 can be managed at one time (128 in mid-range and budget hardware). These threads aren't exactly like traditional CPU threads, as programmers do not have to create each one specifically. With graphics data, even with only one shader program running, a screen is automatically divided into many "threads" running the same program. When managing multiple threads, rather than requiring a context switch to process a different set of instructions running on different pixels, the GPU can keep multiple contexts open at the same time. To make a viable number of registers available to each of up to 512 threads, the hardware needs a huge internal register file. But keeping as many threads, pixels, and instructions in flight at a time as possible is key to managing and effectively hiding latency.
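The thread figures above translate into some simple arithmetic. The sketch below uses only the 16-pixel thread size and the 512 and 128 thread limits quoted in the text; the 1600x1200 resolution and the helper names are assumptions for illustration, and the screen is treated as a flat count of pixels with no regard for how threads are actually tiled.

```python
PIXELS_PER_THREAD = 16  # thread size quoted in the article


def pixels_in_flight(max_threads):
    """Upper bound on pixels the dispatcher can track at once."""
    return PIXELS_PER_THREAD * max_threads


def threads_for_screen(width, height):
    """Threads needed to cover every pixel once (ceiling division)."""
    return -(-(width * height) // PIXELS_PER_THREAD)


high_end = pixels_in_flight(512)                 # high end limit
mid_range = pixels_in_flight(128)                # mid-range and budget limit
screen_threads = threads_for_screen(1600, 1200)  # assumed example resolution

print(f"High end: up to {high_end} pixels in flight")
print(f"Mid-range/budget: up to {mid_range} pixels in flight")
print(f"A full 1600x1200 pass is {screen_threads} threads")
```

Under these assumptions, a single full-screen pass contains far more threads than the hardware tracks at once, so the dispatcher always has a deep pool of work to draw from.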

NVIDIA doesn't explicitly talk about hardware analogous to ATI's "ultra threading dispatch processor", but they must certainly have something to manage active pixels as well. We know from our previous NVIDIA coverage that they are able to keep hundreds of pixels in flight at a time in order to hide latency. It would not be possible or practical to give the driver complete control of scheduling and dispatching pixels as too much time would be wasted deciding what to do next.

We won't be able to answer specifically the question of which hardware is better at hiding latency. The hardware is so different, and instructions will end up running through different paths on NVIDIA and ATI hardware. Scheduling quads, pixels, and instructions is one of the most important tasks that a GPU can do. Latency can be very high for some data, and there is no excuse for letting the vast parallelism of the hardware and dataset go to waste rather than using it to hide that latency. Unfortunately, we currently have no test that can determine which hardware's method of scheduling is more efficient. All we can really do for now is look at the final performance offered in games to see which design appears "better".

One thing that we do know is that ATI is able to keep loop granularity smaller with their 16 pixel threads. Dynamic branching is dependent on the ability to do different things on different pixels. The efficiency of an algorithm breaks down if hardware requires that too many pixels follow the same path through a program. At the same time, the hardware gets more complicated (or performance breaks down) if every pixel is treated completely independently.

On NVIDIA hardware, programmers need to design shader programs so that large blocks of pixels take the same path through a shader; performance is reduced if different directions through a branch need to be taken in small blocks of pixels. On G70 based hardware, blocks of a few hundred pixels should optimally take the same path. NV4x hardware requires larger blocks still, nearer to 900 pixels in size. With ATI, every block of 16 pixels can take a different path through a shader. This tighter granularity gives developers more freedom in how they design their shaders and take advantage of dynamic branching and flow control. Designing shaders to handle 32x32 blocks of pixels is more difficult than only needing to worry about 4x4 blocks of pixels.
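A rough way to see why granularity matters is to count the work done when a branch splits pixels inside a block. The Python sketch below rests on a simplified cost model that is our assumption, not a description of either vendor's hardware: if every pixel in a block agrees, the block pays for one side of the branch, and if the pixels disagree, the whole block pays for both sides. The function `shading_cost`, the circular test pattern, and the 1 versus 10 instruction costs are all hypothetical.

```python
def shading_cost(width, height, block, takes_branch, cheap=1, expensive=10):
    """Total per-pixel cost when branching is resolved per block x block tile.

    Assumed model: a tile whose pixels disagree executes both branch paths
    for every pixel in the tile.
    """
    total = 0
    for by in range(0, height, block):
        for bx in range(0, width, block):
            pixels = [takes_branch(x, y)
                      for y in range(by, min(by + block, height))
                      for x in range(bx, min(bx + block, width))]
            cost_per_pixel = 0
            if any(pixels):       # at least one pixel needs the expensive path
                cost_per_pixel += expensive
            if not all(pixels):   # at least one pixel needs the cheap path
                cost_per_pixel += cheap
            total += cost_per_pixel * len(pixels)
    return total


def in_circle(x, y):
    """Hypothetical branch condition: pixels inside a circle take the slow path."""
    return (x - 64) ** 2 + (y - 64) ** 2 < 40 ** 2


ideal = shading_cost(128, 128, 1, in_circle)        # every pixel independent
cost_4x4 = shading_cost(128, 128, 4, in_circle)     # 16 pixel blocks
cost_32x32 = shading_cost(128, 128, 32, in_circle)  # 1024 pixel blocks

print(f"per-pixel: {ideal}, 4x4 blocks: {cost_4x4}, 32x32 blocks: {cost_32x32}")
```

In this model the 4x4 case wastes work only in the thin ring of tiles that straddle the circle's edge, while with 32x32 tiles most of the image ends up paying for both paths.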

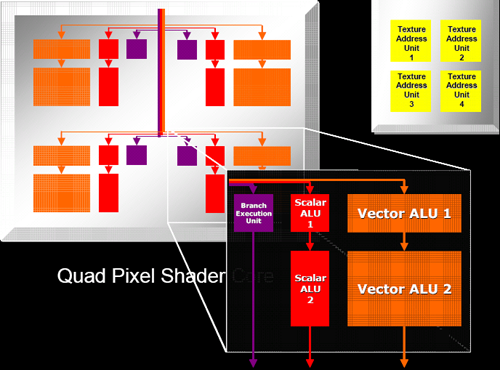

After the code is finally scheduled and dispatched, we come to the pixel shader pipeline. ATI tightly groups pixel shaders in quads and is calling each block of pixel pipes a quad pixel shader core. This language indicates the tight grouping of quads that we already assumed existed on previous hardware.

Each pixel pipe in a quad is able to handle 6 instructions per clock. This is basically the same as R4xx hardware except that ATI is now able to accommodate dynamic branching on their dedicated branch hardware. The 2 scalar, 2 vector, 1 texture per clock arrangement seems to have worked well enough for ATI in the past that they are sticking with it, only adding 1 branch operation that can be issued in parallel with these 5 other instructions.

Of course, branches won't happen nearly as often as math and texture operations, so this hardware will likely be idle most of the time. In any case, having separate hardware for branching that can work in parallel with the rest of the pipeline does make relatively tight loops more efficient than what they could be if no other work could be done while a branch was being handled.

All in all, one of the more interesting things about the hardware is its modularity. ATI has been very careful to make each block of the chip independent of the rest. With high end hardware, as much of everything is packed in as possible, but with their mid-range solution, they are much more frugal. The X1600 line will incorporate 3 quads with 12 pixel pipes alongside only 4 texture units and 8 Z compare units. Contrast this with the X1300 and its 4 pixel pipes, 4 texture units and 4 Z compare units, and with the "16 of everything" X1800, and we can see that the architecture is quite flexible on every level.
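The unit counts quoted in this article can be collected into a small table to show how independently the blocks scale. The Python sketch below is just a bookkeeping aid: the `GpuConfig` class and its field names are hypothetical, and mapping the "high end", "mid-range" and "budget" figures onto the X1800, X1600 and X1300 is our reading of the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuConfig:
    """Unit counts as quoted in the article (class and fields are illustrative)."""
    vertex_pipes: int
    pixel_pipes: int
    texture_units: int
    z_compare_units: int
    max_threads: int

    @property
    def quads(self):
        # Pixel pipes are grouped four to a quad pixel shader core.
        return self.pixel_pipes // 4

    @property
    def pixel_pipes_per_texture_unit(self):
        return self.pixel_pipes / self.texture_units


CONFIGS = {
    "X1800": GpuConfig(vertex_pipes=8, pixel_pipes=16, texture_units=16,
                       z_compare_units=16, max_threads=512),
    "X1600": GpuConfig(vertex_pipes=5, pixel_pipes=12, texture_units=4,
                       z_compare_units=8, max_threads=128),
    "X1300": GpuConfig(vertex_pipes=2, pixel_pipes=4, texture_units=4,
                       z_compare_units=4, max_threads=128),
}

for name, cfg in CONFIGS.items():
    print(f"{name}: {cfg.quads} quad(s), "
          f"{cfg.pixel_pipes_per_texture_unit:.0f} pixel pipe(s) per texture unit, "
          f"{cfg.z_compare_units} Z compare units")
```

Laid out this way, the X1600 stands out: it has three times as many pixel pipes as texture units, while the X1800 and X1300 keep the two in balance.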

103 Comments

mlittl3 - Wednesday, October 5, 2005 - link

I'll tell you how it is a win. Take an architecture with 8 fewer pipelines, put it onto a brand new 90nm die shrink, clock the hell out of the thing, consume just a little more power and add all the new features like SM3.0 and you equal the competition's fastest card. This is a win. So when ATI releases 1, 2, 3 etc. more quad pipes, they will be even faster.

I don't see anything, bob. Anandtech's review was a very bad one. ALL the other sites said this is a good architecture and is on par with and a little faster than nvidia. None of those conclusions can be drawn from the confusing graphs here.

Read the comments here and you will see others agree. Good job, ATI and Nvidia for bringing us competition and equal performing cards. Now bob, go to some other sites, get a good feel for which card suits your needs, and then go buy one. :)

bob661 - Wednesday, October 5, 2005 - link

I read the other sites as well as AT. Quite frankly, I trust AT before any of the other sites because their methodology and consistency are top notch. HardOCP didn't even test a X1800XT and if I was an avid reader of their site I'd be wondering where that review was. I guess I don't see it your way because I only look for bang for the buck not which could be better if it had this or had that. BTW, I just got some free money (no, I didn't steal it!) today so I'm going to pick up a 7800GT. :)

Houdani - Wednesday, October 5, 2005 - link

One of the reasons for the card selections is the price of the cards -- and was stated as such. Just because ATI is calling the card "low-end" doesn't mean it should be compared with other low-end cards. If ATI prices their "low-end" card in the same range as a mid-range card, then it should rightfully be compared to those other cards which are at/near the price.

But your point is well taken. I'd like to see a few more cards tossed in there.

Madellga - Wednesday, October 5, 2005 - link

Derek, I don't know if you have the time for this, but a review at another website showed a huge difference in performance in the Fear Demo. ATI was in the lead with a substantial advantage for the maximum framerates, but close at minimum.

http://techreport.com/reviews/2005q4/radeon-x1000/...

As Fear points towards the new generation of engines, it might be worth running some numbers on it.

Also useful would be to report minimum framerates at the higher resolutions, as this relates to good gameplay experience if all goodies are cranked up.

Houdani - Wednesday, October 5, 2005 - link

Well, the review does state that the FEAR Demo greatly favors ATI, but that the actual shipping game is expected to not show such bias. Derek purposefully omitted the FEAR Demo in order to use the shipping game instead.

allnighter - Wednesday, October 5, 2005 - link

Is it safe to assume that you guys might not have had enough time with these cards to do your usual in-depth review? I'm sure you'll update for us to be able to get the full picture. I also must say that I'm missing the oc part of the review. I wanted to see how true it is that these chips can go sky high. Given the fact that they had 3 re-spins it may as well be true.

TinyTeeth - Wednesday, October 5, 2005 - link

...an Anandtech review.

But it's a bit thin, I must say. I'm still missing overclocking results and Half-Life 2 and Battlefield 2 results. How come no hardware site has tested the cards in Battlefield 2 yet?

From my point of view, Doom III, Splinter Cell, Everquest II and Far Cry are the least interesting games out there.

Overall it's a good review as you can expect from the absolutely best hardware site there is, but I hope and expect there will be another, much larger review.

Houdani - Wednesday, October 5, 2005 - link

The best reason to continue benchmarking games which have been out for a while is that those are the games on which the older GPUs were previously benched. When review sites stop using the old benchmarks, they effectively lose the history for all of the older GPUs, and therefore we lose those GPUs in the comparison.

Granted, the review is welcome to re-benchmark the old GPUs using the new games ... but that would be a significant undertaking and frankly I don't see many (if any) review sites doing that.

But I will throw you this bone: While I think it's quite appropriate to use benchmarks for two years (maybe even three years), it would also be a good thing to very slowly introduce new games at a pace of one per year, and likewise drop one game per year.

mongoosesRawesome - Wednesday, October 5, 2005 - link

they have to retest whenever they use a different driver/CPU/motherboard, which is quite often. I bet they have to retest every other article or so. It's a pain in the butt, but that's why we visit and don't do the tests ourselves.

Madellga - Wednesday, October 5, 2005 - link

Techreport has Battlefield 2 benchmarks, as well as Fear, Guild Wars and others. I liked the article and recommend that you read it also.