The Quest for More Processing Power, Part One: "Is the single core CPU doomed?"

by Johan De Gelas on February 8, 2005 4:00 PM EST - Posted in CPUs

CHAPTER 3: Containing the epidemic problems

Reducing leakage

Leakage is such a huge problem that it could, in theory, make any advance in process technology useless. Without countermeasures, a 45 nm Pentium 4 would consume 100 to 150 Watts on leakage alone, and burn up to 250 Watts in total. The small die would go up in smoke before the ROM program had finished the POST sequence.

However, smart researchers have found ways to reduce leakage significantly. SOI (Silicon on Insulator) insulates the transistors from the underlying silicon substrate and thus reduces leakage currents. SOI has made process technology even more complex, making it harder for AMD to get high bin splits on the Opteron and Athlon 64. Still, it is clear that the Athlon 64 has a lot less trouble with leakage power than the Prescott, despite the fact that the Athlon 64 has only about 15% fewer transistors than the Intel Prescott (106 versus 125 million).

The most spectacular reduction of leakage will probably come from Intel's "high-k" materials, which will replace the current silicon dioxide gate dielectric. Thanks to this advancement and other small improvements, Intel expects to reduce gate leakage more than a hundredfold! This new technology will be used when Intel moves to 45 nm technology.

Another promising technique is Gate Bias technology. By using special sleep transistors, leakage can be reduced by up to 90%, while dynamic power is also reduced by 50% or more.

Body Bias techniques make it possible to control the threshold voltage of a transistor. The objective is to make transistors slow (low leakage) when they are not used, and fast when they are. Stacked transistors and many other technologies also allow for a reduction in leakage.[4]
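To get a feel for why slowing down idle transistors pays off, here is a minimal sketch based on the first-order textbook model of subthreshold leakage, in which leakage falls exponentially as the threshold voltage rises. The article itself gives no formula; the function name, the slope factor of 1.5 and the 300 K temperature are assumptions chosen purely for illustration.

```python
import math

BOLTZMANN_OVER_Q = 8.617333e-5  # k/q in volts per kelvin


def leakage_reduction_factor(delta_vth, temperature_k=300.0, slope_factor=1.5):
    """Factor by which subthreshold leakage drops when Vth rises by delta_vth volts.

    First-order model: I_sub is proportional to exp(-Vth / (n * kT/q)).
    slope_factor (n) = 1.5 is an assumed, typical value, not a measured one.
    """
    thermal_voltage = BOLTZMANN_OVER_Q * temperature_k  # about 26 mV at 300 K
    return math.exp(delta_vth / (slope_factor * thermal_voltage))


if __name__ == "__main__":
    # Hypothetical threshold shifts, in millivolts, applied to an idle block.
    for delta_mv in (50, 100, 150):
        factor = leakage_reduction_factor(delta_mv / 1000.0)
        print(f"Vth raised by {delta_mv} mV: leakage roughly {factor:.1f}x lower")
```

Under these assumptions, a shift of only a tenth of a volt already cuts subthreshold leakage by about an order of magnitude, which is why biasing idle blocks is attractive. Real devices deviate from this simple model, so treat the numbers as indicative only.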

One could probably write a book on this, but the message should be clear: the leakage problem is not going to stop progress. SOI already reduces the problem significantly, and high-k materials will make sure that leakage remains a nuisance rather than a major concern until the industry moves to structures even smaller than 45 nm.

At the same time, strained silicon will reduce the amount of dynamic power needed. With strained silicon, electrons experience less resistance. As a result, CPUs can get up to 35 percent faster without consuming more power. This is what should allow the Athlon 64 stepping "E0" to reach higher clock speeds without consuming more power.

Reducing Wire Delay

Although wire delay has not been in the spotlight as much as leakage power, it is an important hurdle that designers have to clear when they target high clock speeds. Both AMD and Intel have reduced the resistance of wires by using copper instead of aluminium. Capacitance has been lowered by using low-k materials to separate the wires.

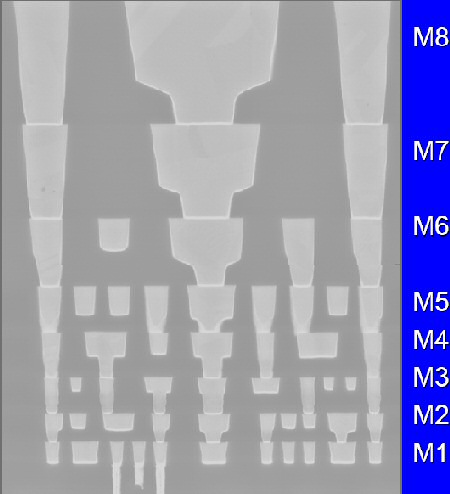

Fig 5. 8 Metal layers to reduce wire delay in Intel's 65 nm CPUs

Adding more metal layers is another strategy. More metal layers enable the wires connecting different parts of the CPU to be packed more densely. More densely packed wires are shorter wires, and shorter wires have lower resistance and capacitance, which in turn reduces the total RC delay.
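The payoff from shorter wires is larger than it may seem, because both resistance and capacitance grow with length. A minimal sketch, assuming the common Elmore approximation for a distributed RC wire (delay of roughly 0.5 x r x c x L squared); the function name and the per-millimetre resistance and capacitance values are illustrative assumptions, not figures for any real process:

```python
def wire_rc_delay(length_mm, r_per_mm=100.0, c_per_mm=0.2e-12):
    """Elmore delay in seconds of a distributed RC wire: 0.5 * r * c * L^2.

    r_per_mm (ohms per mm) and c_per_mm (farads per mm) are assumed,
    illustrative values only.
    """
    return 0.5 * r_per_mm * c_per_mm * length_mm ** 2


if __name__ == "__main__":
    for length in (10.0, 5.0, 2.5):
        delay_ps = wire_rc_delay(length) * 1e12
        print(f"{length:4.1f} mm wire: about {delay_ps:.1f} ps")
```

In this model, halving the length of a wire cuts its delay to a quarter, since the delay grows with the square of the length.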

Fig 6. Repeaters on the Itanium Die

Of course, there are limits on what adding more metal layers, using SOI and low-k materials can do to reduce RC delay. If some of the global wires are still too long, they are broken up into smaller parts, which are connected by repeaters. Repeaters can be used as often as you like, but each one consumes power.
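The trade-off behind repeaters can be sketched with the same kind of simplified model. The code below assumes a distributed RC wire whose delay grows with the square of its length, split into equal segments, with every repeater adding a fixed delay. The function names, the wire parameters and the 20 ps repeater delay are hypothetical, and the model ignores the drive strength and input capacitance of real repeaters.

```python
def repeated_wire_delay(length_mm, segments, r_per_mm=100.0,
                        c_per_mm=0.2e-12, repeater_delay_s=20e-12):
    """Total delay in seconds of a wire split into equal segments by repeaters.

    Each segment is modelled as a distributed RC wire (0.5 * r * c * l^2),
    and each of the (segments - 1) repeaters adds a fixed, assumed delay.
    """
    segment_length = length_mm / segments
    segment_delay = 0.5 * r_per_mm * c_per_mm * segment_length ** 2
    repeaters = segments - 1
    return segments * segment_delay + repeaters * repeater_delay_s


def best_segment_count(length_mm, max_segments=50, **model):
    """Segment count (1..max_segments) that minimises delay in this toy model."""
    return min(range(1, max_segments + 1),
               key=lambda k: repeated_wire_delay(length_mm, k, **model))


if __name__ == "__main__":
    length = 10.0  # hypothetical global wire length in mm
    for k in (1, 2, 4, best_segment_count(length), 16):
        delay_ps = repeated_wire_delay(length, k) * 1e12
        print(f"{k:2d} segments, {k - 1:2d} repeaters: about {delay_ps:.0f} ps")
```

Splitting the wire turns the quadratic growth into something closer to linear, but beyond a certain point extra repeaters add more delay than they remove, and every one of them draws power.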

Now that we have wire delay and leakage more or less under control, let us try to find out what exactly went wrong with the Pentium 4 "Prescott". The answer is not as obvious as it seems.

65 Comments

WhoBeDaPlaya - Thursday, February 10, 2005

Ain't no way you can get those repeaters out of there - that's already the optimum solution for driving the large load (interconnect). It probably equalizes the stage effort required (you can work out the math and find that for multi-stage logic, the optimal config is that each stage has the exact same effort level). E.g. instead of driving an interconnect with a "unit" inverter, it might be more feasible to drive it with a chain of them, each with different fan in/out. Repeater insertion is tricky and (as far as I know) can't readily be automated.

Interconnects are getting to the point where traversal of a die diagonally can take multiple clock cycles. Some folks are suggesting that a pipelined approach could be extended to interconnects, esp. clock trees. But the most fun problem (for me at least :P) is the handling of inductance extraction - how in the h*ll do you model it accurately? High-speed digital design == Analog design. Long live analog / mixed-signal VLSI designers :P

fitten - Thursday, February 10, 2005

[quote]Well-written multicore-aware code should have the number of cores as a _variable_, so you just set it to 1 on a uniprocessor platform.[/quote]

Sometimes parallel algorithms aren't very good for serial execution. In these cases, you may actually have one algorithm for multiple processors and another algorithm for a single processor.

[quote]So, if Intel were to use less repeaters the heat output could be lowered significantly. [/quote]

Well... I'm sure the Intel engineers didn't just up-and-say one day, "Hey, I know something cool to do... let's put some more repeaters into the core." I'm sure there's a reason for them being in there. It would probably take a bit of redesign to get the repeaters out. (I'm pretty sure this is what you meant, but I just wanted to clarify that stuff like repeaters aren't just put into a CPU for no reason. Things like repeaters are put in because there wasn't a more viable solution to some signalling problem that's there.)

sphinx - Thursday, February 10, 2005

So, the reason for the Prescott's shortcomings is the use of too many repeaters as shown in the image of the Itanium 2. If I remember correctly, the article said that the repeaters were using too much power as well. So, if Intel were to use less repeaters the heat output could be lowered significantly.

AtaStrumf - Thursday, February 10, 2005

Nice article and pretty easy to understand as well. I'm happy to hear that there may still be hope for controlling the power leakage, because without it I just can't see anybody getting beyond 65 nm, since even 65 nm will, without improvements, leak almost 3 times as much power as 90 nm does now.

Anxiously waiting for E0 A64 to see what AMD has managed to cook up.

mickyb - Wednesday, February 9, 2005

There are plenty of multi-threaded apps out there. I am not sure pure single threaded apps exist any more outside of "Hello World" and some old Cobol/FORTRAN ports that are on floppy.

Quake and UT have been multi-threaded for a while. Quake was multi-threaded when I had a dual Pentium Pro. There were even benchmarks. The benefits seen with hyper-threading also show that many apps are multi-threaded. The performance gain was negligible due to the graphics drivers and OpenGL/DirectX not being thread optimized. I am sure that has been worked out by now.

Multi-threading is not all about making use of multiple CPUs. There are many conditions where a program would be stopped dead in its tracks waiting for a response from some outside program or hardware device. You can solve this with events, multi-process, multi-threading, call-backs, etc. Goal wise, they are related. In the Winders world, threading is the method of choice.

I really can't believe there are still arguments going on about programs not being multi-threaded. This is not that much of an issue any more. Even if your app is not threaded, the OS is and it can run on one CPU while your app runs on the other. Or if you have 2 apps, then they can run on different CPUs.

With all that said, I agree with the thought that creating performance for all applications is better served using a faster single core CPU than dual CPUs. I think this way because when you have a unit of work to be done (even with multiple threads), it is more likely to be done quicker with a single CPU that is capable of the same computing power as 2 CPUs. A single unit of work will ultimately be smaller than a thread in all cases. The smallest is the instruction set.

Now...with that said, if the limiting factor is technology and they cannot obtain the equivalent performance of a dual core with a single core, then it makes sense to go dual core to obtain it, especially with the power leakage. I like the thinking behind dual core on a laptop, but am skeptical about the part that says turning the CPU off and on rapidly to keep it cool and efficient. It will probably work if it isn't turned on and off too quickly, but heat spreads pretty quickly. You wouldn't even get past POST without a heat-sink and that silicon insulator keeps everything pretty cozy.

NegativeEntropy - Wednesday, February 9, 2005

Johan, another excellent article, I'm looking forward to part 2.

Evan Lieb - Wednesday, February 9, 2005

It's pretty much impossible to get a "newbie" explanation of CPU architectures without at least a basic understanding of how CPUs work. Rand's suggestions were quite good, you should start there if you're overwhelmed by Johan's explanations, IceWindius. It also wouldn't hurt to start with Anand's CPU articles from last year.

Rand - Wednesday, February 9, 2005

"I wish someone like Arstechinca would make something really built ground up like CPU's for morons so I could start understanding this stuff better."

You may want to read parts 1-5 of "The Secrets of High Performance CPUs"

http://www.aceshardware.com/list.jsp?id=4

A bit outdated now, as it was written in '99 if I recall correctly, but it's still broadly relevant and a nice series of articles if you're looking to get a better understanding of microprocessors without being drowned in the technical side of things.

ArsTechnica also has some good articles with a newbie friendly slant.

There are some excellent articles at RealWorldTech as well, but they're definitely written for engineers rather than the average person.

Unfortunately most of the more notable books like those by Hennessy & Patterson assume you've already some knowledge of computer architectures.

stephenbrooks - Wednesday, February 9, 2005

#46, Well-written multicore-aware code should have the number of cores as a _variable_, so you just set it to 1 on a uniprocessor platform. I also think there already exists a multithreaded version of one of the big engines (Quake, UT?) that apparently does not lose any performance on a single core either.

But I agree with the main thrust of your post, which is "Buy AMD".

Noli - Wednesday, February 9, 2005

Not to belittle dual core development, and I know there are a lot of people on this site who run technical programs that will benefit from dual core, but when I spend a small fortune on a PC, the primary driver is being able to play the most advanced games in the world. Unfortunately, I don't feel multi-threaded game code is going to get written for a longggggg time (what's the point of reducing potential customers?). How long till a very large percentage of users have dual cores? End of 2006 at the very earliest? So it's really just a theoretical interest till then for me...