The Intel Comet Lake Core i9-10900K, i7-10700K, i5-10600K CPU Review: Skylake We Go Again

by Dr. Ian Cutress on May 20, 2020 9:00 AM EST- Posted in

- CPUs

- Intel

- Skylake

- 14nm

- Z490

- 10th Gen Core

- Comet Lake

CPU Performance: Simulation Tests

A number of our benchmarks fall into the category of simulations, whereby we are either trying to emulate the real world or re-create systems with systems. In this set of tests, we have a variety including molecular modelling, non-x86 video game console emulation, a simulation of the equivalent of a slug brain with neurons and synapses firing, and finally a popular video game that simulates the growth of a fictional land including historical events and important characters within that world.

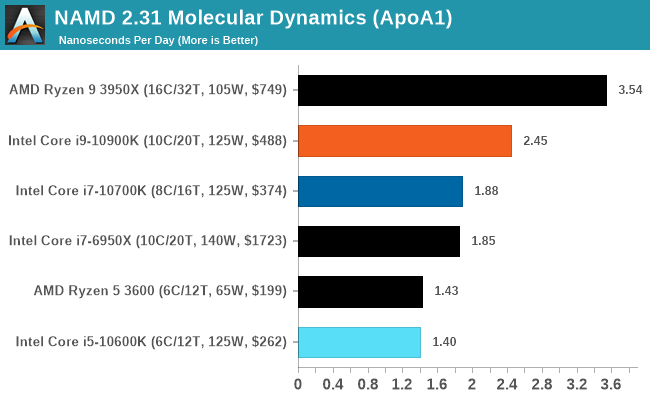

NAMD ApoA1

One frequent request over the years has been for some form of molecular dynamics simulation. Molecular dynamics forms the basis of a lot of computational biology and chemistry when modeling specific molecules, enabling researchers to find low energy configurations or potential active binding sites, especially when looking at larger proteins. We’re using the NAMD software here, or Nanoscale Molecular Dynamics, often cited for its parallel efficiency. Unfortunately the version we’re using is limited to 64 threads on Windows, but we can still use it to analyze our processors. We’re simulating the ApoA1 protein for 10 minutes, and reporting back the ‘nanoseconds per day’ that our processor can simulate. Molecular dynamics is so complex that yes, you can spend a day simply calculating a nanosecond of molecular movement.

This is one of our new tests, so we will be filling in more data as we start regression testing for older CPUs.

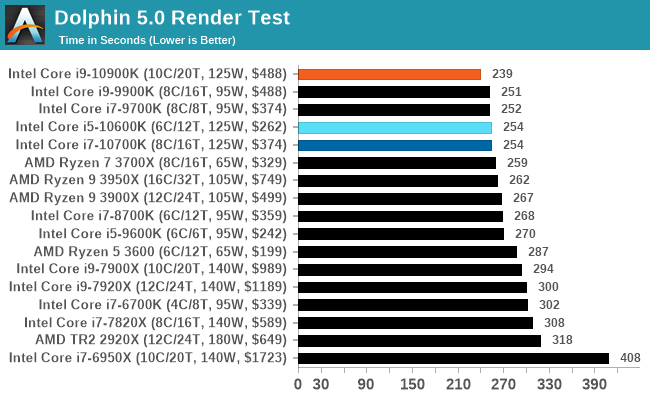

Dolphin 5.0: Console Emulation

One of the popular requested tests in our suite is to do with console emulation. Being able to pick up a game from an older system and run it as expected depends on the overhead of the emulator: it takes a significantly more powerful x86 system to be able to accurately emulate an older non-x86 console, especially if code for that console was made to abuse certain physical bugs in the hardware.

For our test, we use the popular Dolphin emulation software, and run a compute project through it to determine how close to a standard console system our processors can emulate. In this test, a Nintendo Wii would take around 1050 seconds.

The latest version of Dolphin can be downloaded from https://dolphin-emu.org/

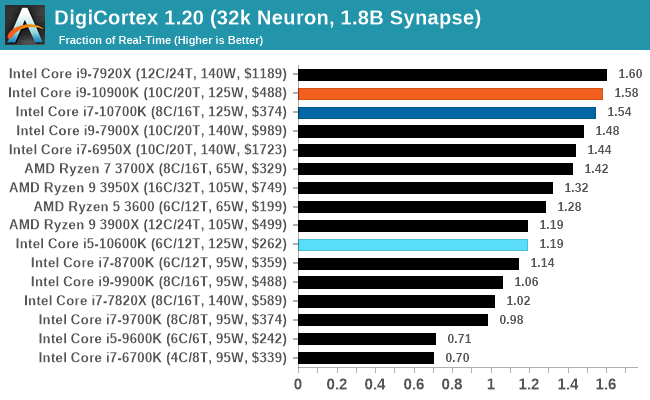

DigiCortex 1.20: Sea Slug Brain Simulation

This benchmark was originally designed for simulation and visualization of neuron and synapse activity, as is commonly found in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron / 1.8B synapse simulation, equivalent to a Sea Slug.

Example of a 2.1B neuron simulation

We report the results as the ability to simulate the data as a fraction of real-time, so anything above a ‘one’ is suitable for real-time work. Out of the two modes, a ‘non-firing’ mode which is DRAM heavy and a ‘firing’ mode which has CPU work, we choose the latter. Despite this, the benchmark is still affected by DRAM speed a fair amount.

DigiCortex can be downloaded from http://www.digicortex.net/

The additional bandwidth of the HEDT platforms put them higher up the chart here - Digicortex always ends up as an odd mix of bottlenecks mostly around memory, but it can be localized internal bandwidth limited as well.

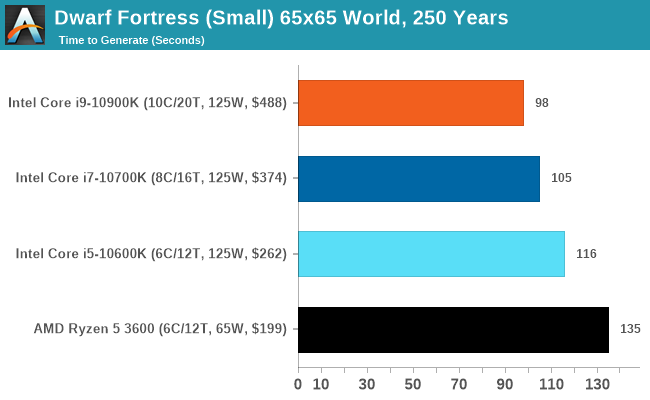

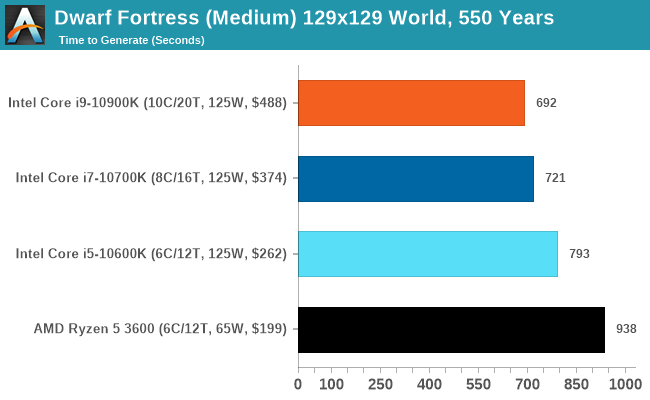

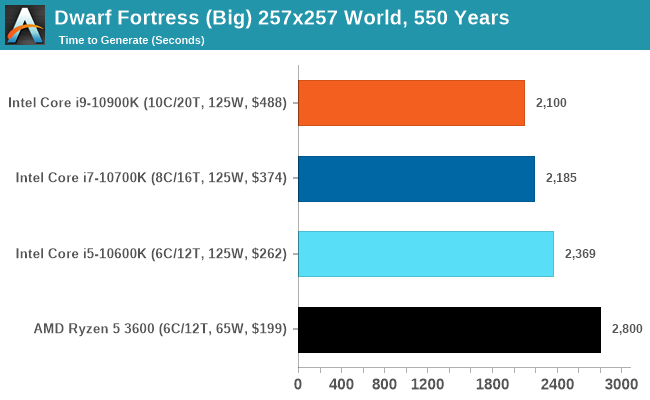

Dwarf Fortress

Another long standing request for our benchmark suite has been Dwarf Fortress, a popular management/roguelike indie video game, first launched in 2006. Emulating the ASCII interfaces of old, this title is a rather complex beast, which can generate environments subject to millennia of rule, famous faces, peasants, and key historical figures and events. The further you get into the game, depending on the size of the world, the slower it becomes.

DFMark is a benchmark built by vorsgren on the Bay12Forums that gives two different modes built on DFHack: world generation and embark. These tests can be configured, but range anywhere from 3 minutes to several hours. I’ve barely scratched the surface here, but after analyzing the test, we ended up going for three different world generation sizes.

This is another of our new tests.

220 Comments

View All Comments

ByteMag - Wednesday, May 20, 2020 - link

I'm wondering why the 3300X wasn't in the DigiCortex benchmark? This $120 dollar 4c/8t banger lays waste to the selected lineup. Or is it too much of a foreshadowing of how Zen 3 may perform? I guess benchmarks can sometimes be like a box of chocolates.ozzuneoj86 - Wednesday, May 20, 2020 - link

Just a request, but can you guys consider renaming the "IGP" quality level something different? The site has been doing it for a while and it kind of seems like they may not even know why at this point. Just change it to "Lowest" or something. Listing "IGP" as a test, when running a 2080 Ti on a CPU that doesn't have integrated graphics is extremely confusing to readers, to say the least.Also, I know the main reason for not changing testing methods is so that comparisons can be done (and charts can be made) without having to test all of the other hardware configs, but I have one small request for the next suite of tests (I'm sure they'll be revised soon). I'd request that testing levels for CPU benchmarks should be:

Low Settings at 720P

Max Settings at 1080P

Max Settings at 1440P

Max Settings at 4K

(Maybe a High Settings at 1080P thrown in for games where the CPU load is greatly affected by graphics settings)

Drop 8K testing unless we're dealing with flagship GPU releases. It just seems like 8K has very little bearing on what people are realistically going to need to know. A benchmark that shows a range from 6fps for the slowest to 9fps for the fastest is completely pointless, especially for CPU testing. In the future, replacing that with a more common or more requested resolution would surely be more useful to your readers.

Often times the visual settings in games do have a significant impact on CPU load, so tying the graphical settings to the resolution for each benchmark really muddies the waters. Why not just assume worst case scenario performance (max settings) for each resolution and go from there? Obviously anti-aliasing would need to be selected based on the game and resolution, with the focus being on higher frame rates (maybe no or low AA) for faster paced games and higher fidelity for slower paced games.

Just my 2 cents. I greatly appreciate the work you guys do and it's nice to see a tech site that is still doing written reviews rather than forcing people to spend half an hour watching a video. Yeah, I'm old school.

Spunjji - Tuesday, May 26, 2020 - link

Agreed 99% with this (especially that last part, all hial the written review) - but I'd personally say it makes more sense for the CPU reviews to be limited to 720p Low, 1080P High and 1440P Max.My theory behind that:

720p Low gives you that entirely academic CPU-limited comparison that some people still seem to love. I don't get it, but w/e.

1080p High is the kind of setting people with high-refresh-rate monitors are likely to run - having things look good, but not burning frames for near-invisible changes. CPU limiting is likely to be in play at higher frame rates. We can see whether a given CPU will get you all the way to your refresh-rate limit..

1440p Max *should* take you to GPU-limited territory. Any setting above this ought to be equally limited, so that should cover you for everything, and if a given CPU and/or game doesn't behave that way then it's a point of interest.

dickeywang - Wednesday, May 20, 2020 - link

With more and more cores being added to the CPU, it would've been nice to see some benchmarks under Linux.MDD1963 - Wednesday, May 20, 2020 - link

Darn near a full 2% gain in FPS in some games! Quite ...uhhh..... impressive! :/MDD1963 - Wednesday, May 20, 2020 - link

Doing these CPU gaming comparisons at 720P is just as silly as when HardOCP used to include 640x480 CPU scaling...; 1080P is low enough, go medium details if needed.Spunjji - Tuesday, May 26, 2020 - link

Personally agreed here. It just gives more fodder to the "15% advantage in gaming" trolls.croc - Wednesday, May 20, 2020 - link

It would be 'nice' if the author could use results from the exact same stack of chips for each test. If the same results cannot be obtained from the same stack, then whittle the stack down to those chips for which the full set of tests can be obtained. I could understand the lack of results on newly added tests...For a peer review exercise it would be imperative, and here at Anandtech I am sure that there are many peers....

69369369 - Thursday, May 21, 2020 - link

Overheating and very high power bills happens with Intel.Atom2 - Thursday, May 21, 2020 - link

Dear Ian, You must be the only person on the planet that goes to such lengths not to use AVX, that you even compare Intel's AVX512 instructions to a GPU based OpenCL, just to have a reason not to use it. Consequently you only have AMD win the synthetic benchmarks, but all real world math is held by Intel. Additionally, all those synthetics, which are "not" compiled with Intel C++. Forget it... GCC is only used by Universities. The level of bias towards AMD is becoming surreal.