NVIDIA's GeForce 6200 & 6600 non-GT: Affordable Gaming

by Anand Lal Shimpi on October 11, 2004 9:00 AM EST - Posted in GPUs

Power Consumption

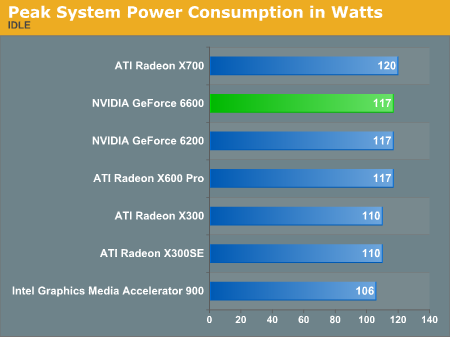

A new and much-needed addition to our GPU reviews is tracking power consumption. Here, we're using a simple meter to track power consumption at the power supply level, so what we're left with is total system power consumption. But with the rest of the components in the test system remaining the same and the only variable being the video card, we're able to get a reasonably accurate estimate of peak power usage.

At idle, all of the graphics cards draw roughly the same amount of power, with the integrated graphics solution eating up the least at 106W for the entire system:

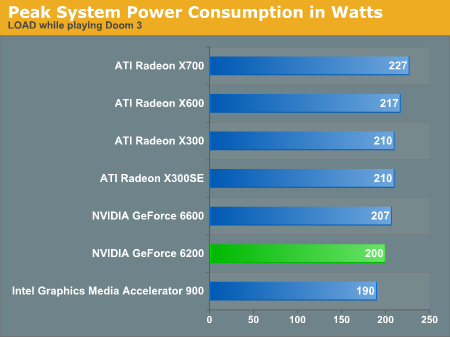

Then, we fired up Doom 3 and ran it at 800x600 (High Quality) in order to get a peak power reading for the system.

Interestingly enough, other than the integrated graphics solution, the 6200 is the lowest-power card here, drawing even less than the X300. That is to be expected, given that the X300 runs at a 25MHz higher clock speed.

It's also interesting to note that there's no difference in power consumption between the 128-bit and 64-bit X300 cards. The performance comparison is a completely different story, however.

In the end, none of these cards eat up too much power, with the X700 clearly leading the pack at 10% greater system power consumption than the 6600. It will be interesting to find out if there's any correlation here between power consumption and performance.
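Because the meter reads total system power, a card-to-card comparison like the 10% figure above is a difference between two whole-system readings. The following is a minimal sketch of that arithmetic. The function names and the wattages are hypothetical placeholders, not the values measured for this review.

```python
def percent_increase(system_watts_a: float, system_watts_b: float) -> float:
    """Percent by which system reading A exceeds system reading B."""
    if system_watts_b <= 0:
        raise ValueError("baseline reading must be positive")
    return (system_watts_a - system_watts_b) / system_watts_b * 100.0


def card_delta_watts(system_watts_a: float, system_watts_b: float) -> float:
    """Wall-level difference between two cards.

    Assumes every other component in the test system is unchanged,
    so the difference is attributed to the video card alone.
    """
    return system_watts_a - system_watts_b


if __name__ == "__main__":
    # Hypothetical peak readings at the wall, in watts (illustration only).
    card_a, card_b = 132.0, 120.0
    print(f"delta: {card_delta_watts(card_a, card_b):.1f} W")
    print(f"relative: {percent_increase(card_a, card_b):.1f} %")
```

Note that a reading taken at the wall also includes power supply losses, so the difference between two system readings is somewhat larger than the difference in what the cards themselves draw.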

44 Comments

Saist - Monday, October 11, 2004

xsilver: I think it's because ATi has generally cared more about optimizing for DirectX, and more recently just optimizing for API. OpenGL was never really big on ATi's list of supported APIs... However, add in Doom3 and the requirement of OGL on non-Windows-based systems, and OGL is at least as important to ATi now as DirectX. How long it will take to convert that priority into performance is unknown.

Also, keep this in mind: Nvidia specifically built the Geforce mark-itecture from the ground up to power John Carmack's 3D dream. Nvidia has specifically stated they create their cards based on what Carmack says. Whether that is right or wrong I will leave up to you to decide, but it does explain the disparity between id games and other games, even under OGL.

xsilver - Monday, October 11, 2004

Just a conspiracy theory -- do the NV cards only perform well on the most popular / publicised games, whereas the ATI cards excel due to a better-written driver / better hardware?

Or is the FRAPS testing biasing ATI for some reason?

Cygni - Monday, October 11, 2004

What do you mean "who has the right games"? If you want to play Doom3, look at the Doom3 graphs. If you want to play FarCry, look at the FarCry graphs. If you want to play CoH, Madden, or Thunder 04, look at HardOCP's graphs. Every game is going to handle the cards differently. I really don't see anything wrong with AnandTech's current group of testing programs.

And Newegg now has 5 6600 non-GTs in stock, ranging in price from $175-$148. But remember that it takes time to test and review these cards. When Anand went to get a 6600, it's very likely that that was the only card he could find. I know I couldn't find one at all a week ago.

T8000 - Monday, October 11, 2004

Check this, an XFX 6600 in stock for just $143: http://www.gameve.com/gve/Store/ProductDetails.asp...

Furthermore, the games you pick for a review make a large difference for the conclusion. Because of that, HardOCP has the 6200 outperforming the x600 by a small margin. So, I would like to know who has the right games.

And #2:

The X700/X800 is similar enough to the 9800 to compare them on pipelines and clock speeds. Based on that, the x700 should perform about the same.

Anand Lal Shimpi - Monday, October 11, 2004

Thanks for the responses, here are some answers in no specific order:

1) The X300 was omitted from the Video Stress Test benchmark because CS: Source was released before we could finish testing the X300, no longer giving us access to the beta. We will run the cards on the final version of CS: Source in future reviews.

2) I apologize for the confusing conclusion, that statement was meant to follow the line before it about the X300. I've made the appropriate changes.

3) No prob in regards to the Video Processor, I've literally been asking every week since May about this thing. I will get the full story one way or another.

4) I am working on answering some of your questions about comparing other cards to what we've seen here. Don't worry, the comparisons are coming...

Take care,

Anand

friedrice - Monday, October 11, 2004

Here's my question, what is better? A Geforce 6800 or a Geforce 6600 GT? I wish there was like a Geforce round-up somewhere. And I saw some benchmarks that showed SLI does indeed work, but these were just run on 3dmark. Does anyone know if there are any actual tests out yet on SLI?

Also, to address another issue some of you have brought up, this new line of cards beats the 9800 Pro by a huge amount. But it's not worth the upgrade. Stick with what you have until it no longer works, and right now a 9800 Pro works just fine. Of course if you do need a new graphics card, the 6600 GT seems the way to go. If you can find someone that sells them.

Oh, and to address the pricing. nVidia only offers suggested retail prices. Vendors can up the price on parts so that they can still sell the inventory they have on older cards. In the next couple of months we should see these new graphics cards drop to the MSRP.

ViRGE - Monday, October 11, 2004

#10, because it's still an MP game at the core. The AI is as dumb as rocks, and is there for the console users. Most PC users will be playing this online, not alone in SP mode.

rbV5 - Monday, October 11, 2004

Thanks for the tidbit on the 6800's PVP. I'd like to see Anandtech take on a video card round up aimed at video processing and what these cards are actually capable of. It would fit in nicely with the media software/hardware Andrew's been looking at, and let users know what to actually expect from their hardware.

thebluesgnr - Monday, October 11, 2004

Buyxtremegear has the GeForce 6600 from Leadtek for $135. Gameve has 3 different cards (Sparkle, XFX, Leadtek) all under $150 for the 128MB version.

#1, they're probably talking about the power consumption under full load.

Sunbird - Monday, October 11, 2004

All I hope is that there is some easy way of distinguishing between the 128-bit and 64-bit versions.