The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Total War: Warhammer II (DX11)

Last in our 2018 game suite is Total War: Warhammer II, built on the same engine as Total War: Warhammer. While there is a more recent Total War title, Total War Saga: Thrones of Britannia, that game was built on the 32-bit version of the engine. The first TW: Warhammer was a DX11 game that was to some extent developed with DX12 in mind, with preview builds showcasing DX12 performance. In Warhammer II, however, the matter appears to have been dropped: DX12 mode is still marked as beta, and it comes with a performance regression for both vendors.

It's unfortunate because Creative Assembly themselves have acknowledged the CPU-bound nature of their games, and with the engine being re-used for spin-offs, DX12 optimization would have continued to provide benefits, especially if the future of graphics in RTS-type games leans towards low-level APIs.

There are now three benchmarks with varying graphics and processor loads; we've opted for the Battle benchmark, which appears to be the most graphics-bound.

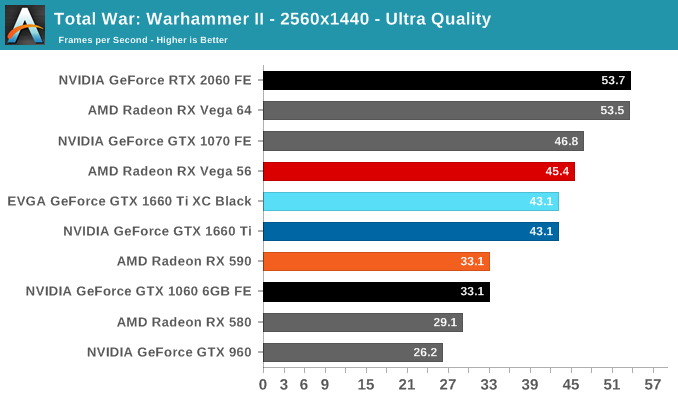

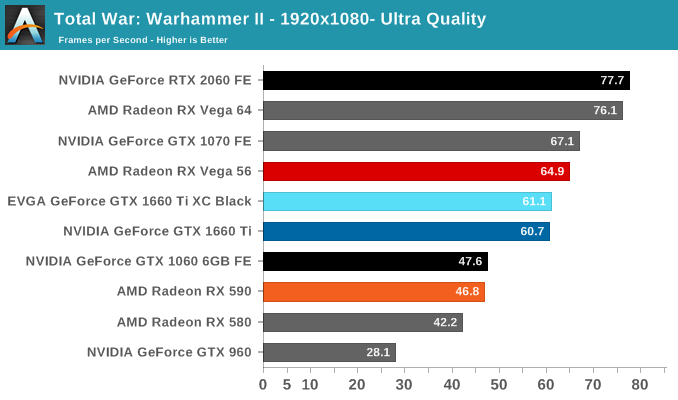

Rounding out our look at game performance is Total War: Warhammer II.

Here, the GTX 1660 Ti lags behind the RTX 2060 and GTX 1070 FE more than in the other games, offering only around 80% of the RTX 2060's speed and 90% of the GTX 1070's. In turn, it doesn't improve as much upon the GTX 1060 6GB and GTX 960, though practically speaking it has relegated its RX 590 competition to last-generation performance, given that the RX 590 is neck-and-neck with the GTX 1060 6GB FE.

157 Comments

Psycho_McCrazy - Tuesday, February 26, 2019 - link

Given that 21:9 monitors are also making great inroads into the gamer's purchase lists, can benchmark resolutions also include 2560.1080p, 3440.1440p and (my wishlist) 3840.1600p benchies??

eddman - Tuesday, February 26, 2019 - link

2560x1080, 3440x1440 and 3840x1600

That's how you write it, and the "p" should not be used when stating the full resolution, since it's only supposed to be used for denoting video format resolution.

P.S. using 1080p, etc. for display resolutions isn't technically correct either, but it's too late for that.

Ginpo236 - Tuesday, February 26, 2019 - link

a 3-slot ITX-sized graphics card. What ITX case can support this? 0.

bajs11 - Tuesday, February 26, 2019 - link

Why can't they just make a GTX 2080Ti with the same performance as RTX 2080Ti but without useless RT and dlss and charge something like 899 usd (still 100 bucks more than gtx 1080ti)?

i bet it will sell like hotcakes or at least better than their overpriced RTX2080ti

peevee - Tuesday, February 26, 2019 - link

Do I understand correctly that this thing does not have PCIe4?

CiccioB - Thursday, February 28, 2019 - link

No, they do not have a PCIe4 bus.

Do you think they should have?

Questor - Wednesday, February 27, 2019 - link

Why do I feel like this was a panic plan in an attempt to bandage the bleed from RTX failure? No support at launch and months later still abysmal support on a non-game changing and insanely expensive technology.

I am not falling for it.

CiccioB - Thursday, February 28, 2019 - link

Yes, a "panic plan" that required about 3 years to create the chips. 3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have.

They didn't know that the competition would have played with the only weapon it was left to it to battle, that is price, as they could not think that the competition was not ready with a beefed up architecture capable of the same functionalities.

So, yes, they panicked for sure. They were not prepared for anything of what is happening.

Korguz - Friday, March 1, 2019 - link

" that required about 3 years to create the chips. 3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have. "

and where did you read this ? you do understand, and realize... it IS possible to either disable, or remove parts of an IC without having to spend " about 3 years " to create the product, right ? intel does it with their IGP in their cpus, amd did it back in the Phenom days with chips like the Phenom X4 and X3....

CiccioB - Tuesday, March 5, 2019 - link

So they created a TU116, a completely new die without RT and Tensor Core, to reduce the size of the die and lose about 15% of performance with respect to the 2060 all in 3 months because they panicked?

You probably have no idea of what are the efforts to create a 280mm^2 new die.

Well, by this and your previous posts you don't have any idea of what you are talking about at all.