The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Ashes of the Singularity: Escalation (DX12)

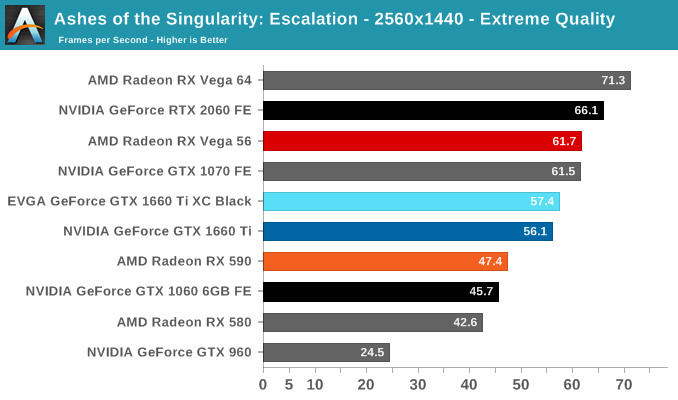

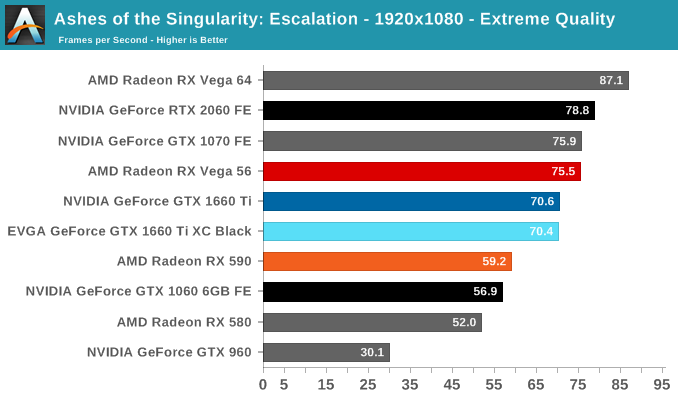

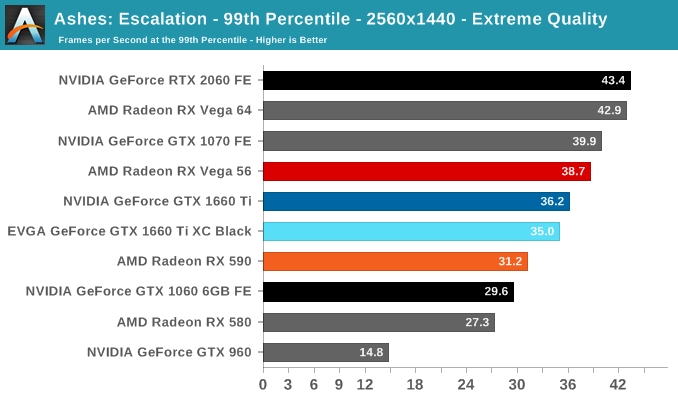

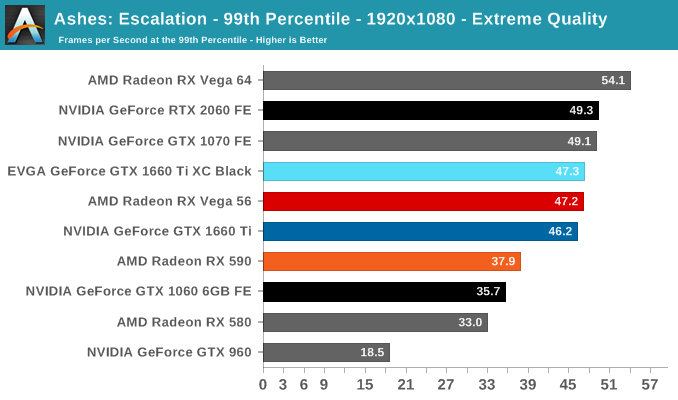

A veteran from both our 2016 and 2017 game lists, Ashes of the Singularity: Escalation remains the DirectX 12 trailblazer, with developer Oxide Games tailoring and designing the Nitrous Engine around such low-level APIs. The game makes the most of DX12's key features, from asynchronous compute to multi-threaded work submission and high batch counts. And with full Vulkan support, Ashes provides a good common ground between the forward-looking APIs of today. Its built-in benchmark tool is still one of the most versatile ways of measuring in-game workloads in terms of output data, automation, and analysis; by offering such a tool publicly and as part-and-parcel of the game, it's an example that other developers should take note of.

Settings and methodology remain identical to those used in the 2016 GPU suite. To note, we are utilizing the original Ashes Extreme graphical preset, which is equivalent to the current Extreme preset with MSAA dialed down from x4 to x2 and Texture Rank (MipsToRemove in settings.ini) adjusted.

We've updated some of the benchmark automation and data processing steps, so results may vary at the 1080p mark compared to previous data.

Interestingly, Ashes offers the smallest improvement in the suite for the GTX 1660 Ti over the GTX 1060 6GB. Similarly, the GTX 1660 Ti lags behind the GTX 1070, which is already close to the older Turing sibling. With the GTX 1070 FE and RX Vega 56 neck-and-neck, the GTX 1660 Ti splits the RX 590/RX Vega 56 gap.

157 Comments

Yojimbo - Saturday, February 23, 2019 - link

My guess is that in the next (7 nm) generation, NVIDIA will create the RTX 3050 to have a very similar number of "RTX-ops" (and, more importantly, actual RTX performance) as the RTX 2060, thereby setting the capabilities of the RTX 2060 as the minimum targetable hardware for developers to apply RTX enhancements for years to come.

Yojimbo - Saturday, February 23, 2019 - link

I wish there were an edit button. I just want to say that this makes sense, even if it eats into their margins somewhat in the short term. Right now people are upset over the price of the new cards. But that will pass assuming RTX actually proves to be successful in the future. However, if RTX does become successful but the people who paid money to be early adopters for lower-end RTX hardware end up getting squeezed out of the ray-tracing picture that is something that people will be upset about which NVIDIA wouldn't overcome so easily. To protect their brand image, NVIDIA need a plan to try to make present RTX purchases useful in the future being that they aren't all that useful in the present. They can't betray the faith of their customers. So with that in mind, disabling perfectly capable RTX hardware on lower end hardware makes sense.

u.of.ipod - Friday, February 22, 2019 - link

As a SFFPC (mITX) user, I'm enjoying the thicker, but shorter, card as it makes for easier packaging. Additionally, I'm enjoying the performance of a 1070 at reduced power consumption (20-30w) and therefore noise and heat!

eastcoast_pete - Friday, February 22, 2019 - link

Thanks! Also a bit disappointed by NVIDIA's continued refusal to "allow" a full 8 GB VRAM in these middle-class cards. As to the card makers omitting the VR required USB3 C port, I hope that some others will offer it. Yes, it will add $20-30 to the price, but I don't believe I am the only one who's like the option to try some VR gaming out on a more affordable card before deciding to start saving money for a full premium card. However, how is VR on Nvidia with 6 GB VRAM? Is it doable/bearable/okay/great?

eastcoast_pete - Friday, February 22, 2019 - link

"who'd like the option". Google keyboard, your autocorrect needs work and maybe some grammar lessons.

Yojimbo - Friday, February 22, 2019 - link

Wow, is a USB3C port really that expensive?

GreenReaper - Friday, February 22, 2019 - link

It might start to get closer once you throw in the circuitry needed for delivering 27W of power at different levels, and any bridge chips required.

OolonCaluphid - Friday, February 22, 2019 - link

>However, how is VR on Nvidia with 6 GB VRAM? Is it doable/bearable/okay/great?

It's 'fine' - the GTX 1050ti is VR capable with only 4gb VRAM, although it's not really advisable (see Craft computings 1050ti VR assessment on youtube - it's perfectly useable and a fun experience). The RTX 2060 is a very capable VR GPU, with 6gb VRAM. It's not really VRAM that is critical in VR GPU performance anyway - more the raw compute performance in rendering the same scene from 2 viewpoints simultaneously. So, I'd assess that the 1660ti is a perfectly viable entry level VR GPU. Just don't expect miracles.

eastcoast_pete - Saturday, February 23, 2019 - link

Thanks for the info! About the miracles: Learned a long time ago not to expect those from either Nvidia or AMD - fewer disappointments this way.

cfenton - Friday, February 22, 2019 - link

You don't need a USB C port for VR, at least not with the two major headsets on the market today.