The iPhone XS & XS Max Review: Unveiling the Silicon Secrets

by Andrei Frumusanu on October 5, 2018 8:00 AM EST

The A12 Vortex CPU µarch

When talking about the Vortex microarchitecture, we first need to talk about exactly what kind of frequencies we’re seeing on Apple’s new SoC. Over the last few generations Apple has been steadily raising frequencies of its big cores, all while also raising the microarchitecture’s IPC. I did a quick test of the frequency behaviour of the A12 versus the A11, and came up with the following table:

Maximum Frequency vs Loaded Threads (per-core maximum, MHz)

| Apple A11 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| Big 1 | 2380 | 2325 | 2083 | 2083 | 2083 | 2083 |
| Big 2 | | 2325 | 2083 | 2083 | 2083 | 2083 |
| Little 1 | | | 1694 | 1587 | 1587 | 1587 |
| Little 2 | | | | 1587 | 1587 | 1587 |
| Little 3 | | | | | 1587 | 1587 |
| Little 4 | | | | | | 1587 |

| Apple A12 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| Big 1 | 2500 | 2380 | 2380 | 2380 | 2380 | 2380 |
| Big 2 | | 2380 | 2380 | 2380 | 2380 | 2380 |
| Little 1 | | | 1587 | 1562 | 1562 | 1538 |
| Little 2 | | | | 1562 | 1562 | 1538 |
| Little 3 | | | | | 1562 | 1538 |
| Little 4 | | | | | | 1538 |

For both the A11 and A12, the maximum frequency is actually a single-thread boost clock: 2380MHz for the A11’s Monsoon cores and 2500MHz for the new Vortex cores in the A12. This is just a 5% boost in frequency in ST applications. When adding a second big thread, the A11 and A12 clock down to 2325MHz and 2380MHz respectively. It’s when we also concurrently run threads on the small cores that the two SoCs diverge: while the A11 further clocks down to 2083MHz, the A12 retains the same 2380MHz until it hits thermal limits and eventually throttles down.

On the small core side of things, the new Tempest cores are actually clocked more conservatively than their Mistral predecessors. When the system had just one small core running on the A11, it would boost up to 1694MHz. This behaviour is now gone on the A12, and the maximum clock is 1587MHz. The frequency reduces slightly further, down to 1538MHz, when all four small cores are fully loaded.
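As a quick check on the percentages discussed above, the clock deltas can be recomputed from the table values. This is only a minimal sketch: the dictionary and function names are made up for illustration, and the "3 threads" entry assumes two big threads plus one small-core thread, as in the table.

```python
# Per-core maximum clocks (MHz) copied from the frequency table above.
# Keys are the number of loaded threads; names here are illustrative only.
A11_BIG = {1: 2380, 2: 2325, 3: 2083}
A12_BIG = {1: 2500, 2: 2380, 3: 2380}
A11_LITTLE_SINGLE = 1694  # one small core active on the A11
A12_LITTLE_SINGLE = 1587  # one small core active on the A12


def pct_change(old_mhz: float, new_mhz: float) -> float:
    """Relative change from old_mhz to new_mhz, in percent."""
    return (new_mhz - old_mhz) / old_mhz * 100.0


# Single-thread boost clock: Vortex (A12) vs Monsoon (A11).
st_uplift = pct_change(A11_BIG[1], A12_BIG[1])

# Big-core clock once small-core threads are also running.
mt_uplift = pct_change(A11_BIG[3], A12_BIG[3])

# Small-core clock with a single small core active: Tempest vs Mistral.
little_change = pct_change(A11_LITTLE_SINGLE, A12_LITTLE_SINGLE)

print(f"Big core, 1 thread:   {st_uplift:+.1f}%")
print(f"Big core, 3 threads:  {mt_uplift:+.1f}%")
print(f"Small core, 1 active: {little_change:+.1f}%")
```

The single-thread figure works out to roughly the 5% quoted in the text, while the gap widens considerably once the A11 drops to 2083MHz under mixed big and small core load.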

Much improved memory latency

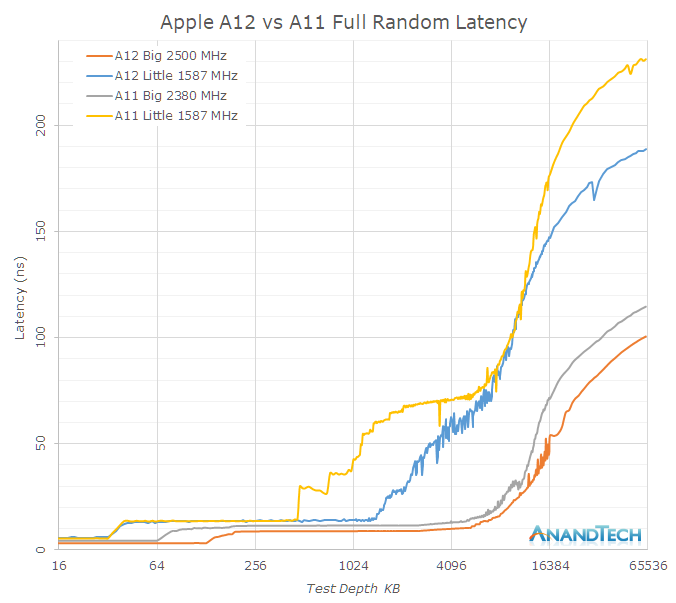

As mentioned in the previous page, it’s evident that Apple has put a significant amount of work into the cache hierarchy as well as memory subsystem of the A12. Going back to a linear latency graph, we see the following behaviours for full random latencies, for both big and small cores:

The Vortex cores have only a 5% boost in frequency over the Monsoon cores, yet the absolute L2 memory latency has improved by 29% from ~11.5ns down to ~8.8ns. This means the new Vortex cores’ L2 cache now completes its operations in significantly fewer cycles. On the Tempest side, the L2 cycle latency seems to have remained the same, but again there’s been a large change in terms of the L2 partitioning and power management, allowing access to a larger chunk of the physical L2.
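To make the "fewer cycles" point concrete, the absolute latencies can be converted into core clock cycles. This is a rough sketch that assumes the cores sit at their single-thread boost clocks (2380MHz and 2500MHz) during the latency test, which the text does not state explicitly; the function name is hypothetical. Because it uses the rounded ~11.5ns and ~8.8ns figures, it will not exactly reproduce the quoted 29%, which presumably comes from unrounded measurements.

```python
def ns_to_cycles(latency_ns: float, freq_mhz: float) -> float:
    """Convert an absolute latency in nanoseconds to clock cycles at freq_mhz."""
    return latency_ns * freq_mhz / 1000.0


# Assumption: single-thread boost clocks apply while the latency test runs.
monsoon_cycles = ns_to_cycles(11.5, 2380)  # A11 big core, approximate L2 latency
vortex_cycles = ns_to_cycles(8.8, 2500)    # A12 big core, approximate L2 latency

# Reduction in cycle count going from Monsoon to Vortex, in percent.
cycle_reduction = (1 - vortex_cycles / monsoon_cycles) * 100.0

print(f"Monsoon L2: ~{monsoon_cycles:.1f} cycles")
print(f"Vortex L2:  ~{vortex_cycles:.1f} cycles")
print(f"Cycle reduction: ~{cycle_reduction:.0f}%")
```

Under these assumptions the L2 access drops from roughly 27 cycles to roughly 22 cycles, so the gain is clearly a reduction in cycle latency rather than a side effect of the higher clock.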

I only ran the test up to a depth of 64MB, and it’s evident that the latency curves don’t flatten out yet in this data set, but it’s visible that latency to DRAM has seen some improvements. The larger difference in DRAM access latency on the Tempest cores could be explained by a raising of the maximum memory controller DVFS frequency when just small cores are active; their performance will look better when there’s also a thread running on the big cores.

The system cache of the A12 has seen some dramatic changes in its behaviour. While bandwidth in this part of the cache hierarchy has seen a reduction compared to the A11, the latency has been much improved. One significant effect here could be attributed either to the L2 prefetcher or, as I also see as a possibility, to prefetchers on the system cache side: both the latency performance and the number of streaming prefetchers have gone up.

Instruction throughput and latency

Backend Execution Throughput and Latency

Where the last two columns list two values, they are Monsoon / Vortex respectively.

| Instruction | Cortex-A75 Exec | Cortex-A75 Lat | Cortex-A76 Exec | Cortex-A76 Lat | Exynos-M3 Exec | Exynos-M3 Lat | Monsoon / Vortex Exec | Monsoon / Vortex Lat |
|---|---|---|---|---|---|---|---|---|
| Integer Arithmetic ADD | 2 | 1 | 3 | 1 | 4 | 1 | 6 | 1 |
| Integer Multiply 32b MUL | 1 | 3 | 1 | 2 | 2 | 3 | 2 | 4 |
| Integer Multiply 64b MUL | 1 | 3 | 1 | 2 | 1 (2x 0.5) | 4 | 2 | 4 |
| Integer Division 32b SDIV | 0.25 | 12 | 0.2 | < 12 | 1/12 - 1 | < 12 | 0.2 | 10 / 8 |
| Integer Division 64b SDIV | 0.25 | 12 | 0.2 | < 12 | 1/21 - 1 | < 21 | 0.2 | 10 / 8 |
| Move MOV | 2 | 1 | 3 | 1 | 3 | 1 | 3 | 1 |
| Shift ops LSL | 2 | 1 | 3 | 1 | 3 | 1 | 6 | 1 |
| Load instructions | 2 | 4 | 2 | 4 | 2 | 4 | 2 | |
| Store instructions | 2 | 1 | 2 | 1 | 1 | 1 | 2 | |
| FP Arithmetic FADD | 2 | 3 | 2 | 2 | 3 | 2 | 3 | 3 |
| FP Multiply FMUL | 2 | 3 | 2 | 3 | 3 | 4 | 3 | 4 |
| Multiply Accumulate MLA | 2 | 5 | 2 | 4 | 3 | 4 | 3 | 4 |
| FP Division (S-form) | 0.2-0.33 | 6-10 | 0.66 | 7 | >0.16 | 12 | 0.5 / 1 | 10 / 8 |
| FP Load | 2 | 5 | 2 | 5 | 2 | 5 | | |
| FP Store | 2 | 1-N | 2 | 2 | 2 | 1 | | |
| Vector Arithmetic | 2 | 3 | 2 | 2 | 3 | 1 | 3 | 2 |
| Vector Multiply | 1 | 4 | 1 | 4 | 1 | 3 | 3 | 3 |
| Vector Multiply Accumulate | 1 | 4 | 1 | 4 | 1 | 3 | 3 | 3 |
| Vector FP Arithmetic | 2 | 3 | 2 | 2 | 3 | 2 | 3 | 3 |
| Vector FP Multiply | 2 | 3 | 2 | 3 | 1 | 3 | 3 | 4 |
| Vector Chained MAC (VMLA) | 2 | 6 | 2 | 5 | 3 | 5 | 3 | 3 |
| Vector FP Fused MAC (VFMA) | 2 | 5 | 2 | 4 | 3 | 4 | 3 | 3 |

To compare the backend characteristics of Vortex, we’ve tested the instruction throughput. The backend throughput is determined by the number of execution units, while the latency is dictated by the quality of their design.
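The difference between the two metrics can be illustrated with an idealized model: a chain of dependent instructions is bound by latency, while a stream of independent instructions is bound by throughput. The sketch below is a simplification that ignores front-end width, scheduling and register-file limits, and its function names are hypothetical; it only uses the ADD figures from the table above.

```python
import math


def cycles_dependent(n_ops: int, latency: int) -> int:
    """Serial dependency chain: each op must wait for the previous result."""
    return n_ops * latency


def cycles_independent(n_ops: int, per_cycle: int, latency: int) -> int:
    """Independent ops: limited by issue rate, plus draining the last op."""
    return math.ceil(n_ops / per_cycle) + latency - 1


# Integer ADD figures from the table: (ops per cycle, latency in cycles).
ADD = {
    "Cortex-A75": (2, 1),
    "Cortex-A76": (3, 1),
    "Exynos-M3": (4, 1),
    "Monsoon/Vortex": (6, 1),
}

N = 600  # number of ADD instructions in the hypothetical test loop
for core, (per_cycle, latency) in ADD.items():
    chained = cycles_dependent(N, latency)
    parallel = cycles_independent(N, per_cycle, latency)
    print(f"{core:15s} dependent: {chained:4d} cycles, independent: {parallel:4d} cycles")
```

In this model every core needs 600 cycles for the dependent chain, since ADD latency is one cycle everywhere, whereas the independent stream finishes in 100 cycles on the Apple cores against 300 on the Cortex-A75.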

The Vortex core looks pretty much the same as its predecessor Monsoon (A11), with the exception that we’re seemingly looking at new division units, as the execution latency has been shaved by 2 cycles on both the integer and FP side. On the FP side the division throughput has also doubled.

Monsoon (A11) was a major microarchitectural update in terms of the mid-core and backend. It’s there that Apple shifted the microarchitecture from the 6-wide decode of Hurricane (A10) to a 7-wide decode. The most significant change in the backend was the addition of two integer ALUs, upping them from 4 to 6 units.

Monsoon (A11) and Vortex (A12) are extremely wide machines: with 6 integer execution pipelines (two of which are complex units), two load/store units, two branch ports, and three FP/vector pipelines, this gives an estimated 13 execution ports, far wider than Arm’s upcoming Cortex-A76 and also wider than Samsung’s M3. In fact, assuming we're not looking at an atypical shared port situation, Apple’s microarchitecture seems to far surpass anything else in terms of width, including desktop CPUs.
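The port estimate is simply the sum of the pipeline counts listed above. A small tally, with labels chosen here purely for illustration and assuming no ports are shared:

```python
# Estimated execution resources of Monsoon / Vortex, as listed in the text.
# Assumes each pipeline has its own port (no shared-port arrangement).
execution_ports = {
    "integer pipelines (2 of them complex)": 6,
    "load/store units": 2,
    "branch ports": 2,
    "FP/vector pipelines": 3,
}

total_ports = sum(execution_ports.values())
print(f"Estimated execution ports: {total_ports}")
```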

253 Comments

Ansamor - Friday, October 5, 2018 - link

Same app for Android https://play.google.com/store/apps/details?id=com....

tim1724 - Friday, October 5, 2018 - link

My iPhone XS scored 2162. :)

DERSS - Saturday, October 6, 2018 - link

Is it much versus Kirin and Qualcomm or not?

shank2001 - Saturday, October 6, 2018 - link

2868 on my XS Max

name99 - Saturday, October 6, 2018 - link

But it is unclear that the benchmark is especially useful. In particular if it's just generic C code (as opposed to making special use of the Apple NN APIs) then it is just testing the CPU, not the NPU or even NN running on GPU.

You scored 2162. iPhone 6S scores 642 (according to the picture). That sort of 3.5x difference to me looks like a lot less than the boost I'd expect from an NPU, and may just reflect basically 2x better CPU plus availability of the small cores (not present on iPhone 6S).

edwpang - Friday, October 5, 2018 - link

There are no storage, network, and phone tests. Hopefully, these tests will included in future update.

name99 - Friday, October 5, 2018 - link

"Apple promised a significant performance improvement in iOS12, thanks to the way their new scheduler is accounting for the loads from individual tasks. The operating system’s kernel scheduler tracks execution time of threads, and aggregates this into an utilisation metric which is then used by for example the DVFS mechanism."

This is not the only changes in the newest Darwin. There are also changes in GCD scheduling. There was a lot of cruft surrounding that in earlier Darwins (issues of lock implementations, how priority inversion was handled, the heuristics of when a task was so short it's cheaper to just complete it than give up the CPU "for fairness --- but everyone then pays the switching cost"). These are even more difficult to tease out (and certainly won't present in single-threaded benchmarking) but are considered to be significant. There's also been a lot of thinking inside Apple about best practices for GCD (and the generic problem of "how to use multiple cores") and this has likely been translated into new designs within at least some frameworks and Apple's tier1 apps.

You can see this discussed here:

https://gist.github.com/tclementdev/6af616354912b0...

sheltem - Friday, October 5, 2018 - link

Can we chalk up the improvements of the 2x lens to computational HDR or is there a hardware improvement as well?

darkich - Friday, October 5, 2018 - link

I just can't wait for Apple to FINALLY flesh out their in-house Mac chips.

Not because I love Apple, but simply because I think the end result will be spectacular and outright shocking for Intel..and I do hate Intel.

They are disgustingly overrated.

varase - Tuesday, October 23, 2018 - link

I hope it's a good while ... I *need* VMWare and the ability to run Windows in a VM (for work).

Not to mention, I'd be really disappointed if I couldn't boot Windows for game play.