The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Battlefield 1 (DX11)

Battlefield 1 returns from the 2017 benchmark suite with a bang, as DICE brought gamers the long-awaited AAA World War 1 shooter a little over a year ago. With detailed maps, environmental effects, and pacy combat, Battlefield 1 provides a generally well-optimized yet demanding graphics workload. The next Battlefield game from DICE, Battlefield V, completes the nostalgia circuit with a return to World War 2. More importantly for us, it is one of the flagship titles for GeForce RTX real-time ray tracing, although that support isn't ready at this time.

The Ultra preset is used with no alterations. As these benchmarks are from single player mode, our rule of thumb with multiplayer performance still applies: multiplayer framerates generally dip to half our single player framerates. Battlefield 1 also supports HDR (HDR10, Dolby Vision).

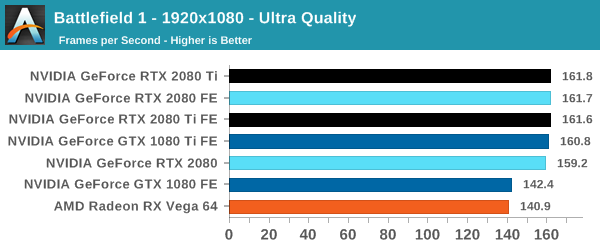

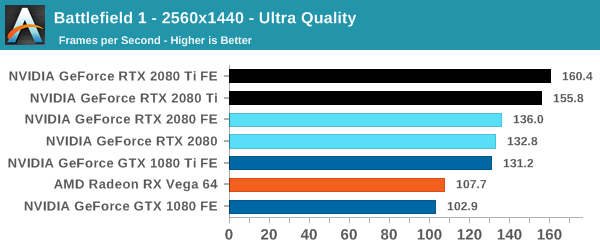

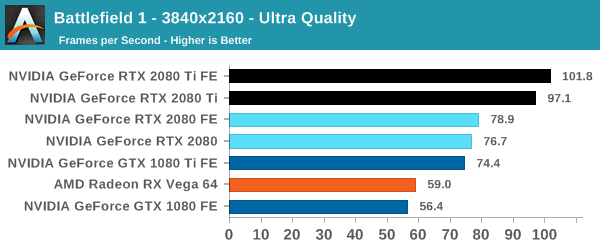

| Battlefield 1 | 1920x1080 | 2560x1440 | 3840x2160 |

| Average FPS |  |

|

|

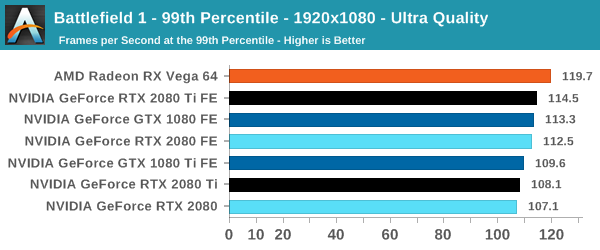

| 99th Percentile |  |

|

|

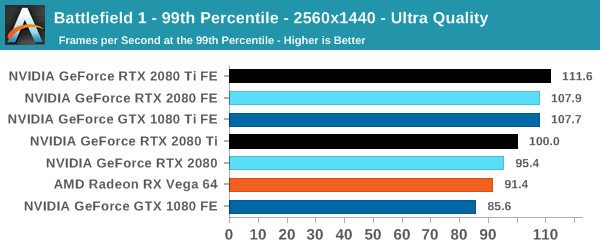

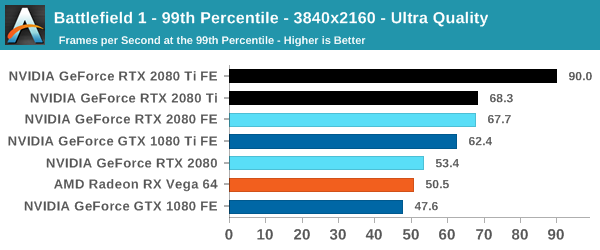

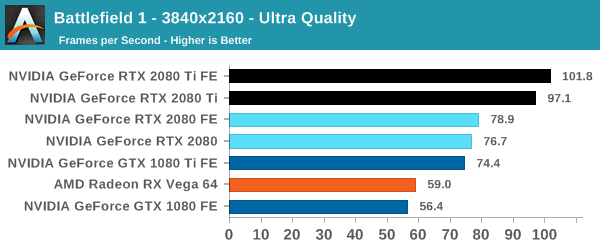

At this point, the RTX 2080 Ti is fast enough to run into the CPU bottleneck at 1080p, but it keeps its substantial lead at 4K. Nowadays, Battlefield 1 runs rather well across a wide range of cards and settings, and in well-optimized, high-profile games like this one, the 2080 in particular needs to make sure that the veteran 1080 Ti doesn't edge too close. Here, the Founders Edition specs are enough to place the 2080 Founders Edition firmly ahead of the 1080 Ti Founders Edition.

The outlying low 99th percentile reading for the 2080 Ti persisted across repeated testing, and we're looking into it further.
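For readers who want to reproduce these two metrics from their own frame-time captures, the following is a minimal sketch. The review does not describe how its figures are derived, so this assumes a list of per-frame times in milliseconds and a nearest-rank percentile. The function names `summarize_frametimes` and `multiplayer_estimate` are hypothetical, and the second simply encodes the halving rule of thumb mentioned above.

```python
def summarize_frametimes(frametimes_ms):
    """Return (average_fps, p99_fps) for per-frame times given in milliseconds.

    Assumption: the 99th percentile is taken over frame times using the
    nearest-rank method, then converted to a frame rate.
    """
    if not frametimes_ms:
        raise ValueError("need at least one frame time")

    n = len(frametimes_ms)

    # Average FPS is total frames divided by total elapsed seconds.
    total_seconds = sum(frametimes_ms) / 1000.0
    average_fps = n / total_seconds

    # Nearest-rank 99th percentile: smallest frame time that at least
    # 99% of frames are at or below. Integer math avoids float rounding.
    ordered = sorted(frametimes_ms)
    rank = (99 * n + 99) // 100  # ceil(0.99 * n), 1-based
    p99_ms = ordered[rank - 1]
    p99_fps = 1000.0 / p99_ms

    return average_fps, p99_fps


def multiplayer_estimate(single_player_fps):
    """Apply the rough rule of thumb: multiplayer runs at about half."""
    return single_player_fps / 2.0


if __name__ == "__main__":
    # Synthetic capture: mostly 10 ms frames with a few 25 ms spikes.
    sample = [10.0] * 196 + [25.0] * 4
    avg, p99 = summarize_frametimes(sample)
    print(f"average: {avg:.1f} fps, 99th percentile: {p99:.1f} fps")
    print(f"multiplayer estimate: {multiplayer_estimate(avg):.1f} fps")
```

A handful of slow frames barely moves the average but pulls the 99th percentile figure down sharply, which is why an outlier can show up in one chart and not the other.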

337 Comments


imaheadcase - Wednesday, September 19, 2018

They wouldn't, because they would not have the extra bloat in the card, but performed the exact same... people would get the AMD card because of all the bullshit in the nvidia card not yet even proven to be worth it.

Better question: How much does nvidia pay you to be a shill here and on forums? I mean you are obviously delusional about it.

DigitalFreak - Wednesday, September 19, 2018

Typical fanboy response.

Fritzkier - Wednesday, September 19, 2018

Do you actually read V900's other comments? He is a shill.

And anyway AMD did the same thing with Vega 64 (1080-ish performance but a little expensive, except in Vulkan), and many were also outraged by the Vega release. That's just because AMD was one year late and made it a little expensive.

bji - Wednesday, September 19, 2018

He may not be a shill; perhaps he's just trying to counterbalance the clueless posters who don't understand why products cost what they do.

I know that I am not a shill for anyone, but I can understand the motivation in posting something like that.

tamalero - Wednesday, September 19, 2018

Cost means nothing if they can't provide what they are expected to.

No one gives a darn if the die is insane if their performance isn't as good as promised.

R600 was huge, hot, and was a total new game in graphic design and tech. Was its performance good? NO.

Inteli - Wednesday, September 19, 2018

I think they're substantially overblowing the significance of raytracing and DLSS, at least in this generation's lifespan.

The best way I've seen it put is this: look at the Shadow of the Tomb Raider demo. There isn't *that* much of a difference between raytracing on and off. Shaders, ambient occlusion, and other such cheats have gotten so good that they're very close to ray tracing.

Will ray tracing ever be significantly better than raster rendering? Of course it will. It's the future of rendering. Is it significantly better now? No.

It's not an issue of "card is expensive", it's "card isn't a good value compared to its predecessors in current games". Most of those extra transistors aren't contributing to the card's performance right now, so you're paying a lot more for nothing, at least right now. For current games, the 2080 is 1080 Ti performance for 1080 Ti prices.

Unless you either A. must have the latest and greatest, or B. anticipate playing games that have been confirmed to support RT, I don't see a compelling reason to buy a 2080 over a 1080 Ti, at least until 1080 Ti supply dries up.

BurntMyBacon - Thursday, September 20, 2018

@Inteli: "For current games, the 2080 is 1080 Ti performance for 1080 Ti prices."

I think you meant greater than 1080Ti prices. At the same price and performance, I'd buy newer if for no other reason than to have longer support. The extra features (regardless of whether or not they are used in games I play) are just icing on the cake at that point.

Inteli - Saturday, September 22, 2018

Right.

eva02langley - Thursday, September 20, 2018

Because it is their only selling point. It is obviously not the performance.

imaheadcase - Wednesday, September 19, 2018

OH so my 1080Ti makes me an AMD fanboy. right..