The Toshiba RC100 SSD Review: Tiny Drive In A Big Market

by Billy Tallis on June 14, 2018 9:00 AM EST

Exploring the NVMe Host Memory Buffer Feature

Most modern SSDs include onboard DRAM, typically in a ratio of 1GB RAM per 1TB of NAND flash memory. This RAM is usually dedicated to tracking where each logical block address is physically stored on the NAND flash—information that changes with every write operation due to the wear leveling that flash memory requires. This information must also be consulted in order to complete any read operation. The standard DRAM to NAND ratio provides enough RAM for the SSD controller to use a simple and fast lookup table instead of more complicated data structures. This greatly reduces the work the SSD controller needs to do to handle IO operations, and is key to offering consistent performance.
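The 1GB-per-1TB ratio falls out of simple arithmetic if the lookup table is flat. The sketch below assumes one 4-byte entry per 4KiB logical page. Those two figures are common illustrative values, not something any vendor has confirmed for a particular drive, and the function name is hypothetical.

```python
TIB = 1024 ** 4
GIB = 1024 ** 3


def mapping_table_bytes(nand_bytes, page_bytes=4096, entry_bytes=4):
    """Size of a flat logical-to-physical table with one entry per page.

    page_bytes and entry_bytes are assumed values for illustration.
    """
    entries = nand_bytes // page_bytes
    return entries * entry_bytes


# Table size for 1 TiB of flash, expressed in GiB.
print(mapping_table_bytes(1 * TIB) / GIB)  # 1.0
```

With a table this size held entirely in DRAM, a lookup is a single array index, which is the "simple and fast" structure described above.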

SSDs that omit this DRAM can be cheaper and smaller, but because they can only store their mapping tables in the flash memory instead of much faster DRAM, there's a substantial performance penalty. In the worst case, read latency is doubled as potentially every read request from the host first requires a NAND flash read to look up the logical to physical address mapping, then a second read to actually fetch the requested data.

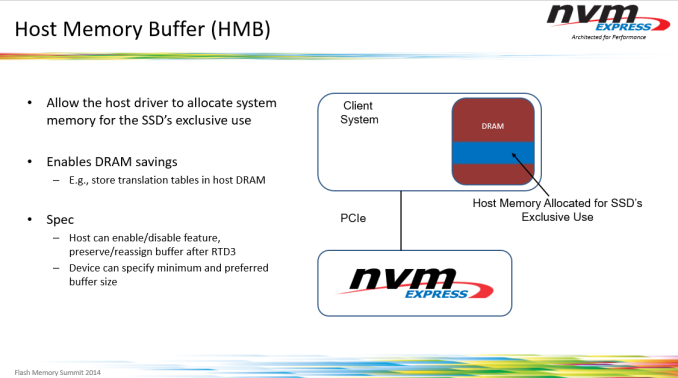

The NVMe version 1.2 specification introduced an in-between option for SSDs. The Host Memory Buffer (HMB) feature takes advantage of the DMA capabilities of PCI Express to allow SSDs to use some of the DRAM attached to the CPU, instead of requiring the SSD to bring its own DRAM. Accessing host memory over PCIe is slower than accessing onboard DRAM, but still much faster than reading from flash. The HMB is not intended to be a full-sized replacement for the onboard DRAM that mainstream SSDs use. Instead, all SSDs using the HMB feature so far have targeted buffer sizes in the tens of megabytes. This is sufficient for the drive to cache mapping information for tens of gigabytes of flash, which is adequate for many consumer workloads. (Our ATSB Light test only touches 26GB of the drive, and only 8GB of the drive is accessed more than once.)
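The jump from "tens of megabytes" of buffer to "tens of gigabytes" of flash can be checked with a rough calculation. This is a sketch only: it assumes the buffer holds nothing but 4-byte mapping entries, each covering a 4KiB page, which real firmware may not do.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3


def flash_span_covered(buffer_bytes, page_bytes=4096, entry_bytes=4):
    """Bytes of flash whose mapping entries fit in a buffer of this size.

    Entry and page sizes are assumptions, not disclosed firmware details.
    """
    return (buffer_bytes // entry_bytes) * page_bytes


for mib in (16, 32, 64):
    span_gib = flash_span_covered(mib * MIB) / GIB
    print(f"{mib} MiB buffer maps about {span_gib:.0f} GiB of flash")
```

Under these assumptions each megabyte of host memory maps roughly a gigabyte of flash, so a buffer in the tens of megabytes covers a working set like the 26GB touched by the ATSB Light test.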

Caching is of course one of the most famously difficult problems in computer science and none of the SSD controller vendors are eager to share exactly how their HMB-enabled controllers and firmware use the host DRAM they are given, but it's safe to assume the caching strategies focus on retaining the most recently and heavily used mapping information. Areas of the drive that are accessed repeatedly will have read latencies similar to that of mainstream drives, while data that hasn't been touched in a while will be accessed with performance resembling that of traditional DRAMless SSDs.
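Since no vendor documents its policy, the following is only a guess at the general shape: a least-recently-used cache of mapping entries, with the full table standing in for what lives in NAND. The class and its names are hypothetical, and real firmware almost certainly caches larger chunks of the table and weighs frequency as well as recency.

```python
from collections import OrderedDict


class MappingCache:
    """Illustrative LRU cache of logical-page -> physical-page entries."""

    def __init__(self, capacity_entries, flash_table):
        self.capacity = capacity_entries
        self.flash_table = flash_table  # stands in for the full table in NAND
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def lookup(self, lpn):
        if lpn in self.entries:
            self.entries.move_to_end(lpn)  # mark as most recently used
            self.hits += 1
            return self.entries[lpn]
        # Miss: a DRAMless drive pays an extra NAND read to fetch the entry.
        self.misses += 1
        ppn = self.flash_table[lpn]
        self.entries[lpn] = ppn
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict least recently used
        return ppn


table = {lpn: lpn + 1000 for lpn in range(8)}
cache = MappingCache(capacity_entries=2, flash_table=table)
for lpn in [0, 1, 0, 2, 1]:
    cache.lookup(lpn)
print(cache.hits, cache.misses)  # 1 4
```

Repeated accesses to a small region stay in the cache and avoid the extra NAND read, while a scan over cold data misses on nearly every lookup.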

SSD controllers do have some memory built in to the controller itself, but usually not enough to allow a significant portion of NAND mapping tables to be cached. For example, the Marvell 88SS1093 Eldora high-end NVMe SSD controller has numerous on-chip buffers with capacities in the kilobyte range and aggregate capacity of less than 1MB. Some SSD vendors have hinted that their controllers have significantly more on-board memory—Western Digital says this is why their SN520 NVMe SSD doesn't use HMB, but they declined to say how much memory is on that controller. We've also seen some other drives in recent years that don't fall clearly into the DRAMless category or the 1GB per TB ratio. The Toshiba OCZ VX500 uses a 256MB DRAM part for the 1TB model, but the smaller capacity drives rely just on the memory built in to the controller (and of course, Toshiba didn't disclose the details of that controller architecture).

The Toshiba RC100 requests a block of 38MB of host DRAM from the operating system. The OS could provide more or less than the drive's preferred amount, and if the RC100 gets less than 10MB it will give up on trying to use HMB at all. Both the Linux and Windows NVMe drivers expose some settings for the HMB feature, allowing us to test the RC100 with HMB enabled and disabled. In theory, we could also test with varying amounts of host memory allocated to the SSD, but that would be a fairly time-consuming exercise and would not reflect any real-world use case, because the driver settings in question are obscure and not worth changing from their defaults.
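The size negotiation described above can be sketched as a small function. This is a simplified model rather than actual driver code: `host_limit_mb` is a hypothetical stand-in for whatever cap the OS driver applies, and the sketch never grants more than the drive's preferred size even though an OS could.

```python
def negotiate_hmb_mb(preferred_mb, minimum_mb, host_limit_mb):
    """Return the buffer size granted in MB, or 0 if HMB stays disabled.

    Simplified model: the host offers up to host_limit_mb (a hypothetical
    setting), and the drive declines anything below its minimum.
    """
    granted = min(preferred_mb, host_limit_mb)
    if granted < minimum_mb:
        return 0
    return granted


# RC100 figures from the text: 38MB preferred, 10MB minimum.
print(negotiate_hmb_mb(38, 10, 128))  # 38
print(negotiate_hmb_mb(38, 10, 16))   # 16
print(negotiate_hmb_mb(38, 10, 8))    # 0
```

Setting the host limit to zero in this model corresponds to testing the drive with HMB disabled.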

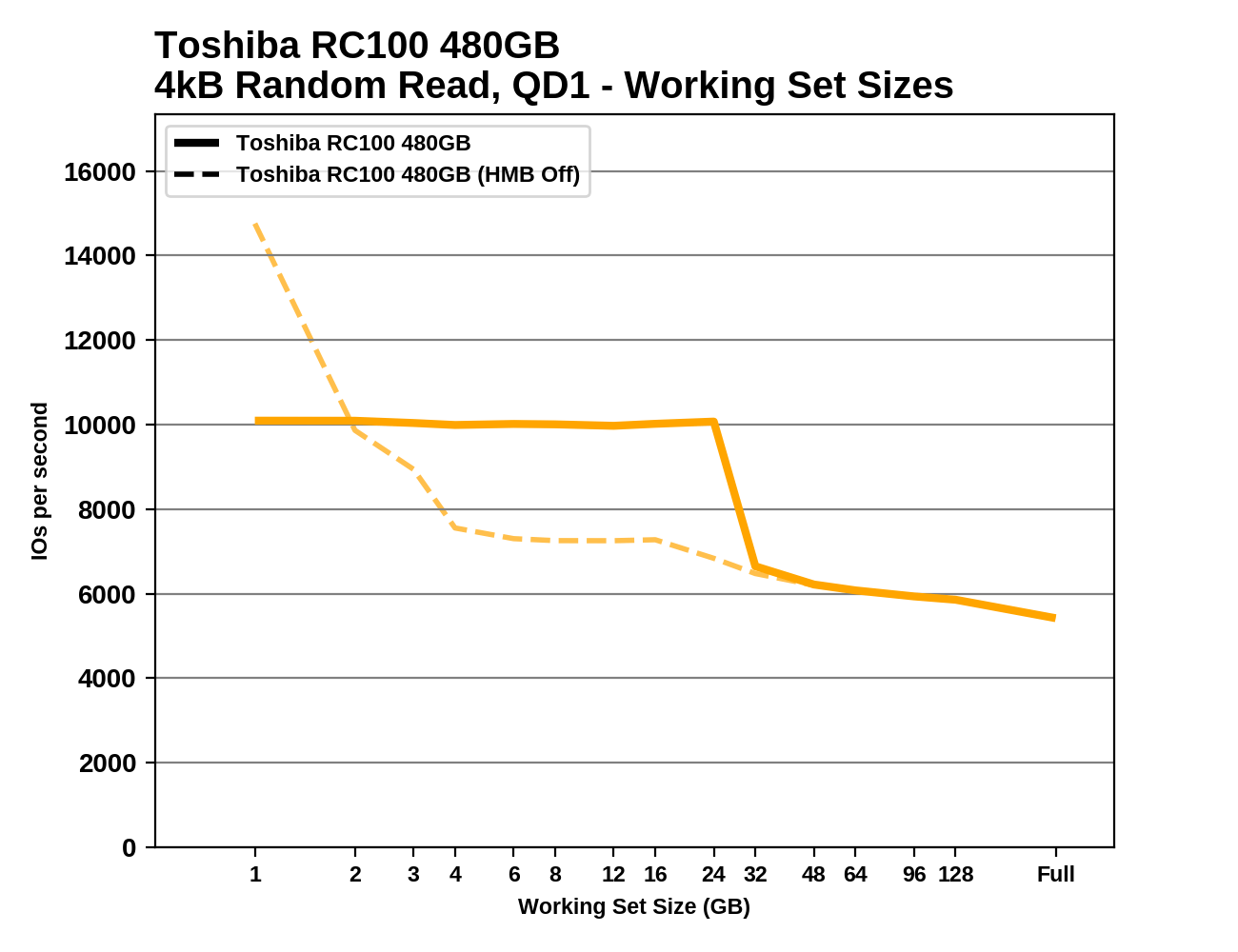

Working Set Size

We can see the effects of the HMB cache quite clearly by measuring random read performance while increasing the test's working set—the amount of data that's actively being accessed. When all of the random reads are coming from the same 1GB range, the RC100 performs much better than when the random reads span the entire drive. There's a sharp drop in performance when the working set approaches 32GB. When the RC100 is tested with HMB off, performance is just as good for a 1GB working set (and actually substantially better on the 480GB model), but larger working sets are almost as slow as the full-span random reads. It looks like the RC100's controller may have about 1MB of built-in memory that is much faster than accessing host DRAM over the PCIe link.
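A toy model shows why a cliff is expected once the working set outgrows the cached mapping. It assumes uniformly random reads, a cache that can map a fixed span of flash (32GB here, chosen only to match where the drop was observed), and made-up latencies of 100µs per NAND read and 1µs per cached lookup. None of these are measured RC100 figures.

```python
def hit_rate(working_set_gb, cached_span_gb):
    """Fraction of uniform random reads whose mapping entry is cached."""
    if working_set_gb <= cached_span_gb:
        return 1.0
    return cached_span_gb / working_set_gb


def mean_read_latency_us(working_set_gb, cached_span_gb,
                         nand_read_us=100.0, lookup_us=1.0):
    """Average read latency under the assumed (hypothetical) timings."""
    h = hit_rate(working_set_gb, cached_span_gb)
    hit_cost = lookup_us + nand_read_us  # cached lookup, then the data read
    miss_cost = 2 * nand_read_us         # mapping read from NAND, then data
    return h * hit_cost + (1 - h) * miss_cost


for ws in (1, 8, 32, 64, 128, 240):
    print(f"{ws:>3} GB working set: {mean_read_latency_us(ws, 32):.1f} us")
```

The model stays flat up to the cached span and then climbs toward the doubled latency of a fully DRAMless read, which is the general shape of the measured curves.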

Most mainstream SSDs offer nearly the same random read performance regardless of the working set size, though performance through this test varies somewhat due to other factors (e.g., thermal throttling). The drives using the Phison E7 and E8 NVMe controllers are a notable exception, with significant performance falloff as the working set grows, despite these drives being equipped with ample onboard DRAM.

62 Comments

Samus - Thursday, June 14, 2018 - link

I didn’t consider it either. The WD Black hit the sweet spot for me, picked the 512GB up on sale for $150...

Ryan Smith - Thursday, June 14, 2018 - link

"My issue with Anandtech was the sole posting of the 970 EVO review and no 970 PRO review now for over 7 weeks."

On the hardware side of matters, Samsung sampled us the 970 EVO at launch. They did not sample us the 970 PRO at that time. So that greatly impacts what gets reviewed and when.

XabanakFanatik - Thursday, June 14, 2018 - link

I'm very confused at why Samsung would have sampled several other review sites with both drives (obvious by the reviews of both being posted together before launch) but have skipped on sampling Anandtech at the same time.

Maybe it was a mistake? Maybe it was intentional? Maybe the 970 Pro would not have shined as well in the thorough testing you do here?

In any case, I need to apologize. Sorry, Billy, for jumping you about it. Thanks for an answer.

Ryan Smith - Friday, June 15, 2018 - link

Samsung essentially does random sampling. We got the EVO at two capacities instead of an EVO and a PRO.

melgross - Thursday, June 14, 2018 - link

Well, maybe that answers your question. If those other sites are inferior, then why would you care that they came out with early reviews?

The truth is that these drives will provide more than enough performance for most people, and that includes most people here, if they’re willing to admit it.

CheapSushi - Thursday, June 14, 2018 - link

Why are you so cranky? Seriously. Eat a snickers.

gglaw - Wednesday, June 20, 2018 - link

He had a completely legitimate request/concern. If historically AT and other big sites typically review the top 2 models of any given release at a time like previous generation EVO/EVO Pro, GTX 1070/1080, etc., and HE has an interest in the product even if he's part of the <5% who cares, a thorough review would still be very significant for a semi expensive purchase. Most of us have 0 intention of buying the vast, vast majority of the reviews we read - we just like to know how new products are performing. Just like Billy and many of us here, the Toshiba drive is interesting but very few of us have any intention of buying it.

Flagship products may only interest a very small percentage of the general public, but a much higher percentage of techies who follow hardware sites and even engage in the forums and comments. Most of us hardware enthusiasts buy plenty of things with almost no practical value. Anything beyond the AT light SSD testing is completely irrelevant to most home users yet we still care about the destroyer and heavy tests. I have the 850, 850 pro, 960 evo, 960 pro, and the cheapest per GB drive ever released (The Micron 3D TLC 2TB drive that goes on sale for $270 range every other week and barely above $200 with the father's day ebay coupon). My LAN room has all these drives running almost side by side and sadly no one including myself can even tell which drive is in which gaming station. Yet, I have no issues with paying 400% more per GB on one drive vs another that I literally can't tell the difference in when using the computers. The meaningless but insane numbers I see on CrystalMark somehow gives me some satisfaction.

Without Ryan clarifying the issue, most of us just assume products are sampled together based on how the reviews have come out in the past. Knowing this was different than their typical review pattern, maybe they should've just clarified it in the intro. Bashing them was unnecessary, but questioning why they would omit a major flagship release is completely valid. Flagship reviews are very interesting whether or not we buy them. They're indicative of many things that trickle down or where a company is in their technological advancement compared to others. Just because there is minimal real world difference between the 850/pro, 960/pro, how do we know they didn't tweak the 970 pro more? If Nvidia's flagship destroys AMD's but their current midrange products are similar price/performance, there's a good chance the next midrange GTX card will be the midrange king (the 1060 comes out after 1080, the 1160 will come out after the 1180).

ptrinh1979 - Thursday, June 14, 2018 - link

I also find reviews like these to be refreshing. I prefer a variety of product reviews, not *just* the latest, greatest, and sometimes unattainable products. This review was very interesting to me because despite its flaws when the drive is full at lower capacities, its performance to price ratio makes it a contender for casual workloads. What was *really* useful for me was the price comparison chart at the end with the different capacities. I would use charts like that, and then cross reference the performance characteristics when I am recommending drive upgrades for clients who do not always have top dollars to spend, nor justify on an upgrade, yet not content to recommend typical upgrades, or corporate style upgrade recommendations.

jabber - Saturday, June 16, 2018 - link

I like the reviews of the cheaper but still 'decent' gear as it works for my customers who don't want screaming top end stuff but want something better than spinning rust. Decent budget SSD options are important.

ChickaBoom4768 - Saturday, June 16, 2018 - link

Totally agree with you. Such low priced technology has a real potential to disrupt the existing market instead of another $600 Intel/Samsung drive. In this case of course the drive is a sad disappointment but it was a good review.