Image Quality Analysis Fall 2003: A Glance Through the Looking Glass

by Derek Wilson on December 10, 2003 11:14 PM EST - Posted in GPUs

Antialiasing

Straight lines and edges that are neither horizontal nor vertical have to be approximated with many short horizontal or vertical runs of pixels, because the pixels on a screen are laid out in a grid. The resulting problem is called aliasing, and it can be seen as jagged edges in an image. To combat this, supersample antialiasing was created. The principle is that each scene is rendered at a higher resolution than the display; this larger image is then filtered and downsampled to the target resolution. Of course, generating the larger image is very expensive, and multisample antialiasing was devised to reduce the performance hit.
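To make the supersampling idea concrete, here is a minimal sketch in Python. It assumes a simple box filter (a plain average over each block of the larger image) and a made-up test scene, a diagonal edge; the function names `render`, `downsample`, and `below_diagonal` are ours for illustration and say nothing about how any real card implements the filter.

```python
def render(width, height, inside):
    """Point-sample a scene: 1.0 where inside(u, v) is true at the pixel centre, else 0.0."""
    return [[1.0 if inside((x + 0.5) / width, (y + 0.5) / height) else 0.0
             for x in range(width)]
            for y in range(height)]


def downsample(image, factor):
    """Box filter (an assumption): average each factor x factor block into one pixel."""
    out_h = len(image) // factor
    out_w = len(image[0]) // factor
    out = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            block = [image[y * factor + j][x * factor + i]
                     for j in range(factor) for i in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def below_diagonal(u, v):
    """Hypothetical test scene: everything below the main diagonal is covered."""
    return v > u


# Rendered directly at 4x4, every pixel is fully on or fully off (jagged edge).
aliased = render(4, 4, below_diagonal)

# Rendered at 16x16 and reduced by 4, pixels on the edge get in-between values.
smooth = downsample(render(16, 16, below_diagonal), 4)

for row in smooth:
    print(row)
```

The larger render costs sixteen times as many samples as the direct one in this sketch, which is the expense the article refers to.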

Multisample antialiasing works on the same principle as supersampling without having to generate the image at a larger resolution. The idea is to generate multiple, slightly shifted versions of each pixel at the same time for use in the filtering process. The level of antialiasing refers to the number of these subsamples generated to calculate the final color value of each pixel.
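The link between subsample count and the final pixel value can be sketched as follows. This assumes the filtering step is an equal-weight average of the subsample colors, which is a simplification on our part; `resolve` and `levels` are illustrative names, not part of any driver or API.

```python
WHITE = (1.0, 1.0, 1.0)
BLACK = (0.0, 0.0, 0.0)


def resolve(subsample_colors):
    """Average the subsample colors (RGB tuples) into one final pixel color."""
    n = len(subsample_colors)
    return tuple(sum(c[ch] for c in subsample_colors) / n for ch in range(3))


def levels(n):
    """All gray values an edge pixel can take with n subsamples (white polygon on black)."""
    return sorted({resolve([WHITE] * k + [BLACK] * (n - k))[0] for k in range(n + 1)})


# A pixel on an edge where 3 of 4 subsamples land on the white polygon.
print(resolve([WHITE, WHITE, WHITE, BLACK]))

# More subsamples give finer steps between "fully outside" and "fully inside".
print(levels(2))
print(levels(4))
```

With two subsamples an edge pixel can only be black, half gray, or white; with four it gains two more intermediate steps, which is why a higher antialiasing level produces smoother edges.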

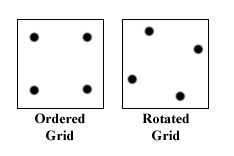

The differences in image quality come in when we look at which subsamples are generated for each pixel. Generating subsamples that form a square with horizontal and vertical edges within each pixel means we are using a method called ordered grid antialiasing. This doesn't lend itself to smoothing edges that are very close to horizontal or very close to vertical, because the subsamples line up in rows and columns and such an edge passes a whole row or column at nearly the same time. To address such edges, we can slightly rotate or skew the square, giving us the rotated grid antialiasing scheme.

The squares represent pixels while the dots within are multisample subsamples.
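The difference between the two layouts can be checked with a small sketch. The subsample positions below are illustrative 4x patterns that we picked for the example; they are not the actual patterns used by ATI or NVIDIA hardware. The test case is an exactly horizontal edge, the limiting case of the near-horizontal edges discussed above, swept from the top of one pixel to the bottom.

```python
# Subsample positions inside a unit pixel, as (x, y). Both patterns are assumptions.
ORDERED = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def coverage(offsets, edge_y):
    """Fraction of subsamples that lie below a horizontal edge at height edge_y."""
    hits = sum(1 for (_, y) in offsets if y > edge_y)
    return hits / len(offsets)


def distinct_levels(offsets, steps=100):
    """Coverage values seen as the edge sweeps from the top of the pixel to the bottom."""
    return sorted({coverage(offsets, i / steps) for i in range(steps + 1)})


print("ordered:", distinct_levels(ORDERED))
print("rotated:", distinct_levels(ROTATED))
```

In the ordered pattern the four subsamples share only two distinct heights, so the edge jumps between three coverage values. In the rotated pattern every subsample sits at its own height, so the same four subsamples yield five values and a smoother gradient along the edge.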

As it happens, ATI uses rotated grid antialiasing, while NVIDIA sticks with ordered grid. This creates a lot of room for variation between the cards' antialiased images.

35 Comments


nourdmrolNMT1 - Thursday, December 11, 2003 - link

i hate flame wars but, blackshrike....there is hardly any difference between the images. and nvidia used to be way behind in that area. so they have caught up, and are actually in some instances doing more work to get the image to look a little different, or maybe they render everything that should be there, while ati doesnt (halo 2)

MIKE

Icewind - Thursday, December 11, 2003 - link

I have no idea what you're bitching about Blackshrike, the UT2k3 pics look exactly the same to me. Perhaps you should go work for AT and run benchmarks how you want them done instead of whining like a damn 5 year old..sheesh.

Shinei - Thursday, December 11, 2003 - link

Maybe I'm going blind at only 17, but I couldn't tell a difference between nVidia's and ATI's AF, and I even had a hard time seeing the effects of the AA. I agree with AT's conclusion, it's very hard to tell which one is better, and it's especially hard to tell the difference when you're in the middle of a firefight; yes, it's nice to have 16xAF and 6xAA, but is it NECESSARY if it looks pretty at 3 frames per second? I'm thinking "No"; performance > quality, that's why quality is called a LUXURY and not a requirement.

Now I imagine that since I didn't hop up and down and screech "omg nvidia is cheeting ATI owns nvidia" like a howler monkey on LSD, I'll be called an nVidia fanboy and/or told that A) I'm blind, B) my monitor sucks, C) I'm color blind, and D) my head is up my biased ass. Did I meet all the basic insults from ATI fanboys, or are there some creative souls out there who can top that comprehensive list? ;)

nastyemu25 - Thursday, December 11, 2003 - link

cheer up emo kid

BlackShrike - Thursday, December 11, 2003 - link

For my first line I forget to say blurry textures on the nvidia card. Sry, I was frustrated at the article.

BlackShrike - Thursday, December 11, 2003 - link

Argh, this article concluded suddenly and without concluding anything. Not to mention, I saw definite blurry textures in UT 2003, and TRAOD. Not to mention the use of D3D AF Tester seemed to imply a major problem with one or the other hardware but they didn't use it at different levels of AF. I mean I only use 4-8 AF, I'd like to see the difference.

AND ANOTHER THING. OKAY THE TWO ARE ABOUT THE SAME IN AF BUT IN AA ATI USUALLY WINS. SO, IF YOU CAN'T CONCLUDE ANYTHING, GIVE US A PERFORMANCE CONCLUSION, like which runs better with AA or AF? Which creates the best with both settings enabled?

Oh and AT. Remember back to the very first ATI 9700 Pro, you did tests with 6x AA and 16 AF. DO IT AGAIN. I want to see which is faster and better quality when their settings are absolutely maxed out. Because I prefer playing 1024*768 at 6x AA and 16 AF than 1600*1200 at 4x AA 8x AF because I have a small monitor.

I am VERY disappointed in this article. You say Nvidia has cleaned up their act, but you don't prove anything conclusive as to why. You say they are similar but don't say why. The D3D AF Tester was totally different for the different levels. WHAT DOES THIS MEAN? Come on Anand clean up this article, it's very poorly designed and concluded and is not at all like your other GPU articles.

retrospooty - Thursday, December 11, 2003 - link

Well a Sony G500 is pretty good in my book =)

Hanners - Thursday, December 11, 2003 - link

Not a bad article per se - Shame about the mistakes in the filtering section and the massive jumping to conclusions regarding Halo.

gordon151 - Thursday, December 11, 2003 - link

I'm with #6 and the sucky monitor theory :P.

Icewind - Thursday, December 11, 2003 - link

Or your monitor sucks #5

ATI wins either way