Intel Optane SSD DC P4800X 750GB Hands-On Review

by Billy Tallis on November 9, 2017 12:00 PM EST

Single-Threaded Performance

This next batch of tests measures how much performance can be driven by a single thread performing asynchronous I/O to produce queue depths ranging from 1 to 64. With these fast SSDs, the CPU can become the bottleneck at high queue depths and clock speed can have a major impact on IOPS.

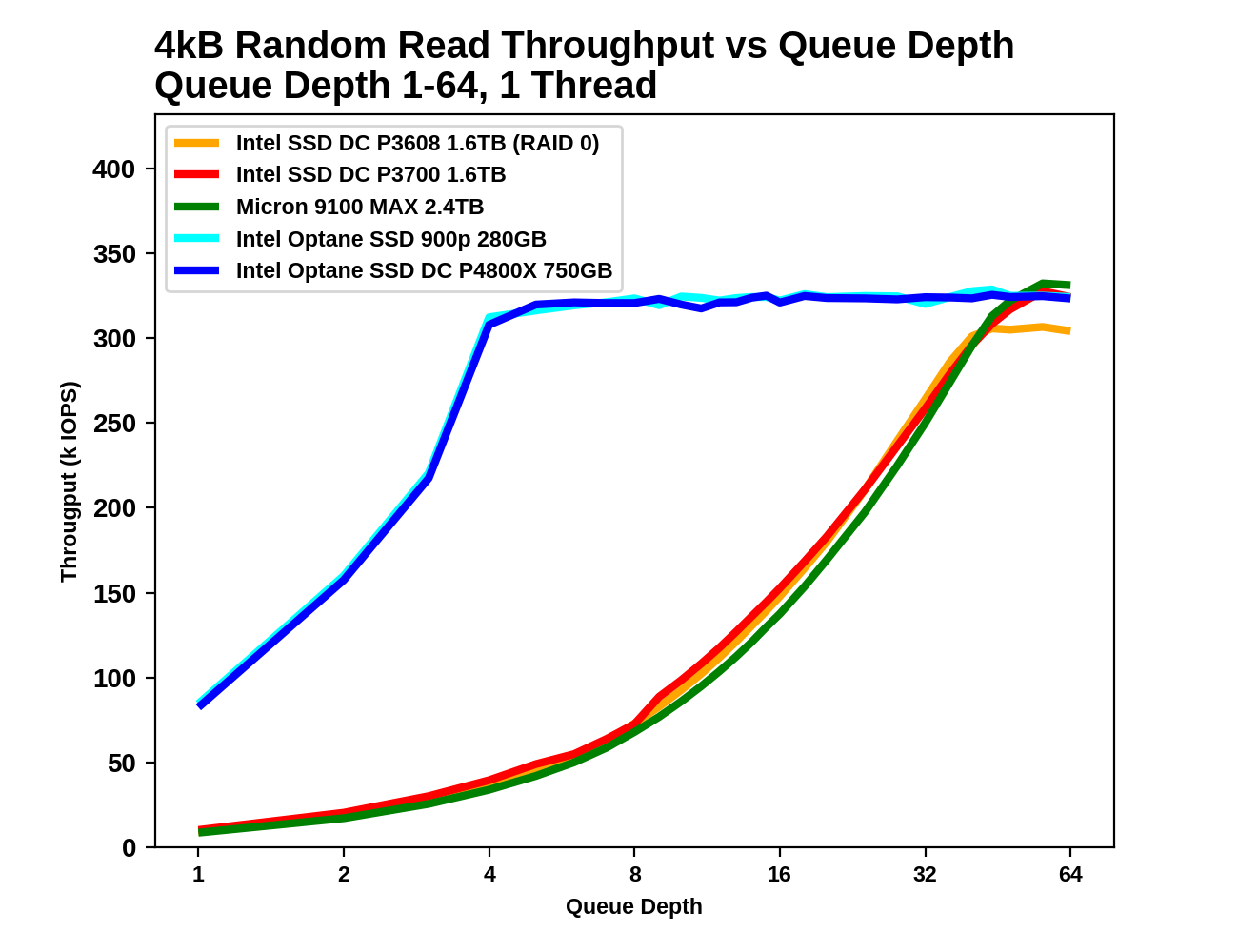

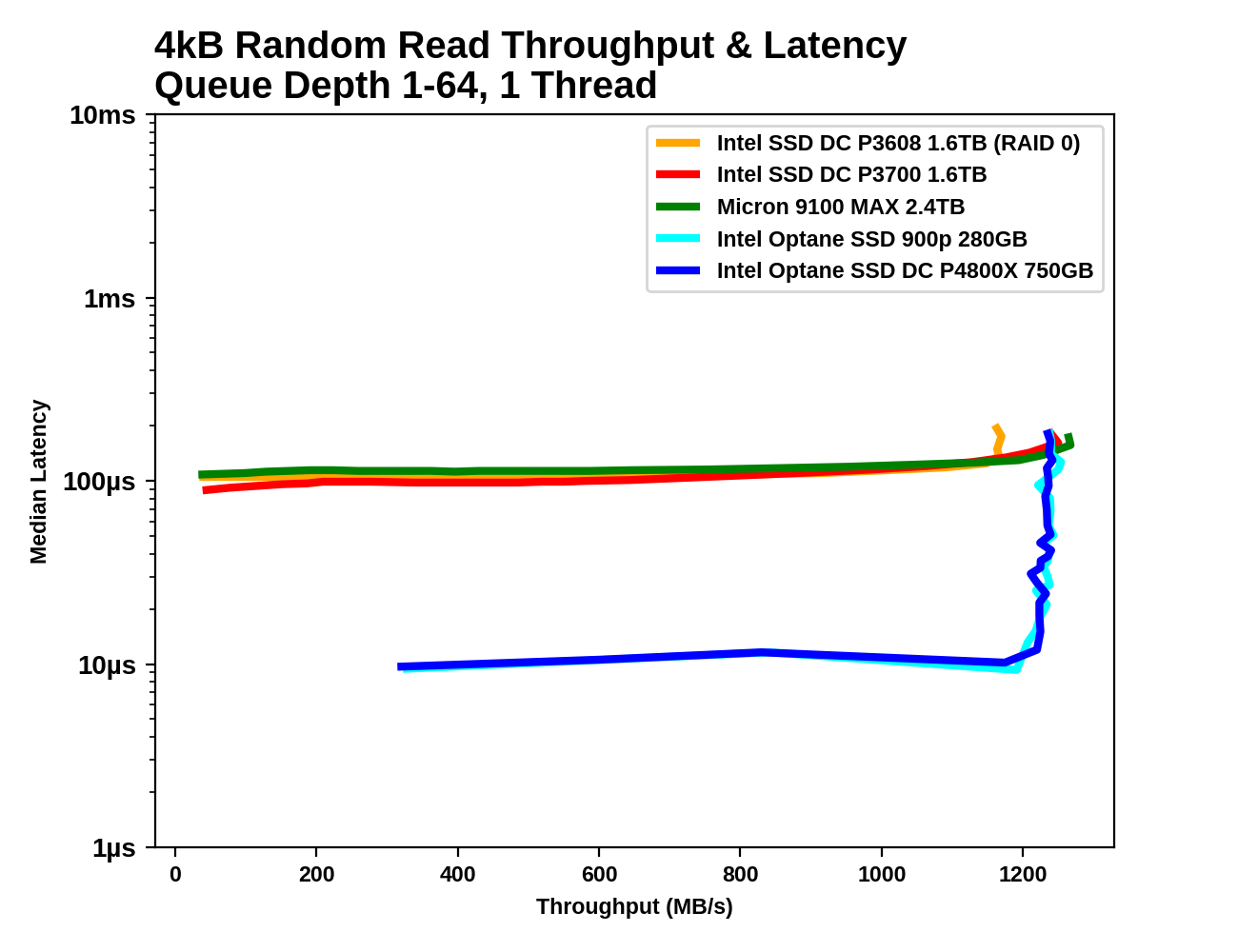

4kB Random Reads

With a single thread issuing asynchronous requests, all of the SSDs top out around 1.2GB/s for random reads. What separates them is how high a queue depth is necessary to reach this level of performance, and what their latency is when they first reach saturation.
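To put the 1.2GB/s ceiling in terms of operations, the short sketch below converts it to IOPS and to the average time a single thread has available per request. It assumes 4kB means 4096 bytes and 1GB means 10^9 bytes; the review does not spell out either convention, and the function names are illustrative only.

```python
# Assumptions (not stated in the review): 4kB = 4096 bytes, 1 GB = 10**9 bytes.
BLOCK_BYTES = 4096
THROUGHPUT_BYTES_PER_S = 1.2e9  # the ~1.2GB/s single-thread ceiling quoted above


def iops_from_throughput(bytes_per_s: float, block_bytes: int) -> float:
    """I/O operations per second needed to sustain a given byte rate."""
    return bytes_per_s / block_bytes


def cpu_budget_us(iops: float) -> float:
    """Average time in microseconds one thread can spend per I/O at that rate."""
    return 1e6 / iops


iops = iops_from_throughput(THROUGHPUT_BYTES_PER_S, BLOCK_BYTES)
print(f"{iops:,.0f} IOPS, {cpu_budget_us(iops):.2f} us per I/O")
```

Under these assumptions the ceiling works out to roughly 293k IOPS, which leaves the submitting thread only a few microseconds per request. That is consistent with the CPU, rather than the drive, being the limit.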

Charts: throughput (IOPS, MB/s) and latency (mean, median, 99th percentile, 99.999th percentile).

For the Optane SSDs, the queue depth only needs to reach 4-6 in order to be near the highest attainable random read performance, and further increases in queue depth only add to the latency without improving throughput. The flash-based SSDs require queue depths well in excess of 32. Even long after the Optane SSDs have reached saturation and latency has begun to climb, they continue to offer better QoS than the flash SSDs.
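Why extra queue depth only adds latency once throughput is capped follows from Little's law: requests in flight equal throughput times mean latency. The sketch below is an idealized steady-state model, not the review's measured data. The `SATURATED_IOPS` value is an assumption derived from about 1.2GB/s of 4096-byte reads.

```python
def mean_latency_us(queue_depth: int, iops: float) -> float:
    """Little's law rearranged: mean latency = requests in flight / throughput."""
    return queue_depth / iops * 1e6


# Assumption: ~1.2GB/s of 4096-byte reads, i.e. roughly 293k IOPS at saturation.
SATURATED_IOPS = 293_000

for qd in (4, 8, 16, 32, 64):
    print(f"QD{qd:<2}: {mean_latency_us(qd, SATURATED_IOPS):6.1f} us mean latency")
```

With throughput fixed, mean latency scales linearly with queue depth in this model, so going from QD4 to QD64 multiplies it by 16 without completing any more requests per second.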

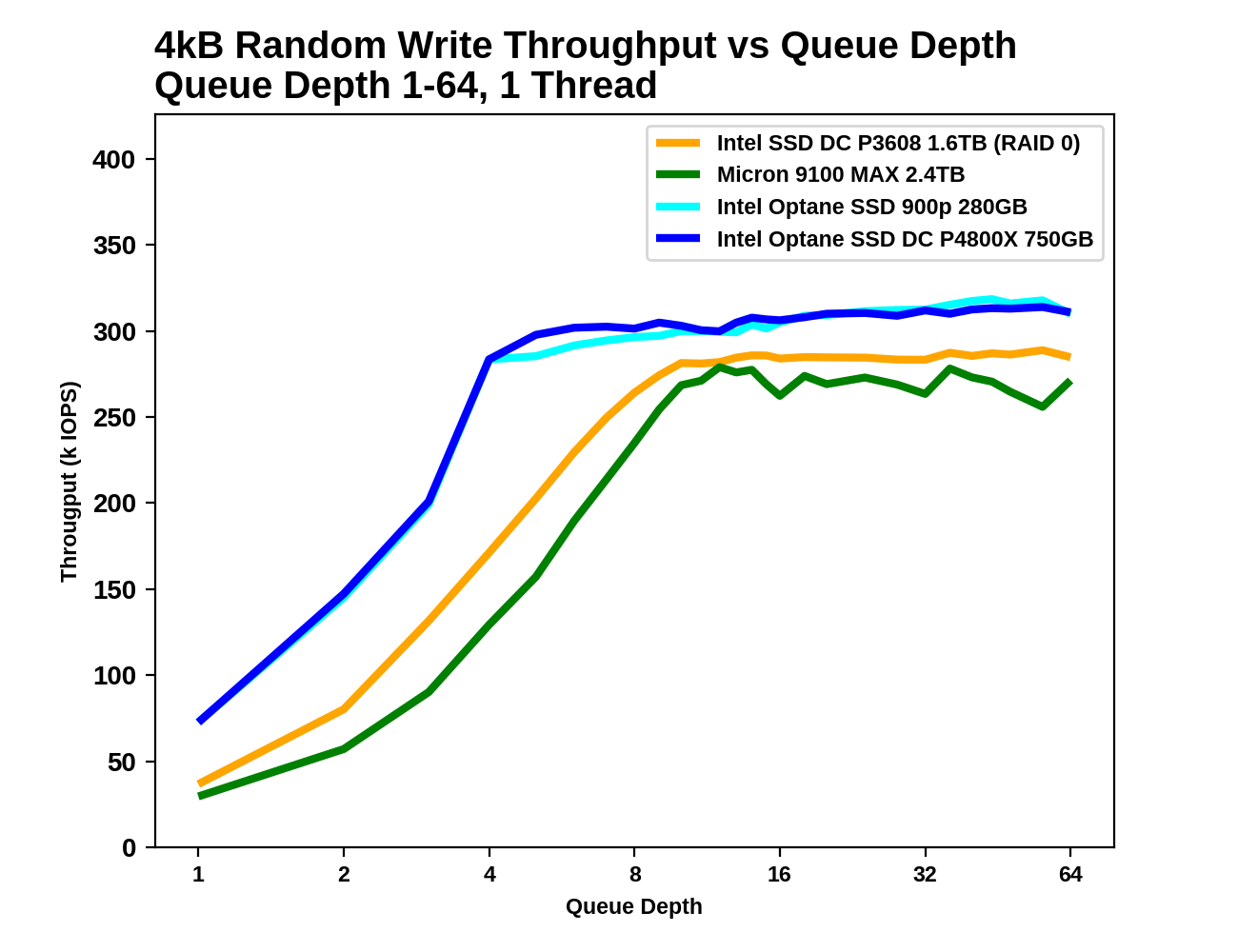

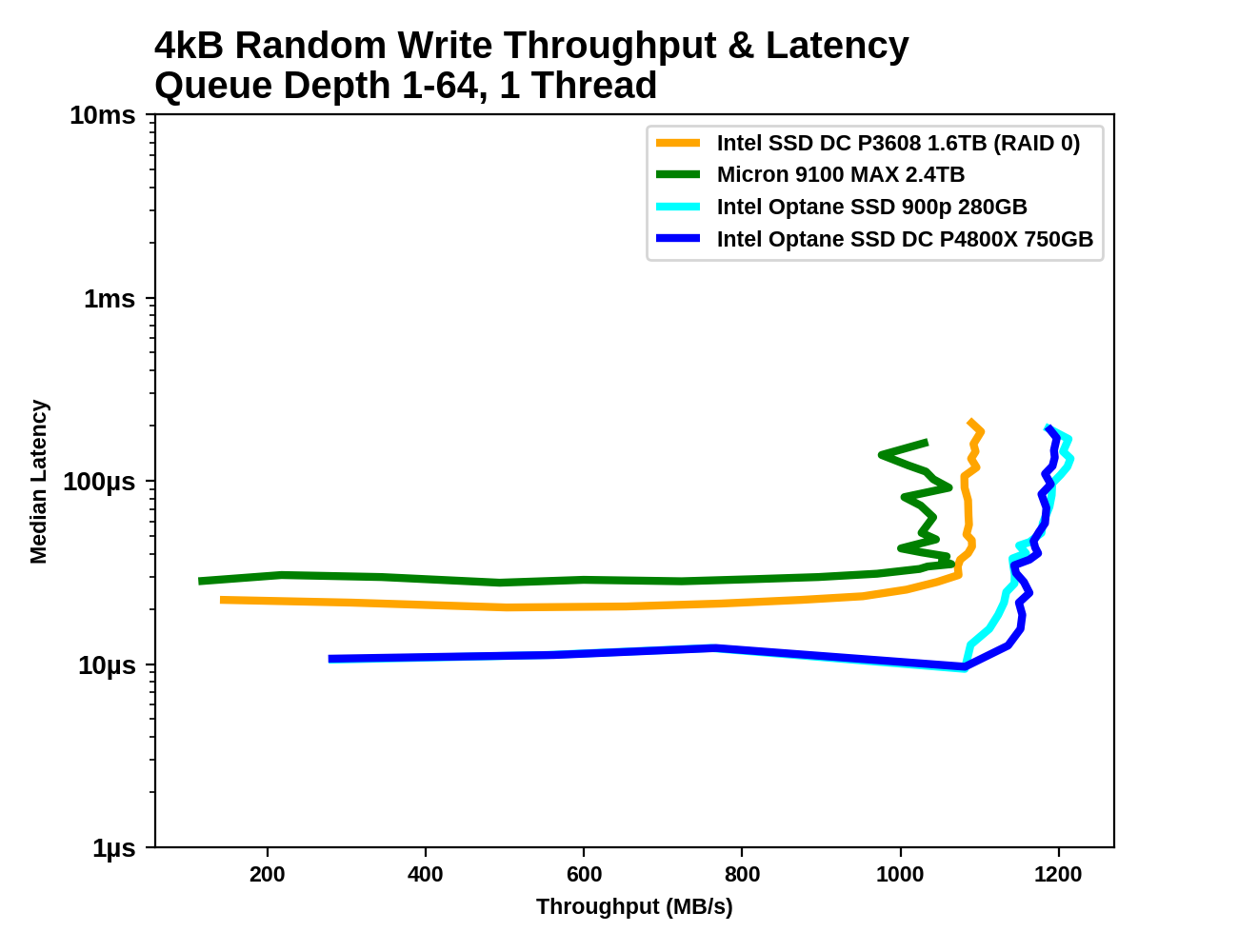

4kB Random Writes

The Optane SSDs offer the best single-threaded random write performance, but the margins are much smaller than for random reads, thanks to the write caches on the flash-based SSDs. The flash SSDs have random write latencies that are only 2-3x higher than the Optane SSD's latency, and the throughput advantage of the Optane SSD at saturation is less than 20%.

Charts: throughput (IOPS, MB/s) and latency (mean, median, 99th percentile, 99.999th percentile).

The Optane SSDs saturate around QD4, where the CPU becomes the bottleneck, and the flash-based SSDs follow suit between QD8 and QD16. Once all the drives are saturated at about the same throughput, the Optane SSDs offer far more consistent performance.

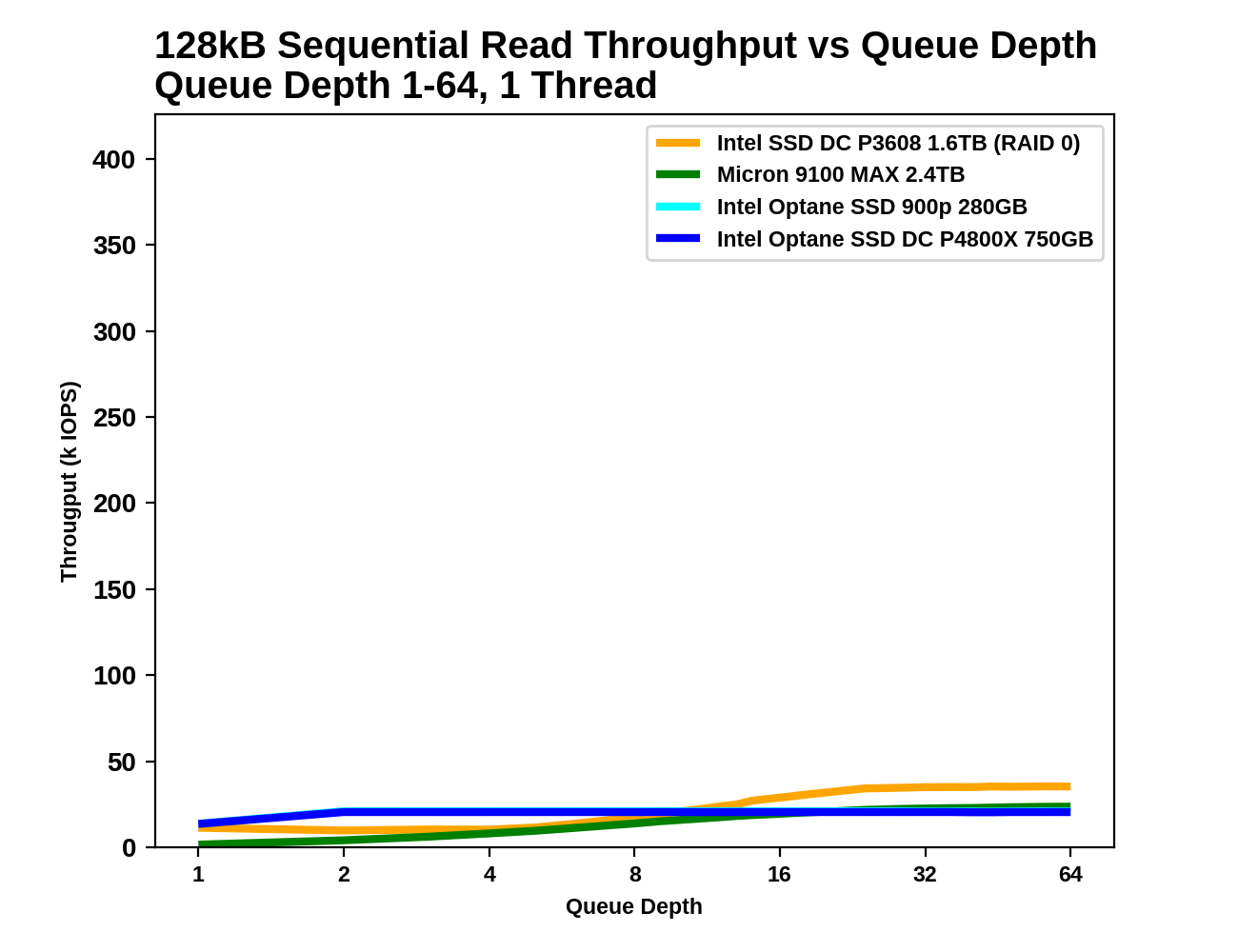

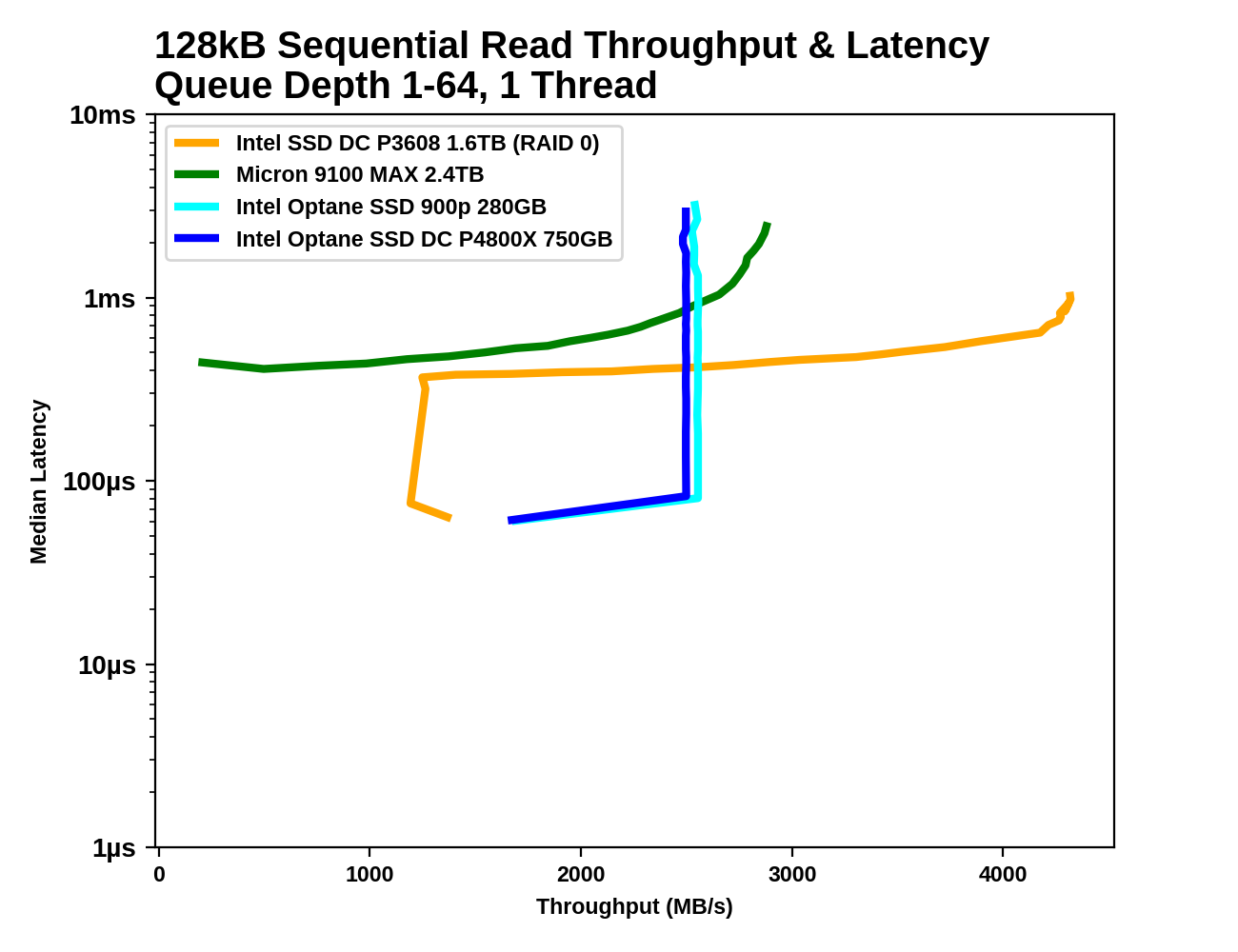

128kB Sequential Reads

With the large 128kB block size, the sequential read test doesn't hit a CPU/IOPS bottleneck like the random read test above. The Optane SSDs saturate at the rated throughput of about 2.4-2.5GB/s while the Micron 9100 MAX and the Intel P3608 scale to higher throughput.
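A rough calculation shows why the larger block size sidesteps the CPU/IOPS limit. The sketch below assumes 128kB means 131072 bytes, 4kB means 4096 bytes, and 1GB means 10^9 bytes. It uses the lower 2.4GB/s figure quoted above and the roughly 1.2GB/s random read ceiling from earlier in the page.

```python
def required_iops(bytes_per_s: float, block_bytes: int) -> float:
    """Operations per second needed to move bytes_per_s in block_bytes chunks."""
    return bytes_per_s / block_bytes


# Assumptions: binary block sizes, decimal gigabytes.
sequential_iops = required_iops(2.4e9, 128 * 1024)  # 128kB sequential reads
random_iops = required_iops(1.2e9, 4 * 1024)        # 4kB random read ceiling

print(f"128kB sequential: {sequential_iops:,.0f} IOPS")
print(f"4kB random:       {random_iops:,.0f} IOPS")
print(f"ratio:            {random_iops / sequential_iops:.0f}x")
```

Under these assumptions the sequential test needs only about 18k operations per second, far fewer than the single thread sustained in the random read test, so the drive's bandwidth becomes the limit well before the CPU does.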

Charts: throughput (IOPS, MB/s) and latency (mean, median, 99th percentile, 99.999th percentile).

The Optane SSDs reach their full sequential read speed at QD2, and the flash-based SSDs don't catch up until well after QD8. The 99th and 99.999th percentile latencies of the Optane SSDs are more than an order of magnitude lower when the drives are operating at their respective saturation points.

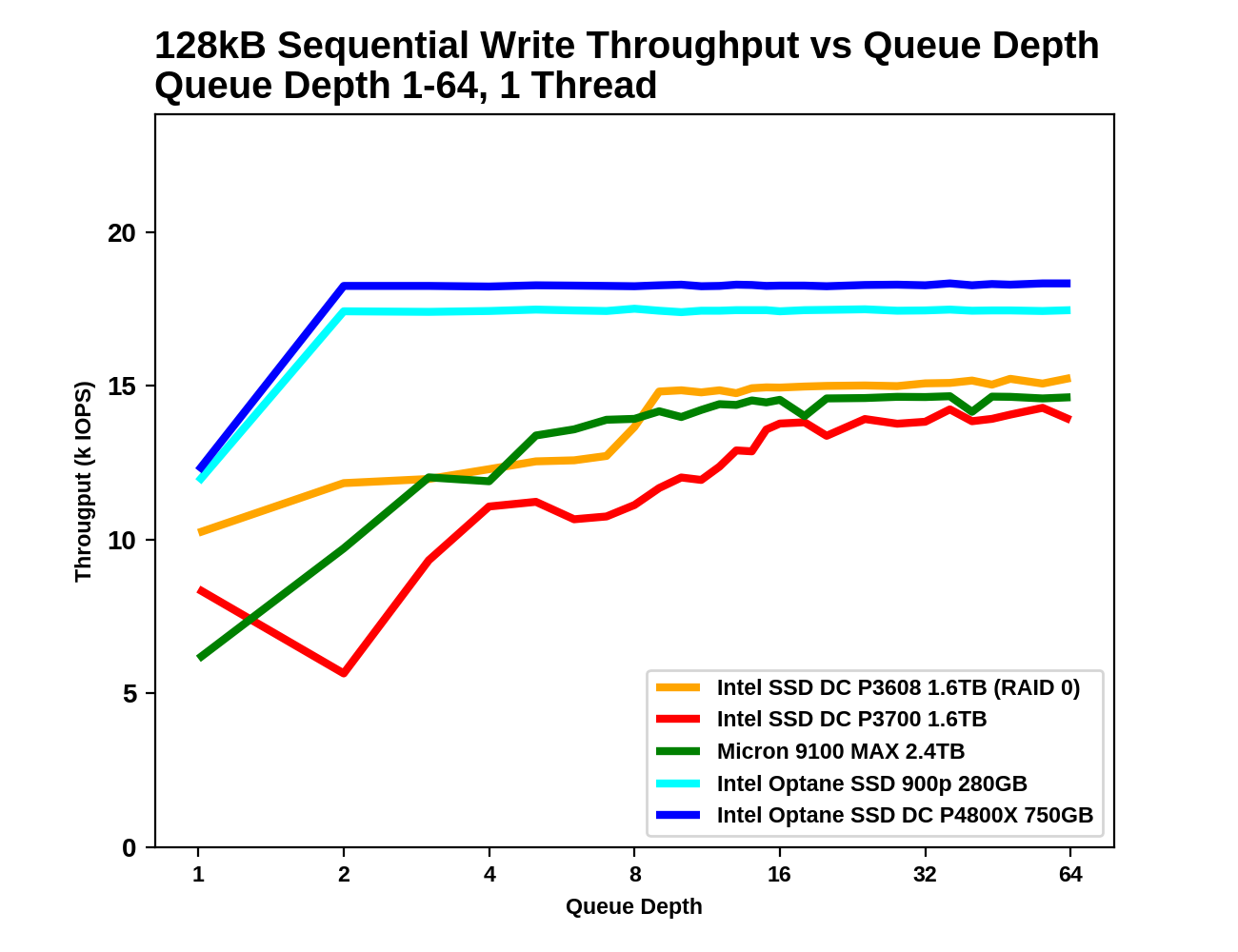

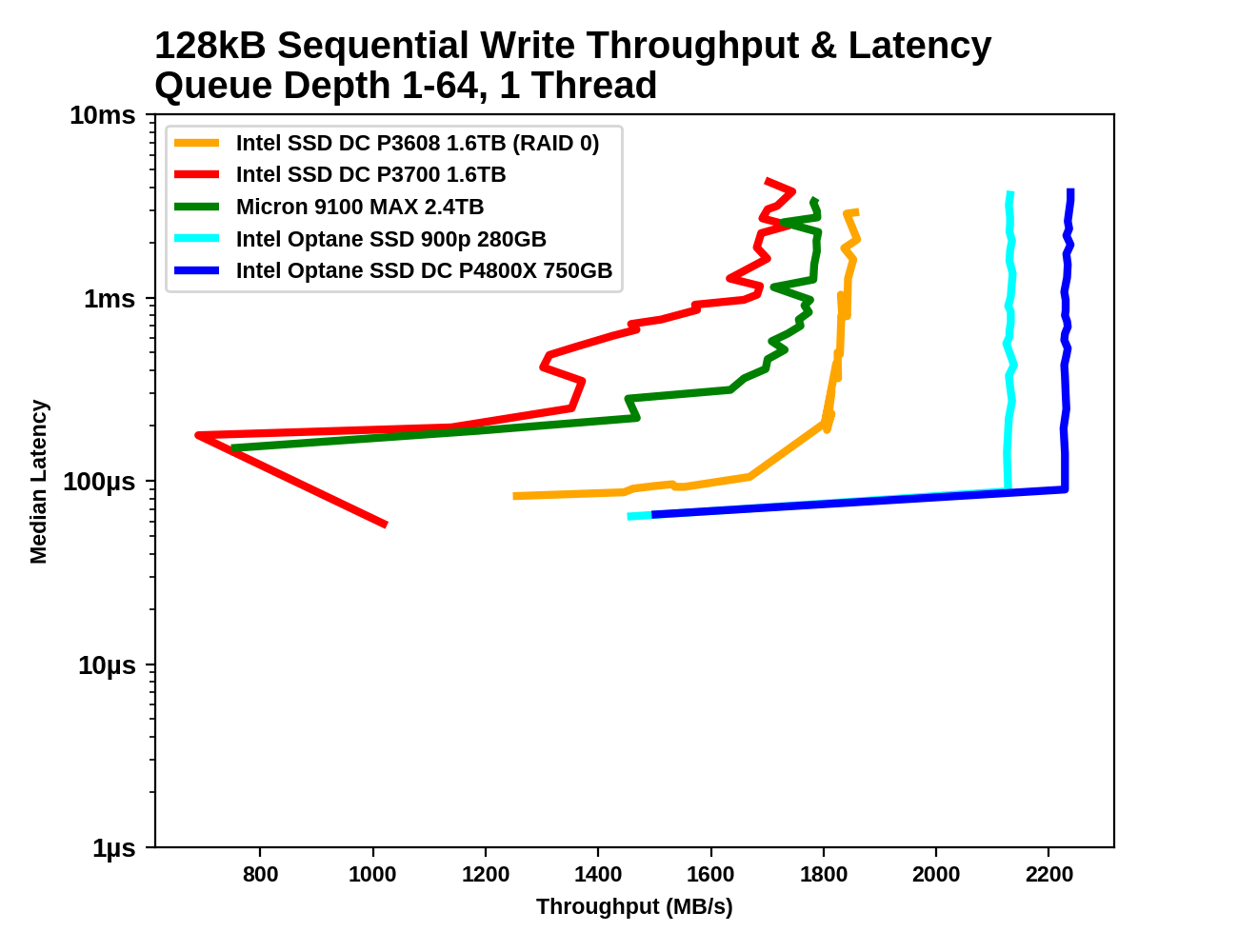

128kB Sequential Writes

Write caches again allow the flash-based SSDs to approach the write latency of the Optane SSDs, albeit at lower throughput. The Optane SSDs quickly exceed their specified 2GB/s sequential write throughput while the flash-based SSDs have to sacrifice low latency in order to reach high throughput.

Charts: throughput (IOPS, MB/s) and latency (mean, median, 99th percentile, 99.999th percentile).

As with sequential reads, the Optane SSDs reach saturation at a mere QD2, while the flash-based SSDs need until around QD8 to scale up to full throughput. By the time the flash-based SSDs reach their maximum speed, their latency has at least doubled.

58 Comments


Lord of the Bored - Thursday, November 9, 2017

Me too. ddriver is most of why I read the comments.

mkaibear - Friday, November 10, 2017

He is always good for a giggle. I suppose he's busy directing hard drive manufacturers to make special hard drive platters for him solely out of hand-gathered sand from the Sahara. Or something.

Still it's a shame to miss the laughs. It's always the second thing I do on SSD articles - first read the conclusion, then go and see what deedee has said. Ah well.

extide - Friday, November 10, 2017

Please.. don't jinx us!

rocky12345 - Thursday, November 9, 2017

Interesting drive to say the least. Also a well written review thanks.

PeachNCream - Thursday, November 9, 2017

30 DWPD over the course of 5 years turns into a really large amount of data when you're talking about 750GB of capacity. Isn't the typical endurance rating more like 0.3 DWPD for enterprise solid state?

So this thing about Optane on DIMMs is really interesting to me. Is the plan for it to replace RAM and storage all at once or to act as a cache of some sort between faster DRAM and conventional solid state? Even with the endurance it's offering right now, it seems like it would need to be more durable still for it to replace RAM.

Oh (sorry case of shinies) could this be like a DIMM behind HBM on the CPU package where HBM does more of the write heavy stuff and then Optane lives between HBM and SSD or HDD storage? Has Intel let much out of the bag about this sorta thing?

Billy Tallis - Thursday, November 9, 2017

Enterprise SSDs are usually sorted into two or three endurance tiers. Drives meant for mostly-read workloads typically have endurance ratings of 0.3 DWPD. High-endurance drives for write-intensive uses are usually 10, 25 or 30 DWPD, but the ratings of high-endurance drives have decayed somewhat in recent years as the market realized few applications really need that much endurance.

lazarpandar - Thursday, November 9, 2017

Can this be used to supplement addressable system memory? I remember Intel talking about that during the product launch.

Billy Tallis - Thursday, November 9, 2017

Yes. It makes for a great swap device, especially with a recent Linux kernel. Alternatively, Intel will sell it bundled with a hypervisor that presents the guest OS with a pool of memory equal in size to the system's DRAM plus about 85% of the Optane drive's capacity. The hypervisor manages memory placement, so from the guest OS's perspective the memory is a homogeneous pool, not x GB of DRAM and y GB of Optane.

tuxRoller - Friday, November 10, 2017

It's a bit odd Intel would go for the hypervisor solution since the kernel can handle tiered pmem and it's in a better position to know where to place data.

I suppose it's useful because it's cross-platform?

xype - Friday, November 10, 2017

I’d guess a hypervisor solution would also allow any critical fixes to be propagated faster/easier than having to go through a 3rd party (kernel) provider?