The AMD Radeon RX Vega 64 & RX Vega 56 Review: Vega Burning Bright

by Ryan Smith & Nate Oh on August 14, 2017 9:00 AM EST

Dawn of War III

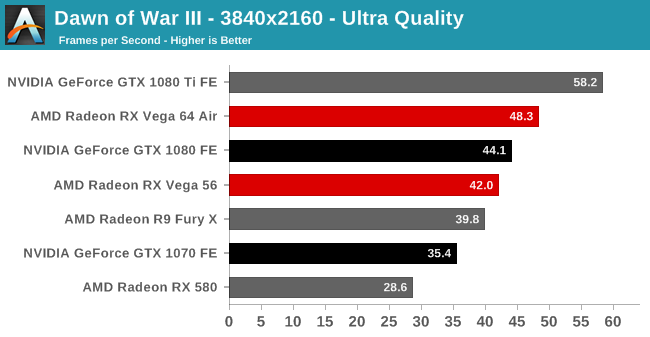

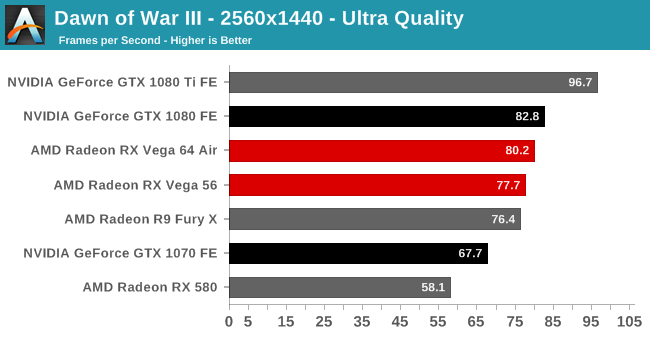

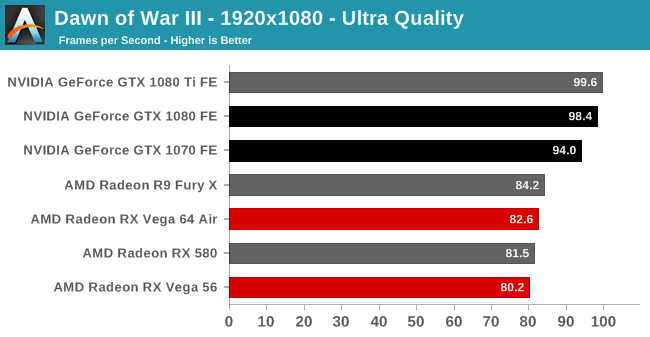

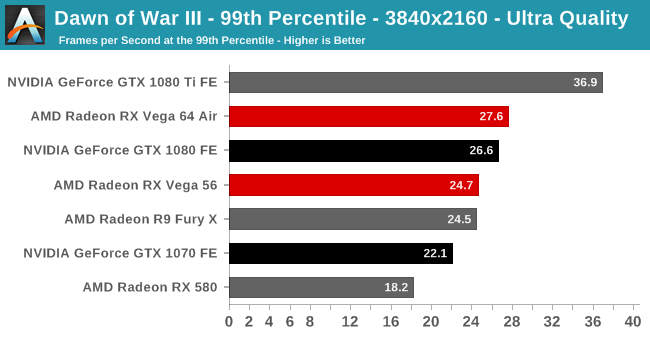

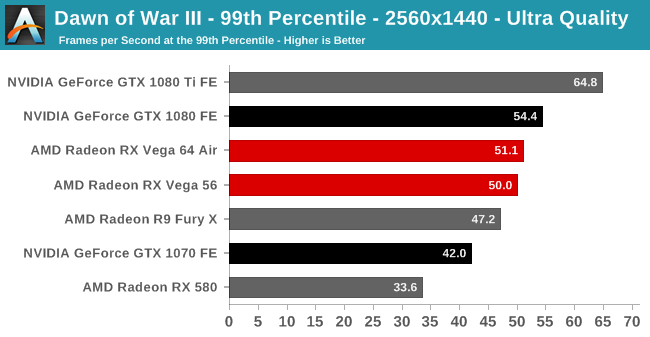

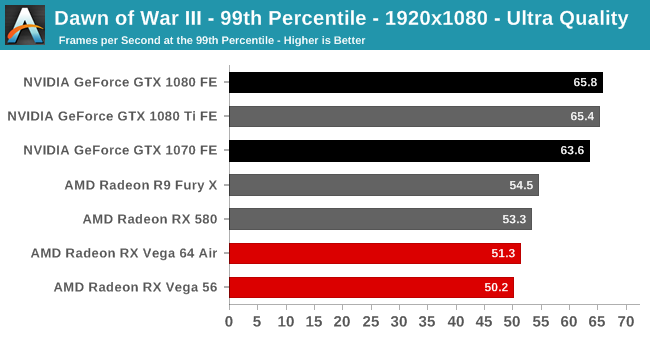

A Dawn of War game finally returns to our benchmark suite; its predecessor last appeared there in 2010. With Dawn of War III, Relic offers a demanding RTS with a built-in benchmark. However, the benchmark is still bugged, as Ian and other publications have noticed. The built-in benchmark collects frametime data for the loading screen before the benchmark scene and for the black screen after it, which renders the calculated averages and minimums/maximums useless. So while we used the benchmark scene for consistency, we collected the performance data with OCAT instead. Ultra settings were used without alterations.
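To show why the extra frames spoil the built-in numbers, here is a minimal Python sketch. It is not OCAT's or Relic's code. It assumes a capture is a list of (timestamp, frametime) pairs, computes average FPS as frames rendered divided by elapsed time, and trims the capture to the benchmark-scene window. The function name `fps_stats` and all frametime values are hypothetical, chosen only for illustration.

```python
from typing import List, Tuple

# Hypothetical capture format: (timestamp in seconds, frametime in ms) per frame.
Frame = Tuple[float, float]


def fps_stats(frames: List[Frame], start_s: float, end_s: float) -> Tuple[float, float, float]:
    """Return (average, minimum, maximum) FPS over frames whose timestamp
    lies inside the benchmark-scene window [start_s, end_s]."""
    in_window = [ms for t, ms in frames if start_s <= t <= end_s]
    if not in_window:
        raise ValueError("no frames inside the scene window")
    avg_fps = len(in_window) * 1000.0 / sum(in_window)  # frames rendered / seconds elapsed
    min_fps = 1000.0 / max(in_window)                   # slowest single frame
    max_fps = 1000.0 / min(in_window)                   # fastest single frame
    return avg_fps, min_fps, max_fps


# Made-up, purely illustrative capture (not measured data):
loading = [(0.5 * i, 500.0) for i in (1, 2)]                   # slow loading-screen frames, t = 0.5 and 1.0
scene_frames = [(1.0 + 0.02 * i, 20.0) for i in range(1, 101)]  # 100 scene frames at 20 ms, t = 1.02 .. 3.0
black = [(3.0 + 0.002 * i, 2.0) for i in range(1, 51)]         # fast black-screen frames, t = 3.002 .. 3.1
capture = loading + scene_frames + black

whole = fps_stats(capture, 0.0, 10.0)      # everything, roughly like the bugged built-in capture
trimmed = fps_stats(capture, 1.01, 3.001)  # benchmark scene only
print("whole capture:", [round(x, 1) for x in whole])    # [49.0, 2.0, 500.0]
print("scene only:   ", [round(x, 1) for x in trimmed])  # [50.0, 50.0, 50.0]
```

In this made-up capture the average barely moves, but two slow loading-screen frames drag the minimum down to 2 FPS and the fast black-screen frames push the maximum up to 500 FPS, even though every scene frame took 20 ms.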

A note on the 1080p results: further testing revealed that Dawn of War III at 1080p was rather CPU-bound on our testbed, resulting in anomalous performance. Because of extreme time constraints, we only discovered this very late in the process. For the sake of transparency, the graphs will remain as they were at the time of the original posting.

213 Comments


HollyDOL - Tuesday, August 15, 2017

Thank you, I already did. Not everywhere has cheap electricity.

Gigaplex - Tuesday, August 15, 2017

A little over $30 per year extra. I tend to upgrade on a 3-year cadence. That's around $100 extra I can use to bump up to the Nvidia card.

Outlander_04 - Tuesday, August 15, 2017

The highest cost for electricity I can see in the US is 26 cents per kilowatt-hour. The difference in gaming power consumption is 0.078 kilowatts, meaning it would take 12.8 hours to burn that extra kWh.

Two hours of full-load gaming every day adds up to 730 hours a year, which means 57 kWh extra, for a total cost of $14.82 per year.

In states with an electricity cost of 10 cents per kWh, the difference is about $5.70 a year.

You might have to save a bit longer than you expect .
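The arithmetic in the comment above can be reproduced with a short Python sketch. `annual_cost_usd` is a hypothetical helper, and it assumes the full-load power difference holds for the entire session, every day of the year.

```python
# Hypothetical helper: yearly cost of an extra, constant power draw.
def annual_cost_usd(extra_kw: float, hours_per_day: float, usd_per_kwh: float) -> float:
    """Cost per year of drawing `extra_kw` more for `hours_per_day`, every day."""
    extra_kwh = extra_kw * hours_per_day * 365  # energy (kWh) = power (kW) x time (h)
    return extra_kwh * usd_per_kwh


delta_kw = 0.078  # gaming power difference quoted in the comment
print(f"hours per extra kWh: {1 / delta_kw:.1f}")                      # 12.8
print(f"at $0.26/kWh: ${annual_cost_usd(delta_kw, 2, 0.26):.2f}/yr")  # $14.80
print(f"at $0.10/kWh: ${annual_cost_usd(delta_kw, 2, 0.10):.2f}/yr")  # $5.69
```

The small gap from the quoted $14.82 comes from rounding 56.94 kWh up to 57 kWh. With a 0.150 kW difference instead, the same call gives about $28.47 per year at $0.26/kWh.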

Yojimbo - Wednesday, August 16, 2017

Why did you assume he was interested in the 1070/Vega 56? Comparing the 1080 FE with the air-cooled Vega 64, the difference is 0.150 kilowatts. With your same assumptions of 2 hours a day and 26 cents per kilowatt-hour, it comes to $28.50 a year, right in line with his estimate. It's not a stretch to think he would game more than 730 hours a year, either.

Outlander_04 - Thursday, August 17, 2017

The BF1 power consumption difference between Vega 64 and the 1080 FE is 0.08 kW. Not sure where you get your numbers from, but it is not this review.

The numbers are essentially the same as I suggested above: 0.078 vs 0.080.

Less than $6 a year in states with lower utility costs, and as much as $15 a year in Hawaii.

Yes, you could game more than 14 hours a week. It's also not a stretch to think you might game a lot less. What was your point?

HollyDOL - Friday, August 18, 2017

I don't know where you're looking, but the 1080 FE system draws 310W and the Vega 64 system 459W, a difference of 149W for no gain whatsoever.

Outlander_04 - Friday, August 18, 2017

379 vs 459 watts for the 1080 FE vs Vega 64. The delta is 0.08 kW.

Those figures are right here in this review on the gaming power consumption chart.

HollyDOL - Saturday, August 19, 2017

Lol man, you need to reread that chart. 379W is the 1080 Ti FE, not the 1080 FE.

FourEyedGeek - Tuesday, August 22, 2017

What if you live in a hot part of the world? Extra heat means extra throttling; during the summer I reduce my OCs because of this. Turn on the air conditioning and it will have to run a bit more to compensate, costing more. I'd look at undervolting a Vega 56 if possible.

ET - Tuesday, August 15, 2017

So is a Vega 72 yet to come? Page 2 says that there are 6 CU arrays of 3 CUs each. That's 18 CUs, with only 16 enabled in Vega 64.