The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more pertinent benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio, due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

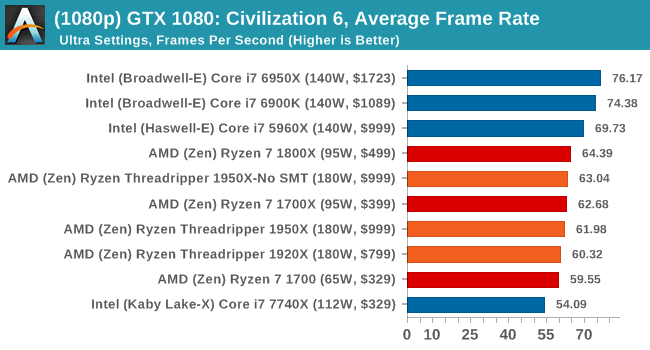

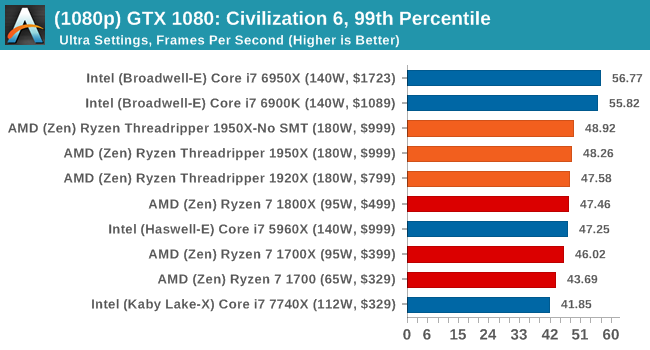

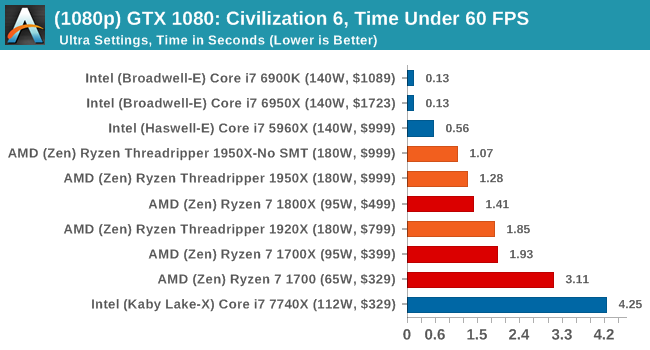

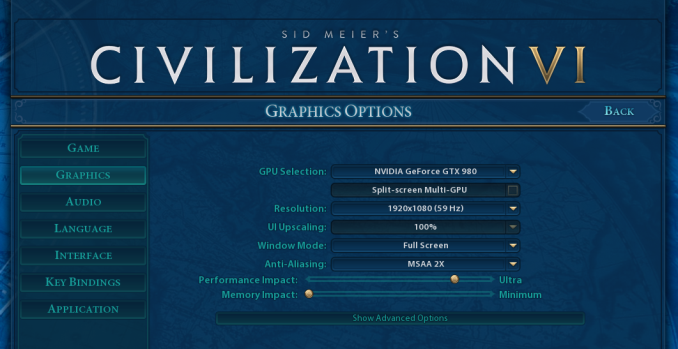

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark in position four for performance (ultra) and 0 on memory, with MSAA set to 2x.

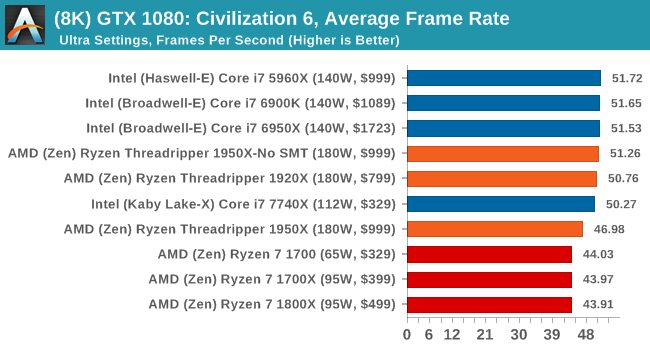

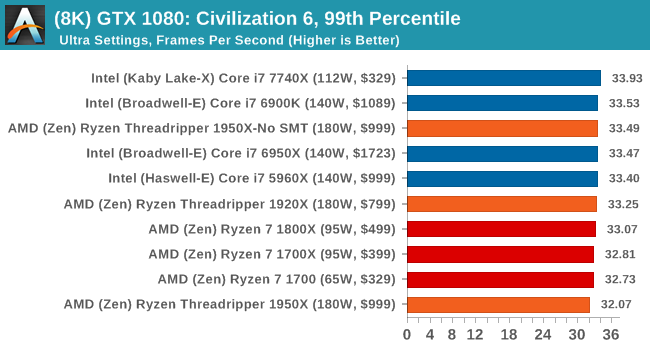

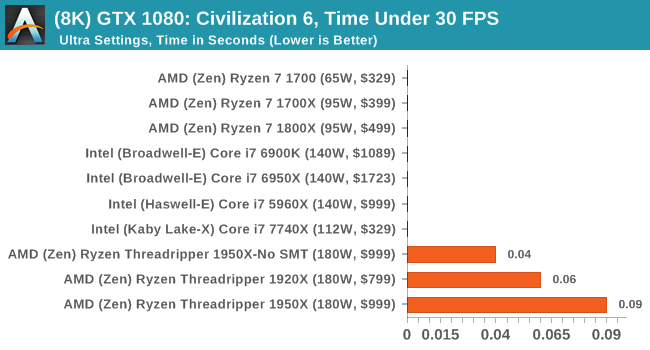

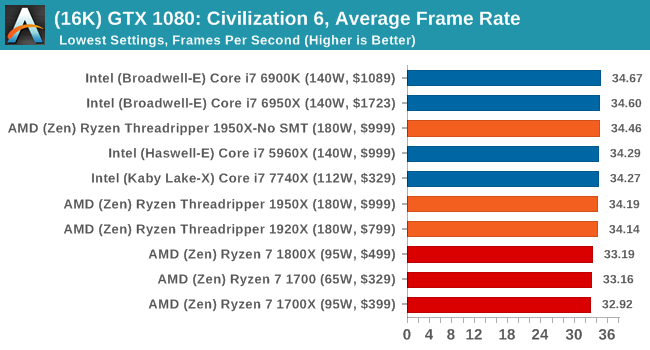

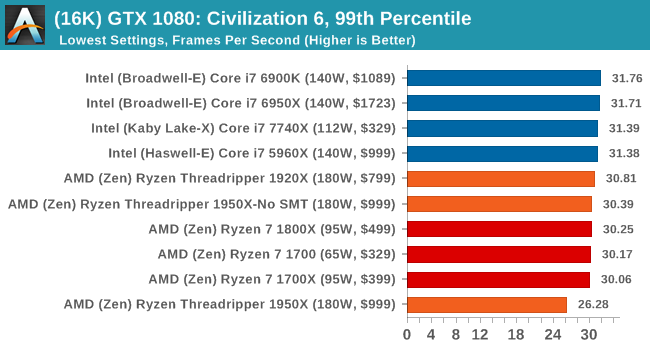

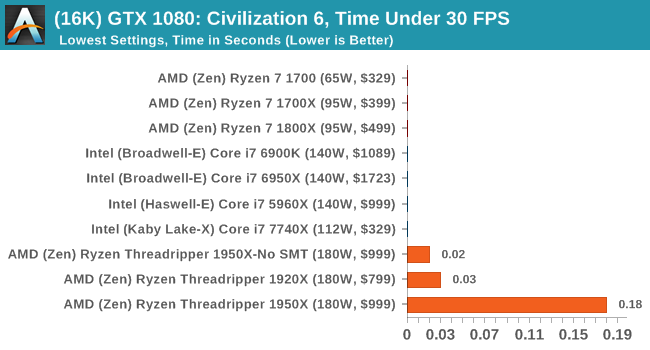

For reviews where we include 8K and 16K benchmarks (Civ6 allows us to benchmark extreme resolutions on any monitor) on our GTX 1080, we run the 8K tests with settings similar to the 4K tests, but the 16K tests are set to the lowest option for Performance.

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

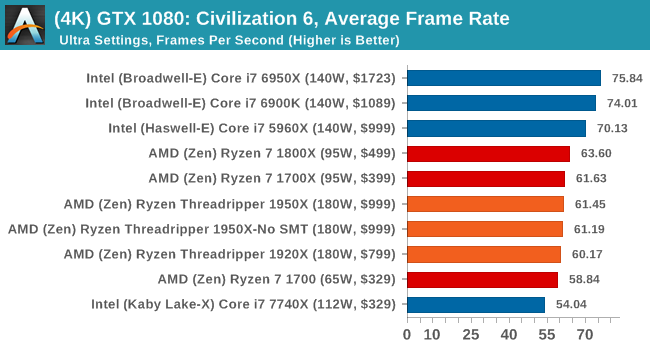

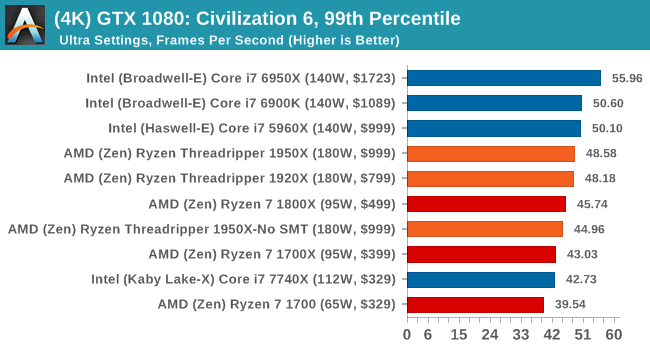

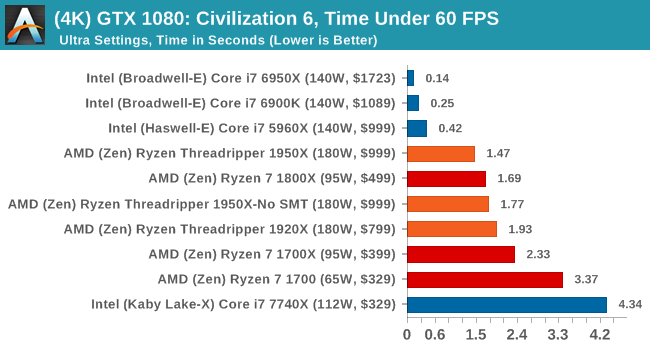

4K

8K

16K

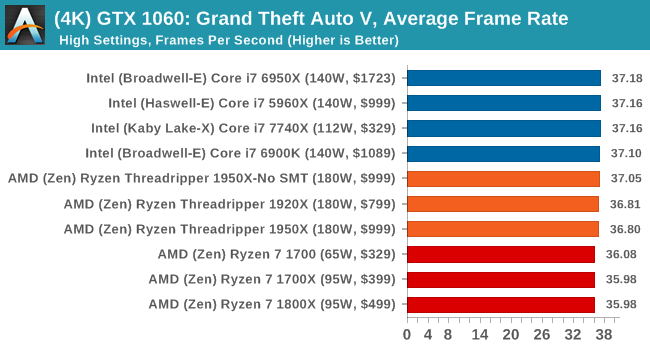

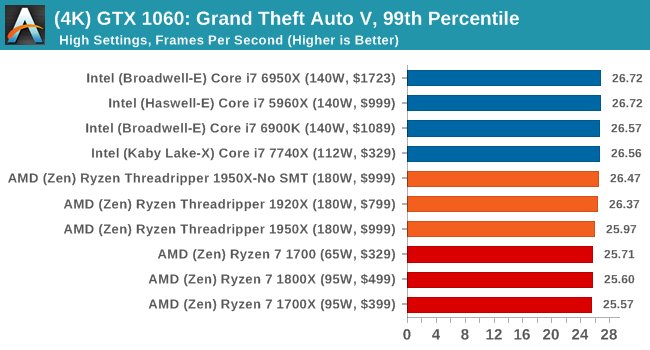

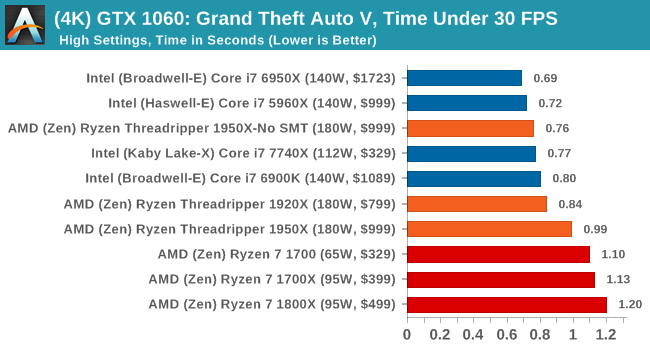

ASUS GTX 1060 Strix 6G Performance

1080p

4K

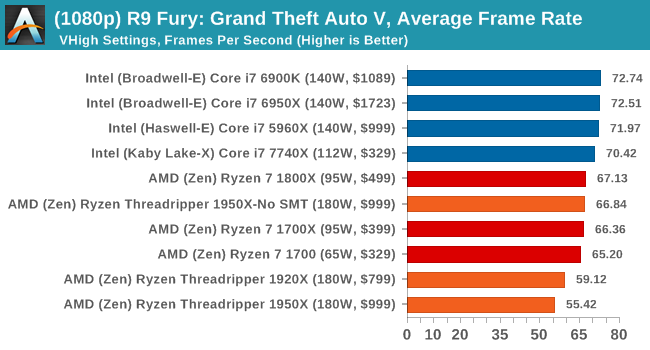

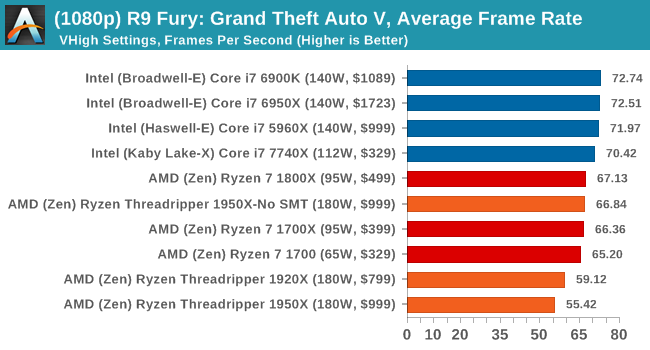

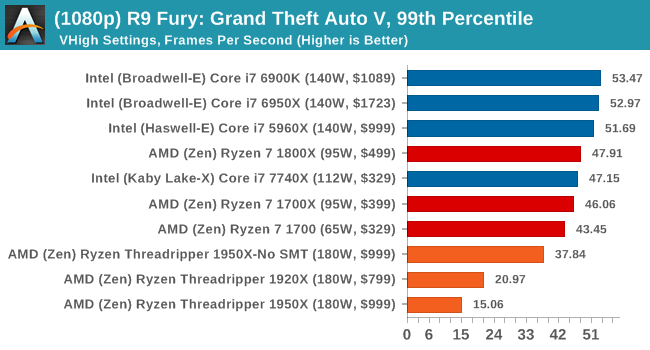

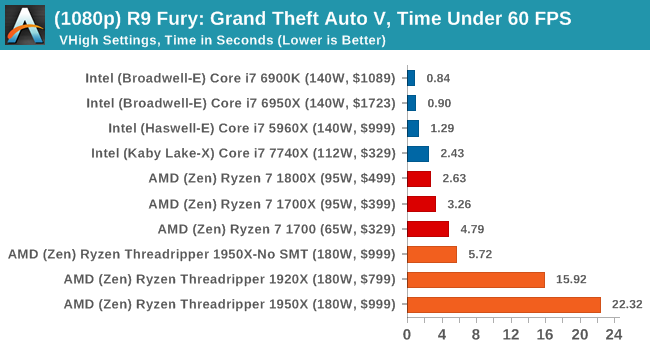

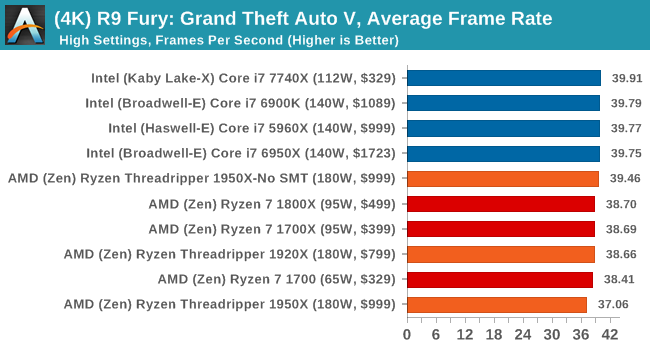

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

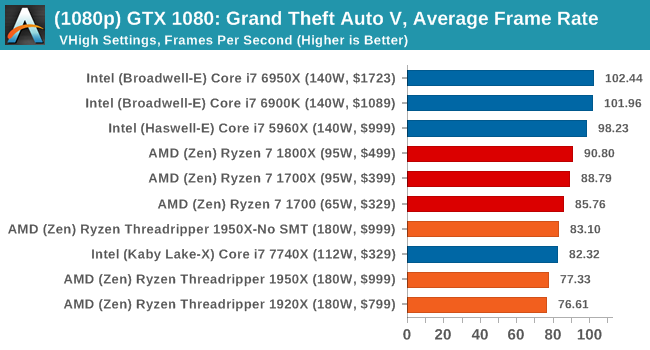

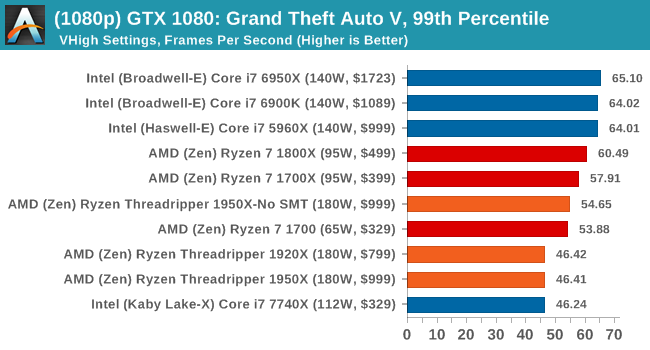

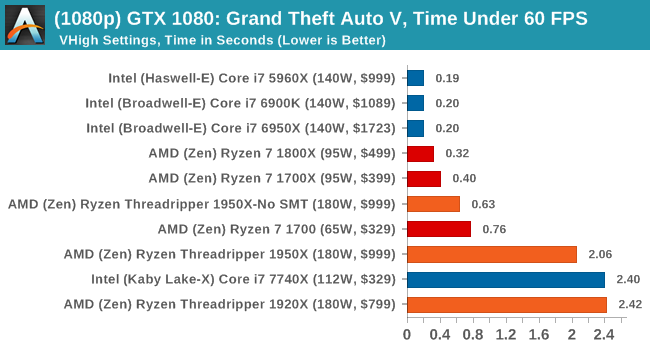

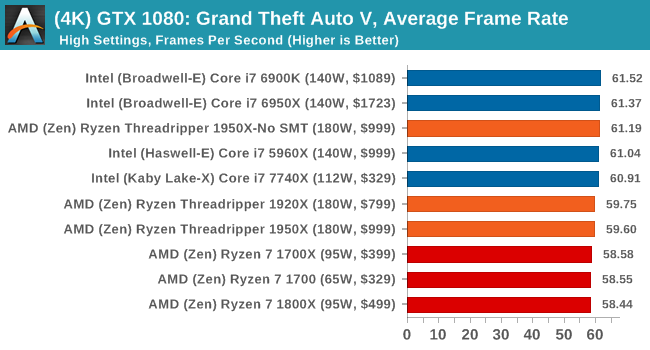

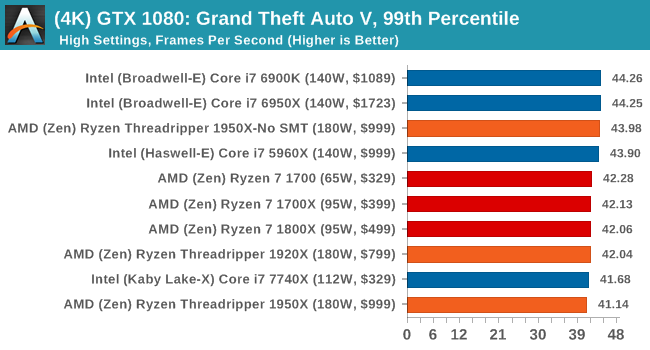

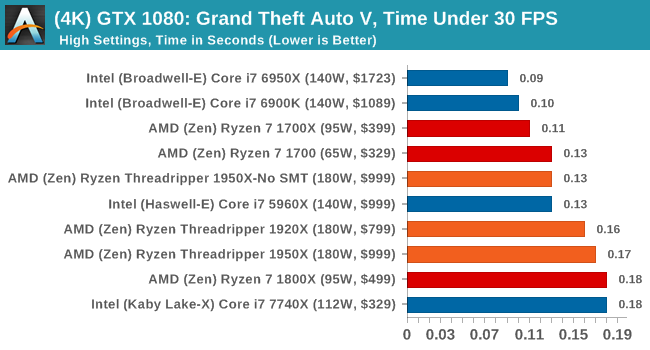

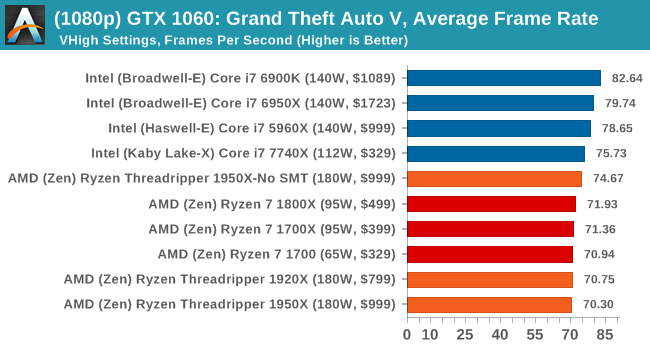

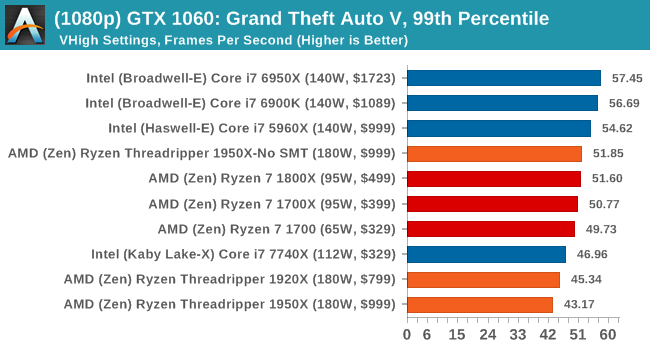

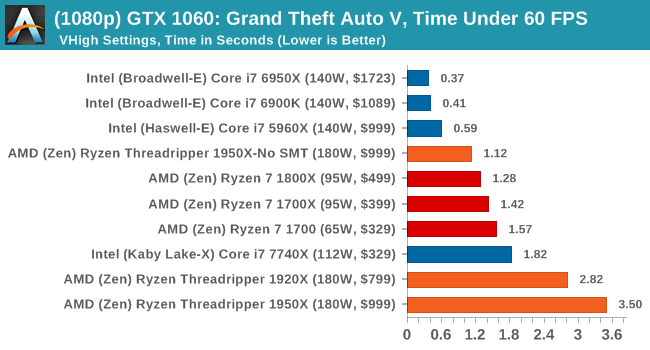

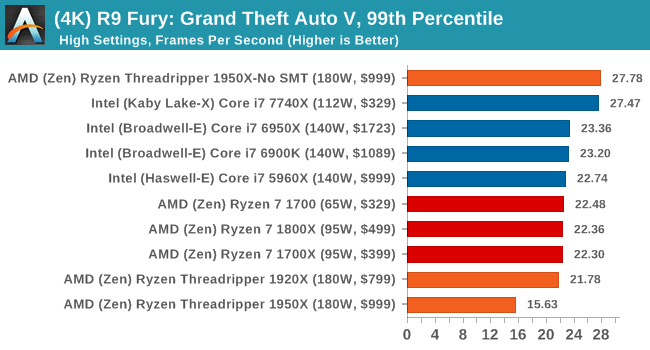

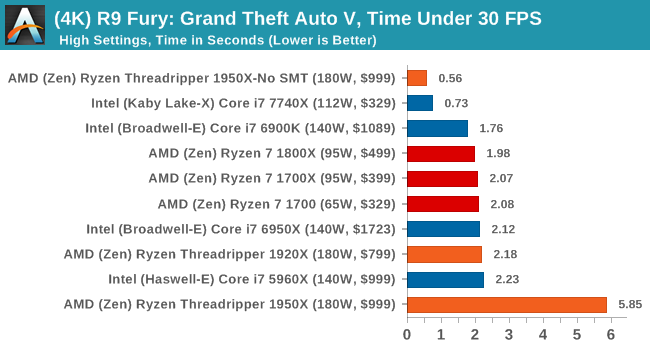

On the whole, the Threadripper CPUs perform as well as Ryzen does on most of the tests, although the Time Under analysis always seems to look worse for Threadripper.
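
For readers unfamiliar with a "time under" style metric, the following is a minimal sketch of one way such a number could be derived from a list of per-frame render times. The exact method behind the graphs above is not described here, so the function names (`time_under`, `average_fps`), the 60 FPS threshold, and the sample frame times are all illustrative assumptions rather than the review's actual tooling or data.

```python
from typing import Sequence


def average_fps(frame_times_ms: Sequence[float]) -> float:
    """Average frame rate over the run: frames rendered divided by seconds elapsed."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def time_under(frame_times_ms: Sequence[float], fps_threshold: float) -> float:
    """Fraction of total run time spent in frames slower than fps_threshold.

    Assumption: a frame counts as "under" the threshold when its render time
    exceeds 1000 / fps_threshold milliseconds.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    limit_ms = 1000.0 / fps_threshold
    total_ms = sum(frame_times_ms)
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    return slow_ms / total_ms


# Hypothetical frame times in milliseconds, not measured data.
sample = [10.0, 10.0, 20.0, 40.0]
print(f"average FPS: {average_fps(sample):.1f}")
print(f"time under 60 FPS: {time_under(sample, 60.0):.0%}")
```

Under this definition, a CPU can post a similar average frame rate to another and still look worse on time under if a handful of long frames account for a large share of the run.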

347 Comments

Makaveli - Thursday, August 10, 2017

Some professional work from home. Kinda of a silly question.

mapesdhs - Thursday, August 10, 2017

Yeah, I just inferred that'd be the case.

prisonerX - Friday, August 11, 2017

"640K should be enough for anyone"

peevee - Thursday, August 10, 2017

I had a lot of hope for Threadripper as a development machine... but when 16 core TR loses so bad to 10-core 7900x or even 8-core 7820x in compilation, there is something seriously wrong with the picture. Too much emphasis on FP performance nobody at home needs all that much (except in games where it is provided by GPU and not CPU anyway)? Maybe AT tests are wrong, say, they have failed to specify /m for MsBuild?

peevee - Thursday, August 10, 2017

Well, there is a good chance that the optimal config for the test would be SMT on (obviously) and NUMA on.

peevee - Thursday, August 10, 2017

Hiding the fact that the CPU is NUMA both from the OS and from software is a very bad idea. Thread migration out of a core is a disaster all but itself, but thread migration to different memory and especially L3 cache (as big as it is) should never be attempted.

peevee - Thursday, August 10, 2017

Basically, at this point I would take 7820x over TR1950X for every task, with similar MT performance in vast majority of tasks not offloadable to a GPU, better mixed-load performance and much better ST performance. And would save $400 and electricity costs in the process.

BOBOSTRUMF - Friday, August 11, 2017

Take your Intel, I'm with the ThreadRipper

Lolimaster - Friday, August 11, 2017

X299 + cpu consumes and produces way more heat than TR, and that's a fact, anand is anand, if you're happy for you blu placebo site, good for ya.

Notmyusualid - Saturday, August 12, 2017

@ peevee

I'm sort-of eyeing-up the 7900X myself. But I have the feeling the Mrs. will sh1t if I buy any more new toys, and my 13 Nvidia GPUs.... :)