The Intel SSD 600p (512GB) Review

by Billy Tallis on November 22, 2016 10:30 AM EST

Sequential Read Performance

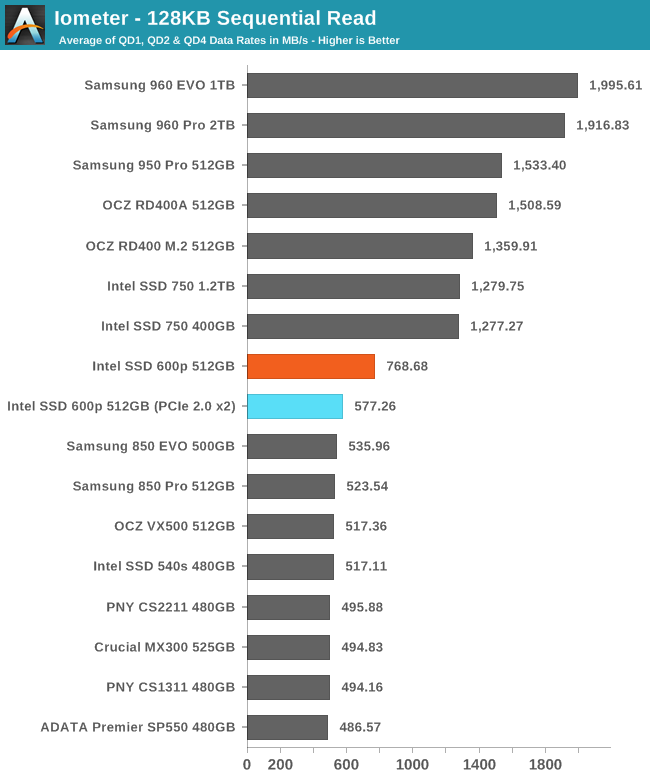

The sequential read test requests 128kB blocks and tests queue depths ranging from 1 to 32. The queue depth is doubled every three minutes, for a total test duration of 18 minutes. The test spans the entire drive, and the drive is filled before the test begins. The primary score we report is an average of performances at queue depths 1, 2 and 4, as client usage typically consists mostly of low queue depth operations.
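The test schedule and scoring described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the actual benchmark harness: the function names and the throughput figures in the usage example are hypothetical placeholders, not measured results.

```python
# Minimal sketch of the queue-depth schedule and primary score described above.
# All names here are illustrative assumptions, not part of any real test tool.

BLOCK_SIZE_BYTES = 128 * 1024   # 128kB transfers, as stated in the text
PHASE_MINUTES = 3               # each queue depth runs for three minutes


def queue_depth_schedule(start=1, stop=32):
    """Return the queue depths tested, doubling from start up to stop."""
    depths = []
    qd = start
    while qd <= stop:
        depths.append(qd)
        qd *= 2
    return depths


def primary_score(throughput_by_qd, scored_depths=(1, 2, 4)):
    """Average the throughput at the low queue depths used for the headline score."""
    return sum(throughput_by_qd[qd] for qd in scored_depths) / len(scored_depths)


schedule = queue_depth_schedule()
total_minutes = len(schedule) * PHASE_MINUTES

# Placeholder throughput values in MB/s (made up for illustration only).
example_results = {1: 100.0, 2: 200.0, 4: 300.0, 8: 300.0, 16: 300.0, 32: 300.0}
example_score = primary_score(example_results)
```

Six queue depths at three minutes each account for the 18-minute duration, and only the QD1, QD2 and QD4 phases feed the headline number, so a drive that scales well only at high queue depths gains nothing in the primary score.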

Even when limited to PCIe 2.0 x2 the 600p has slightly higher sequential read speed than SATA drives can manage, but when given more PCIe bandwidth the 600p doesn't catch up to the more expensive NVMe drives.
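For context on those interface limits, the rough calculation below estimates the theoretical link bandwidth of each interface from its signaling rate and line encoding. It is a back-of-the-envelope sketch: the helper name is hypothetical, and the results are upper bounds before protocol overhead, so real drives land somewhat lower.

```python
# Rough upper-bound link bandwidth from signaling rate and line encoding.
# link_bandwidth_mb_s is a hypothetical helper written for this illustration.

def link_bandwidth_mb_s(gigatransfers_per_s, payload_bits, encoded_bits, lanes=1):
    """Return usable bandwidth in MB/s (decimal) before protocol overhead."""
    bits_per_s = gigatransfers_per_s * 1e9 * payload_bits / encoded_bits
    return bits_per_s / 8 / 1e6 * lanes


# SATA 6Gb/s uses 8b/10b encoding on a single link.
sata3 = link_bandwidth_mb_s(6.0, 8, 10)

# PCIe 2.0 runs at 5 GT/s per lane with 8b/10b encoding.
pcie2_x2 = link_bandwidth_mb_s(5.0, 8, 10, lanes=2)

# PCIe 3.0 runs at 8 GT/s per lane with 128b/130b encoding.
pcie3_x4 = link_bandwidth_mb_s(8.0, 128, 130, lanes=4)

for name, value in [("SATA 6Gb/s", sata3),
                    ("PCIe 2.0 x2", pcie2_x2),
                    ("PCIe 3.0 x4", pcie3_x4)]:
    print(f"{name}: {value:.0f} MB/s")
```

By this estimate a PCIe 2.0 x2 link tops out around 1000 MB/s against roughly 600 MB/s for SATA, which is consistent with the 600p edging past SATA drives even in the narrower slot.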

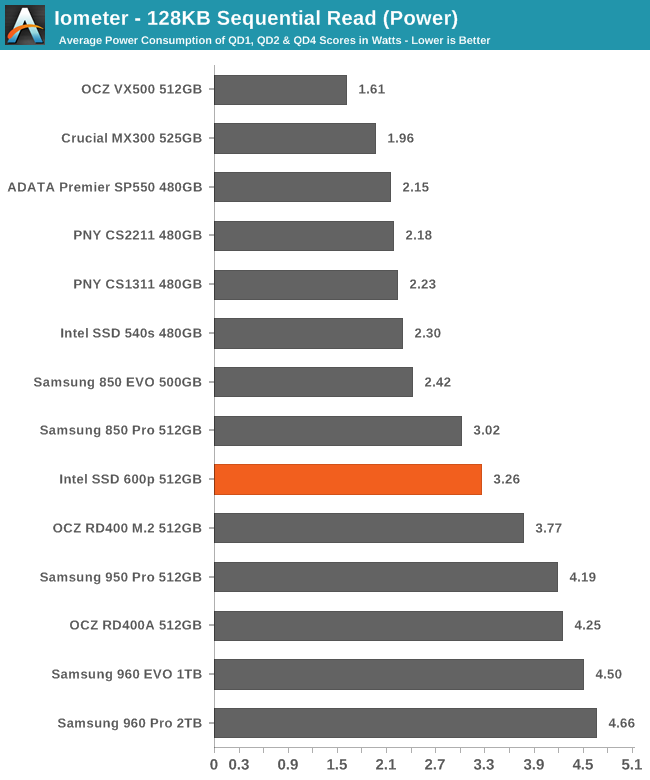

The 600p actually manages to surpass the power efficiency of several SATA SSDs, but it can't compete with the other NVMe drives that deliver twice the data rate.

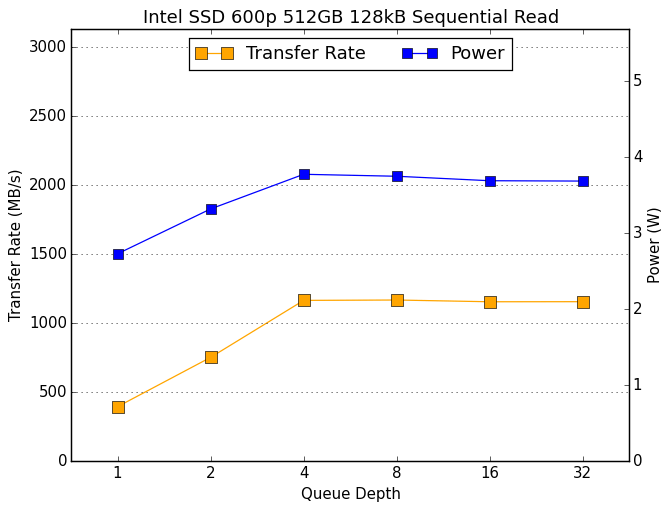

The 600p starts at just under 400MB/s and hits its read speed limit at QD4 with around 1150MB/s. The other PCIe SSDs perform at least that well at QD1 and go up from there.

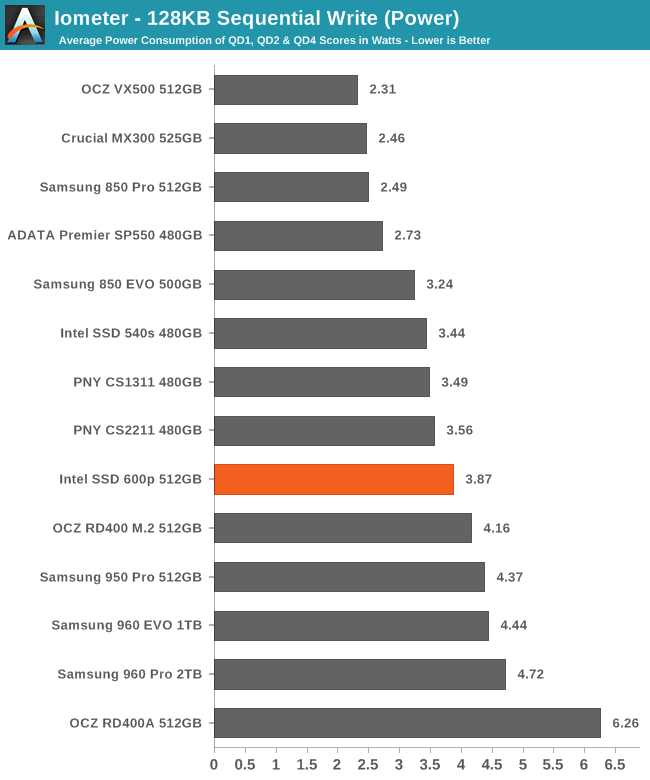

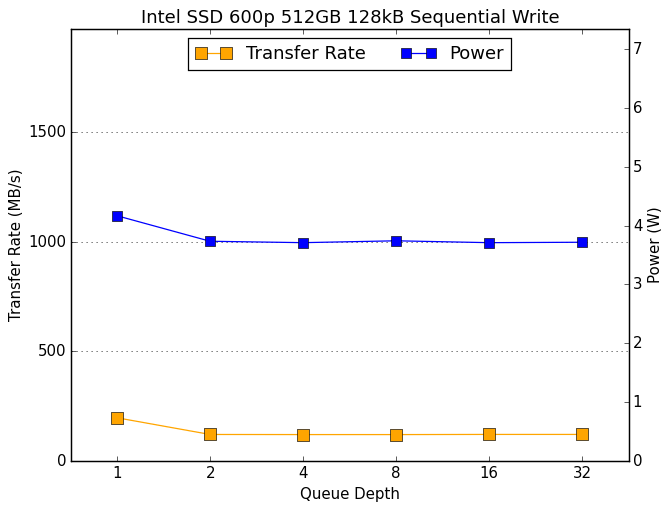

Sequential Write Performance

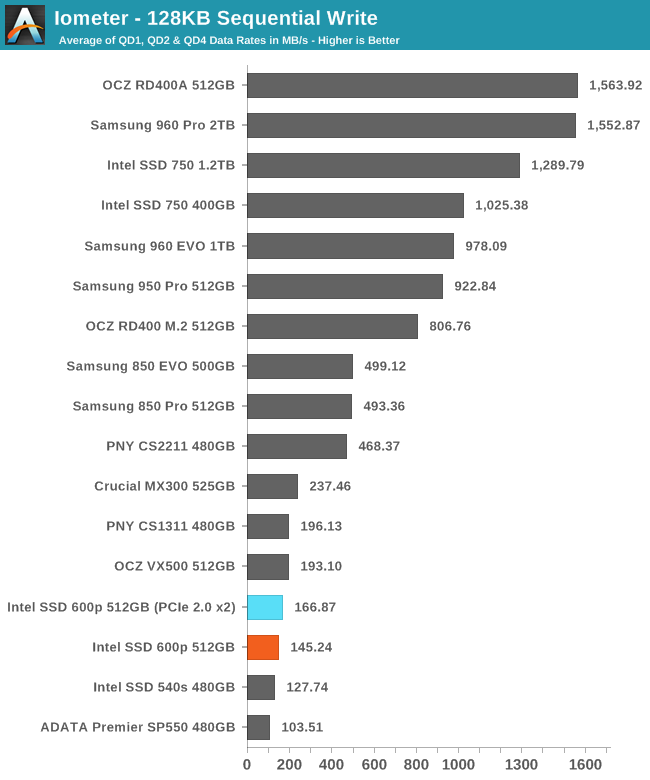

The sequential write test writes 128kB blocks and tests queue depths ranging from 1 to 32. The queue depth is doubled every three minutes, for a total test duration of 18 minutes. The test spans the entire drive, and the drive is filled before the test begins. The primary score we report is an average of performances at queue depths 1, 2 and 4, as client usage typically consists mostly of low queue depth operations.

It is a surprise to see the Intel 600p performing better in the motherboard's M.2 slot than in the PCIe 3.0 adapter, but in both cases the sustained write speeds are so slow that the interface is not a limitation.

The power consumption of the 600p when it's in the PCIe 3.0 adapter is high enough that temperature may be a factor in this test, and the 600p may have performed better in the motherboard's M.2 slot simply due to better positioning and orientation in the case.

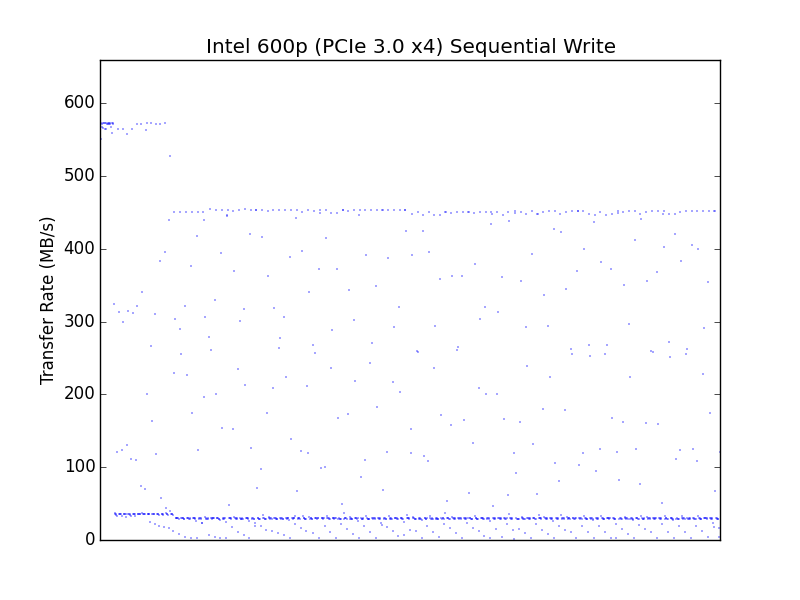

It is a familiar pattern for PCIe SSDs to see the highest write speeds at the beginning of the test, and a completely flat graph thereafter as thermal limits kick in. We're just used to seeing the performance curve near the top of the graph instead of at the bottom.

Panels: PCIe 3.0 x4 adapter; motherboard M.2 PCIe 2.0 x2

A comparison of the second-by-second performance during the sequential write test shows that the 600p reaches a steady state with the same kind of inconsistency we saw for random writes. In the PCIe 3.0 adapter, performance is reduced across the board and the worst drops in performance are much closer to zero.

63 Comments

close - Thursday, November 24, 2016 - link

ddriver, you're the guy who insisted he designed a 5.25" hard drive that's better than anything on the market despite being laughed at and proven wrong beyond any shadow of a doubt, but you still insist on beginning and ending almost all of your comments with "you don't have a clue", "you probably don't know". Projecting much?

You're not an engineer and you're obviously not even remotely good at tech. You have no idea (and it actually does matter) how this works. You just make up scenarios in your head with how you *think* it works and then you throw a tantrum when you're contradicted by people who don't have to imagine this stuff, they know it.

In your scenario you have 2 clients using 2 galleries at the same time (reasonable enough, 2 users/server just like any respectable content server). Your server reads image 1, sends it, then reads image 2 and sends it because when working with a gallery this is exactly how it works (it definitely won't be 200 users requesting thousands of thumbnails for each gallery and then having to send that to each client). Then the network bandwidth will be an issue because your content server is limited to 100Mbps, maybe 1Gbps, since you only designed it for 2 concurrent users. A server delivering media content - so a server whose ONLY job is to DELIVER MEDIA CONTENT - will have that kind of bandwidth that's "vastly exceeded by the drive's performance", the kind that can't cope with several hard drives furiously seeking hundreds or thousands of files. And of course it doesn't matter if you have 2 users or 2000, it's all the same to a hard drive, it simply sucks it up and takes it like a man. That's why they're called HARD...

Most content delivery servers use a hefty solid state cache in front of the hard drives and hope that the content is in the cache. The only reasons spinning drives are still in the picture are capacity and cost per GB. Except ddriver's 5.25" drive that beats anything in every metric imaginable.

Oh and BTW, before the internet became mainstream there was slightly less data to move around. While drive performance increased 10-fold since then, the data being moved increased 100 times or more.

But heck, we can stick to your scenario that 2 users access 2 pictures on a content server with a 10/100 half duplex.

Now quick, whip out those good ol' lines: "you're a troll wannabe", "you have no clue". That will teach everybody that you're not a wannabe and not to piss all over you. ;)

vFunct - Wednesday, November 23, 2016 - link

> I'd think the best answer to that would be a custom motherboard with the appropriate slots on it to achieve high storage densities in a slim (maybe something like a 1/2 1U rackmount) chassis.

I agree that the best option would be for motherboard makers to create server motherboards with a ton of vertical M.2 slots, like DIMM slots, and space for airflow. We also need to be able to hot-swap these out by sliding out the chassis, uncovering the case, and swapping out a defective one as needed.

A problem with U.2 connectors is that they have thick cabling all over the place. Having a ton of M.2 slots on the motherboard avoids all that.

saratoga4 - Tuesday, November 22, 2016 - link

If only they made it with a SATA interface!

DanNeely - Tuesday, November 22, 2016 - link

As a SATA device it'd be meh. Peak performance would be bottlenecked at the same point as every other SATA SSD, and it loses out to the 850 evo, never mind the 850 pro, in consistency.

Samus - Tuesday, November 22, 2016 - link

There are lots of good reliable SATA m2 drives on the market. The thing that makes the 600p special is it is priced at near parity with them when most PCIe SSDs have a 20-30% premium.

A really good m2 2280 option is the MX300 or 850 EVO. Sandisk has some great m2 2260 drives.

ddriver - Tuesday, November 22, 2016 - link

Even in the case of such "server" you are better off with sata ssds, get a decent hba or raid card or two, connect 8-16 sata ssds and you have it. Price is better, performance in raid would be very good, and when a drive needs replacing, you can do it in 30 seconds without even powering off the machine.

The only actual sense this product makes is in budget ultra portable laptops or x86 tablets, because it takes up less space, performance wise there will not be any difference in user experience between that and a sata drive, but it will enable a thinner chassis.

There is no "density advantage" for nvme, there is only FORM FACTOR advantage, and that is only in scenarios where that's the system's primary and sole storage device. What enables density is the nand density, and the same dense chips can be used just as well in a sata or sas drive. Furthermore I don't recall seeing a mobo that has more than 2 m2 slots. A pci card with 4 m2 slots itself will not be exactly compact either. I've seen such, they are as big as an upper mid-range video card. It takes about as much space as 4 standard 2.5" drives, however unlike 4x 2.5" you can't put it into htpc form factor.

ddriver - Tuesday, November 22, 2016 - link

Also, the 1tb 600p is nowhere to be found, and even so, m2 peaks at 2tb for the 960 pro, which is wildly expensive. Whereas with 2.5" there is already a 4tb option and 8tb is entirely possible, the only thing that's missing is demand. Samsung demoed a 16tb 2.5" ssd over a year ago. I'd say that the "density advantage" is very much on the side of 2.5" ssds.

BrokenCrayons - Tuesday, November 22, 2016 - link

Probably not.

XabanakFanatik - Tuesday, November 22, 2016 - link

If Samsung stopped refusing to make two-sided M.2 drives and actually put the space to use there could easily be a 4TB 960 Pro.... and it would cost $2800.

JamesAnthony - Tuesday, November 22, 2016 - link

Those cards are widely available (I have some): a 16x PCIe 3.0 interface and then 4 M.2 slots with each slot getting 4x PCIe 3.0 bandwidth, then a cooling fan for them.

However, WHY would you want to do that when you could just go get an Intel P3520 2TB drive or, for higher speed, a P3700 2TB drive, which is a standard PCIe card for either low-profile or standard-profile slots?

The only advantage an M.2 drive has is being small, but if you are going to put it in a standard PCIe slot, then why not just go with a purpose built PCIe NVMe SSD drive & not have to worry about thermal throttling on the M.2 cards?