A Bit More on AMD’s Polaris GPUs: 36 & 16 CUs

by Ryan Smith on June 15, 2016 8:30 AM EST

Along with this week’s teaser of the forthcoming Radeon RX 470 and RX 460 at E3, AMD also held a short press briefing about Polaris. The bulk of AMD’s presentation is going to be familiar to our readers who keep close tabs on AMD’s market strategy (in a word, VR), but this latest presentation also brought to light a few more details on the company’s two Polaris GPUs that I want to quickly touch upon.

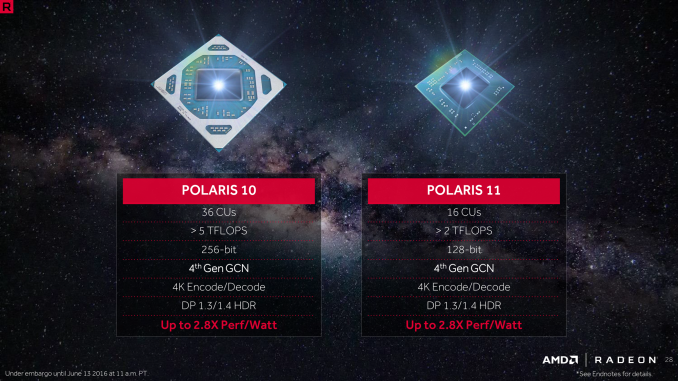

First and foremost, AMD’s presentation included a slide with pictures of the two chips and confirmation of their full configurations. The larger Polaris 10 is a 36 CU (2304 SP) chip, meaning that the forthcoming Radeon RX 480 video card uses a fully enabled chip. The smaller Polaris 11 (note that these pictures aren’t necessarily to scale) packs 16 CUs (1024 SPs), which puts it a bit below Pitcairn (20 CUs) before factoring in GCN 4’s higher efficiency. And as is common for these lower-power GPUs, AMD’s slide also confirms that Polaris 11 features a 128-bit memory bus.
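As a quick sanity check on those figures, the SP counts follow from the CU counts at GCN's 64 stream processors per CU. The short sketch below just redoes that multiplication; the function and dictionary names are ours, chosen for illustration, and are not anything from AMD's slide.

```python
# Illustrative arithmetic only: names here are hypothetical helpers.
SPS_PER_CU = 64  # GCN compute unit: 4 SIMDs x 16 lanes each


def stream_processors(cus: int) -> int:
    """Return the stream processor count for a GCN GPU with `cus` compute units."""
    return cus * SPS_PER_CU


# CU counts as quoted in the article.
chips = {"Polaris 10": 36, "Polaris 11": 16, "Pitcairn": 20}

for name, cus in chips.items():
    print(f"{name}: {cus} CUs -> {stream_processors(cus)} SPs")
```

On raw SP count alone, Polaris 11 comes in at 80% of Pitcairn, which is where the "a bit below" comparison comes from.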

AMD is expecting Polaris 11 to offer over 2 TFLOPs of performance. Assuming a very liberal range of 2.0 to 2.5 TFLOPs for possible shipping products, and counting 2 FLOPs per SP per clock, this would put clockspeeds of a high-end Polaris 11 part at between roughly 975MHz and 1220MHz, which is similar to our projections for RX 480/Polaris 10. Note that AMD has not yet announced any specific product using Polaris 11. However, as we now know that RX 470 is a Polaris 10 based card, it’s safe to assume that RX 460 is Polaris 11, and that the over-2 TFLOPs projection is for that card.
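For anyone who wants to check the clockspeed estimate, here is a minimal sketch of the arithmetic. It assumes the usual peak FP32 accounting of 2 FLOPs per SP per clock (one fused multiply-add) and a fully enabled 1024 SP part. The function names are ours, and the 2.0 to 2.5 TFLOPs range is our own guess rather than an AMD figure.

```python
# Illustrative arithmetic only: names here are hypothetical helpers.
FLOPS_PER_SP_PER_CLOCK = 2  # one fused multiply-add counted as 2 FLOPs


def required_clock_mhz(tflops: float, sps: int) -> float:
    """Clock (MHz) needed for `sps` stream processors to reach `tflops` peak FP32."""
    flops_per_clock = sps * FLOPS_PER_SP_PER_CLOCK
    return tflops * 1e12 / flops_per_clock / 1e6


def peak_tflops(sps: int, clock_mhz: float) -> float:
    """Peak FP32 TFLOPs for `sps` stream processors running at `clock_mhz`."""
    return sps * FLOPS_PER_SP_PER_CLOCK * clock_mhz * 1e6 / 1e12


POLARIS_11_SPS = 1024  # 16 CUs x 64 SPs, assuming a fully enabled chip

for target in (2.0, 2.5):
    mhz = required_clock_mhz(target, POLARIS_11_SPS)
    print(f"{target} TFLOPs on {POLARIS_11_SPS} SPs needs about {mhz:.1f} MHz")
```

The two endpoints work out to about 976.6MHz and 1220.7MHz, which is where the rounded 975MHz to 1220MHz range comes from. A cut-down part with fewer active CUs would need proportionally higher clocks to hit the same number.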

Second, AMD’s press release on Monday briefly mentioned the low z-height of at least Polaris 11, and it comes up again in this slide deck. There was some confusion over whether z-height referred to the laptop or the chip, but the slide makes it clear that this is about the chip. It will be interesting to see how thin Polaris 11 is, how that compares to other chips, and just what manufacturers can in turn do with it.

98 Comments

vladx - Wednesday, June 15, 2016 - link

*Kaby Lake

mdriftmeyer - Thursday, June 16, 2016 - link

No, just pay up the ass for the Intel CPU/iGPU when I can buy the Zen/dGPU and get more bang for my money.

Michael Bay - Thursday, June 16, 2016 - link

If Zen is even remotely close to current Intel crop, you'll be paying just as much. AMD has no room to price dump.

maroon1 - Friday, June 24, 2016 - link

Skylake iGPU has full support for HEVC

The "hybrid" support is for 10-bit HEVC and VP9 (kaby lake will get full hardware support for this though)

emn13 - Wednesday, June 15, 2016 - link

I currently am also GPU-less, and by and large that's fine, but I've noticed that several programs now offer gpgpu acceleration. And even though it's still quite rare, it's very noticeable how much slower things run without a gpu when gpgpu is supported.

CSMR - Wednesday, June 15, 2016 - link

Assuming you're talking about iGPUs rather than headless servers? These should support OpenCL shouldn't they? Usually GPUs are so much faster at what they can do that any GPU is good enough, even low-end IGPUs.

looncraz - Wednesday, June 15, 2016 - link

Because not everyone wants to replace their motherboard and CPU to gain a few graphics features.

My HTPC currently runs a 7870XT, which is a power hog and is definitely overkill for its use-cases (some racing games (Dirt 2) and a few other games that are majestic on a 65" TV with theater-quality surround sound). The RX 460 is the card I've been waiting for to upgrade that machine (save power, reduce noise, not lose much - or any - performance, and updated off-loaded decoding).

xrror - Wednesday, June 15, 2016 - link

7870XT being a salvaged Tahiti part (It's basically what a 7930 would have been) isn't really a power hog for what it is, but I don't think the current drivers really optimize for it anymore since it also only has 2GB instead of 3GB so it's unlike all other Tahiti parts.

Not disagreeing that it might be a bit warm for a compact HTPC, but it wasn't ever really advertised as a power sipper either.

Thorburn - Wednesday, June 15, 2016 - link

No they don't, even Kaby Lake is only 1.4a.

You can do it using DP 1.2 to HDMI 2.0 adapters though.

Srikzquest - Monday, June 20, 2016 - link

This is a rumor but still something to consider... http://www.notebookcheck.net/Intel-Kaby-Lake-will-...