NVIDIA Announces the GeForce GTX 1000 Series: GTX 1080 and GTX 1070 Arrive In May & June

by Ryan Smith on May 7, 2016 3:25 AM EST

GeForce GTX 1080

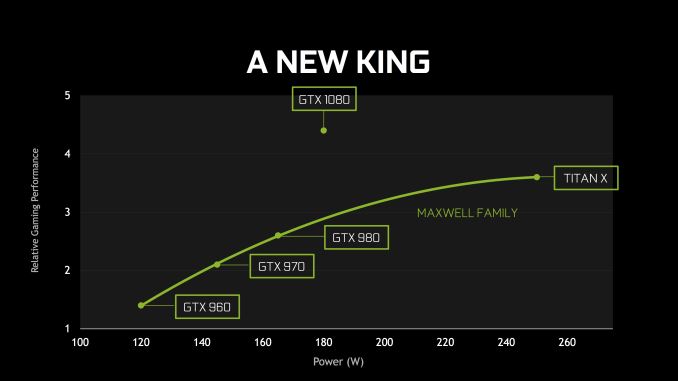

With Pascal/GP104 particulars out of the way, let’s talk about the cards themselves. “The new king,” as NVIDIA affectionately calls it, is the GTX 1080, which will be their new flagship card. NVIDIA is promoting it as having better performance than both GTX 980 SLI and GTX Titan X. NVIDIA’s own performance marketing slides put the average at around 65% faster than GTX 980 and 20-25% faster than GTX Titan X/980 Ti, which is in line with the generational gains of past NVIDIA GPUs. Of course, real-world performance remains to be seen, and will vary from game to game.

NVIDIA GTX x80 Specification Comparison

| | GTX 1080 | GTX 1070 | GTX 980 | GTX 780 |
|---|---|---|---|---|
| CUDA Cores | 2560 | (Fewer) | 2048 | 2304 |
| Texture Units | 160? | (How many?) | 128 | 192 |
| ROPs | 64 | (Good question) | 64 | 48 |
| Core Clock | 1607MHz | (Slower) | 1126MHz | 863MHz |
| Boost Clock | 1733MHz | (Again) | 1216MHz | 900MHz |
| TFLOPs (FMA) | 9 TFLOPs | 6.5 TFLOPs | 5 TFLOPs | 4.1 TFLOPs |
| Memory Clock | 10Gbps GDDR5X | ? GDDR5 | 7Gbps GDDR5 | 6Gbps GDDR5 |
| Memory Bus Width | 256-bit | ? | 256-bit | 384-bit |
| VRAM | 8GB | 8GB | 4GB | 3GB |
| FP64 | ? | ? | 1/32 FP32 | 1/24 FP32 |
| TDP | 180W | ? | 165W | 250W |
| GPU | "GP104" | "GP104" | GM204 | GK110 |
| Transistor Count | 7.2B | 7.2B | 5.2B | 7.1B |
| Manufacturing Process | TSMC 16nm | TSMC 16nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 05/27/2016 | 06/10/2016 | 09/18/2014 | 05/23/2013 |
| Launch Price | MSRP: $599, Founders: $699 | MSRP: $379, Founders: $449 | $549 | $649 |
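The TFLOPs (FMA) row can be reproduced from the core counts and boost clocks, assuming each CUDA core retires one fused multiply-add (counted as two floating-point operations) per clock. A minimal sketch of that arithmetic; the function name `fp32_tflops` is our own illustration, not an NVIDIA formula:

```python
def fp32_tflops(cuda_cores: int, boost_mhz: int) -> float:
    """Peak FP32 TFLOPs, assuming one FMA (2 FLOPs) per CUDA core per clock."""
    flops = cuda_cores * boost_mhz * 1e6 * 2
    return flops / 1e12

# Core counts and boost clocks (MHz) taken from the table above.
cards = {
    "GTX 1080": (2560, 1733),
    "GTX 980": (2048, 1216),
    "GTX 780": (2304, 900),
}

for name, (cores, boost) in cards.items():
    print(f"{name}: {fp32_tflops(cores, boost):.2f} TFLOPs")
```

This gives roughly 8.87 TFLOPs for the GTX 1080, which suggests NVIDIA's "9 TFLOPs" is that figure rounded; the GTX 980 and GTX 780 land at about 4.98 and 4.15 TFLOPs.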

The GTX 1080 will ship with the most powerful GP104 implementation – we don’t yet have confirmation of whether it’s fully enabled – with 2560 of Pascal’s higher-efficiency CUDA cores. And while I’m still awaiting confirmation here as well, I believe it’s a very safe bet that the card will feature 160 texture units and 64 ROPs, given what is known about the architecture.

Along with Pascal’s architectural efficiency gains, the other big contributor to GTX 1080’s performance will come from its high clockspeed. The card will ship with a base clock of 1607MHz and a boost clock of 1733MHz. This is a significant 43% boost in operating frequency over GTX 980, and it will be interesting to hear how much of this comes from the jump to 16nm and how much from specific design optimizations to hit higher clockspeeds. Meanwhile NVIDIA is touting that GTX 1080 will be a solid overclocker, demoing it running at 2114MHz with its reference air cooler in their presentation.
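The 43% figure holds for both the base and boost clocks. A quick check of that arithmetic, plus how far NVIDIA's 2114MHz demo sits above the rated boost clock; `pct_increase` is a hypothetical helper for illustration:

```python
def pct_increase(new: float, old: float) -> float:
    """Percentage by which `new` exceeds `old`."""
    return (new / old - 1) * 100

base_uplift = pct_increase(1607, 1126)     # GTX 1080 vs GTX 980 base clock (MHz)
boost_uplift = pct_increase(1733, 1216)    # GTX 1080 vs GTX 980 boost clock (MHz)
demo_oc_uplift = pct_increase(2114, 1733)  # NVIDIA's 2114MHz demo vs GTX 1080 rated boost

print(f"base +{base_uplift:.1f}%, boost +{boost_uplift:.1f}%, demo OC +{demo_oc_uplift:.1f}%")
```

Base and boost both come out just under 43% (about 42.7% and 42.5%), and the overclocking demo runs roughly 22% above the rated boost clock.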

GTX 1080 will be paired with 8GB of the new GDDR5X memory on a 256-bit memory bus. The shift to GDDR5X allows NVIDIA to run GTX 1080’s memory at 10Gbps/pin, giving the card a total of 320GB/sec of memory bandwidth. Interestingly, this is still a bit less memory bandwidth than GTX 980 Ti (336GB/sec), a reminder that even with GDDR5X, memory bandwidth improvements continue to be outpaced by GPU throughput improvements, so memory bandwidth efficiency is always paramount.
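Peak memory bandwidth is simply the per-pin data rate times the bus width, divided by 8 bits per byte. A minimal sketch; the GTX 980 Ti's 7Gbps GDDR5 on a 384-bit bus is that card's published configuration and is not listed in the table above:

```python
def bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin data rate x number of pins / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8

gtx_1080 = bandwidth_gb_s(10, 256)   # 10Gbps GDDR5X, 256-bit bus
gtx_980_ti = bandwidth_gb_s(7, 384)  # 7Gbps GDDR5, 384-bit bus

print(f"GTX 1080: {gtx_1080:.0f}GB/sec, GTX 980 Ti: {gtx_980_ti:.0f}GB/sec")
```

The wider 384-bit bus is what lets the older card's slower GDDR5 edge out the GTX 1080's faster GDDR5X.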

I am admittedly a bit surprised that GTX 1080’s GDDR5X is only clocked at 10Gbps, and not something faster. Micron’s current chips are rated for up to 12Gbps, and the standard itself is meant to go up to 14Gbps. So I am curious whether this is NVIDIA holding back so that they have some future headroom, whether this is a chip supply issue, or if perhaps GP104 simply can’t do 12Gbps at this time. At the same time, it will be interesting to see whether the fact that NVIDIA can currently source GDDR5X from only a single supplier (Micron) has any impact, as GDDR5 was always multi-sourced. Micron for their part has previously announced that their GDDR5X production line wouldn’t reach volume production until the summer, a potential indicator that GDDR5X supplies will be limited.
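To put the headroom question in numbers, here is what GTX 1080's 256-bit bus would deliver at each data rate mentioned above. This is a what-if sketch only; NVIDIA has announced just the 10Gbps configuration:

```python
BUS_WIDTH_BITS = 256  # GTX 1080's memory bus

# Per-pin data rates mentioned in the text (Gbps).
data_rates = {
    "GTX 1080 as shipped": 10,
    "Micron's current rated maximum": 12,
    "GDDR5X standard's target ceiling": 14,
}

# Peak bandwidth in GB/s = data rate x bus width / 8 bits per byte.
bandwidth = {label: gbps * BUS_WIDTH_BITS / 8 for label, gbps in data_rates.items()}

for label, gb_s in bandwidth.items():
    print(f"{label}: {gb_s:.0f}GB/sec")
```

At 12Gbps the same bus would reach 384GB/sec, comfortably above GTX 980 Ti's 336GB/sec.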

On the power front, NVIDIA has given the GTX 1080 a 180W TDP rating. This is 15W higher than the GTX 980, so the GTX x80 line is drifting back up a bit in TDP, but overall NVIDIA is still trying to keep the GTX x80 lineup as mid-power cards, as this worked well for them with GTX 680/980. Meanwhile, thanks to Pascal and 16nm, this is much lower than GTX 980 Ti’s 250W while delivering higher performance. We’ll look at card design a bit more in a moment, but I do want to note that NVIDIA is using a single 8-pin PCIe power connector for this, as opposed to two 6-pin connectors, something that is becoming increasingly common.

Looking at the design of the card itself, the GTX 1080 retains a lot of the signature style of NVIDIA’s other high-end reference cards. However, after using the same industrial design since the original GTX Titan in 2013, NVIDIA has rolled out a new industrial design for the GTX 1000 series. The new design is far more (tri)angular than the squared-off GTX Titan cooler. Otherwise, limited information is available about this design and whether the change improves cooling/noise in some fashion, or if this is part of NVIDIA’s overall fascination with triangles. One thing that has not changed is size: this is a 10.5” double-wide card, the same as all of the cards that used the previous design.

Industrial design aside, NVIDIA confirmed that the GTX 1080 will come with a vapor chamber cooler; GTX 980 did not use one, as NVIDIA didn’t believe it was necessary on a 165W card. NVIDIA’s overclocking promises for this card likely have something to do with the change, as a vapor chamber should prove very capable on a 180W card.

Meanwhile, it looks like the DVI port will live to see another day. Other than upgrading the underlying display controller to support the newer iterations of the DisplayPort standard, NVIDIA has not changed the actual port configuration since GTX 980 Ti. So we’re looking at 3 DisplayPorts, 1 HDMI port, and 1 DL-DVI-D port. This does mean that built-in analog (VGA) capabilities are dead, though, as NVIDIA has switched from DVI-I to the pure-digital DVI-D.

As mentioned earlier, the GTX 1080’s power input has evolved a bit over GTX 980. Rather than two 6-pin connectors, it’s now a single 8-pin connector feeding the 180W card. This is also the first card to feature NVIDIA’s SLI HB connectors, which will require new SLI bridges. Though at this point our concerns about the long-term suitability of AFR stand.
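The switch from two 6-pin connectors to one 8-pin does not change the in-spec power budget. A minimal sketch, assuming the commonly cited PCI Express limits of 75W from the x16 slot, 75W per 6-pin and 150W per 8-pin connector (these figures are not from NVIDIA’s announcement, and the card’s real power limit is set by its firmware); `board_power_budget` is a hypothetical helper:

```python
# Commonly cited PCI Express power limits, in watts (assumed, not from NVIDIA).
PCIE_SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150

def board_power_budget(six_pins: int = 0, eight_pins: int = 0) -> int:
    """Maximum in-spec board power: slot power plus auxiliary connectors."""
    return PCIE_SLOT_W + six_pins * SIX_PIN_W + eight_pins * EIGHT_PIN_W

single_8pin = board_power_budget(eight_pins=1)  # GTX 1080's arrangement
dual_6pin = board_power_budget(six_pins=2)      # GTX 980's arrangement
headroom = single_8pin - 180                    # budget above GTX 1080's 180W TDP

print(single_8pin, dual_6pin, headroom)
```

Both layouts top out at 225W, leaving roughly 45W above the 180W TDP under these assumptions.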

For pricing and availability, NVIDIA has announced that the card will be available on May 27th. There will be two versions of the card: the base/MSRP card at $599, and a more expensive Founders Edition card at $699. At the base level this is a slight price increase over the GTX 980, which launched at $549. Information on the differences between these versions is limited, but based on NVIDIA’s press release it would appear that only the Founders Edition card will ship with NVIDIA’s full reference design, cooler and all, while the base cards will feature custom designs from NVIDIA’s partners. NVIDIA’s press release was also very careful to attach the May 27th launch date only to the Founders Edition cards.

Consequently, at this point it’s unclear whether the $599 card will be available on the 27th. In previous generations all of the initial launch cards were full reference cards, and if that is the case here then all of the cards on launch day will be $699 cards; we are looking to get confirmation of this ASAP. Otherwise, I expect that the base cards will forgo the vapor chamber cooler and embrace the dual/triple fan open air coolers that most of NVIDIA’s partners use. With any luck these cards will use the reference PCB, at least for the early runs.

As a final observation, if the new NVIDIA reference design and cooler will only be available with the Founders Edition card, then customers who prefer the NVIDIA reference card will be seeing a greater de facto price increase. In that case we’re looking at $699 versus $549 for a launch-window reference GTX 980.
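The size of the two possible price increases over the GTX 980’s $549 launch price, as a quick sketch; `launch_premium` is a hypothetical helper for illustration:

```python
def launch_premium(price: int, baseline: int) -> tuple[int, float]:
    """Dollar and percentage increase of `price` over `baseline`."""
    delta = price - baseline
    return delta, delta / baseline * 100

GTX_980_LAUNCH_PRICE = 549  # reference GTX 980 at launch

for label, price in (("GTX 1080 MSRP", 599), ("GTX 1080 Founders Edition", 699)):
    delta, pct = launch_premium(price, GTX_980_LAUNCH_PRICE)
    print(f"{label}: +${delta} (+{pct:.1f}%) vs. GTX 980")
```

The base card is about 9% more than the GTX 980 was at launch; for buyers who want the reference design, the premium is closer to 27%.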

GTX 1070

NVIDIA GTX x70 Specification Comparison

| | GTX 1070 | GTX 970 | GTX 770 | GTX 670 |
|---|---|---|---|---|
| CUDA Cores | (Fewer than GTX 1080) | 1664 | 1536 | 1344 |
| Texture Units | (How many?) | 104 | 128 | 112 |
| ROPs | (Good question) | 56 | 32 | 32 |
| Core Clock | (Slower) | 1050MHz | 1046MHz | 915MHz |
| Boost Clock | (Again) | 1178MHz | 1085MHz | 980MHz |
| TFLOPs (FMA) | 6.5 TFLOPs | 3.9 TFLOPs | 3.3 TFLOPs | 2.6 TFLOPs |
| Memory Clock | ? GDDR5 | 7Gbps GDDR5 | 7Gbps GDDR5 | 6Gbps GDDR5 |
| Memory Bus Width | ? | 256-bit | 256-bit | 256-bit |
| VRAM | 8GB | 4GB | 2GB | 2GB |
| FP64 | ? | 1/32 FP32 | 1/24 FP32 | 1/24 FP32 |
| TDP | ? | 145W | 230W | 170W |
| GPU | "GP104" | GM204 | GK104 | GK104 |
| Transistor Count | 7.2B | 5.2B | 3.5B | 3.5B |
| Manufacturing Process | TSMC 16nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 06/10/2016 | 09/18/2014 | 05/30/2013 | 05/10/2012 |
| Launch Price | MSRP: $379, Founders: $449 | $329 | $399 | $399 |

Finally, below the GTX 1080 we have its cheaper sibling, the GTX 1070. Information on this card is more limited. We know it’s rated for 6.5 TFLOPs, 2.5 TFLOPs (28%) slower than GTX 1080, but NVIDIA has not published specific CUDA core counts or GPU clockspeeds. Looking just at rated TFLOPs, at 72% of the rated performance of the GTX 1080, the gap between the GTX 1070 and GTX 1080 is a bit wider than it was for the GTX 900 series, where the GTX 970 was rated for 79% of the GTX 980’s performance.
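The tier-gap comparison as arithmetic. For the GTX 1070 only NVIDIA’s rated TFLOPs are available; for the GTX 970/980 pair this sketch computes throughput from published core counts and boost clocks, assuming one FMA (2 FLOPs) per CUDA core per clock. `fp32_tflops` is a hypothetical helper:

```python
def fp32_tflops(cuda_cores: int, boost_mhz: int) -> float:
    """Peak FP32 TFLOPs assuming one FMA (2 FLOPs) per CUDA core per clock."""
    return cuda_cores * boost_mhz * 2 / 1e6

# GTX 1070 vs GTX 1080: NVIDIA's rated figures (no core counts or clocks published yet).
ratio_1070 = 6.5 / 9.0
# GTX 970 vs GTX 980: computed from core counts and boost clocks.
ratio_970 = fp32_tflops(1664, 1178) / fp32_tflops(2048, 1216)

print(f"GTX 1070 = {ratio_1070:.0%} of GTX 1080 ({1 - ratio_1070:.0%} slower)")
print(f"GTX 970 = {ratio_970:.0%} of GTX 980")
```

Using the unrounded GTX 970/980 figures matters here: the table’s rounded 3.9 and 5 TFLOPs would give 78% rather than 79%.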

On the memory front, the card will be paired with the more common GDDR5. Like the GTX 1080 there’s 8GB of VRAM, but specific clockspeeds are unknown at this time. Also unknown is the card’s TDP, though it is safe to assume it will be lower than GTX 1080’s.

Like GTX 1080, GTX 1070 will be offered in a base/MSRP version and a Founders Edition version. These will be $379 and $449 respectively – $50 over the GTX 970’s launch price of $329 for the base card – with the Founders Edition card employing the new NVIDIA industrial design. I’ll also quickly note that it remains to be seen whether reusing the industrial design means reusing GTX 1080’s vapor chamber as well, or if NVIDIA will swap out the cooling apparatus under the hood.

The GTX 1070 will be the later of the two new Pascal cards, hitting the streets on June 10th. As with the GTX 1080, NVIDIA’s press release is very careful to attach that date only to the Founders Edition version, so we’re still waiting on confirmation of whether the base card will be available on the 10th as well.

234 Comments


Kutark - Thursday, May 12, 2016

Oh The Time™ has definitely come. Even a 1070 is going to be a light years improvement over a 580.

BrokenCrayons - Monday, May 9, 2016

It's an interesting announcement, but in some ways rather disappointing too. The 1080 is suffering from wattage creep as it now requires more power than the card it was meant to replace. Yes, there's more performance too, but I was hoping that the focus would be on getting power consumption and heat output under control with this generation as opposed to greater performance which is probably being driven by the soon-to-flop VR fad. This is yet another GPU in a long line of Hair Dryer FXs that started with the 5800 and that silly fairy girl marketing run.

It'll unfortunately probably be at least a year until the GT 730 in my headless Steam streaming box gets a replacement unless AMD can come through with a 16nm card that meets my requirement. I'm in no hurry to replace my desktop GPU, but when I do, I would much prefer a sub-30 watt half-height card over one of these silly showboat toys.

jzkarap - Monday, May 9, 2016

"soon-to-flop VR fad" will be tasty crow in a few years.

dragonsqrrl - Monday, May 9, 2016

I'm curious about your perception of 'wattage creep'. The only way I could see this having any validity is if your only point of comparison is the 980. 180W TDP is not at all unusual for a x04 GPU. The 560Ti had a 170W TDP, and the 680 195W. The point of improved efficiency isn't necessarily to reduce power consumption at a given GPU tier, it's to improve performance at a given TDP. That's how generational performance scaling works in modern GPU architectures. So I'm not sure what you were expecting since the 1080 achieves about 2x the perf/W of the 980Ti. And your comparison to the 5800 falls so far outside the realm of objectivity and informed reality that I'm not even sure how to address it.

In any case the 1080 clearly isn't targeted at you or your typical workload, so why do you care? In fact its most relevant attribute is its significantly improved efficiency, which you should be excited about given the implications for low-end cards based on Pascal. Are you suggesting that merely the existence of this type of card somehow poses a threat to you or your graphics needs?

AnnonymousCoward - Monday, May 9, 2016

Very well said. And this guy wants <30W! He might as well stick to iGPU.

BrokenCrayons - Tuesday, May 10, 2016

With regards to wattage creep, I only compared the new GPU to the current generation card which NV is using as a basis of comparison in its presentation materials. Prior generations aren't really a concern, but if they are taken into account the situation becomes progressively more unforgivable as we iterate backwards toward the Riva 128 as power requirements are significantly lower despite the less efficient design of earlier graphics cards.

I also don't think it's fair to take into account a claim of 2x the performance per watt until after the 1080 and 1070 benchmarks are released that give us a better idea of the actual performance versus the marketing claims as those so rarely align. However, if I were to entertain that claim as accurate, I'd find the situation even more abhorrent since NV failed to take the time to use the gains in efficiency to do away with external power connectors and dual slot cooling, both of which go against the grain of the progressively more mobile and power efficient world of computing in which we're living now. It's as if they decided the best solution to the problem of graphics was "make it bigger and more powerful!" That sort of approach doesn't actually impress people who aren't resolution junkies, but it's NV's business to produce niche cards for a shrinking market in order to obtain the halo effect of claiming the elusive performance crown so people buy their lower tier offerings as a consequence of the competition between graphics hardware unrelated to their current purchase.

I do suggest and maintain that the existence of high wattage, large graphics cards continues to threaten the concept of efficiency. There's only so far down that any given design can scale efficiently. By failing to focus exclusively on efficient designs, NV is leaving unrealized performance on the table. Once again, that's their concern and not mine. However, I would prefer they announce and release their low end cards first, bringing them to the forefront of the media hype they're attempting to build because those parts are the ones that people ultimately end up purchasing in large numbers as indicated by statistically significant collection mechanisms like the Steam survey.

BiggieShady - Wednesday, May 11, 2016

Also existence of croissants is threatening the concept of muffins, and existence of space shuttle is threatening the concept of a bicycle.

dragonsqrrl - Thursday, May 12, 2016

The problem is modern performance scaling, particularly with GPUs, works differently than it did 1 to 2 decades ago. Now efficiency is essentially the same as performance because we've hit the TDP ceiling for every computing form factor including desktop/server (around 250-300W single GPU). As you mentioned this wasn't the case back when Riva 128 launched. The difference is chip makers can no longer rely on increasing TDP to help scale performance from one gen to the next like they could back then. For Nvidia this shift gained a lot of momentum with Kepler. So while not everything going back to the beginnings of desktop GPUs is relevant to modern performance scaling, I would argue that everything since Fermi is. This is further evidenced by the relative consistency in die sizes, naming convention, and TDPs for every GPU lineup since Fermi. The main difference from one gen to the next is performance. Fortunately it's pretty clear that your definition of 'wattage creep' died with Fermi. It's no more relevant to modern performance scaling than your reference to Riva 128 as proof of continued wattage creep.

I just find it difficult to believe that any informed person familiar with the trends and progression of the industry over the past two decades would now expect Nvidia to limit TDPs to 75W, and then feel threatened when they, in overwhelmingly predictable fashion, didn't do that. I mean, what's the basis for this expectation? When have they ever launched an ultra low-end card first, or imposed such ridiculous TDP constraints on themselves relative to the norms of the time? Why does the existence of 'high' TDP cards "threaten the concept of efficiency" when TDP has no bearing over efficiency?

And it's strange that you mention steam survey in defense of your position, when the 730 isn't nearly as popular as cards like the 970, or 960. There's definitely a sweet spot, but the 730 (and other cards like it) fall far below that threshold.

BrokenCrayons - Friday, May 13, 2016

I guess I'll try once more since you don't seem to understand what I'm talking about with respect to wattage creep. I've cut out the non-relevant parts of the discussion so it'll make more sense. This pretty much encapsulates what I meant: "With regards to wattage creep, I only compared the new GPU to the current generation card which NV is using as a basis of comparison in its presentation materials." I probably should have left out the historic details since you're getting awfully hung up on the Riva 128 and don't seem to acknowledge there were a few other graphics cards that were produced between it and the Fermi generation. I'm guessing that generation is probably when you became more familiar with the technology so it might make sense that the artificial cut-off in acknowledging graphics card power requirements would begin there. So, for the sake of your own comprehension, the wattage creep thing that you're stuck on is the increase between the 980 -> 1080 and nothing more. We probably shouldn't look at anything earlier than the past couple of years since that appears to be really confusing.

To address your second point of confusion, my desire is to see the release of lower end graphics cards, starting from the bottom and working upward to the top. I'm not surprised by the approach NV has taken, but that doesn't stop me from wishing the world were different and that the people in it were less easily taken in by business marketing. I realize that very few people are ultimately endowed with the ability to analyze the bigger picture of the world in which they live without losing sight of the nuances as well, but I'm eternally optimistic that a few people are capable of doing so and just need the right sort of nudge to get there.

In fact, your third discussion point, the Steam Survey, is a pretty good example of missing the fact that a forest exists because of all those trees that are getting in the way of seeing it. The 730's percentages aren't notable in relationship to the 9xx cards. In fact, what's more noteworthy is the percentage of Intel graphics cards. Intel's percentage alone ought to make it obvious that the bottom rungs of the GPU performance ladder are vitally important. Combine those with the lower end of the NV and AMD product stacks and it paints that forest picture I was just talking about, wherein low end graphics adapters are an unquestionably dominant force. Beyond the Steam survey are the sales numbers by cost categories that have demonstrated for years that lower end computers with lower end hardware sell in large numbers. Though I'm probably pointing out too many individual trees at this point, I'll also throw in the mobile devices out there (smartphones and tablets), which demonstrate how huge the entertainment market is on power efficient hardware, as the number of copies of casual games sold demonstrates the dominance of gaming on comparatively weak graphics hardware.

dragonsqrrl - Friday, May 13, 2016

Argument creep?

"However, if I were to entertain that claim as accurate, I'd find the situation even more abhorrent since NV failed to take the time to use the gains in efficiency to do away with external power connectors and dual slot cooling, both of which go against the grain of the progressively more mobile and power efficient world of computing in which we're living now."

Your own words. So what you're trying to say now is that I've misunderstood. You weren't trying to say that it's abhorrent of Nvidia to not have killed off external power connectors this generation, you simply wished Nvidia would focus more on low-end cards. I love how you're now trying to paint yourself as some sort of enlightened open minded intellectual, but unfortunately your previous 2 comments aren't going anywhere.

"I probably should have left out the historic details since you're getting awfully hung up on the Riva 128 and don't seem to acknowledge there were a few other graphics cards that were produced between it and the Fermi generation."

... that's exactly what I acknowledged. In fact failing to acknowledge the rationale for the inflation of TDP between the two was exactly the part of your previous comment I tried to address. I tried to explain that the 'wattage creep' you're thinking about, in referencing the inflation since Riva 128, and that led to a card like the 5800, is very different from the increase in TDP between the 980 and 1080. The difference now is efficiency is driving the performance scaling.

"So, for the sake of your own comprehension, the wattage creep thing that you're stuck on is the increase between the 980 -> 1080 and nothing more."

... so I think it's misinformed and misleading to simply refer to that as 'wattage creep' while referencing the 5800 and Riva as examples.

"I realize that very few people are ultimately endowed with the ability to analyze the bigger picture of the world in which they live without losing sight of the nuances as well, but I'm eternally optimistic that a few people are capable of doing so and just need the right sort of nudge to get there."

Isn't this criticism just a little hypocritical given your hard line position? You point out the inability of others to "analyze the bigger picture", but at the same time you can't seem to fathom the value of higher TDP cards, not just for gamers, but for researchers, developers, and content creators. It's much more than just the objectively unnuanced picture you're portraying of ignorant "resolution junkies" being taken in by marketing and "silly showboat toys". Again, you say that higher TDP cards "threaten the concept of efficiency", which is ironic since the 1080 is the most efficient card ever, and TDP has nothing to do with efficiency.

"The 730's percentages aren't notable in relationship to the 9xx cards. In fact, what's more noteworthy is the percentage of Intel graphics cards."

How is that noteworthy in the context of your argument? You discussed the popularity of ultra low-end discrete cards to help bolster your position, did you not? How do iGPUs that come with most Intel processors by default support that?

"Combining those with the lower end of the NV and AMD product stacks and it paints that forest picture I was just talking about wherein low end graphics adapters are an unquestionably dominant force."

True, but I doubt ultra low-end, like you've been promoting, would make for quite as compelling an argument. Hopefully your position hasn't migrated too much here either. You did say, "I would much prefer a sub-30 watt half-height card over one of these silly showboat toys". I could also add up cards like the 970, 960, 750Ti, 760, 660, etc. and make a pretty compelling argument for the popularity of higher-end cards.