The Apple iPad Pro Review

by Ryan Smith, Joshua Ho & Brandon Chester on January 22, 2016 8:10 AM EST

SoC Analysis: On x86 vs ARMv8

Before we get to the benchmarks, I want to spend a bit of time talking about the impact of CPU architectures at a moderate level of technical depth. At a high level, there are a number of peripheral issues when it comes to comparing these two SoCs, such as the quality of their fixed-function blocks. But when you look at what consumes the vast majority of the power, it turns out that the CPU is competing with things like the modem/RF front-end and GPU.

x86-64 ISA registers

Probably the easiest place to start when we’re comparing things like Skylake and Twister is the ISA (instruction set architecture). This subject alone is probably worthy of an article, but the short version for those who aren't really familiar with this topic is that an ISA defines how a processor should behave in response to certain instructions, and how these instructions should be encoded. For example, if you were to add together two integers held in the EAX and EDX registers, x86-32 dictates that this would be encoded as 01d0 in hexadecimal. In response to this instruction, the CPU would add whatever value was in the EDX register to the value in the EAX register and leave the result in the EAX register.
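To make the encoding concrete, here is a minimal Python sketch that decodes just this one two-byte form. It assumes the standard x86 layout in which opcode 0x01 is ADD r/m32, r32 and the following ModRM byte splits into a 2-bit mode, a 3-bit source register and a 3-bit destination field. The function name and the restriction to register-to-register operands are choices made for this illustration, not part of any real disassembler.

```python
# Register numbering used by the ModRM byte for 32-bit operands.
REG32 = ["eax", "ecx", "edx", "ebx", "esp", "ebp", "esi", "edi"]


def decode_add_rm32_r32(code: bytes) -> str:
    """Decode the register-to-register form of opcode 0x01 (ADD r/m32, r32)."""
    opcode, modrm = code[0], code[1]
    if opcode != 0x01:
        raise ValueError("only opcode 0x01 is handled in this sketch")
    mod = modrm >> 6             # top two bits: addressing mode
    reg = (modrm >> 3) & 0b111   # middle three bits: source register
    rm = modrm & 0b111           # low three bits: destination when mod == 3
    if mod != 0b11:
        raise ValueError("memory operands are not handled in this sketch")
    # Intel syntax lists the destination first.
    return f"add {REG32[rm]}, {REG32[reg]}"


print(decode_add_rm32_r32(bytes.fromhex("01d0")))  # add eax, edx
```

The byte 0xD0 is 11 010 000 in binary: mode 3 (register operands), source register 2 (EDX) and destination register 0 (EAX), which is why the sum ends up in EAX.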

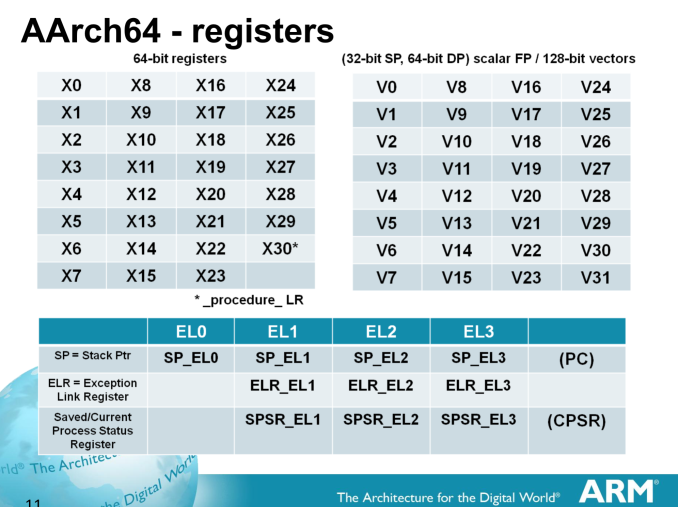

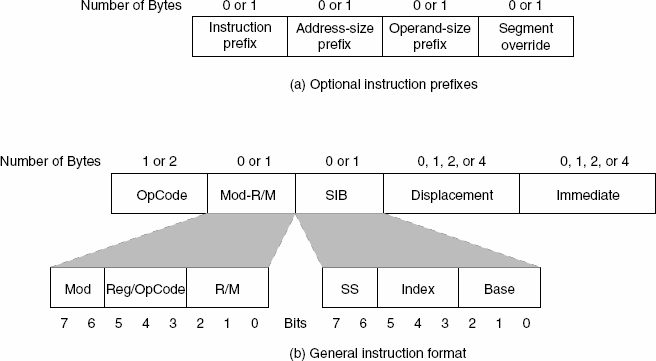

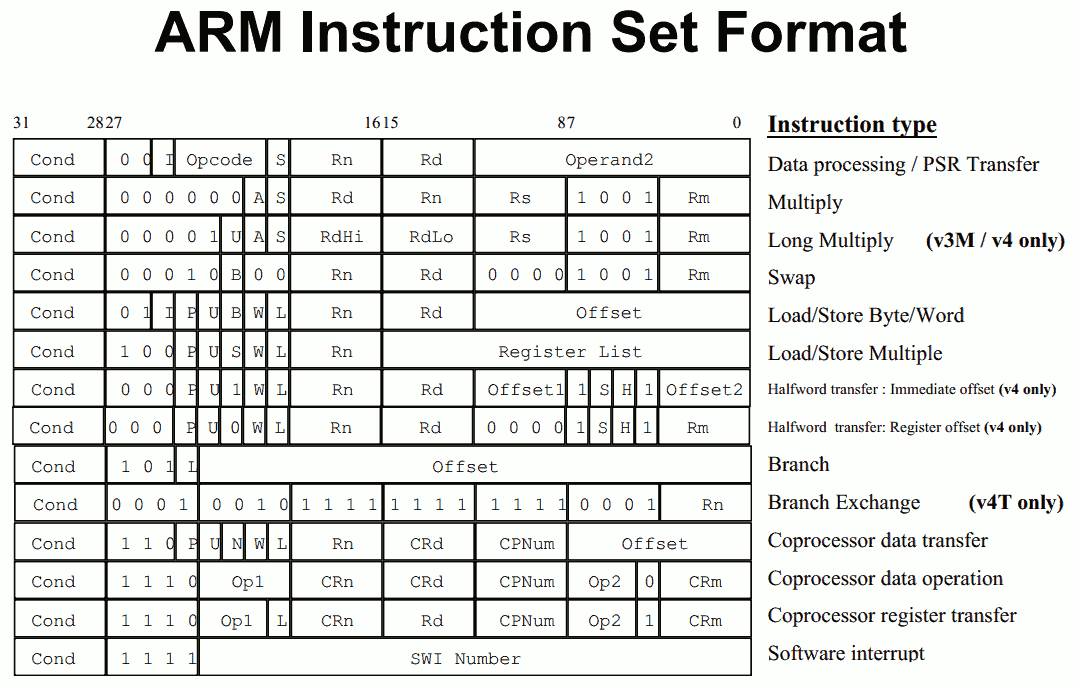

The fundamental difference between x86 and ARM is that x86 is a relatively complex ISA, while ARM is relatively simple by comparison. One key difference is that ARM dictates that every instruction is a fixed number of bits. In the case of ARMv8-A and ARMv7-A, all instructions are 32 bits long unless you're in Thumb mode, in which case instructions are 16 bits long, but the same sort of trade-offs that come from a fixed-length instruction encoding still apply. Thumb-2 mixes 16-bit and 32-bit instructions, which makes it a variable-length encoding, so in some sense it shares the trade-offs of one. It’s important to make a distinction between instructions and data here, because even though AArch64 uses 32-bit instructions the register width is 64 bits, which is what determines things like how much memory can be addressed and the range of values that a single register can hold. By comparison, Intel’s x86 ISA has variable-length instructions. In both x86-32 and x86-64/AMD64, each instruction can range anywhere from 8 to 120 bits long depending upon how the instruction is encoded.
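The distinction between instruction width and register width can be checked with a few lines of arithmetic. This is only an illustrative Python sketch; the constant names are made up for the example, and real chips typically implement fewer address bits than the full register width allows.

```python
REGISTER_BITS = 64       # AArch64 general-purpose register width
INSTRUCTION_BITS = 32    # AArch64 instruction width

# Largest unsigned value a single 64-bit register can hold.
max_unsigned = 2**REGISTER_BITS - 1
# Upper bound on byte-addressable memory with a 64-bit address.
addressable_bytes = 2**REGISTER_BITS
# Bytes fetched per instruction, regardless of register width.
instruction_bytes = INSTRUCTION_BITS // 8

# x86 instruction lengths quoted in the text, converted from bits to bytes.
x86_min_bytes, x86_max_bytes = 8 // 8, 120 // 8

print(max_unsigned)                       # 18446744073709551615
print(addressable_bytes // 2**60, "EiB")  # 16 EiB
print(instruction_bytes)                  # 4
print(x86_min_bytes, x86_max_bytes)       # 1 15
```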

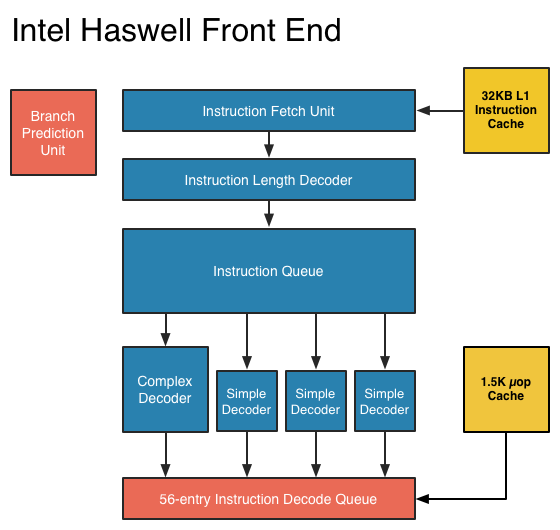

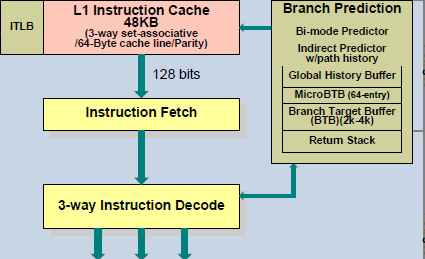

At this point, it might be evident that on the implementation side of things, a decoder for x86 instructions is going to be more complex. For a CPU implementing the ARM ISA, because the instructions are of a fixed length the decoder simply reads instructions 2 or 4 bytes at a time. On the other hand, a CPU implementing the x86 ISA would have to determine how many bytes to pull in at a time for an instruction based upon the preceding bytes.
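The difference in decoder work can be sketched in Python. With a fixed width, every instruction boundary is known up front; with a variable-length encoding, each boundary depends on the bytes before it. The variable-length format below is a toy invented for this example (the low four bits of each instruction's first byte give its total length) and is not the real x86 encoding, which is considerably more involved.

```python
def fixed_length_boundaries(code: bytes, width: int = 4) -> list[int]:
    """Every boundary is a multiple of the width; no bytes need to be inspected."""
    return list(range(0, len(code), width))


def variable_length_boundaries(code: bytes) -> list[int]:
    """Toy encoding (NOT x86): low 4 bits of the first byte give the length."""
    offsets = []
    pos = 0
    while pos < len(code):
        offsets.append(pos)
        length = code[pos] & 0x0F
        if length == 0:
            raise ValueError("invalid length in toy encoding")
        # The next boundary is only known after this byte has been examined.
        pos += length
    return offsets


print(fixed_length_boundaries(bytes(16)))  # [0, 4, 8, 12]
print(variable_length_boundaries(bytes([0x02, 0xAA, 0x01, 0x03, 0xBB, 0xCC])))  # [0, 2, 3]
```

The first function could find all boundaries in parallel, while the second has to walk the stream serially, which is the extra complexity a variable-length decoder has to hide.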

A57 Front-End Decode, Note the lack of uop cache

While it might sound like the x86 ISA is just clearly at a disadvantage here, it’s important to avoid oversimplifying the problem. Although the decoder of an ARM CPU already knows how many bytes it needs to pull in at a time, this inherently means that unless all 2 or 4 bytes of the instruction are used, each instruction contains wasted bits. While it may not seem like a big deal to “waste” a byte here and there, this can actually become a significant bottleneck in how quickly instructions can get from the L1 instruction cache to the front-end instruction decoder of the CPU. The major issue here is that due to RC delay in the metal wire interconnects of a chip, increasing the size of an instruction cache inherently increases the number of cycles that it takes for an instruction to get from the L1 cache to the instruction decoder on the CPU. If a cache doesn’t have the instruction that you need, it could take hundreds of cycles for it to arrive from main memory.

Of course, there are other issues worth considering. For example, in the case of x86, the instructions themselves can be incredibly complex. One of the simplest examples is certain forms of the add instruction, where either the source or the destination can be in memory, although not both. An example of this might be addq (%rax,%rbx,2), %rdx, which could take 5 CPU cycles to complete in something like Skylake. Of course, pipelining and other tricks can make the throughput of such instructions much higher, but that's another topic that can't be properly addressed within the scope of this article.

By comparison, the ARM ISA has no direct equivalent to this instruction. Looking at our example of an add instruction, ARM would require a load instruction before the add instruction. This has two notable implications. The first is that this once again is an advantage for an x86 CPU in terms of instruction density because fewer bits are needed to express a single instruction. The second is that for a “pure” CISC CPU you now have a barrier for a number of performance and power optimizations as any instruction dependent upon the result from the current instruction wouldn’t be able to be pipelined or executed in parallel.
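As a rough Python model of that difference, the first function below performs the address calculation, the memory read and the addition as one operation, the way addq (%rax,%rbx,2), %rdx does, while the second splits the work into an explicit load followed by a register-only add. The dictionaries standing in for registers and memory, the function names and the "tmp" scratch register are all assumptions of this sketch; on a real load/store machine the address calculation may also need its own instruction.

```python
MASK64 = (1 << 64) - 1  # registers are 64 bits wide, so results wrap


def add_with_memory_operand(regs: dict, mem: dict) -> None:
    """Model of `addq (%rax,%rbx,2), %rdx` as a single instruction."""
    address = regs["rax"] + regs["rbx"] * 2  # base + index * scale
    regs["rdx"] = (regs["rdx"] + mem[address]) & MASK64


def load_then_add(regs: dict, mem: dict) -> None:
    """Load/store style: memory is touched only by the explicit load."""
    # Step 1: load the memory value into a scratch register.
    regs["tmp"] = mem[regs["rax"] + regs["rbx"] * 2]
    # Step 2: add two registers; no memory access happens here.
    regs["rdx"] = (regs["rdx"] + regs["tmp"]) & MASK64


def demo() -> tuple[int, int]:
    mem = {0x1008: 37}
    a = {"rax": 0x1000, "rbx": 4, "rdx": 5}
    b = dict(a)
    add_with_memory_operand(a, mem)
    load_then_add(b, mem)
    return a["rdx"], b["rdx"]


print(demo())  # (42, 42)
```

Both paths produce the same result; the load/store version simply needs more instructions to say it, which is the instruction density trade-off described above.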

The final issue here is that x86 just has an enormous number of instructions that have to be supported due to backwards compatibility. Part of the reason why x86 became so dominant in the market was that code compiled for the original Intel 8086 would work with any future x86 CPU, but the original 8086 didn’t even have memory protection. As a result, all x86 CPUs made today still have to start in real mode and support the original 16-bit registers and instructions, in addition to 32-bit and 64-bit registers and instructions. Of course, running a program in 8086 mode is a non-trivial task, but even in the x86-64 ISA it isn't unusual to see instructions that are identical to the x86-32 equivalent. By comparison, ARMv8 is designed such that you can only switch between AArch64 code and ARMv7-style AArch32 code across exception boundaries, so in practice programs are only going to run one type of code or the other.

From the 1980s into the 1990s, this became one of the major reasons why RISC was rapidly becoming dominant, as CISC ISAs like x86 ended up creating CPUs that generally used more power and die area for the same performance. However, today ISA is basically irrelevant to the discussion due to a number of factors. The first is that beginning with the Intel Pentium Pro and AMD K5, x86 CPUs were really RISC CPU cores with microcode or some other logic to translate x86 CPU instructions to the internal RISC CPU instructions. The second is that decoding of these instructions has been increasingly optimized around only a few instructions that are commonly used by compilers, which makes the x86 ISA practically less complex than what the standard might suggest. The final change here has been that ARM and other RISC ISAs have gotten increasingly complex as well, as it became necessary to enable instructions that support floating point math, SIMD operations, CPU virtualization, and cryptography. As a result, the RISC/CISC distinction is mostly irrelevant when it comes to discussions of power efficiency and performance, as microarchitecture is really the main factor at play now.

408 Comments

ddriver - Friday, January 22, 2016 - link

Should have named it iPad XL or something, this device will barely suit the need of any professional. Performance is good, but without supporting professional software, the hardware is useless.

Coztomba - Friday, January 22, 2016 - link

And why would anyone bother to make professional software if the hardware wasn't capable? They needed a starting off point to say "Hey we can produce the hardware to run pro apps on an iPad. It's only going to get better. Start developing!"

ddriver - Friday, January 22, 2016 - link

If anyone could stimulate software companies to do that, I guess that would be Apple with its mountains of money and strong sales. They do have enough resources to do the software themselves.

Mobile device hardware has been capable of professional workloads for at least 2-3 years. Nobody bothered to do it. Big software companies did not port their applications to ARM, instead they made cheap, crippled lesser versions. This is IMO a stupid move, they probably did this to promote their professional software to common folk, but it would have been more lucrative to bring professional software to mobile platforms.

There are 2 main issues with mobile platforms - memory and CPU performance. Modern software is very bloated memory consumption wise, especially software relying on managed languages, the latter are also significantly slower in terms of performance than languages like C or C++.

There is one big issue with legacy professional software - it originates back from the days developers were locked in platform specific application development APIs, so it represents a significant effort to port them to mobile platforms - essentially, most of the stuff needs to be rewritten.

But a rewrite in faster and more efficient language, taking advantage of contemporary technology such as OpenCL can easily bring professional software to mobile platforms at an experience as good as that of desktop workstations. Naturally, more efficiently written software will also run that much better on powerful desktop machines as well.

mr_tawan - Friday, January 22, 2016 - link

Not all pros are in the multimedia industry, you know :-).

For most office workers, for instance, the only things they might need are notetaking (onenote), email (outlook), wordprocessor (word), and calendar (onenote). Almost all tablets are capable of all of that, but they are a bit awkward to work with (due to the missing stylus, and not-so-comfy keyboard).

The iPad Pro, well, addresses this issue in the same way as the Surface Pro, by adding a keyboard and stylus to the tablet. It's much easier to use the tablet extensively (rather than just browsing the web and watching video, which can hardly be described as a professional job). So I personally think that adding these two options could take the iPad into the 'professional' realm.

I think that Apple would love to have the iPad complement the MacBook (and Mac Pro), rather than compete with it. If you need more power then just buy a Mac Pro :-).

ddriver - Friday, January 22, 2016 - link

Yeah, why use one device when you can buy and lug around two devices instead.

melgross - Friday, January 22, 2016 - link

There's actually quite a lot of professional software available on iOS, and has been for some time. I suppose if you don't use iOS, and so don't know what's available, you can say that little is available, but it's simply not true. Microsoft itself had about two dozen professional apps on iOS. You really need to look through the App Store.

ddriver - Friday, January 22, 2016 - link

What would those 24 ("two dozen") professional microsoft apps be?

Dave Bothell - Saturday, January 23, 2016 - link

Word, Excel, PowerPoint, Outlook, OWA for iPad, Sunrise Calendar, OneDrive, OneDrive for Business, OneNote, SmartGlass, Skype, Bing for iPad, Remote Desktop, Lync, Office 365 Admin, Intune, Azure Authenticator, Sway, SharePoint Newsfeed, Dynamics CRM, Dynamics Business Analyzer, Dynamics Time Management, PowerApps, Global Startup Directory. There's more, but you asked for 24.

xerandin - Saturday, January 23, 2016 - link

Smartglass isn't a professional app--it controls Xbox 360s or Xbox Ones, depending on which version of the app you install.

dsraa - Sunday, January 24, 2016 - link

you forgot bing.....bing isnt a professional app either.