Ask the Experts: ARM's Cortex A53 Lead Architect, Peter Greenhalgh

by Anand Lal Shimpi on December 10, 2013 9:00 AM EST. Posted in: Ask the Experts

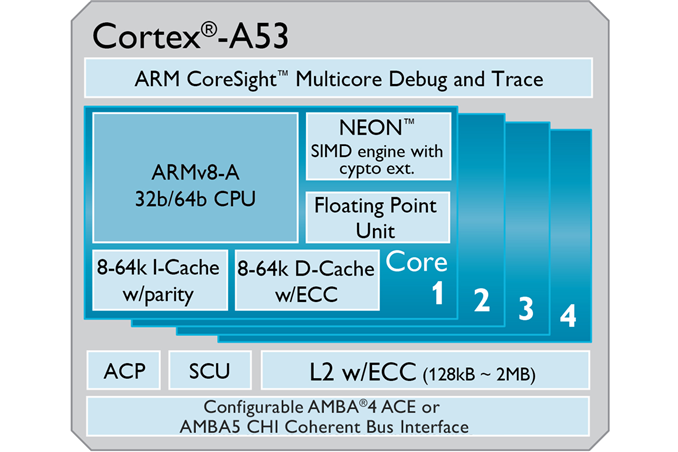

Given yesterday's announcement of the Cortex A53-based Snapdragon 410, our latest Ask the Experts installment couldn't be better timed. Peter Greenhalgh, lead architect of the Cortex A53, has agreed to spend some time with us and answer any burning questions you might have about ARM, directly.

Peter has worked in ARM's processor division for 13 years and worked on the Cortex R4, Cortex A8 and Cortex A5 (as well as the ARM1176JZF-S and ARM1136JF-S). He was lead architect of the Cortex A7 and ARM's big.LITTLE technology as well.

Later this month I'll be doing a live discussion with Peter via Google Hangouts, but you guys get first crack at him. If you have any questions about Cortex A7, Cortex A53, big.LITTLE or pretty much anything else ARM related, fire away in the comments below. Peter will be answering your questions personally over the next week.

Please help make Peter feel at home here on AnandTech by impressing him with your questions. Do a good job here and I might be able to even convince him to give away some ARM powered goodies...

158 Comments


HalloweenJack - Tuesday, December 10, 2013 - link

Will the A53 finally be `the big` breakthrough in the data server market you want? Google, Apple and Facebook have all been testing ARM servers for a while now and good `noises` are heard about them. Also, will the new tie-in with AMD reap its rewards sooner rather than later?

Samus - Tuesday, December 10, 2013 - link

It's going to need more than 2MB cache per core to compete in the enterprise class because it's safe to say the branch prediction and cache hit rates will trail Intel.

ddriver - Tuesday, December 10, 2013 - link

Cache does not matter for data servers: however much of it you have, it is never enough to hold a significant share of the data being served, so it cannot make a substantial difference. Data servers need fast storage and plenty of RAM for caching frequently accessed stuff.

Cache serves computation purposes, when you need to put data in the registers and perform operations on it, because CPU cache is much faster than RAM, not to mention mechanical storage. For a data server, though, the latency of RAM and large-scale storage is not an issue. Furthermore, unless you have gigabytes of CPU cache, it won't make any difference.

Also, what branch prediction? Is the data server gonna predict what data is needed? This is BS. Branch prediction is important for computation, not for serving data, and for a typical data server application a cache miss doesn't really matter, because in such an application you will get predominantly misses: no data server uses a data set small enough to fit and stay in the CPU cache.

Your comment makes zero sense. My guess is you "heard" Intel makes good prefetchers and you are trying to pretend to be smart... well, DON'T!

Samus - Tuesday, December 10, 2013 - link

Very constructive insult driver, nicely done.

I'd recommend you research how and why enterprise class processors have historically had large caches, and why they ARE important, before you vouch for a mobile platform being retrofitted for use in the datacenter. Any programmer or hardware engineer would disagree with your ridiculous trolling.

ddriver - Wednesday, December 11, 2013 - link

As I said, unless your data set is small enough to fit and stay in the CPU cache, you won't see significant improvements, and for a data server this scenario is completely out of the question.

Unlike you, I am a programmer, and a pretty low-level one at that (low level as in close to the hardware, that is, not in skill). Also, I know a fanboy troll when I see one: you saw that the max L2 cache is 2MB and made the brilliant deduction that an entire CPU architecture is noncompetitive with Intel, which is what is really ridiculous.

When hardware engineers design CPUs, they run very accurate performance simulations to determine the optimal cache capacity, and if that chip is capped at 2MB of L2 cache, that means increasing it any further is no longer efficient in terms of the "die area/performance" ratio.

happycamperjack - Wednesday, December 11, 2013 - link

You guys talk like there can only be ONE CPU to rule them all! That's not how big guys like Facebook or Google work. They need different varieties of CPUs to handle different jobs: low power ARMs for simple tasks like data retrieval or serving static data while conserving power, and enterprise CPUs for data analysis and predictions. There are simply too many different tasks to use just one type of CPU if they want to run the company efficiently. A few watts of power difference could mean millions in savings.

eriohl - Friday, December 13, 2013 - link

I agree that if you are just serving data on key (data servers) then cache size doesn't matter too much (although I can imagine that being able to keep indexing structures and such in processor cache might be useful depending on the application).

But regardless of that, in my experience servers tend to do quite a bit of computing as well.

So I'm going to have to disagree with you that processor cache in general doesn't matter for enterprise servers (and agree with happycamperjack I suppose).

virtual void - Wednesday, December 11, 2013 - link

That was some utterly *bull*. Disable the L3 cache on a Xeon class CPU and run whatever you call a "server workload": your performance will be absolutely shit compared to when the L3 is enabled, even more so if you run on Sandy Bridge or later, where I/O devices DMA directly into (and out of) the L3 cache.

Samus - Wednesday, December 11, 2013 - link

I know driver may be a software engineer of some sort but he obviously has no clue about memory bandwidth and how large caches work around it.

Pobu - Friday, December 13, 2013 - link

Enterprise class = data servers? Please look carefully at what Samus said.

Moreover, data server = data storage server or data analysis server?

Cache is absolutely a critical part of a data analysis server.