Mobile Ivy Bridge and ASUS N56VM Preview

by Jarred Walton on April 23, 2012 12:02 PM EST

Battery Life: Generally Improved, Depending on the Laptop

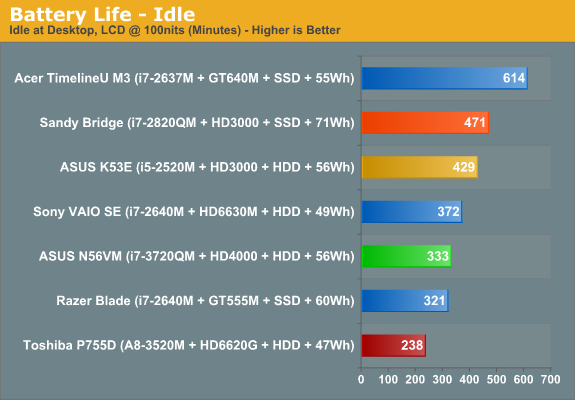

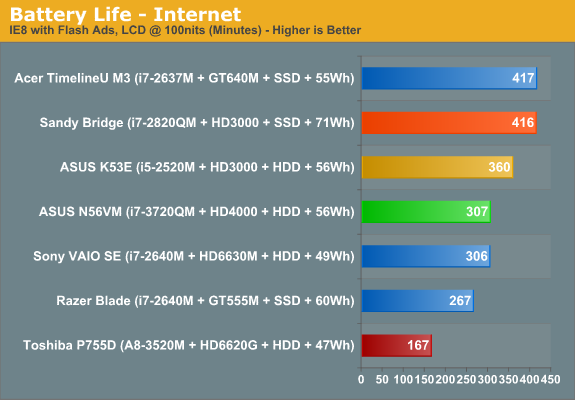

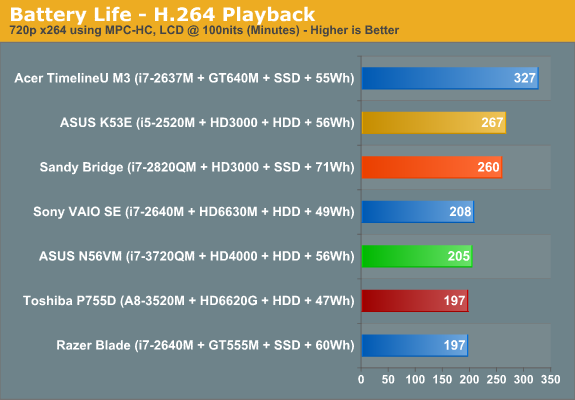

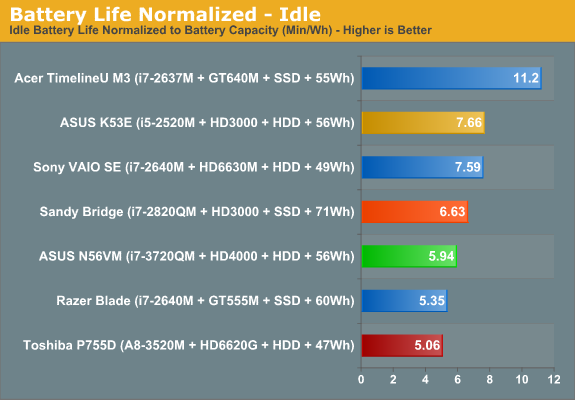

Another important metric for Ivy Bridge is battery life. We used our standard battery life test settings: the LCD at 100 nits (25% brightness in Windows’ power settings on the N56VM) and a tweaked Power Saver profile. We then timed how long the N56VM could last off the mains in idle, Internet, and H.264 playback scenarios, along with some “gaming” on the HD 4000. We’ll start with the charts.

One thing that we need to point out is that the original Sandy Bridge i7-2820QM laptop from Intel was an awesome example of how to deliver great battery life. We never did reach that same level with any of the retail quad-core laptops that we tested over the past year, but then most of the other quad-core laptops also included some form of discrete graphics. Compared with that particular notebook (which never shipped as a retail product), our Ivy Bridge notebook has worse battery life. Once we start comparing with retail laptops however—particularly those with switchable graphics—Ivy Bridge ends up looking like it will deliver similar to slightly better battery life relative to Sandy Bridge.

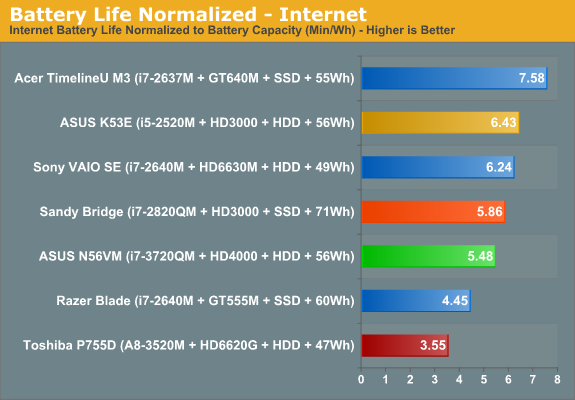

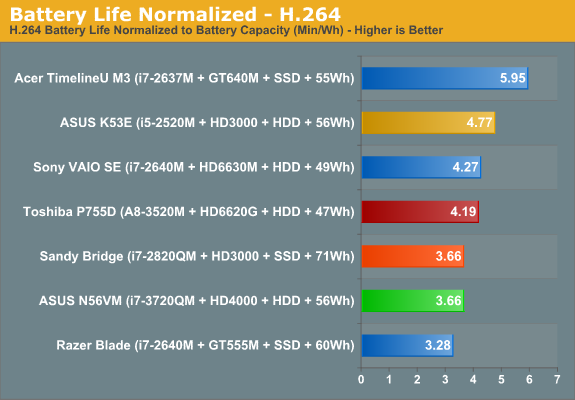

With a 56Wh battery, the N56VM manages 5.5 hours of idle battery life, five hours of Internet battery life, and just under 3.5 hours of H.264 battery life. Normalize that to battery capacity and the N56VM beats most of the quad-core Optimus enabled laptops from the past year in our tests, but it frequently loses to the dual-core Sandy Bridge laptops. If we take the best scores for each normalized battery life test (Idle, Internet, and H.264), Dell’s XPS 15 leads the N56VM in the H.264 and Idle tests, the Alienware M14x has a better Idle result, and the CyberPower X6-9100 comes out slightly ahead in H.264 as well, but the N56VM is 10% ahead of the closest Sandy Bridge quad-core in the Internet results. It’s also worth noting that as a whole, the N56VM places near the top of the normalized results for quad-core CPUs. Of course there are plenty of other laptops that deliver better battery life—ultrabooks and netbooks in particular, along with many of the dual-core Sandy Bridge offerings—but they’re not anywhere near the same performance class as standard voltage Sandy/Ivy Bridge.
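
The normalization used above is simply runtime divided by battery capacity. As a rough sketch, the snippet below converts the N56VM runtimes quoted in the text into minutes per watt-hour. The function and variable names are illustrative, and the runtimes are the rounded figures from the paragraph (the H.264 result is "just under" 3.5 hours, so its value is a slight overestimate), not the exact chart data.

```python
def minutes_per_wh(runtime_hours: float, capacity_wh: float) -> float:
    """Normalize battery life to capacity: minutes of runtime per watt-hour."""
    return runtime_hours * 60.0 / capacity_wh


# Nominal battery capacity quoted in the text.
N56VM_CAPACITY_WH = 56.0

# Rounded runtimes from the text, in hours (assumption: not the exact chart values).
runtimes_hours = {"Idle": 5.5, "Internet": 5.0, "H.264": 3.5}

normalized = {
    test: minutes_per_wh(hours, N56VM_CAPACITY_WH)
    for test, hours in runtimes_hours.items()
}

for test, value in normalized.items():
    print(f"{test}: {value:.2f} min/Wh")
# Idle: 5.89 min/Wh
# Internet: 5.36 min/Wh
# H.264: 3.75 min/Wh
```

Dividing by capacity is what lets a laptop with a 56Wh battery be compared against one with a much larger or smaller pack.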

There’s more to battery life than the above charts might suggest, unfortunately. While we’d love to make a definitive statement with regards to Ivy Bridge battery life, the reality is that we’re looking at our first Ivy Bridge laptop and we really have no idea how it will compare with future Ivy Bridge implementations. What’s more, we’re dealing with pre-release hardware, so ASUS likely hasn’t had quite as much opportunity to optimize for battery life as what we’ll see on the retail units. Optimizing for battery life is a complex task, and we’ve seen some manufacturers succeed while others fall well short. For example, the difference between the best and worst normalized battery life for quad-core Sandy Bridge laptops (not counting the non-retail notebook) is around 50% across all three of our tests—so the worst laptop might get 2.6 min/Wh in our H.264 playback test while the best laptop scored 3.9 min/Wh. That’s a pretty big difference, and until we test a few more Ivy Bridge laptops we won’t really know what to expect.

Gaming on Battery

Some of you have requested battery life while gaming, and we also did a test where we looped 3DMark06 (just the four gaming tests) at 1366x768 until the battery gave out. Using the “Balanced” power profile with the HD 4000 also set to “Balanced” performance, the N56VM managed 79 minutes before shutting down. That might seem like a poor showing, until you realize that the ASUS K53E (dual-core Sandy Bridge i5-2520M) only lasted 73 minutes in the same test, all while delivering 35% lower graphics performance.

Another interesting item to report is that previously we suggested that Llano was the champion of our “gaming off the mains” test: the A8-3500M lasted a whopping 161 minutes looping 3DMark06 when we first tested it, but unfortunately that was with the GPU set to maximize battery life—which means less than half the normal GPU performance. With the A8-3500M set to maximum performance (which is how we’re testing the HD 4000), battery life in our 3DMark06 loop drops to just 98 minutes with a 58Wh battery. That’s still better than the HD 4000 battery life while delivering roughly the same level of graphics performance, though it’s also with a 14” LCD so we’re not comparing otherwise identical platforms. Finally, running the same 3DMark06 loop on the N56VM with the GT 630M active (and set to “Prefer Maximum Performance”) results in 67 minutes of battery life.

If we do some quick math, 3DMark06 uses around 41.7W with the HD 4000 active compared to 49.1W with the GT 630M. Llano meanwhile uses just 35.5W under the same load. For Ivy Bridge, the 7.4W difference under load is significant, but at the same time NVIDIA (and AMD) GPUs appear more efficient for the performance they provide. It’s difficult to say exactly how much of the power draw is going to the GPU versus the CPU and the rest of the system, but the GT 630M can consume up to 35W and is likely using somewhere around 20-25W in this test; that means for less than half the performance, the HD 4000 looks to be consuming around 13-18W. Even if those numbers are off, one thing is clear: Llano is still the chip to beat for gaming on battery power. It’s just unfortunate that the CPU side of Llano is so far behind, but hopefully Trinity will change things in the near future.
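
For readers who want to reproduce the quick math, average power draw is just battery capacity divided by runtime. The sketch below uses illustrative function names. One caveat, which is an inference rather than something stated in the text: the 41.7W and 49.1W figures work out to roughly 55Wh of usable capacity on the N56VM rather than the nominal 56Wh, so plugging in 56Wh gives slightly higher numbers.

```python
def average_power_w(capacity_wh: float, runtime_minutes: float) -> float:
    """Average system power draw in watts over a full battery drain."""
    return capacity_wh / (runtime_minutes / 60.0)


def implied_capacity_wh(power_w: float, runtime_minutes: float) -> float:
    """Battery capacity implied by a quoted power draw and runtime."""
    return power_w * runtime_minutes / 60.0


# Llano A8-3500M: 58Wh battery, 98 minutes in the 3DMark06 loop.
llano_w = average_power_w(58.0, 98.0)

# N56VM with the nominal 56Wh capacity (assumption: the text's figures
# appear to use a slightly lower capacity, see below).
hd4000_w = average_power_w(56.0, 79.0)
gt630m_w = average_power_w(56.0, 67.0)

print(f"Llano:   {llano_w:.1f} W")   # Llano:   35.5 W
print(f"HD 4000: {hd4000_w:.1f} W")  # HD 4000: 42.5 W
print(f"GT 630M: {gt630m_w:.1f} W")  # GT 630M: 50.1 W

# Working backwards from the quoted 41.7W and 49.1W gives about 54.9Wh and 54.8Wh.
print(f"{implied_capacity_wh(41.7, 79.0):.1f} Wh")  # 54.9 Wh
print(f"{implied_capacity_wh(49.1, 67.0):.1f} Wh")  # 54.8 Wh
```

Either way, the gap between the HD 4000 and GT 630M runs stays in the 7-8W range, which is the number the comparison above rests on.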

49 Comments

krumme - Monday, April 23, 2012 - link

There is a reason Intel is bringing 14nm to the Atoms in 2014.

The product here doesn't make sense. It's expensive and not better than the one before it, except better gaming - that is, if the drivers work.

I don't know if the SB notebooks I have in the house are the same as the ones Jarred has. Mine didn't bring a revolution, but solid battery life, like the Penryn notebook and Core Duo I also have. In my world they are more or less the same if you add an SSD for normal office work.

Loads of utterly uninteresting benchmarks don't mask the facts. This product excels where it's not needed, and fails where it should excel most: battery life.

The trigate is mostly a failure now. There is no need to call it otherwise, and the "preview" looks 10% like a press release in my world. At least trigate is not living up to expectations. Sometimes that happens with technology development; it's a wonder it's so smooth for Intel normally, and a testament to their huge expertise. When the technology matures and Intel makes better use of the technology in the arch, we will see huge improvements. Spare the praise until then, this is just wrong and bad.

JarredWalton - Monday, April 23, 2012 - link

Seriously!? You're going to mention Atom as the first comment on Ivy Bridge? Atom is such a dog as far as performance is concerned that I have to wonder what planet you're living on. 14nm Atom is going to still be a slow product, only it might double the performance of Cedar Trail. Heck, it could triple the performance of Cedar Trail, which would make it about as fast as Core 2 CULV from three years ago. Hmmm.....

If Sandy Bridge wasn't a revolution, offering twice the performance as Clarksfield at the high end and triple the battery life potential (though much of that is because Clarksfield was paired with power hungry GPUs), I'm not sure what would be a revolution. Dual-core SNB wasn't as big of a jump, but it was still a solid 15-25% faster than Arrandale and offered 5% to 50% better battery life--the 50% figure coming in H.264 playback; 10-15% better battery life was typical of office workloads.

Your statement with regards to battery life basically shows you either don't understand laptops, or you're being extremely narrow minded with Ivy Bridge. I was hoping for more, but we're looking at one set of hardware (i7-3720QM, 8GB RAM, 750GB 7200RPM HDD, switchable GT 630M GPU, and a 15.6" LCD that can hit 430 nits), and we're looking at it several weeks before it will go on sale. That battery life isn't a huge leap forward isn't a real surprise.

SNB laptops draw around 10W at idle, and 6-7W of that is going to everything besides the CPU. That means SNB CPUs draw around 2-3W at idle. This particular IVB laptop draws around 10W at idle, and all of the other components (especially the LCD) will easily draw at least 6-7W, which means once again the CPU is using 2-3W at idle. IVB could draw 0W at idle and the best we could hope for would be a 50% improvement in battery life.

As for the final comment, 22nm and tri-gate transistors are hardly a failure. They're not the revolution many hoped for, at least not yet. Need I point out that Intel's first 32nm parts (Arrandale) also failed to eclipse their outgoing and mature 45nm parts? I'm not sure what the launch time frame is for ULV IVB, but I suspect by the time we see those chips 22nm will be performing a lot better than it is in the first quad-core chips.

From my perspective, to shrink a process node, improve performance of your CPU by 5-25%, and keep power use static is still a definite success and worthy of praise. When we get at least three or four other retail IVB laptops in for review, then we can actually start to say with conviction how IVB compares to SNB. I think it's better and a solid step forward for Intel, especially for lower cost laptops and ultrabooks.

If all you're doing is office work, which is what it sounds like, you're right: Core 2, Arrandale, Sandy Bridge, etc. aren't a major improvement. That's because if all you're doing is office work, 95% of the time the computer is waiting for user input. It's the times where you really tax your PC that you notice the difference between architectures, and the change from Penryn to Arrandale to Sandy Bridge to Ivy Bridge represents about a doubling in performance just for mundane tasks like office work...and a lot of people would still be perfectly content to run Word, Excel, etc. on a Core 2 Duo.

usama_ah - Monday, April 23, 2012 - link

Trigate is not a failure, this move to Trigate wasn't expected to bring any crazy amounts of performance benefits. Trigate was necessary because of the limitations (leaks) from ever smaller transistors. Trigate has nothing to do with the architecture of the processor per se, it's more about how each individual transistor is created on such a small scale. Architectural improvements are key to significant improvements.

Sandy Bridge was great because it was a brand new architecture. If you have been even half-reading what they post on Anandtech, Intel's tick-tock strategy dictates that this move to Ivy Bridge would be small improvements BY DESIGN.

You will see improvements in battery life with the NEW architecture, AFTER Ivy Bridge (when Intel stays at 22nm), the so-called "tock," called "Haswell." And yes, tri-gate will still be in use at that time.

krumme - Monday, April 23, 2012 - link

As I understand trigate, trigate provides the opportunity for even better granularity of power for the individual transistor, by using different numbers of gates. If you design your arch to the process (using that opportunity - as IB is not, but the first 22nm Atom apparently is), there should be "huge" savings.

I assume you BY DESIGN mean "by process" btw.

In my world process improvement is key to most industrial production, with tools often being the weak link. The process decides what is possible in your design. That's why Intel has spent billions "just" mounting the right equipment.

JarredWalton - Monday, April 23, 2012 - link

No, he means Ivy Bridge is not the huge leap forward by design -- Intel intentionally didn't make IVB a more complex, faster CPU. That will be Haswell, the 22nm tock to the Ivy Bridge tick. Making large architectural changes requires a lot of time and effort, and making the switch between process nodes also requires time and effort. If you try to do both at the same time, you often end up with large delays, and so Intel has settled on a "tick tock" cadence where they only do one at a time.

But this is all old news and you should be fully aware of what Intel is doing, as you've been around the comments for years. And why is it you keep bringing up Atom? It's a completely different design philosophy from Ivy Bridge, Sandy Bridge, Merom/Conroe, etc. Atom is more a competitor to ARM SoCs, which have roughly an order of magnitude less compute performance than Ivy Bridge.

krumme - Monday, April 23, 2012 - link

- Intel speeds up Atom development, - not using depreciated equipment for the future.
- Intel invest heavily to get into new business areas and have done for years
- Haswell will probably be slimmer on the cpu part

The reason they do so is that the need for CPU power outside of the server market is stagnating. And new third world markets are emerging. And all is turning mobile - it's all over your front page now, I can see.

The new Atom will probably be adequate for most (like, say, Core 2 CULV). Then they will have the perfect product. It's about mobility and price and price. Haswell will probably be the product for the rest of the mainstream market, leaving even less for the dedicated GPU.

IB is an old style desktop CPU, maturing a not quite ready 22nm trigate process. Designed to fight a BD that did not arrive. That's why it does not impress. And you can tell Intel knows because the mobile lineup is so slim.

The market has changed. The share price has rocketed for AMD even though their high-end CPU failed, because the Atom-sized Bobcat and old technology Llano could enter the new market. I could not have imagined the success of Llano. I didn't understand the purpose of it, because Trinity was coming so close. But the numbers talk for themselves. People buy a user experience where it matters at lowest cost, not PCMark, encoding times, zip, unzip.

You have to use new benchmarks. And they have to be reinvented again. They have to make sense. Obviously the CPU has to play a lesser role and the rest more. You have a very strong team, if not the strongest out there. Benchmark methodology should be at the top of your list and use a lot of your development time.

JarredWalton - Monday, April 23, 2012 - link

The only benchmarks that would make sense under your new paradigm are graphics and video benchmarks, well, and battery life as well, because those are the only areas where a better GPU matters. Unless you have some other suggestions? Saying "CPU speed is reaching the point where it really doesn't matter much for a large number of people" is certainly true, and I've said as much on many occasions. Still, there's a huge gulf between Atom and Core 2 still, and there are many tasks where CULV would prove insufficient.

By the time the next Atom comes out, maybe it will be fixed in the important areas so that stuff like YouTube/Netflix/Hulu all work without issue. Hopefully it also supports at least 4GB RAM, because right now the 2GB limit along with bloated Windows 7 makes Atom a horrible choice IMO. Plus, margins are so low on Atom that Intel doesn't really want to go there; they'd rather figure out ways to get people to continue paying at least $150 per CPU, and I can't fault their logic. If CULV became "fast enough" for everyone Intel's whole business model goes down the drain.

Funny thing is that even though we're discussing Atom and by extension ARM SoCs, those chips are going through the exact same rapid increases in performance. And they need it. Tablets are fine for a lot of tasks, but opening up many web sites on a tablet is still a ton slower than opening the same sites on a Windows laptop. Krait and Tegra 3 are still about 1/3 the amount of performance I want from a CPU.

As for your talk about AMD share prices, I'd argue that AMD share prices have increased because they've rid themselves of the albatross that was their manufacturing division. And of course, GF isn't publicly traded and Abu Dhabi has plenty of money to invest in taking over CPU manufacturing. It's a win-win scenario for those directly involved (AMD, UAE), though I'm not sure it's necessarily a win for everyone.

bhima - Monday, April 23, 2012 - link

I figure Intel wants everyone to want their CULV processors since they seem to charge the most for them to the OEMs, or are the profit margins not that great because they are a more difficult/expensive processor to make?

krumme - Tuesday, April 24, 2012 - link

Yes - video and gaming is what matters for the consumer now, everything is okay as it will - hopefully - be 2014. What matters is SSD, screen quality, and everything else, - just not CPU power. It just needs to have far less space. CPU having so much space is just old habits for us old geeks.

AMD getting rid of the GF burden has been in the plan for years. It's known and cannot influence share price. Basically the, late, move to mobile focus, and the excellent execution of those consumer / not reviewer shaped APUs is a part of the reason.

The reviewers need to move their mindset :) - btw it's my impression Dustin is more in line with what the general consumer wants. Ask him if he thinks the consumer wants a new SSD benchmark with 100 hours of 4k reading and writing.

MrSpadge - Monday, April 23, 2012 - link

No, the finer granularity is just a nice side effect (which could probably be used more aggressively in the future). However, the main benefit of tri-gate is more control over the channel, which enables IB to reach high clock speeds at comparably very low voltages, and at very low leakage.