The NVIDIA GTC Spring 2022 Keynote Live Blog (Starts at 8:00am PT/15:00 UTC)

by Ryan Smith on March 22, 2022 10:00 AM EST

10:58AM EDT - Welcome GPU watchers to another GTC spring keynote

10:59AM EDT - We're here reporting from the comfort of our own homes on the latest in NVIDIA news from the most important of the company's GTC keynotes

10:59AM EDT - GTC Spring, which used to just be *the* GTC, is NVIDIA's traditional venue for big announcements of all sorts, and this year should be no exception

11:00AM EDT - NVIDIA's Ampere server GPU architecture is two years old and arguably in need of an update. Meanwhile the company also dropped a bomb last year with the announcement of the Grace CPU, which would be great to find out more about

11:00AM EDT - And here we go

11:01AM EDT - For the first time in quite a while, NVIDIA is not kicking things off with a variant of their "I am AI" videos

11:02AM EDT - Instead, we're getting what I'm guessing is NV's "digital twin" of their HQ

11:02AM EDT - And here's Jensen

11:03AM EDT - And I spoke too soon. Here's a new "I am AI" video

11:04AM EDT - These videos are arguably almost cheating these days. In previous years NV has revealed that they have AI doing the music composition and, of course, the voiceover

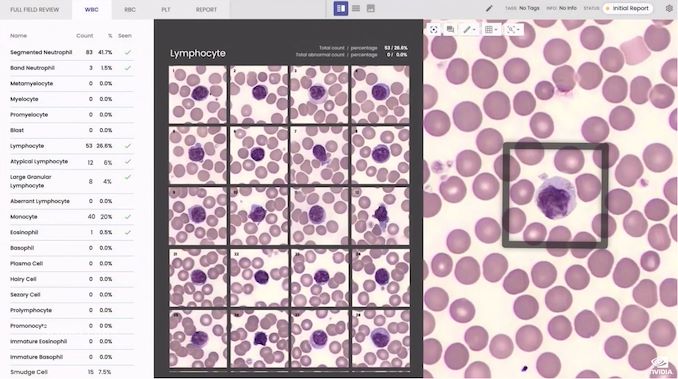

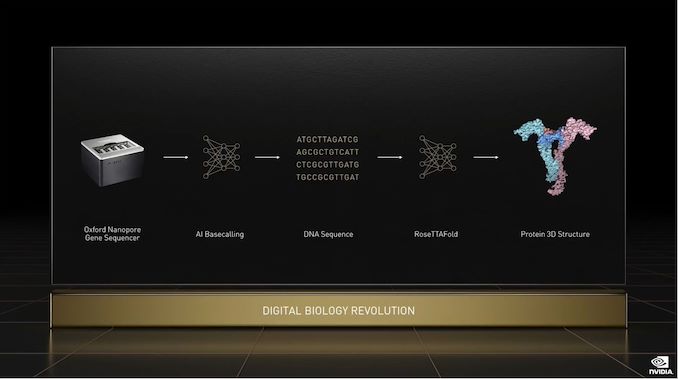

11:06AM EDT - And back to Jensen. Discussing how AI is being used in biology and medical fields

11:07AM EDT - None of this was possible a decade ago

11:07AM EDT - AI has fundamentally changed what software can make. And how you make software

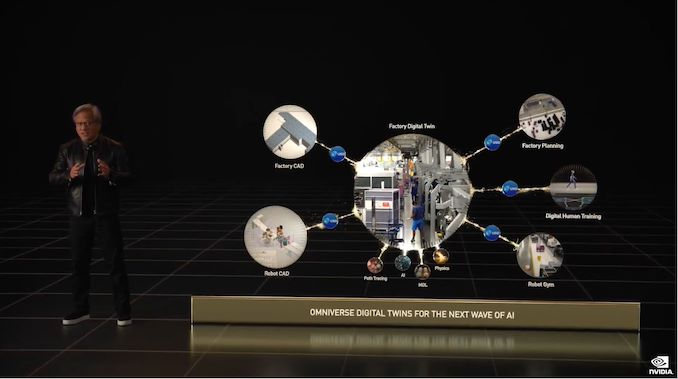

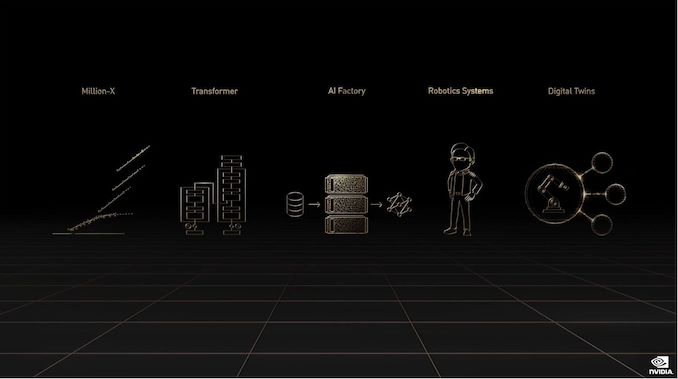

11:07AM EDT - The next wave of AI is robotics

11:08AM EDT - Digital robotics, avatars, and physical robotics

11:08AM EDT - And Omniverse will be essential to making robotics

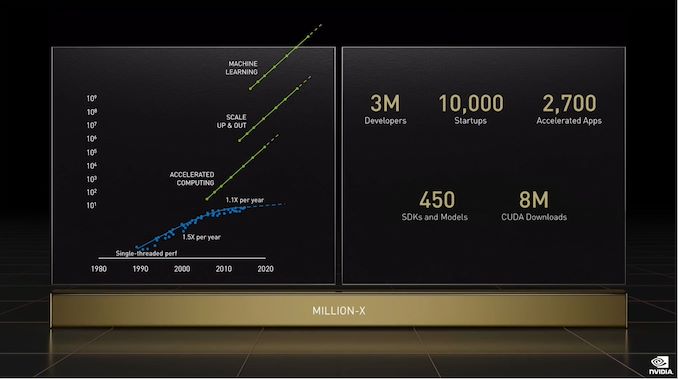

11:08AM EDT - In the past decade, NV's hardware and software have delivered a million-x speedup in AI
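
To put that million-x figure in perspective, here is a rough back-of-the-envelope calculation in Python. It assumes the gain compounded at a constant yearly rate, which is a simplification of mine and not something NVIDIA stated; real progress arrives in uneven steps.

```python
# Claimed overall speedup and the period it covers (from the keynote)
TOTAL_SPEEDUP = 1_000_000
YEARS = 10

# Assumption: a constant yearly factor, i.e. factor ** YEARS == TOTAL_SPEEDUP
yearly_factor = TOTAL_SPEEDUP ** (1 / YEARS)

print(f"Roughly {yearly_factor:.2f}x per year, compounded over {YEARS} years")
```

Under that assumption the claim works out to a bit under 4x per year, every year, for a decade.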

11:09AM EDT - Today NVIDIA accelerates millions of developers. GTC is for all of you

11:10AM EDT - Now running down a list of the major companies who are giving talks at GTC 2022

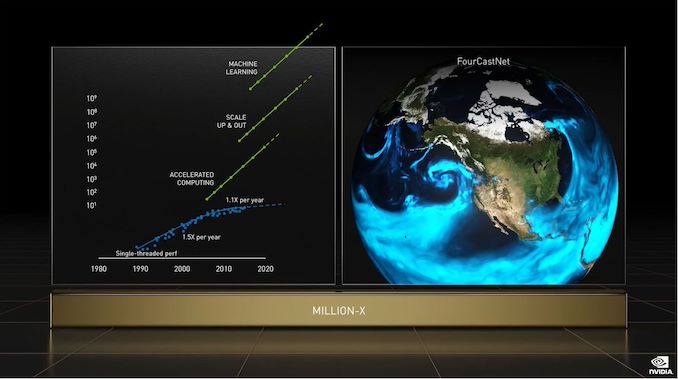

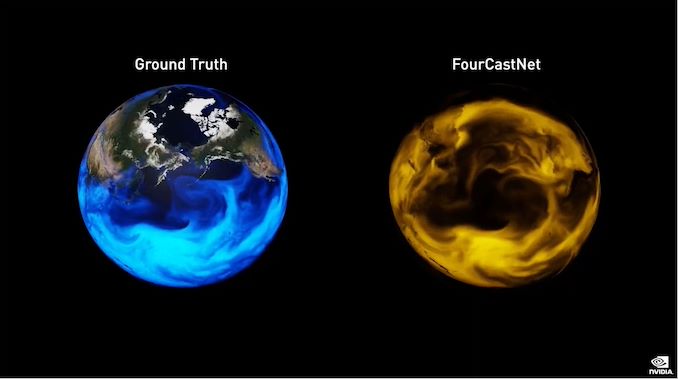

11:10AM EDT - Recapping NVIDIA's "Earth 2" digital twin of the Earth

11:12AM EDT - And pivoting to a deep learning weather model called FourCastNet

11:12AM EDT - Trained on 4TB of data

11:13AM EDT - FourCastNet can predict atmospheric river events well in advance

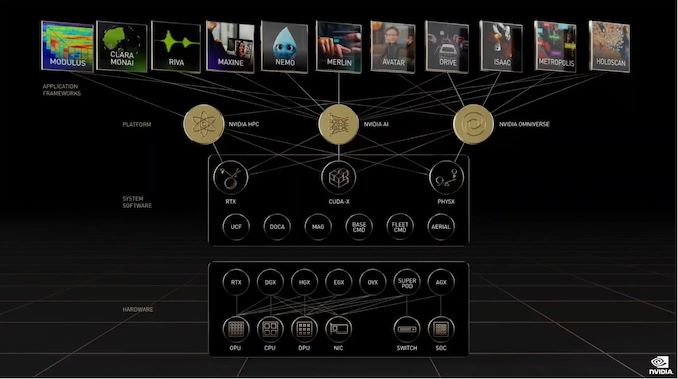

11:13AM EDT - Now talking about how NVIDIA offers multiple layers of products, across hardware, software, libraries, and more

11:14AM EDT - And NVIDIA will have new products to talk about at every layer today

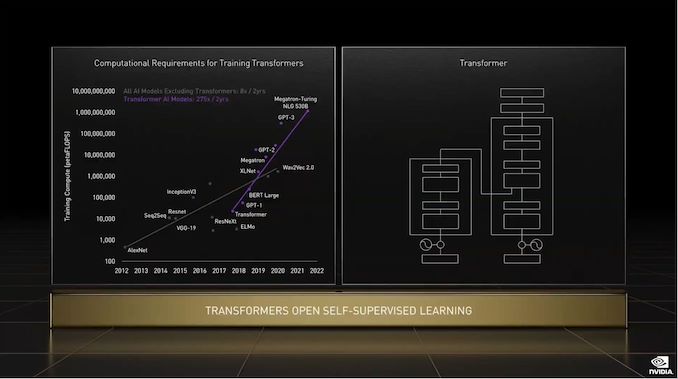

11:14AM EDT - Starting things off with a discussion about transformers (the deep learning model)

11:14AM EDT - Transformers are the model of choice for natural language processing

11:15AM EDT - e.g. AI consuming and generating text

11:15AM EDT - (GPT-3 is scary good at times)

11:15AM EDT - "AI is racing in every direction"

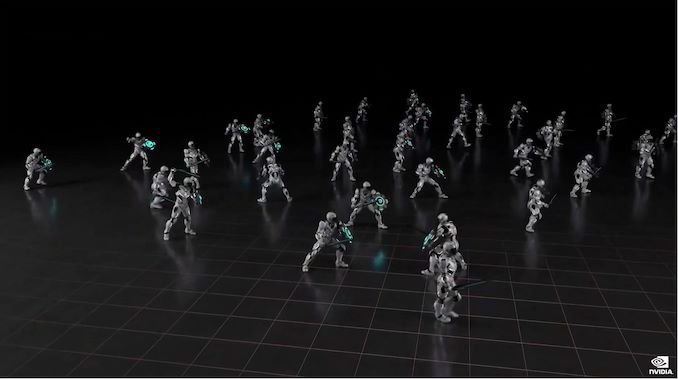

11:16AM EDT - Rolling a short video showing off a character model trained with reinforcement learning

11:16AM EDT - 10 years of simulation in 3 days of real world time

11:17AM EDT - NV's hope is to make animating a character as easy as talking to a human actor

11:18AM EDT - Now talking about the company's various NVIDIA AI libraries

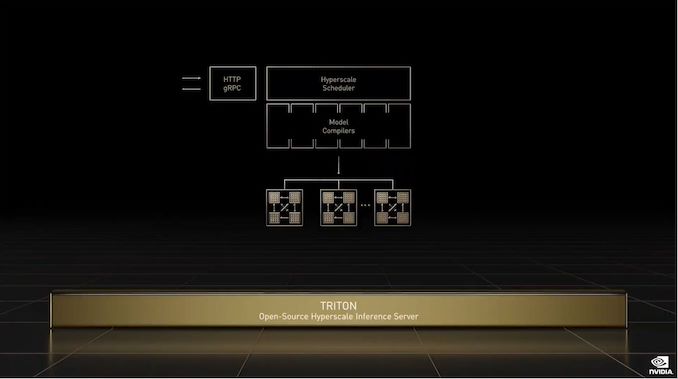

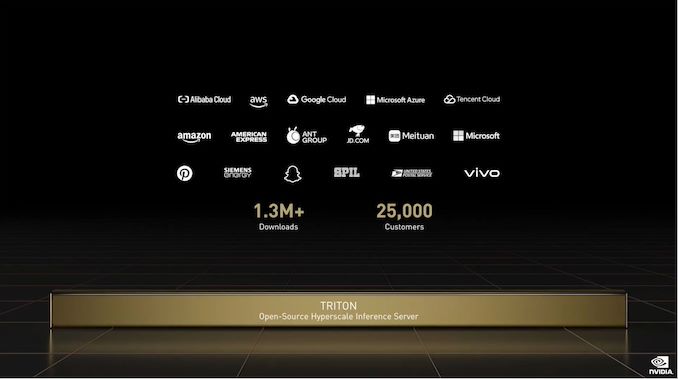

11:18AM EDT - And Triton, NVIDIA's inference server

11:19AM EDT - Triton has been downloaded over a million times

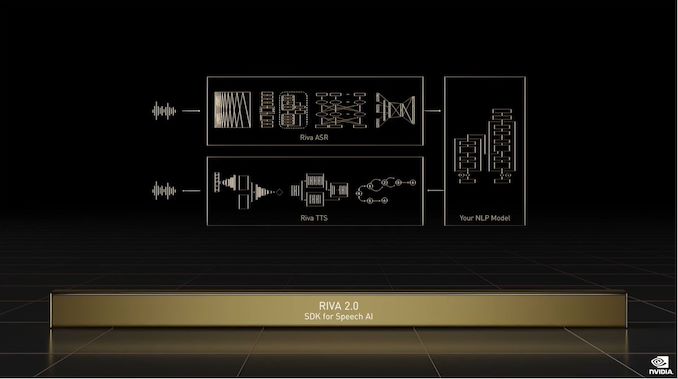

11:20AM EDT - Meanwhile, the Riva SDK for speech AI is now up to version 2.0, and is being released under general availability

11:20AM EDT - "AI will reinvent video conferencing"

11:21AM EDT - Tying that in to Maxine, NV's library for AI video conferencing

11:22AM EDT - Rolling a short demo video of Maxine in action

11:22AM EDT - It sounds like Maxine will be a big item at the next GTC, and today is just a teaser

11:23AM EDT - Now on to recommendation engines

11:23AM EDT - And of course, NVIDIA has a framework for that: Merlin

11:24AM EDT - The Merlin 1.0 release is now ready for general availability

11:24AM EDT - And back to transformers

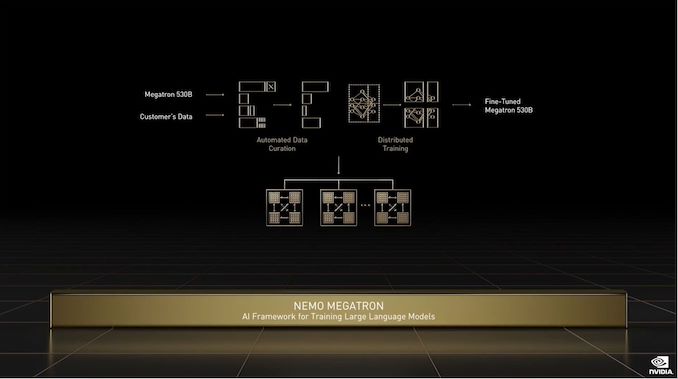

11:24AM EDT - Google is working on Switch, a 1.6 trillion parameter transformer

11:25AM EDT - And for that, NVIDIA has the NeMo Megatron framework

11:26AM EDT - And now back to where things started, AI biology and medicine

11:26AM EDT - "The conditions are prime for the digital biology revolution"

11:28AM EDT - (This black background does not pair especially well with YouTube's overly compressed video streams)

11:28AM EDT - And now on to hardware

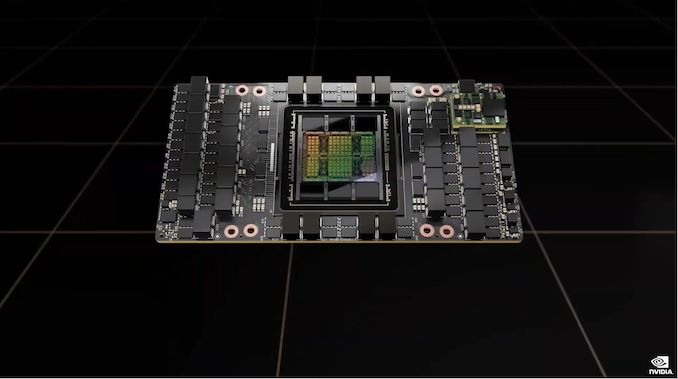

11:28AM EDT - Introducing NVIDIA H100!

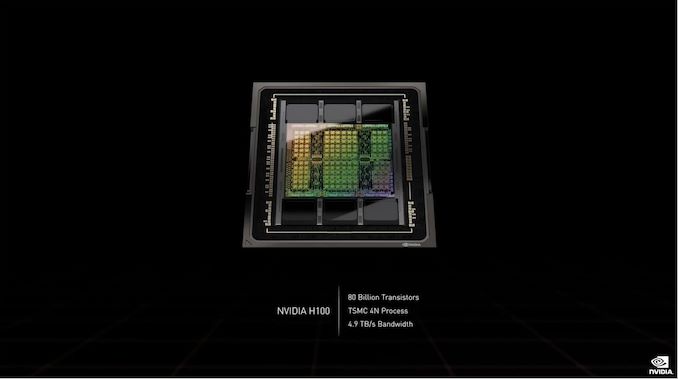

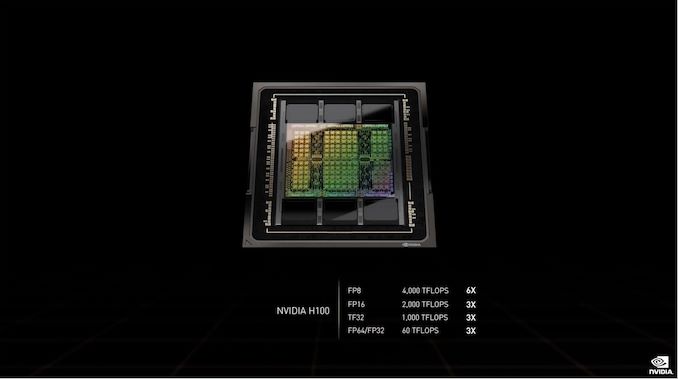

11:28AM EDT - 80B transistor chip built on TSMC 4N

11:28AM EDT - 4.9TB/sec bandwidth

11:28AM EDT - First PCIe 5.0 GPU

11:28AM EDT - First HBM3 GPU

11:28AM EDT - A single H100 sustains 40TBit/sec of I/O bandwidth

11:29AM EDT - 20 H100s can sustain the equivalent of the world's Internet traffic
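
A quick sanity check on what that claim implies, as a small Python sketch. The 40TBit/sec and 20 GPU figures are from the keynote; the total is just their product, not an independent measurement of Internet traffic.

```python
# Figures quoted in the keynote
H100_IO_TBIT_PER_SEC = 40   # sustained I/O bandwidth of a single H100
NUM_GPUS = 20

# Aggregate bandwidth implied by the claim
total_tbit_per_sec = H100_IO_TBIT_PER_SEC * NUM_GPUS
total_tbyte_per_sec = total_tbit_per_sec / 8  # 8 bits per byte

print(f"{total_tbit_per_sec} Tbit/sec = {total_tbyte_per_sec:.0f} TB/sec")
```

That puts NVIDIA's implied figure for worldwide traffic at around 800TBit/sec. As an aside, 40TBit/sec is 5TB/sec, which is presumably the same number as the 4.9TB/sec bandwidth figure quoted a moment earlier.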

11:29AM EDT - Hopper architecture

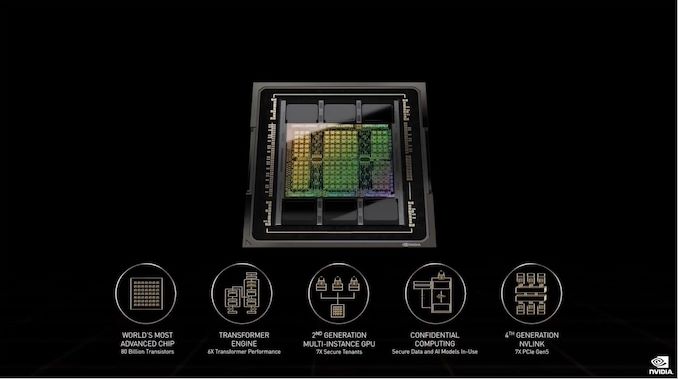

11:29AM EDT - "5 groundbreaking inventions"

11:29AM EDT - H100 has 4 PFLOPS of FP8 performance

11:29AM EDT - 2 PFLOPS of FP16, and 60 TFLOPS of FP64/FP32

11:30AM EDT - Hopper's FP8 performance is 6x Ampere's FP16 performance

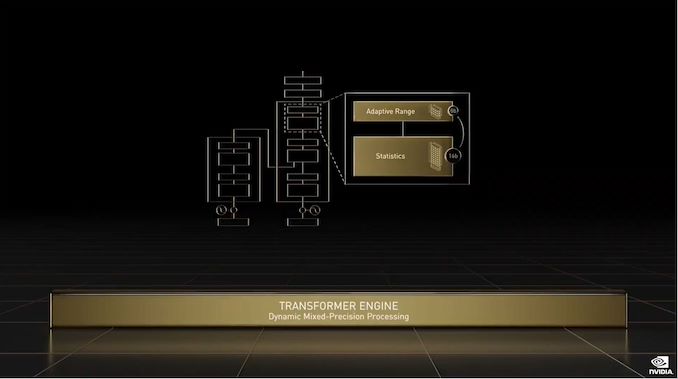

11:30AM EDT - Hopper introduces a transformer engine

11:30AM EDT - Transformer Engine: a new tensor core for transformer training and inference

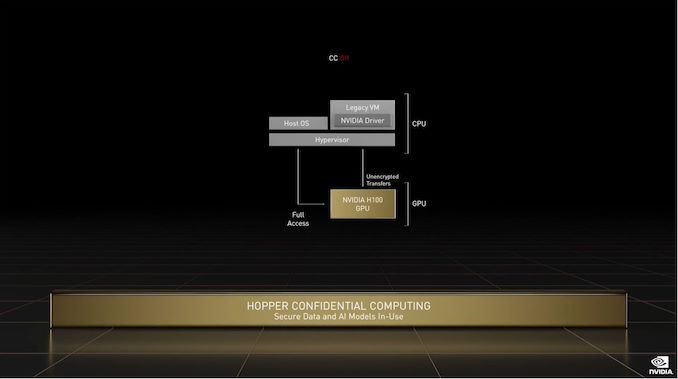

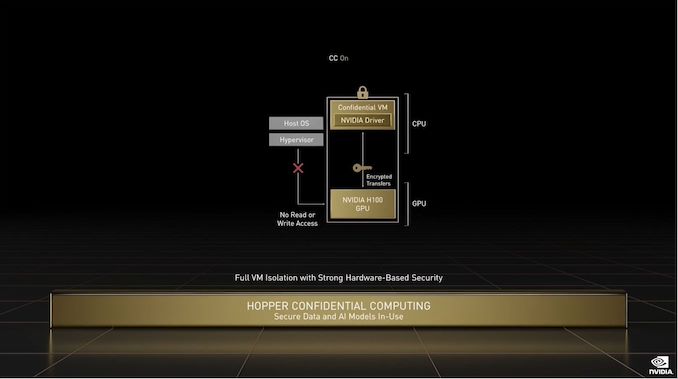

11:31AM EDT - On the security front, Hopper adds full isolation for MIG mode

11:31AM EDT - And each of the 7 instances is the performance of two T4 server GPUs

11:31AM EDT - The isolated MIG instances are fully secured and encrypted. Confidential computing

11:32AM EDT - Data and application are protected during use on the GPU

11:32AM EDT - Protects confidentiality of valuable AI models on shared or remote infrastructure

11:32AM EDT - New set of instructions: DPX

11:32AM EDT - Designed to accelerate dynamic programming algorithms

11:33AM EDT - Used in things like shortest route optimization

11:33AM EDT - Hopper DPX instructions will speed these up upwards of 40x
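
For readers who haven't run into dynamic programming before, here is a minimal Python sketch of one classic example, the Floyd-Warshall all-pairs shortest path algorithm. This is a plain CPU illustration of the kind of algorithm being discussed, not NVIDIA's code and not the DPX instructions themselves; the function name and the toy road network are made up for illustration.

```python
import math

def floyd_warshall(weights):
    """Return the all-pairs shortest-path distance matrix.

    weights[i][j] is the cost of the direct edge i -> j,
    or math.inf if there is no such edge.
    """
    n = len(weights)
    dist = [row[:] for row in weights]  # copy so the input is left untouched
    for k in range(n):          # allow node k as an intermediate stop
        for i in range(n):
            for j in range(n):
                # Dynamic programming step: reuse already-solved subproblems
                dist[i][j] = min(dist[i][j], dist[i][k] + dist[k][j])
    return dist

INF = math.inf
# Hypothetical 4-node road network (directed edge costs)
graph = [
    [0,   5,   INF, 10],
    [INF, 0,   3,   INF],
    [INF, INF, 0,   1],
    [INF, INF, INF, 0],
]

shortest = floyd_warshall(graph)
print(shortest[0][3])  # 9: the route 0 -> 1 -> 2 -> 3 beats the direct edge (10)
```

The inner update is an add followed by a min, repeated n³ times. Simple, repetitive operations like that are a natural target for dedicated instructions.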

11:33AM EDT - CoWoS 2.5D packaging

11:33AM EDT - HBM3 memory

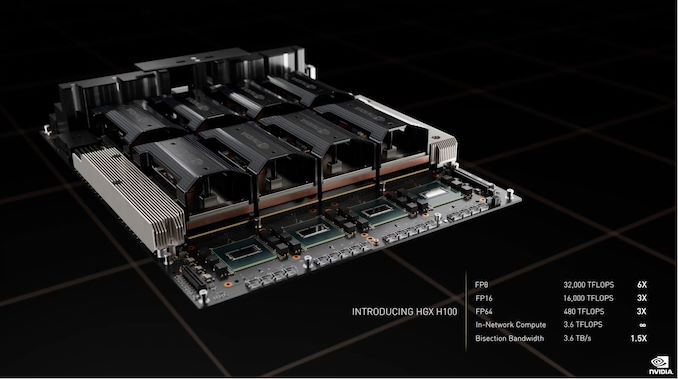

11:34AM EDT - 8 SXMs are paired with 4 NVSwitch chips on an H100 HGX board

11:34AM EDT - Dual "Gen 5" CPUs

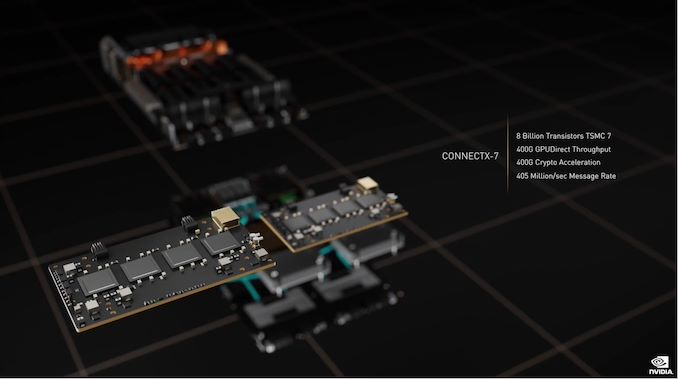

11:34AM EDT - Networking provided by ConnectX-7 NICs

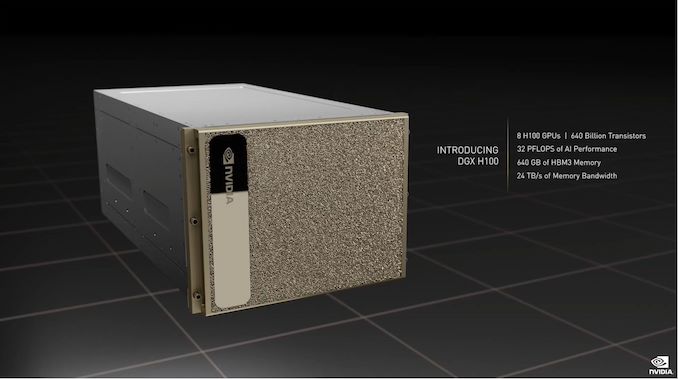

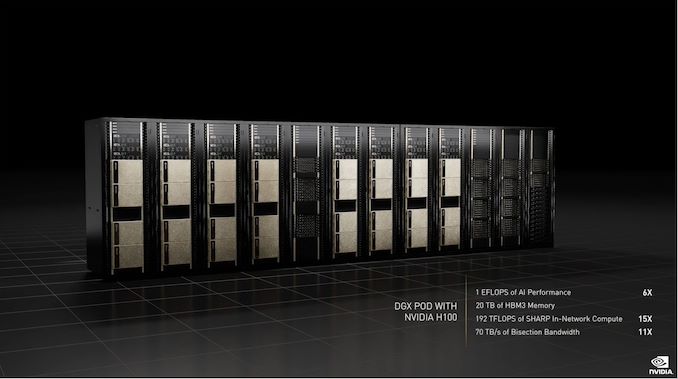

11:35AM EDT - Introducing the DGX H100, NVIDIA's latest AI computing system

11:35AM EDT - 8 H100 GPUs in one server

11:35AM EDT - 640GB of HBM3

11:35AM EDT - "We have a brand-new way to scale up DGX"

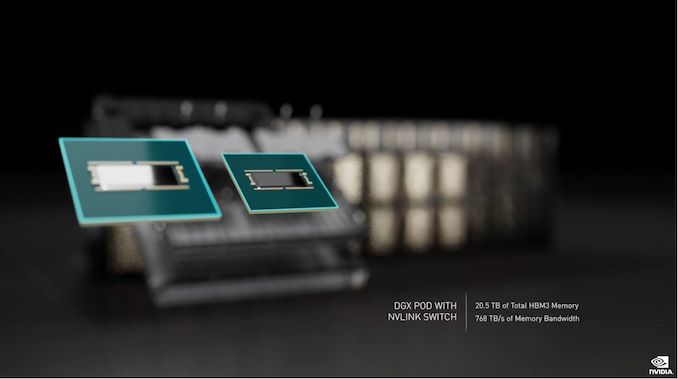

11:35AM EDT - NVIDIA NVLink Switch System

11:36AM EDT - Connect up to 32 nodes (256 GPUs) of H100s via NVLink

11:36AM EDT - This is the first time NVLink has been available on an external basis

11:36AM EDT - Connects to the switch via a quad port optical transceiver

11:36AM EDT - 32 transceivers connect to a single node

11:37AM EDT - 1 EFLOPS of AI performance in a 32 node cluster
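
The exaflop figure lines up with the per-GPU numbers quoted earlier. A quick Python sketch of the arithmetic, assuming (my reading, not stated outright on stage) that "AI performance" here means the FP8 figure:

```python
# Figures quoted in the keynote
NODES = 32
GPUS_PER_NODE = 8        # one DGX H100
FP8_PFLOPS_PER_GPU = 4   # H100 FP8 throughput

gpus = NODES * GPUS_PER_NODE
# Assumption: the "AI performance" headline is the FP8 figure
cluster_eflops = gpus * FP8_PFLOPS_PER_GPU / 1000  # 1 EFLOPS = 1000 PFLOPS

print(f"{gpus} GPUs, about {cluster_eflops:.3f} EFLOPS of FP8")
```

So 256 GPUs at 4 PFLOPS each comes to just over 1 EFLOPS.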

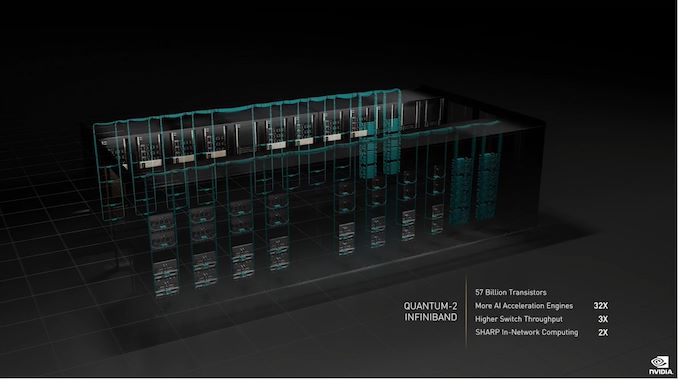

11:37AM EDT - And DGX SuperPods scale this up further with the addition of Quantum-2 InfiniBand

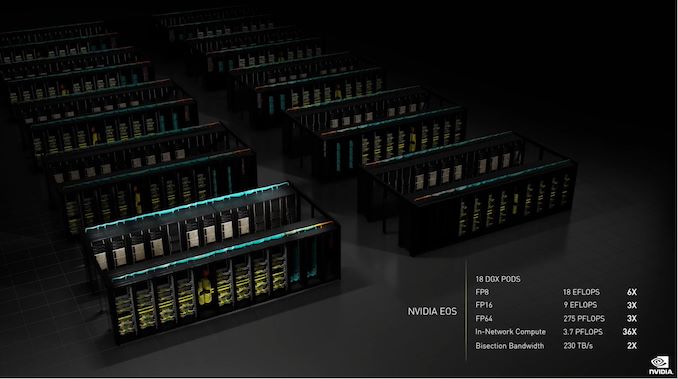

11:37AM EDT - NVIDIA is building another supercomputer: Eos

11:38AM EDT - 18 DGX Pods. 9 EFLOPS FP16
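
The Eos figure can be roughly reconstructed from the numbers given so far. This Python sketch assumes each pod is one 32-node NVLink cluster of 8-GPU DGX H100 systems; that pod size is my assumption based on the NVLink Switch System figures above, not something spelled out on this slide.

```python
# Figures quoted in the keynote
PODS = 18
GPUS_PER_NODE = 8          # one DGX H100
FP16_PFLOPS_PER_GPU = 2    # H100 FP16 throughput

# Assumption: each pod is one 32-node NVLink cluster
NODES_PER_POD = 32

eos_gpus = PODS * NODES_PER_POD * GPUS_PER_NODE
eos_fp16_eflops = eos_gpus * FP16_PFLOPS_PER_GPU / 1000  # 1 EFLOPS = 1000 PFLOPS

print(f"{eos_gpus} GPUs, about {eos_fp16_eflops:.1f} EFLOPS of FP16")
```

Under those assumptions Eos works out to 4,608 GPUs and a little over 9 EFLOPS of FP16, matching the quoted number.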

11:38AM EDT - Expect Eos to be the fastest AI computer in the world, and the blueprint for advanced AI infrastructure for NVIDIA's hardware partners

11:38AM EDT - Standing up Eos now and online in a few months

11:39AM EDT - Now talking about performance

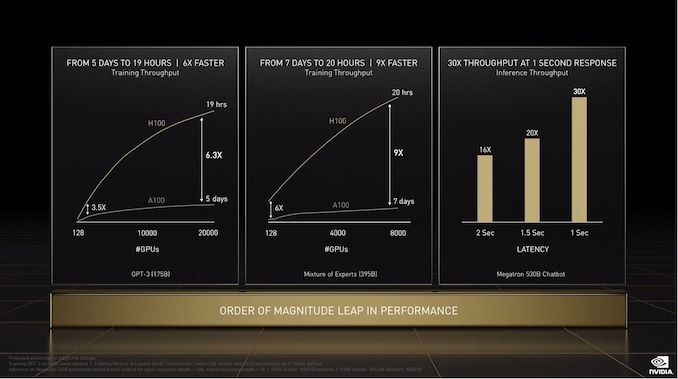

11:39AM EDT - 6.3x transformer training performance

11:39AM EDT - And 9x on a different transformer

11:39AM EDT - H100: the new engine of the world's AI infrastructure

11:39AM EDT - "Hopper will be a game changer for mainstream systems as well"

11:40AM EDT - Moving data to keep GPUs fed is a challenge

11:40AM EDT - Attach the network directly to the GPU

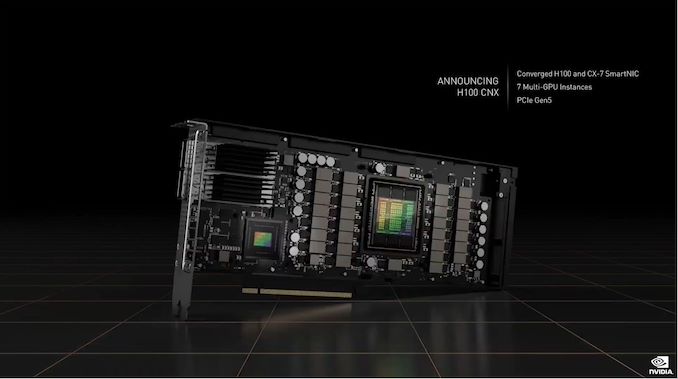

11:40AM EDT - Announcing the H100 CNX

11:40AM EDT - H100 and a CX-7 NIC on a single card

11:41AM EDT - Skip the bandwidth bottlenecks by having the GPU go directly to the network

11:41AM EDT - Now on to Grace

11:41AM EDT - Grace is "progressing fantastically" and is on track to ship next year

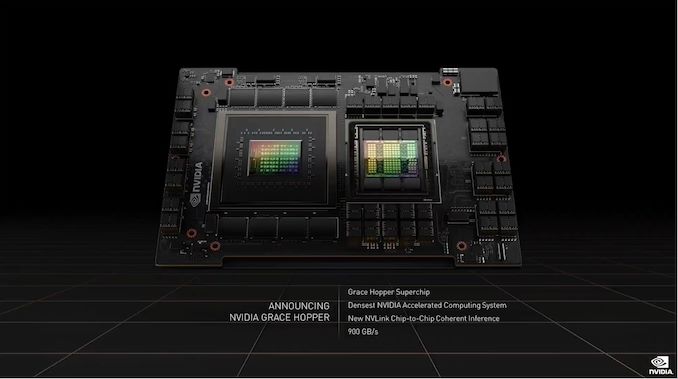

11:41AM EDT - Announcing Grace Hopper, a single MCM with a Grace CPU and a Hopper GPU

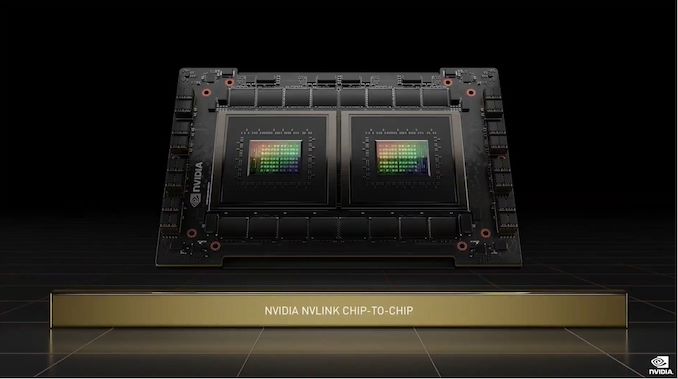

11:42AM EDT - The chips are using NVLink to communicate

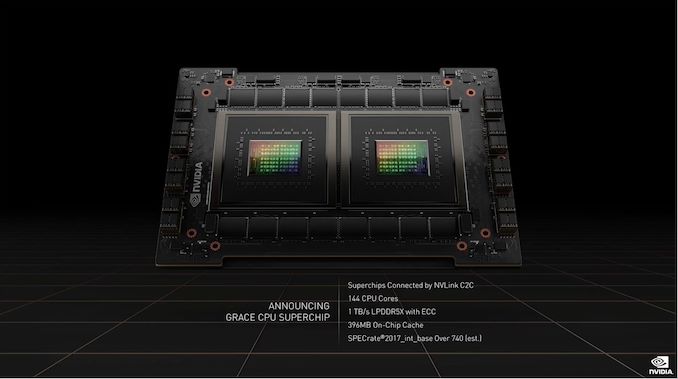

11:42AM EDT - Announcing Grace CPU Superchip. Two Graces in MCM

11:42AM EDT - 144 CPU cores, 1TB/sec of LPDDR5X

11:42AM EDT - Connected via NVLink Chip-2-Chip (C2C)

11:43AM EDT - Entire module, including memory, is only 500W

11:43AM EDT - And all of NVIDIA's software platforms will work on Grace

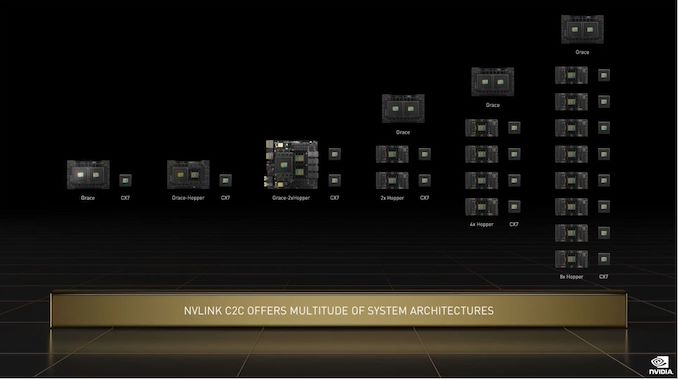

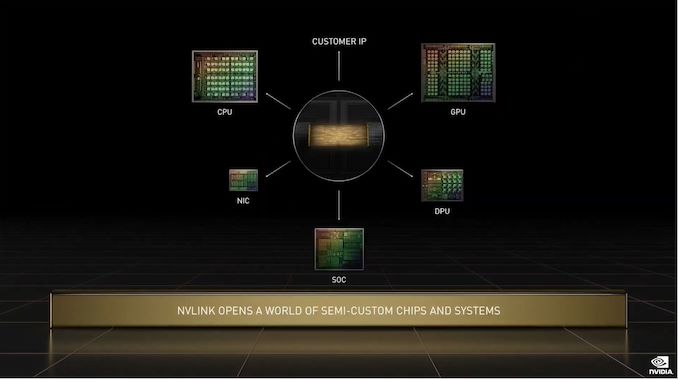

11:43AM EDT - Now talking about the NVLink C2C link used to connect these chips

11:44AM EDT - NVLink C2C allows for many different Grace/Hopper configurations

11:45AM EDT - And NVIDIA is opening up NVLink so that customers can implement it too and connect to NVIDIA's chips on a single package

11:45AM EDT - So NV is going chiplet and semi-custom?

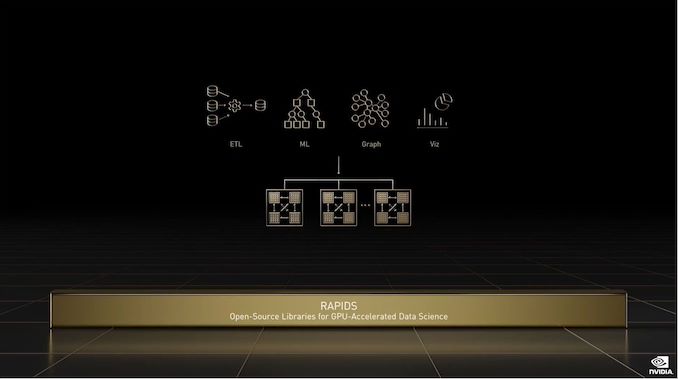

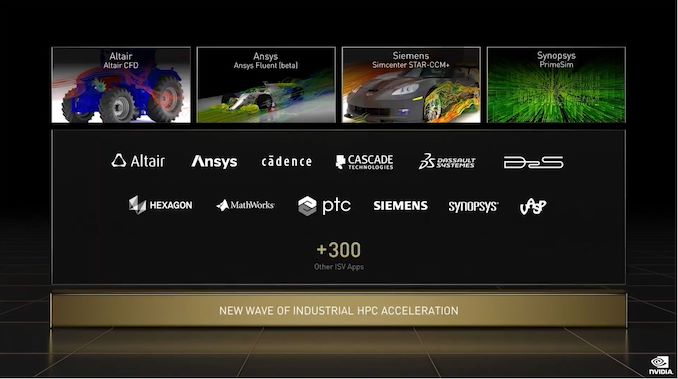

11:45AM EDT - Now on to NVIDIA SDKs

11:47AM EDT - Over 175 companies are testing NVIDIA cuOpt

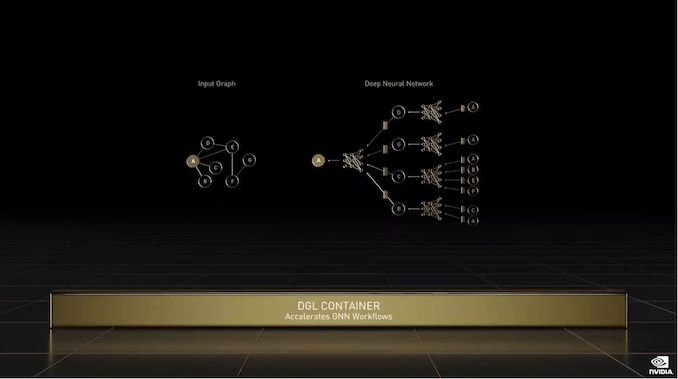

11:47AM EDT - NVIDIA DGL Container: training large graph NNs across multiple nodes

11:48AM EDT - NVIDIA cuQuantum: SDK for accelerating quantum circuits

11:48AM EDT - Aerial: SDK for 5G radio

11:49AM EDT - And NV is already getting ready for 6G

11:49AM EDT - Sionna: new framework for 6G research

11:50AM EDT - MONAI: AI framework for medical imaging

11:50AM EDT - Flare: SDK for federated learning

11:51AM EDT - "The same NVIDIA systems you already own just got faster"

11:51AM EDT - And that's the word on NVIDIA's massive (and growing) library of frameworks

11:52AM EDT - Now talking about the Apollo 13 disaster, and how the fully functional replica on Earth helped to diagnose and deal with the issue

11:52AM EDT - Thus coining the term "digital twin"

11:53AM EDT - "Simulating the world is the ultimate grand challenge"

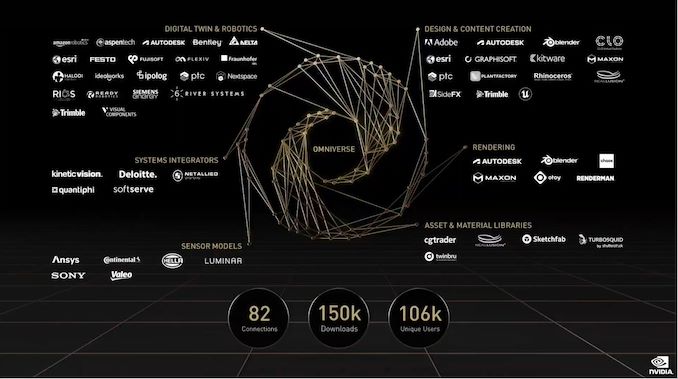

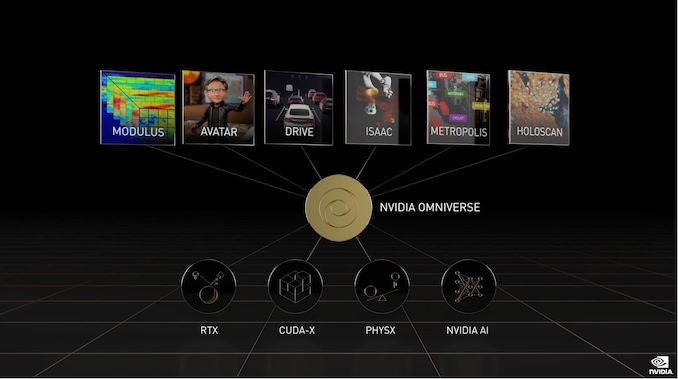

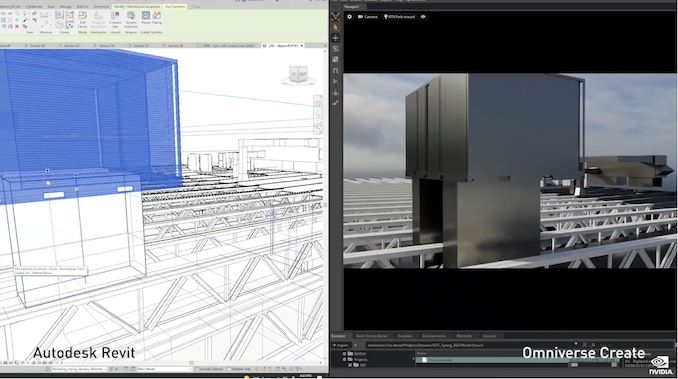

11:53AM EDT - Dovetailing into Omniverse

11:53AM EDT - And what Omniverse is useful for today

11:53AM EDT - Industrial digital twins and more

11:54AM EDT - But first, the technologies that make Omniverse possible

11:54AM EDT - Rendering, materials, particle simulations, physics simulations, and more

11:55AM EDT - "Omniverse Ramen Shop"

11:55AM EDT - (Can't stop for lunch now, Jensen, there's a keynote to deliver!)

11:56AM EDT - Omniverse is scalable from RTX PCs to large systems

11:56AM EDT - "But industrial twins need a new type of purpose-built computer"

11:56AM EDT - "We need to create a synchronous datacenter"

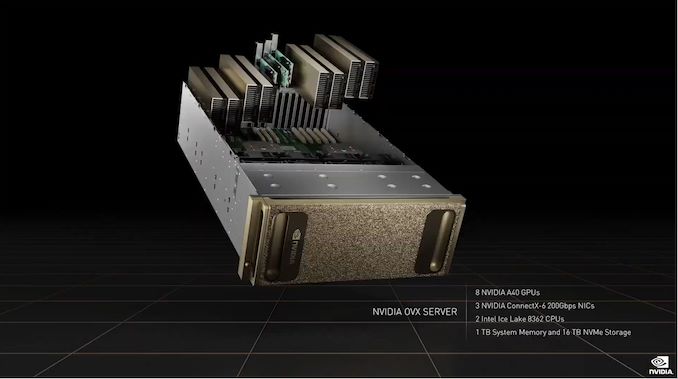

11:56AM EDT - NVIDIA OVX Server

11:57AM EDT - 8 A40s and dual Ice Lake CPUs

11:57AM EDT - And NVIDIA OVX SuperPod

11:57AM EDT - Nodes are synchronized

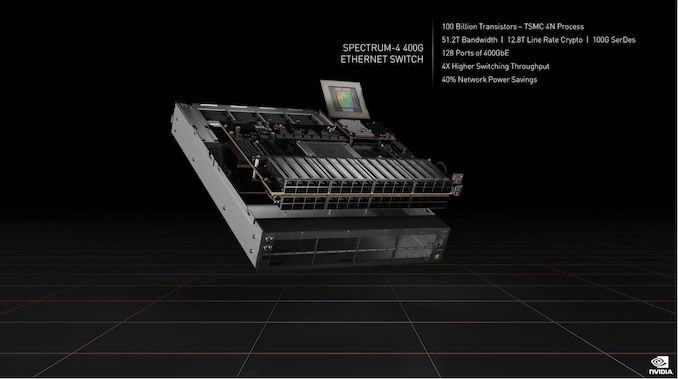

11:57AM EDT - Announcing Spectrum-4 Switch

11:57AM EDT - 400G Ethernet Switch

11:58AM EDT - 100B transistors(!)

11:58AM EDT - World's first 400G end-to-end networking platform

11:58AM EDT - Timing precision to a few nanoseconds

11:59AM EDT - The backbone of their Omniverse computer

11:59AM EDT - Samples in late Q4

12:00PM EDT - NVIDIA is releasing a major Omniverse kit at this GTC

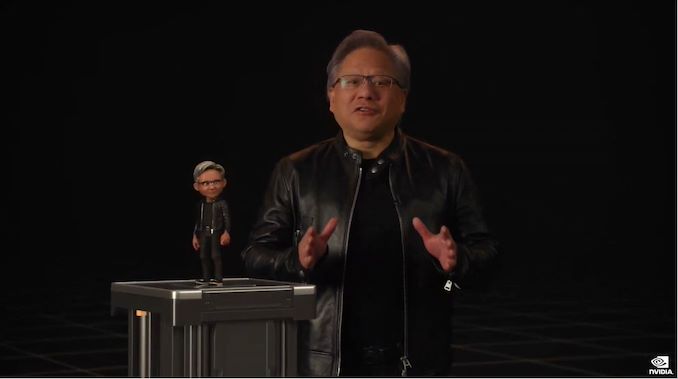

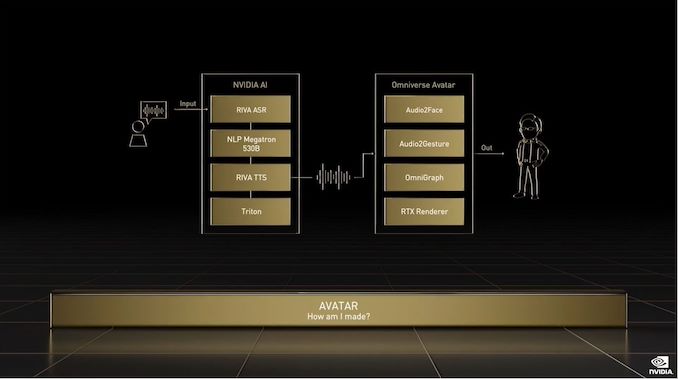

12:00PM EDT - Omniverse Avatar: a framework for building avatars

12:01PM EDT - Showing off Toy Jensen

12:01PM EDT - According to Jensen, TJ is not pre-recorded

12:01PM EDT - NV has also replicated a version of Jensen's voice

12:02PM EDT - The text to speech is a bit rough, but it's a good proof of concept

12:03PM EDT - (At the rate this tech is progressing, I half-expect Jensen to replace himself with an avatar in GTC keynotes later this decade)

12:04PM EDT - Discussing all of the technologies/libraries that went into making Toy Jensen

12:04PM EDT - The next wave of AI is robotics

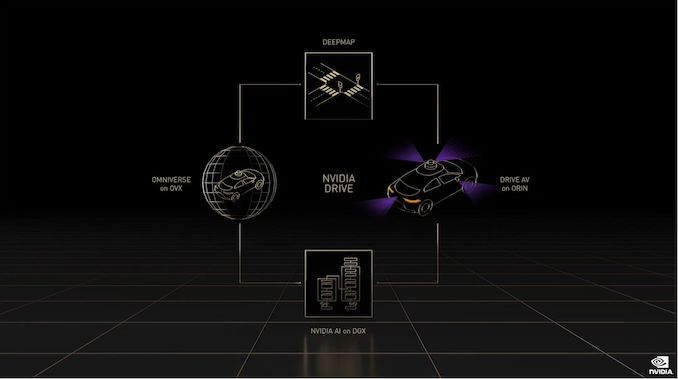

12:04PM EDT - Drive is being lumped in here, along with Isaac

12:06PM EDT - Now rolling a demo video of Drive with an AI avatar

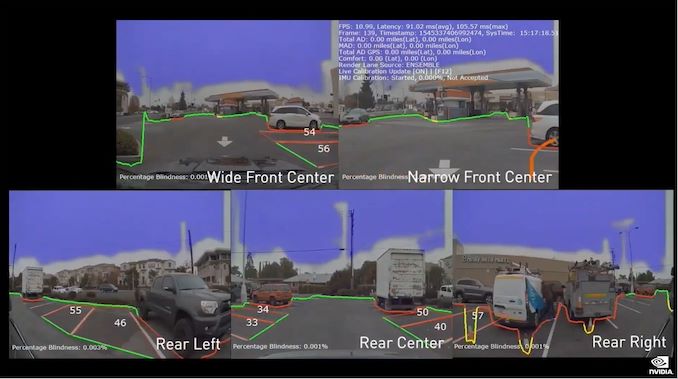

12:08PM EDT - Showing the various sub-features of Drive in action. As well as how the system monitors the driver

12:08PM EDT - And parking assistance, of course

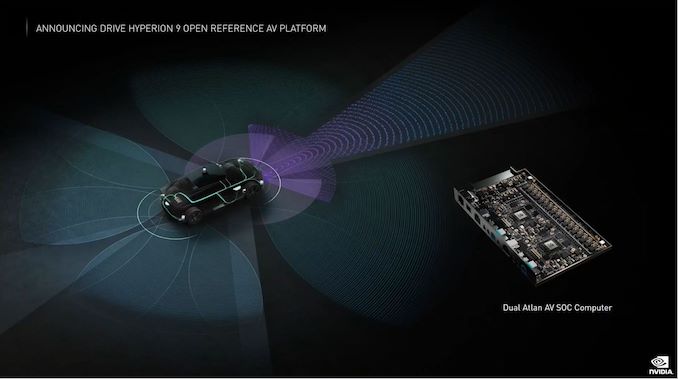

12:09PM EDT - Hyperion is the architecture of NVIDIA's self-driving car platform

12:09PM EDT - Hyperion 8 can achieve full self driving with the help of its large suite of sensors

12:09PM EDT - And Hyperion 9 is being announced today for cars shipping in 2026

12:10PM EDT - H9 will process twice as much sensor data as H8

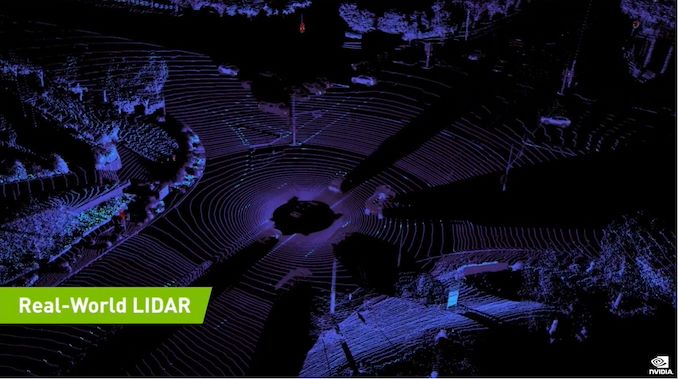

12:10PM EDT - NVIDIA Drive Map

12:10PM EDT - NV expects to map all major highways in North America, Europe, and Asia by the end of 2024

12:12PM EDT - And all of this data can be loaded into Omniverse to create a simulation environment for testing and training

12:12PM EDT - Pre-recorded Drive videos can also be ingested and reconstructed

12:13PM EDT - And that's Drive Map and Drive Sim

12:13PM EDT - Now on to the subject of electric vehicles

12:13PM EDT - And the Orin SoC

12:14PM EDT - Orin started shipping this month (at last!)

12:14PM EDT - BYD, the second-largest EV maker globally, will adopt Orin for cars shipping in 2023

12:14PM EDT - Now on to NV medical projects

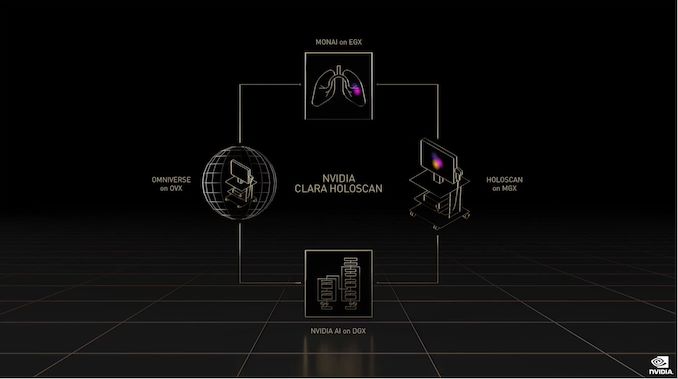

12:15PM EDT - Using NVIDIA's Clara framework to process copious amounts of microscope data in real time

12:16PM EDT - Clara Holoscan

12:17PM EDT - Announcing Clara Holoscan MGX platform, medical grade readiness in Q1 2023

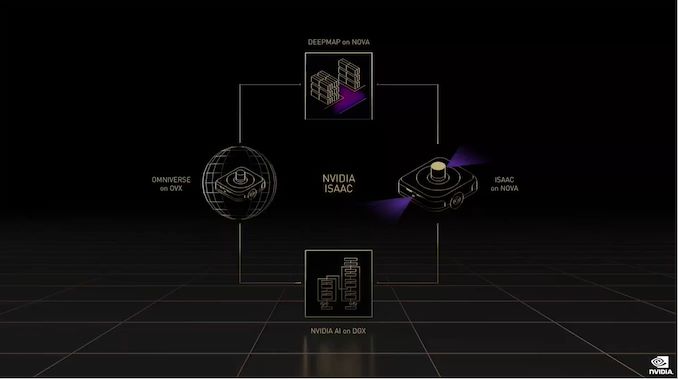

12:18PM EDT - NVIDIA Metropolis and Isaac

12:18PM EDT - "Metropolis has been a phenomenal success"

12:19PM EDT - Customers can use Omniverse to make digital twins of their facilities

12:19PM EDT - PepsiCo has built digital twins of their packaging and distribution centers

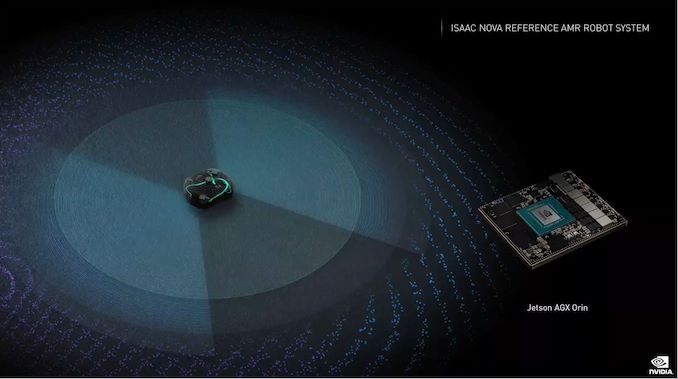

12:20PM EDT - Major release of Isaac: Isaac for AMRs

12:21PM EDT - Isaac Nova: reference AMR robot system

12:21PM EDT - Announcing Jetson Orin developer kits

12:21PM EDT - Nova AMR available in Q2

12:23PM EDT - And using reinforcement learning to train robots in simulation, and then using that training data to program a real robot

12:23PM EDT - Train your robots in a sim until they're ready to move into the real world

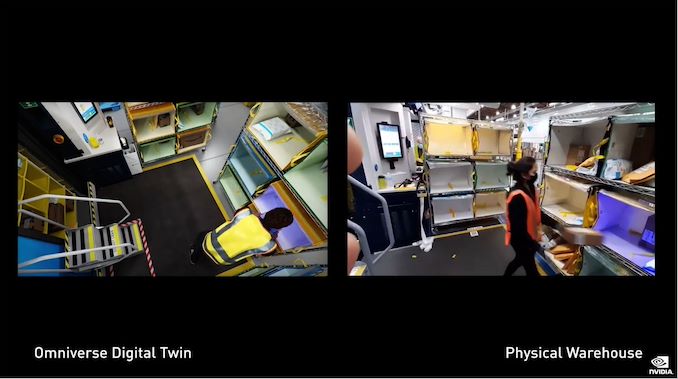

12:26PM EDT - And now a demo of how Amazon is using a digital twin

12:26PM EDT - Omniverse is helping Amazon optimize and simplify their processes

12:27PM EDT - Aggregating data from multiple CAD systems

12:27PM EDT - Test optimization concepts in the digital twin to see if and how they work

12:28PM EDT - And training models far faster in simulation than they could be trained in the real world in real time

12:29PM EDT - Announcing Omniverse Cloud

12:29PM EDT - "One click design collaboration"

12:29PM EDT - Rolling a demo

12:30PM EDT - Streaming Omniverse from GeForce Now, so Omniverse can be accessed on non-RTX systems

12:31PM EDT - And using Toy Jensen, having it modify an Omniverse design via voice commands

12:32PM EDT - Omniverse: for the next wave of AI

12:32PM EDT - Jensen is now recapping today's announcements

12:34PM EDT - H100 is in production with availability in Q3

12:35PM EDT - NVLink is coming to all future NV chips

12:35PM EDT - Omniverse will be integral for action-oriented AI

12:38PM EDT - "We will strive for another million-x in the next decade"

12:38PM EDT - And thanking NV employees, partners, and families

12:38PM EDT - And now for one more thing with Omniverse, which was used to generate all of the renders seen in this keynote

12:39PM EDT - A bit of musical fanfare to close things out

12:42PM EDT - And that's a wrap. Please be sure to check out our Hopper H100 piece: https://www.anandtech.com/show/17327/nvidia-hopper-gpu-architecture-and-h100-accelerator-announced

9 Comments

brucethemoose - Tuesday, March 22, 2022

"(GPT-3 is scary good at times)"

How long before we have an AI liveblogging, and learning from, Nvidia's AI presentations?

Ryan Smith - Tuesday, March 22, 2022

Probably sooner than is good for my career!

brucethemoose - Tuesday, March 22, 2022

Not if you don't tell anyone its an AI!

gescom - Tuesday, March 22, 2022

The more you buy, the more you save.

rmfx - Tuesday, March 22, 2022

In GTC, the G stands for GAME…

It’s like coming to a barbecue to talk about growing trees.

It’s cool they expand their tech to useful fields like medicine, but also respect your public. Spend at least 1 percent of your time talking about what the conference is about.

brucethemoose - Tuesday, March 22, 2022

I think you are confusing this with GDC?

GTC seems to be an abbreviation for "GPU Technology Conference"

rmfx - Tuesday, March 22, 2022

Ah, indeed you are right. I regret my comment then. Great conference then.

Ryan Smith - Tuesday, March 22, 2022

Don't feel too bad. Both are going on this week.

name99 - Tuesday, March 22, 2022

Grace Max and Grace Ultra are cute!

Let's see if their (kinda, sorta, selling to very different markets) competitor when they ship are M2 or M3.