The DirectX 12 Performance Preview: AMD, NVIDIA, & Star Swarm

by Ryan Smith on February 6, 2015 2:00 PM EST - Posted in: GPUs, AMD, Microsoft, NVIDIA, DirectX 12

CPU Scaling

Diving into our look at DirectX 12, let’s start with what is going to be the most critical aspect of a benchmark like Star Swarm: CPU scaling.

Because Star Swarm is designed to exploit the threading inefficiencies of DirectX 11, the biggest gains from switching to DirectX 12 on Star Swarm come from removing the CPU bottleneck. Under DirectX 11 the bulk of Star Swarm’s batch submission work happens on a single thread, and as a result the benchmark is effectively bottlenecked by single-threaded performance, unable to scale out across multiple CPU cores. This is one of the issues DirectX 12 sets out to resolve: the low-level API gives Oxide more direct control over how work is submitted, allowing it to better balance that work across multiple CPU cores.
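The difference between the two submission models can be sketched in a few lines. The Python below is a minimal, hypothetical illustration, not Oxide's engine code and not the Direct3D 12 API: `record_commands`, `split` and `submit_frame` are invented names, each "command list" is just a Python list, and Python's global interpreter lock means the threads only show the structure, not a real speedup. Recording is farmed out to worker threads, while the final submission stays on one thread and preserves draw order.

```python
from concurrent.futures import ThreadPoolExecutor


def record_commands(draw_calls):
    """Hypothetical stand-in for recording one command list on a worker thread.

    No graphics API is called; each recorded command is just a tuple.
    """
    return [("draw", call) for call in draw_calls]


def split(items, workers):
    """Split items into `workers` contiguous chunks whose sizes differ by at most 1."""
    size, extra = divmod(len(items), workers)
    chunks, start = [], 0
    for i in range(workers):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def submit_frame(draw_calls, workers=1):
    """Record command lists in parallel, then submit them in their original order.

    workers=1 approximates a path where one thread does all of the recording;
    larger values spread the recording work across several threads.
    """
    chunks = split(draw_calls, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order, so draw order is preserved.
        command_lists = list(pool.map(record_commands, chunks))
    queue = []
    for command_list in command_lists:  # single-threaded, cheap submission step
        queue.extend(command_list)
    return queue
```

Calling `submit_frame(draws, workers=4)` yields the same ordered queue as `workers=1`; only the place where the recording work happens changes, which is the property a low-level API lets the developer control.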

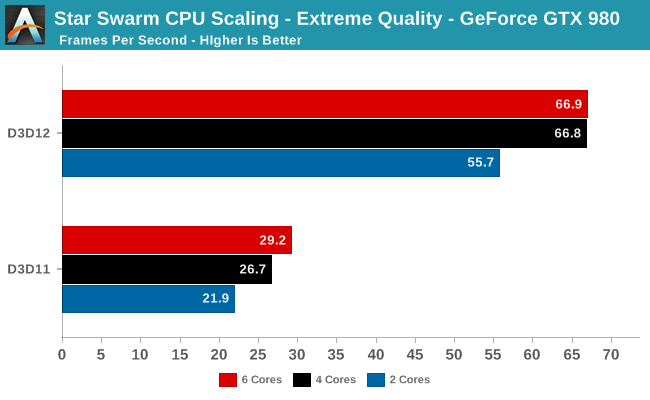

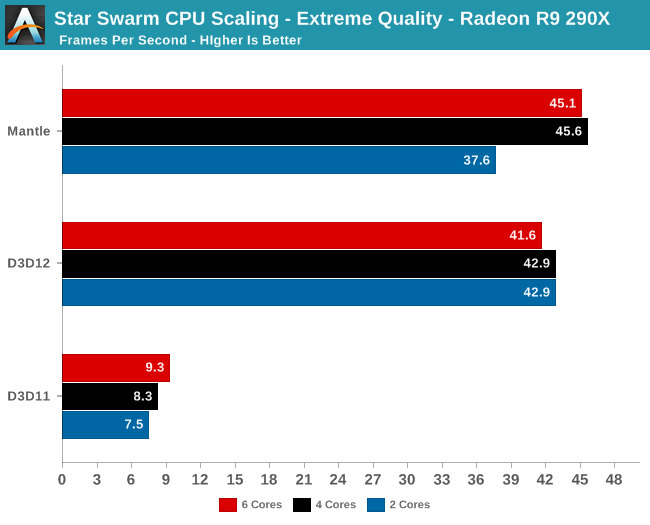

Starting with a look at CPU scaling on our fastest cards, what we find is that besides the absurd performance difference between DirectX 11 and DirectX 12, performance scales roughly as we’d expect among our CPU configurations. Star Swarm's DirectX 11 path, being bound by single-threaded performance, scales only very slightly with clockspeed and core count increases. The DirectX 12 path, on the other hand, scales up moderately well from 2 to 4 cores, but doesn’t scale beyond that. This is because at these settings, even while pushing over 100K draw calls, both cards are solidly GPU-limited, so anything more than 4 cores goes to waste since we’re no longer CPU-bound. This means that we don’t even need a highly threaded processor to take advantage of DirectX 12’s strengths in this scenario, as even a 4-core processor provides plenty of kick.
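The reasoning here boils down to a simple bottleneck model: the delivered frame rate is whichever is lower, the rate at which the CPU can prepare frames or the rate at which the GPU can render them. The sketch below assumes perfectly linear scaling with core count on a threaded path and no core scaling at all on a single-threaded one; the function name and every number in it are hypothetical and are not taken from our benchmark results.

```python
def frame_rate(cores, per_core_fps, gpu_fps, threaded=True):
    """Toy bottleneck model of delivered frames per second.

    per_core_fps: hypothetical frames/second one core can prepare (rises with clockspeed).
    gpu_fps: hypothetical frames/second the GPU can render when it is never starved.
    threaded: False models a submission path bound to a single thread.
    """
    usable_cores = cores if threaded else 1
    cpu_fps = per_core_fps * usable_cores  # assumes ideal linear scaling
    return min(cpu_fps, gpu_fps)  # the slower side sets the pace


# Illustrative numbers only: one core prepares 20 fps, the GPU tops out at 66 fps.
for n in (2, 4, 6):
    print(n, frame_rate(n, 20, 66), frame_rate(n, 20, 66, threaded=False))
# 2 40 20
# 4 66 20
# 6 66 20
```

Once the threaded path hits the GPU cap, extra cores change nothing, which is the shape of the 4-to-6 core results; the single-threaded path only moves if `per_core_fps`, i.e. clockspeed, goes up.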

Meanwhile this setup also highlights the fact that under DirectX 11 there is a massive difference in performance between AMD and NVIDIA. In both cases we are completely CPU-bound, with AMD’s drivers only able to deliver one-third the performance of NVIDIA’s. Given that this is the original Mantle benchmark, I’m not sure we should read too much into the DirectX 11 situation, since AMD has little incentive to optimize for this game, but there is clearly a massive difference in CPU efficiency under DirectX 11 in this case.

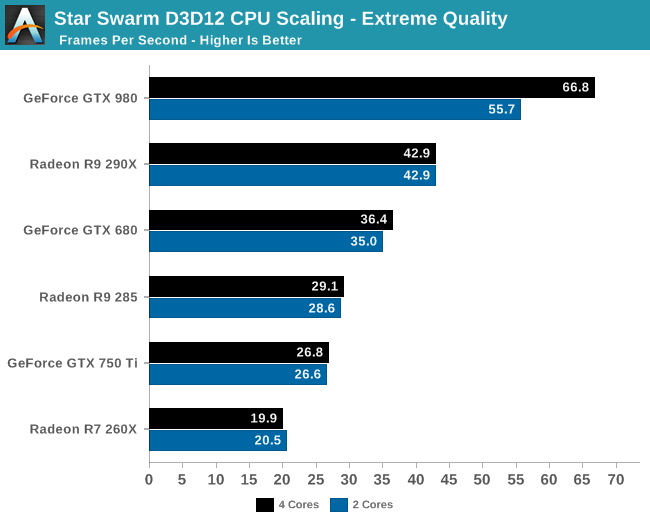

Having effectively ruled out the need for 6-core CPUs for Star Swarm, let’s take a look at a breakdown across all of our cards for performance with 2 and 4 cores. What we find is that Star Swarm and DirectX 12 are so efficient that only our most powerful card, the GTX 980, finds itself CPU-bound with just 2 cores. For the AMD cards and the other NVIDIA cards we can become GPU-bound with the equivalent of an Intel Core i3 processor, showcasing just how effective DirectX 12’s improved batch submission process can be. In fact it’s so efficient that Oxide is running both batch submission and a complete AI simulation on just 2 cores.

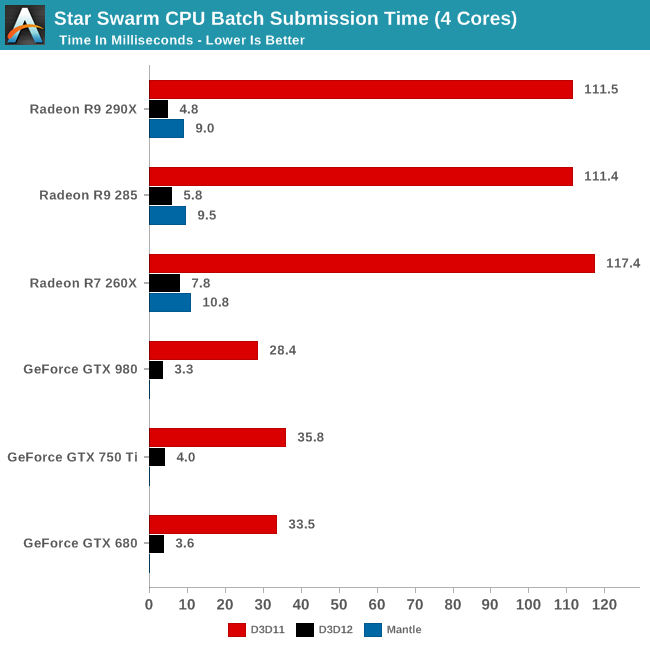

Speaking of batch submission, Star Swarm’s own statistics let us see exactly what is going on there. The results are nothing short of incredible, particularly in the case of AMD. Batch submission time is down from dozens of milliseconds or more to just 3-5ms for our fastest cards, an improvement of just over a whole order of magnitude. For all practical purposes the need to spend CPU time to submit batches has been eliminated entirely, with upwards of 120K draw calls being submitted in a handful of milliseconds. It is this optimization that is at the core of Star Swarm’s DirectX 12 performance improvements, and going forward it could potentially benefit many other games as well.
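To put these batch submission figures in perspective, the arithmetic can be worked through directly. The helper names are hypothetical; the inputs are the approximate numbers quoted above (around 120K draw calls submitted in 3-5ms), plus two assumptions: a 60fps target for the frame-budget comparison, and 30ms as an illustrative stand-in for the "dozens of milliseconds" DirectX 11 case at the same draw count.

```python
def per_draw_ns(submit_ms, draw_calls):
    """Average CPU time spent submitting each draw call, in nanoseconds."""
    return submit_ms * 1_000_000 / draw_calls


def budget_share(submit_ms, target_fps):
    """Fraction of a single frame's time budget taken up by batch submission."""
    frame_budget_ms = 1000 / target_fps
    return submit_ms / frame_budget_ms


# 30 ms is an assumed DX11-style value, not a measured result.
for label, ms in (("DX12, low end", 3), ("DX12, high end", 5), ("DX11, assumed", 30)):
    print(f"{label}: {per_draw_ns(ms, 120_000):.1f} ns/draw, "
          f"{budget_share(ms, 60):.0%} of a 60fps frame")
# DX12, low end: 25.0 ns/draw, 18% of a 60fps frame
# DX12, high end: 41.7 ns/draw, 30% of a 60fps frame
# DX11, assumed: 250.0 ns/draw, 180% of a 60fps frame
```

At tens of nanoseconds per draw, submission fits comfortably inside a frame, whereas a cost in the dozens of milliseconds overruns a 60fps budget on its own.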

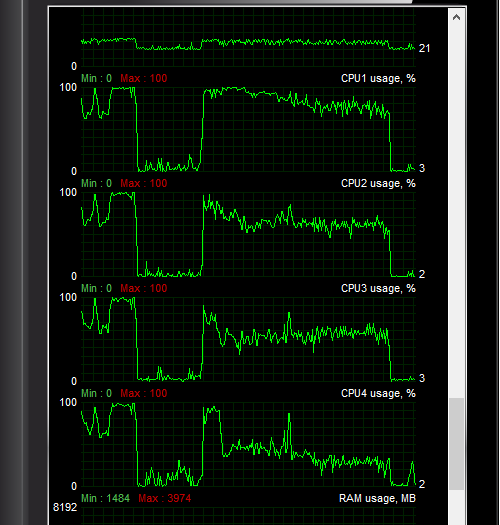

Another metric we can look at is actual CPU usage as reported by the OS, as shown above. In this case CPU usage almost perfectly matches our earlier expectations: with DirectX 11 both the GTX 980 and R9 290X show very uneven usage, with 1-2 cores doing the bulk of the work, whereas with DirectX 12 CPU usage is spread evenly over all 4 CPU cores.

At the risk of belaboring the point, what we’re seeing here is exactly why Mantle, DirectX 12, OpenGL Next, and other low-level APIs have been created. With single-threaded performance struggling to increase while GPUs continue to improve by leaps and bounds with each generation, something must be done to allow games to better spread out their rendering & submission workloads over multiple cores. The solution to that problem is to eliminate the abstraction and let developers do it themselves through APIs like DirectX 12.

245 Comments


silverblue - Saturday, February 7, 2015

This is but one use case. There does need to be an investigation into why AMD is so poor here with all three APIs, however: either a hardware deficiency exposed by the test, or NVIDIA's drivers just handle it better. I'll hold off on the conspiracy theories for now; this isn't Ubisoft, after all.

AnnonymousCoward - Friday, February 6, 2015

Finally a reason to move on from XP!

BadThad - Saturday, February 7, 2015

Great article! Thanks Ryan

PanzerEagle - Saturday, February 7, 2015

Great article! Would like to see a follow-up/add-on article with a system that mimics an Xbox One. Since Windows 10 is coming to the One, it would be great to see the performance delta for the Xbox.

thunderising - Saturday, February 7, 2015

So, Mantle is better, eh.

Nuno Simões - Tuesday, February 10, 2015

How did you come to that conclusion after this article?

randomguy1 - Saturday, February 7, 2015

As per the GPU scaling results, the percentage gain for Radeon cards is MUCH higher than for Nvidia's cards. Nvidia had been ahead of AMD in terms of optimising its software for the hardware, but with the release of DX12 they are almost on level ground. What this means is that similarly priced Radeon cards will get a huge boost in performance compared to their Nvidia counterparts. This is a BIG win for AMD.

nulian - Saturday, February 7, 2015

If you read the article, it said we don't know why AMD's CPU performance is so bad. It might be because NVIDIA's drivers work better with multithreading on DX11 than AMD's, and the benchmark was originally written for AMD.

calzone964 - Saturday, February 7, 2015

I hope Assassin's Creed Unity is patched with this when Windows 10 releases. That game needs this so much...

althaz - Saturday, February 7, 2015

"AMD is banking heavily on low-level APIs like Mantle to help level the CPU playing field with Intel, so if Mantle needs 4 CPU cores to fully spread its wings with faster cards, that might be a problem."

Actually, this is an extra point helping AMD out. Their CPUs get utterly crushed in single-threaded performance, but by and large, at similar prices, their CPUs will nearly always have more cores. Likely AMD simply don't care about dual-core chips, because they don't sell any.