AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

PCI Express 3.0: More Bandwidth For Compute

It may seem like it’s still fairly new, but PCI Express 2.0 is actually a relatively old addition to motherboards and video cards. AMD first added support for it with the Radeon HD 3870 back in 2008, so it’s been nearly 4 years since video cards made the jump. At the same time PCI Express 3.0 has been in the works for some time now, and although it hasn’t been 4 years it feels like it has been much longer. PCIe 3.0 motherboards only became available last month with the launch of the Sandy Bridge-E platform, and now the first PCIe 3.0 video cards are becoming available with Tahiti.

But at first glance it may not seem like PCIe 3.0 is all that important. Additional PCIe bandwidth has proven to be generally unnecessary when it comes to gaming, as single-GPU cards typically only benefit by a couple of percent (if at all) when moving from PCIe 2.1 x8 to x16. There will of course come a time when games need more PCIe bandwidth, but right now PCIe 2.1 x16 (8GB/sec) handles the task with room to spare.

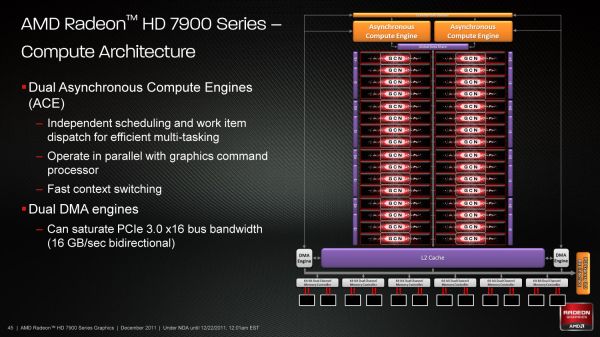

So why is PCIe 3.0 important then? It’s not the games, it’s the computing. GPUs have a great deal of internal memory bandwidth (264GB/sec; more with cache), but shuffling data between the GPU and the CPU is a high-latency, heavily bottlenecked process that tops out at 8GB/sec under PCIe 2.1. And since GPUs are still specialized devices that excel at parallel code execution, a lot of workloads exist that will need to constantly move data between the GPU and the CPU so that parallel code runs on the former and serial code on the latter. As it stands today GPUs are really only best suited for workloads that involve sending work to the GPU and keeping it there; heterogeneous computing is a luxury there isn’t bandwidth for.

The long-term solution of course is to bring the CPU and the GPU together, which is what Fusion does. CPU/GPU bandwidth just in Llano is over 20GB/sec, and latency is greatly reduced due to the CPU and GPU being on the same die. But that doesn’t change the fact that AMD also wants to bring some of these same benefits to discrete GPUs, which is where PCIe 3.0 comes in.

With PCIe 3.0, transport bandwidth is again being doubled, from 500MB/sec per lane in each direction to roughly 1GB/sec per lane in each direction, which for an x16 device means doubling the available bandwidth from 8GB/sec to 16GB/sec. This is accomplished by increasing the transfer rate of the underlying bus from 5 GT/sec to 8 GT/sec, while decreasing encoding overhead from 20% (8b/10b encoding) to about 1.5% through the use of a highly efficient 128b/130b encoding scheme. Meanwhile latency doesn’t change, as it’s largely a product of physics and physical distances, but merely doubling the bandwidth can greatly improve performance for bandwidth-hungry compute applications.
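To make the arithmetic behind those figures concrete, here is a minimal sketch that derives per-lane and x16 bandwidth from the transfer rate and the encoding scheme. The function name and the decimal units (1 MB = 10^6 bytes) are our own choices for illustration, and the results are raw link rates before any packet or protocol overhead.

```python
def lane_bandwidth_mb_s(transfer_rate_gt_s, payload_bits, encoded_bits):
    """Usable bandwidth of one lane in one direction, in MB/sec.

    Hypothetical helper for illustration: each transfer carries one bit
    per lane, and only payload_bits out of every encoded_bits on the
    wire are actual data.
    """
    usable_gbit_s = transfer_rate_gt_s * payload_bits / encoded_bits
    return usable_gbit_s * 1000 / 8  # Gbit/sec -> MB/sec


# PCIe 2.x: 5 GT/sec with 8b/10b encoding (20% overhead)
pcie2_lane = lane_bandwidth_mb_s(5.0, 8, 10)
# PCIe 3.0: 8 GT/sec with 128b/130b encoding (about 1.5% overhead)
pcie3_lane = lane_bandwidth_mb_s(8.0, 128, 130)

lanes = 16
pcie2_x16_gb_s = pcie2_lane * lanes / 1000
pcie3_x16_gb_s = pcie3_lane * lanes / 1000

print(f"PCIe 2.x: {pcie2_lane:.1f} MB/sec per lane, {pcie2_x16_gb_s:.2f} GB/sec at x16")
print(f"PCIe 3.0: {pcie3_lane:.1f} MB/sec per lane, {pcie3_x16_gb_s:.2f} GB/sec at x16")
print(f"Ratio: {pcie3_lane / pcie2_lane:.2f}x")
```

The transfer rate only rises by 1.6x; the rest of the near-doubling comes from the leaner encoding, which is why the x16 figure lands just under a full 16GB/sec.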

As with any other specialized change like this, the benefit is going to heavily depend on the application being used. However, AMD is confident that there are applications that will completely saturate PCIe 3.0 (and then some), and it’s easy to imagine why.

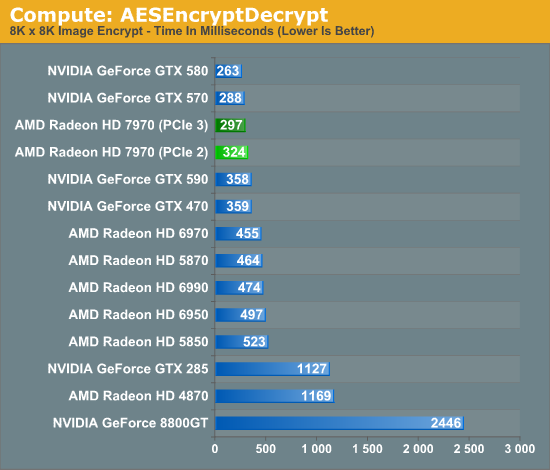

Even among our limited selection of compute benchmarks we found something that directly benefitted from PCIe 3.0. AESEncryptDecrypt, a sample application from AMD’s APP SDK, demonstrates AES encryption performance by running it on square image files. Throwing it a large 8K x 8K image not only creates a lot of work for the GPU, but a lot of PCIe traffic too. In our case simply enabling PCIe 3.0 improved performance by 9%, from 324ms down to 297ms.
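For anyone checking the math on that result, the short sketch below works through the two common ways of expressing the gain from the measured run times. The variable names are ours; only the 324ms and 297ms figures come from the benchmark.

```python
# Measured AESEncryptDecrypt run times from the benchmark above
pcie2_ms = 324.0
pcie3_ms = 297.0

# Reduction in run time relative to the PCIe 2.x result
time_saved_pct = (pcie2_ms - pcie3_ms) / pcie2_ms * 100

# Increase in throughput (work completed per unit time)
throughput_gain_pct = (pcie2_ms / pcie3_ms - 1) * 100

print(f"Run time reduced by {time_saved_pct:.1f}%")
print(f"Throughput improved by {throughput_gain_pct:.1f}%")
```

The 9% figure corresponds to the throughput view of the result; measured as time saved, the same run comes out slightly lower.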

Ultimately having more bandwidth is not only going to improve compute performance for AMD, but will give the company a critical edge over NVIDIA for the time being. Kepler will no doubt ship with PCIe 3.0, but that’s months down the line. In the meantime users and organizations with high bandwidth compute workloads have Tahiti.

292 Comments


nitro912gr - Thursday, December 22, 2011

I was planning a switch from AMD (4850) to a(n) nVidia GPU for my next upgrade, because they perform well both in computing and in gaming, and I need both fields to be filled here. But now I'm not sure about that, I will wait a bit to see how the software will welcome the new architecture first.

I hope they work as well so I can just pick the cheapest GPU.

Chloiber - Thursday, December 22, 2011

The thing I still don't like about the new AMD cards is their massive problems with anisotropic filtering. AMD promised twice (with Cayman and Tahiti) that the "AF-Bug" is gone. But it's still mediocre compared to NV and worse than older cards (pre-R600). The bad thing about this is that it's easily detectable in games and not just a theoretical flaw. It got better than Cayman, but it's still worse than NV's AF.

CeriseCogburn - Thursday, March 8, 2012

Yes, thank you for that. We are supposed to ignore all amd radeon issues and failures and less thans, though, so we can extoll the greatness....Then when they "finally catch up to nvidia years later with some fix", the reviewers can tell us and admit openly that amd radeon has a years long problem of inferiority to nvidia that they "finally solved" and then we can get a gigantic zipped up download to show what "was for years amd fail hidden and not spoken of" is gone !

Hurrah !

Wow it's so much fun seeing it happen, again.

KoVaR - Thursday, December 22, 2011

Awesome job on power consumption and noise levels. If only AMD did so well in the CPU realm...

alpha754293 - Thursday, December 22, 2011

Can you play a game while running a compute job? There's word that even for the nVidia Tesla compute accelerators (based on Fermi) that it stutters when you try to play a game or video while it is actively computing/working on something else.

Is that the case here too?

SlyNine - Thursday, December 22, 2011

I'm sure it does. Context switching still incurs a huge penalty.

MrSpadge - Thursday, December 22, 2011

GCN won't be able to help this on its own. The software needs to catch up. It's a major concern for true GP-GPU and heterogeneous computing, though! And not even just launching a game, trying to use your desktop is enough of a problem already.

MrS

shin0bi272 - Thursday, December 22, 2011

What I'd really like to see is when you guys bench with an nvidia physx game... run the bench with physx on (maxed out if there's an option) once and off once. I know everyone is going to claim that physx is a gimmick but a good portion of that reason is because when a game supports it NO ONE BENCHMARKS IT WITH IT ON! That's like buying a big screen tv and covering half of it with duct tape. And let's not forget AMD opted to not use the tech when nvidia offered it to them... so AMD's loss is Nvidia's gain and no one uses it in their reviews because it's not hardware neutral. That's partial favoritism IMHO.

Also why wasnt the gtx590 or the 6990 tested @ 16x10 dx10 HQ 16xaf on Metro2033? The 580 was tested and the 6970 were but not the dual chip cards. Whats up with that?

Finally - Thursday, December 22, 2011

Spoken like a true Nvidia viral marketing shill

shin0bi272 - Friday, December 23, 2011

So because I prefer the extra eye candy physx offers I cant ask a question about a testing methodology? Sounds like someone has physx envy.