The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST

Posted in Storage

A Wear Leveling Refresher: How Long Will My SSD Last?

As if everything I’ve talked about thus far wasn’t enough to deal with, there’s one more major issue that directly impacts the performance of these drives: wear leveling.

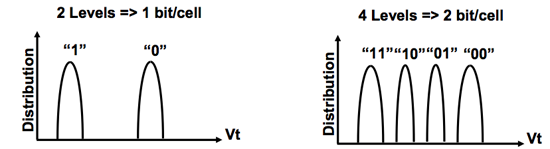

Each MLC NAND cell can be erased ~10,000 times before it stops reliably holding charge. You can switch to SLC flash and up that figure to 100,000, but your cost just went up 2x. For these drives to succeed in the consumer space and do it quickly, they must use MLC flash.

SLC (left) vs. MLC (right) flash

Ten thousand erase/write cycles isn’t much, yet SSD makers are guaranteeing their drives for anywhere from 1 - 10 years. On top of that, SSD makers across the board are calling their drives more reliable than conventional hard drives.

The only way any of this is possible is through some clever algorithms and by banking on the fact that desktop users don’t do a whole lot of writing to their drives.

Think about your primary hard drive. How often do you fill it to capacity, erase and start over again? Intel estimates that even if you wrote 20GB of data to your drive per day, its X25-M would be able to last you at least 5 years. Realistically, that’s a value far higher than you’ll use consistently.

My personal desktop saw about 100GB worth of writes (whether from the OS or elsewhere) to my SSD and my data drive over the past 14 days. That’s a bit over 7GB per day of writes. Let’s do some basic math:

| My SSD | |
| --- | --- |
| NAND Flash Capacity | 256 GB |
| Formatted Capacity in the OS | 238.15 GB |
| Available Space After OS and Apps | 185.55 GB |
| Spare Area | 17.85 GB |

If I never install another application and just go about my business, my drive has 203.4GB of space (185.55GB of free space plus 17.85GB of spare area) to spread out those 7GB of writes per day. That means that in roughly 29 days, if my SSD wear levels perfectly, I will have written to every single available flash block on my drive. Tack on another 7 days if the drive is smart enough to move my static data (the remaining 52.6GB) around to wear level even more evenly. So we’re at approximately 36 days before I exhaust one out of my ~10,000 write cycles. Multiply that out and it would take 360,000 days of using my machine the way I have been for the past two weeks for all of my NAND to wear out; once again, assuming perfect wear leveling. That’s 986 years. Your NAND flash cells will actually lose their charge well before that time comes, in about 10 years.
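The arithmetic above can be reproduced in a few lines of Python. This is only a sketch of the same back-of-the-envelope estimate: the variable names are mine, the day counts are truncated to whole days to match the rounding in the text, and perfect wear leveling is assumed throughout.

```python
# Back-of-the-envelope lifespan estimate using the figures quoted above.
FORMATTED_GB = 238.15       # formatted capacity in the OS
FREE_GB = 185.55            # available space after OS and apps
SPARE_GB = 17.85            # spare area
WRITES_PER_DAY_GB = 7       # ~100GB over 14 days, rounded down
ERASE_CYCLES = 10_000       # approximate MLC endurance per cell

# Space the drive can rotate writes through without touching static data.
dynamic_gb = FREE_GB + SPARE_GB                      # about 203.4 GB
# Static data (OS and apps) that a smarter drive would also relocate.
static_gb = FORMATTED_GB - FREE_GB                   # about 52.6 GB

# Whole days per pass, truncated the same way the text rounds them.
days_dynamic = int(dynamic_gb / WRITES_PER_DAY_GB)   # 29
days_static = int(static_gb / WRITES_PER_DAY_GB)     # 7
days_per_cycle = days_dynamic + days_static          # 36

total_days = days_per_cycle * ERASE_CYCLES           # 360,000
total_years = total_days / 365                       # roughly 986

print(f"{days_per_cycle} days per cycle, {total_days:,} days, ~{total_years:.0f} years")
```

Without the truncation the figure comes out slightly higher (about 36.6 days per cycle), so the rounded numbers in the text are, if anything, on the conservative side.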

This assumes a perfectly wear leveled drive, but as you can already guess, that’s not exactly possible.

Write amplification ensures that while my OS may be writing 7GB per day to my drive, the drive itself is writing more than 7GB to its flash. Remember, writing to a full block will require a read-modify-write. Worst case scenario, I go to write 4KB and my SSD controller has to read 512KB, modify 4KB, write 512KB and erase a whole block. At two bits per MLC cell, I should’ve only taken up one write cycle for 16,384 NAND flash cells; instead I will have knocked off a single write cycle for 2,097,152 cells, 128 times as many.
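To put a number on that worst case, here is a small Python sketch. The 4KB write and 512KB block come from the paragraph above; the function name is hypothetical, and the final figure assumes that every single write hits the worst case, which no real workload does, so treat it as a pessimistic bound and not a prediction.

```python
KB = 1024

HOST_WRITE_BYTES = 4 * KB      # what the OS asked to write
BLOCK_BYTES = 512 * KB         # what the controller rewrites in the worst case

def write_amplification(host_bytes, flash_bytes):
    """Ratio of bytes physically written to flash to bytes the host requested."""
    return flash_bytes / host_bytes

worst_case = write_amplification(HOST_WRITE_BYTES, BLOCK_BYTES)   # 128.0

# Assumption for illustration only: every write is a worst-case write.
IDEAL_DAYS = 360_000           # perfect wear leveling figure from the text
worst_case_years = IDEAL_DAYS / worst_case / 365

print(f"write amplification: {worst_case:.0f}x")
print(f"lifespan if every write were worst case: ~{worst_case_years:.1f} years")
```

Even under that unrealistic assumption the estimate drops from centuries to single-digit years, which is why controllers work so hard to keep write amplification low.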

You can optimize strictly for wear leveling, but that comes at the expense of performance.

295 Comments


zodiacfml - Wednesday, September 2, 2009 - link

Very informative, answered more than anything in my mind. Hope to see this again in the future with these drive capacities around $100.

mgrmgr - Wednesday, September 2, 2009 - link

Any idea if the (mid-Sept release?) OCZ Colossus's internal RAID setup will handle the problem of RAID controllers not being able to pass Windows 7's TRIM command to the SSD array? I'm intent on getting a new Photoshop machine with two SSDs in RAID-0 as soon as Win7 releases, but the word here and elsewhere so far is that RAID will block the TRIM function.

kunedog - Wednesday, September 2, 2009 - link

All the Gen2 X-25M 80GB drives are apparently gone from Newegg . . . so they've marked up the Gen1 drives to $360 (from $230): http://www.newegg.com/Product/Product.aspx?Item=N8...

Unbelievable.

gfody - Wednesday, September 2, 2009 - link

What happened to the gen2 160gb on Newegg? For a month the ETA was 9/2 (today) and now it's as if they never had it in the first place. The product page has been removed.

It's like Newegg are holding the gen2 drives hostage until we buy out their remaining stock of gen1 drives.

iwodo - Tuesday, September 1, 2009 - link

I think it acts as a good summary. However someone wrote last time about Intel drive handling Random Read / Write extremely poorly during Sequential Read / Write. Has Anand investigated yet?

I am hoping next Gen Intel SSD coming in Q2 10 will bring some substantial improvement.

statik213 - Tuesday, September 1, 2009 - link

Does the RAID controller propagate TRIM commands to the SSD? Or will having RAID negate TRIM?

justaviking - Tuesday, September 1, 2009 - link

Another great article, Anand! Thanks, and keep them coming.

If this has already been discussed, I apologize. I'm still exhausted from reading the wonderful article, and have not read all 17 pages of comments.

On PAGE 3, it talks about the trade-off of larger vs. smaller pages.

I wonder if it would be feasible to make a hybrid drive, with a portion of the drive using small pages for faster performance when writing small files, and the majority of it being larger pages to keep the management of the drive reasonable.

Any file could be written anywhere, but the controller would bias small writes to the small pages, and large writes to the large pages.

Externally it would appear as a single drive, of course, but deep down in the internals, it would essentially be two drives. Each of the two portions would be tuned for maximum performance in different areas, but able to serve as backup or overflow if the other portion became full or ever got written to too many times.

Interesting concept? Or a harebrained idea by an ignorant amateur?

CList - Tuesday, September 1, 2009 - link

Great article, wonderful to see insightful, in depth analysis.

I'd be curious to hear anyone's thoughts on what the implications are of running virtual hard disk files on SSDs. I do a lot of work these days on virtual machines, and I'd love to get them feeling more snappy - especially on my laptop which is limited to 4GB of RAM.

For example;

What would the constant updates of those vmdk (or "vhd") files do to the disk's lifespan?

If the OS hosting the VM is windows 7, but the virtual machine is WinServer2003 will the TRIM command be used properly?

Cheers,

CList

pcfxer - Tuesday, September 1, 2009 - link

Great article!

"It seems that building Pidgin is more CPU than IO bound.."

Obviously, Mr. Anand doesn't understand how compilers work ;). Compilers will always be CPU and memory bound; reduce the memory in your computer to say 256MB (or lower) and you'll see what I mean. The levels of recursion necessary to follow the productions (grammars that define the language) use up memory but would rarely use the drive unless the OS had terrible resource management. :0.

CMGuy - Wednesday, September 2, 2009 - link

While I can't comment on the specifics of software compilers I know that faster disk IO makes a big difference when you're performing a full build (compilation and packaging) of software.

IDEs these days spend a lot of their time reading/writing small files (that's a lot of small, random, disk IO) and a good SSD can make a huge difference to this.