NVIDIA Acquires AGEIA: Enlightenment and the Death of the PPU

by Derek Wilson on February 12, 2008 11:00 AM EST, posted in GPUs

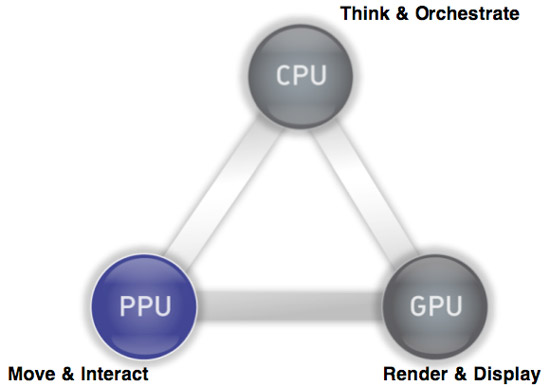

Last week, NVIDIA announced that they have agreed to acquire AGEIA. As most here probably know, AGEIA is the company that makes the PhysX physics engine and acceleration hardware. The PhysX PPU (physics processing unit) is designed to accelerate physics calculations so that developers can deliver more realistic and immersive worlds. The PhysX SDK lets developers write game engines that can run physics on either a CPU or the dedicated hardware.

While this has been a terrific idea in theory, the benefits of the hardware are currently a tough sell. The problem is that game developers can't rely on gamers having a physics card, so they can't base fundamental aspects of gameplay on the assumption that physics hardware is present. It is similar to the situation when hardware 3D graphics acceleration first came onto the scene, except that the impact of hardware 3D was more readily apparent. The long-term benefit of physics hardware lies less in what you see and more in the basic principles of how a game world works.

Currently, developers use PhysX with lowest-common-denominator performance in mind: how fast it can run on a CPU. With added hardware, effects can scale (more particles, more objects, faster processing, etc.), but you can't get much beyond "eye candy" style enhancements; you can't yet expect game developers to build worlds that depend on hardware-accelerated physics.
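To make the lowest-common-denominator problem concrete, here is a minimal Python sketch of how a game might budget its physics work. Everything in it is hypothetical and is not the PhysX SDK API: the `plan_budget` function, the `accelerator_speedup` factor, and the baseline numbers are assumptions made up for illustration. The point is only that gameplay-relevant objects stay fixed at what a CPU can handle, while cosmetic effects scale with whatever hardware is present.

```python
from dataclasses import dataclass

# Illustrative per-frame budgets for a CPU-only machine (made-up numbers).
BASE_GAMEPLAY_BODIES = 200
BASE_EFFECT_PARTICLES = 1_000


@dataclass(frozen=True)
class PhysicsBudget:
    gameplay_bodies: int   # objects whose behavior affects gameplay
    effect_particles: int  # cosmetic debris, sparks, fragments ("eye candy")


def plan_budget(accelerator_speedup: float) -> PhysicsBudget:
    """Pick per-frame physics budgets.

    accelerator_speedup is a hypothetical measure of physics throughput
    relative to the CPU baseline (1.0 means no physics hardware is present).
    """
    speedup = max(1.0, accelerator_speedup)  # never plan below the CPU baseline
    return PhysicsBudget(
        # Fixed: the game has to behave identically on a CPU-only machine.
        gameplay_bodies=BASE_GAMEPLAY_BODIES,
        # Scales: extra hardware only buys more cosmetic effects.
        effect_particles=int(BASE_EFFECT_PARTICLES * speedup),
    )


if __name__ == "__main__":
    print(plan_budget(1.0))  # CPU only
    print(plan_budget(4.0))  # with a (hypothetical) 4x physics accelerator
```

Because `gameplay_bodies` never changes, a machine with physics hardware plays the same game as one without; only the effects count grows.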

NVIDIA's acquisition of AGEIA could change all that by bringing physics hardware to everyone through a software platform that is already built to scale physics capabilities and performance to the underlying hardware. How is NVIDIA going to succeed where AGEIA failed? After all, not everyone has or will have NVIDIA graphics hardware. That's the interesting bit.

PPU/GPU, What's the Difference?

Why Dedicated Hardware?

Ever since AGEIA hit the scene, GPU makers have been jumping up and down saying "we can do that too." Sure, physics can run on GPUs. Both graphics and physics lend themselves to a parallel architecture. There are differences, though, and AGEIA claimed to handle massively parallel and dependent computations much better than anything else out there. That claim is probably true. They built hardware to do lots of physics really well.

The problem is the issue we mentioned above: developers aren't going to push this thing to its limits by creating games centered on dedicated physics hardware. The type of "effects" physics that developers currently use the PhysX hardware for is also well suited to a GPU. Certainly, complex systems with collisions between rigid and soft bodies happening everywhere would drown a GPU, but adding particles, more fragments from explosions, more gibs, or more debris is not a problem for either NVIDIA or AMD.
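Why effects physics maps so well onto a GPU can be shown with a simplified Python sketch (hypothetical names, not code from PhysX or any GPU vendor): particles that don't interact with each other can each be updated from their own state alone, while interacting bodies, tested naively, generate pairwise work that grows with the square of the object count. Real engines cull most pairs with a broad phase, so the pair count here is only an upper bound, not a measure of what any engine actually does.

```python
from dataclasses import dataclass
from typing import List

GRAVITY = -9.81  # m/s^2


@dataclass(frozen=True)
class Particle:
    y: float   # height in meters
    vy: float  # vertical velocity in m/s


def step_particle(p: Particle, dt: float) -> Particle:
    # Semi-implicit Euler step that reads only this particle's own state,
    # so each particle could be handed to a separate GPU thread.
    vy = p.vy + GRAVITY * dt
    return Particle(y=p.y + vy * dt, vy=vy)


def step_effects(particles: List[Particle], dt: float) -> List[Particle]:
    # An independent map over the particles: no particle waits on another.
    return [step_particle(p, dt) for p in particles]


def naive_contact_pairs(n: int) -> int:
    # Interacting bodies are different: a naive all-pairs test checks every
    # body against every other, and resolving one contact can change the
    # inputs to others, creating dependencies between the pieces of work.
    return n * (n - 1) // 2


if __name__ == "__main__":
    debris = [Particle(y=10.0, vy=0.0) for _ in range(1000)]
    debris = step_effects(debris, dt=0.1)
    print(len(debris), "particles updated independently")
    print(naive_contact_pairs(1000), "candidate pairs for 1000 interacting bodies")
```

Doubling the debris count doubles the particle work, but doubling the number of interacting bodies roughly quadruples the naive pair count, which is the kind of dependent workload dedicated physics hardware was built for.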

The Saga of Havok FX

Of course, that's why Havok FX came along. By using shaders to implement a physics engine, Havok FX would have let developers start using more horsepower for effects physics without regard for dedicated hardware. While contemporary GPUs might not have matched the physics processing power of the PhysX hardware, that didn't really matter, because developers were never going to push PhysX to its limits if they wanted to sell games.

But now that Intel has acquired Havok, Havok FX no longer seems to be a priority. From what we can find, it may not even exist anymore. Obviously Intel would prefer that all physics processing stay on the CPU at this point. We can't really blame them; it's good business sense. But it is certainly not the most beneficial outcome for the industry as a whole or for gamers in particular.

And now, with no promise of a physics SDK to support graphics cards, and slow adoption of PhysX hardware, NVIDIA saw itself with an opportunity.

Seriously: Why Dedicated Hardware?

In light of the Intel / Havok situation, NVIDIA's acquisition of AGEIA makes sense. NVIDIA gets the PhysX physics engine, which they can port to their graphics hardware. The SDK is already used in many games across many platforms. Adding GPU acceleration to PhysX instantly gives everyone who owns a PhysX game and an NVIDIA GPU hardware physics acceleration.

As we pointed out, dedicated physics hardware has more potential physics power than current GPUs. But we are also not going to see a high level of physics complexity implemented until developers can be sure consumers have the hardware to handle it. At this stage of hardware-accelerated physics, the GPU is just as good as the PhysX card. Right now there is no benefit to all the power that sits dormant in a PhysX card, and the GPU is a good solution for the kinds of effects developers are actually using PhysX to implement.

The PhysX software engine can scale complexity and performance when hardware is present, and with NVIDIA GPUs effectively becoming that hardware, PhysX gains a much larger install base overnight. This tips the scales away from the need for dedicated hardware and toward replacing the PPU with the GPU, at least for now. We'll look at the future in a second.

32 Comments

kilkennycat - Tuesday, February 12, 2008

Ageia will certainly not make a paperweight for nVidia. This was a very smart acquisition. There is a side of nVidia's business that you may currently know very little about, but that side of their business is probably the fastest growing and potentially the most profitable of all their ventures, considering the type of customer involved. nVidia is providing massively-parallel-processing-on-the-desktop capability for both academic research and the engineering industry, thanks to their CUDA toolset. Time costs money in research and can represent lost-opportunity cost in engineering-related businesses. Waiting in line for centralized servers to crunch numbers is a time-losing game for those involved. Ageia brings a powerful physics package to the party for those in research or industry who need it. No doubt nVidia is furiously working on integrating the Ageia physics library into their CUDA toolset.

MadBoris - Wednesday, February 13, 2008

Fair enough. If the technology is used to better map proteins, it may help nvidia become more prominent in those venues. I agree I know nothing of it, but if it's geared primarily towards those uses and gains a following there, it's doubtful its uses will be as successful in gaming, for the reasons mentioned.