AMD & ATI: The Acquisition from all Points of View

by Anand Lal Shimpi on August 1, 2006 10:26 PM EST- Posted in

- CPUs

AMD’s Position

Given that AMD is the one ponying up $5.4 billion for the acquisition of ATI, there had better be some incredibly good reasons motivating the investment, especially considering that AMD isn't sitting on a ton of cash at the moment. AMD is obviously extremely bullish on the move, but still vague on most details as to what it plans on doing with ATI assuming the deal goes through. The majority of AMD's public statements have been reassuring the market that its intention isn't to become another Intel, that it will continue to value its partners (even those that compete with ATI) and still treat them better than Intel would.

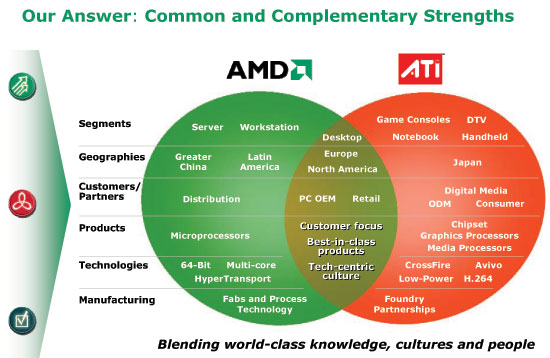

Completing the Platform & Growing x86 Market Share

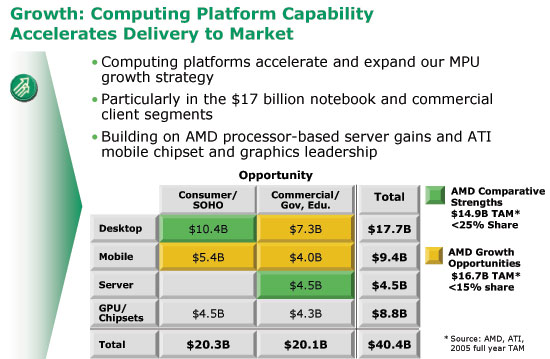

While AMD has always publicly stated that it prefers to work with its partners, rather than against them like Intel does, this move is all about becoming more like Intel. From the platform standpoint, AMD would essentially be expanding its staff to include more engineers, team leaders and product managers that could develop chipsets with and without integrated graphics for AMD processors. Each AMD CPU sold helps sell a great deal of non-AMD silicon (e.g. NVIDIA GPU, NVIDIA North Bridge, NVIDIA South Bridge), and by acquiring ATI, AMD would be able to offer a complete platform that could keep all of those sales in-house. From a sales standpoint, it’s a lot easier to sell a customer a complete package than an individual component. Intel proved the strength of the platform with Centrino and AMD is merely following in the giant’s footsteps.

Going along with completing the platform, being able to provide a complete AMD solution of CPU, motherboard and chipset with integrated graphics could in theory increase AMD’s desktop and mobile market share. According to AMD, each percentage point of x86 market share is worth about $300M in revenues. At current profit margins of around 60%, if the acquisition can help increase AMD’s market share by enough percentage points, it’s a no-brainer. AMD is convinced that with a complete platform, it could take even more market share away from Intel, particularly in the commercial desktop and consumer/commercial mobile markets.
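To put those figures in perspective, here is a rough back-of-envelope sketch, not AMD's own math. It assumes the $300M per point is annual revenue, that the roughly 60% margin applies directly to that revenue, and it ignores operating costs, taxes and the time value of money. The function and constant names are purely illustrative.

```python
# Back-of-envelope sketch built only from figures quoted in the article.
# Assumptions (not from AMD): the $300M per share point is annual revenue,
# and the ~60% margin can be applied straight to it.

REVENUE_PER_SHARE_POINT = 300e6  # USD per percentage point of x86 market share
MARGIN = 0.60                    # "around 60%" profit margin
ACQUISITION_COST = 5.4e9         # USD, price of the ATI acquisition


def profit_per_point(revenue_per_point=REVENUE_PER_SHARE_POINT, margin=MARGIN):
    """Profit attributable to one percentage point of market share."""
    return revenue_per_point * margin


def years_to_recoup(points_gained, cost=ACQUISITION_COST):
    """Years of extra profit needed to cover the purchase price,
    given a sustained gain of `points_gained` share points."""
    if points_gained <= 0:
        raise ValueError("points_gained must be positive")
    return cost / (points_gained * profit_per_point())


if __name__ == "__main__":
    print(f"Profit per share point: ${profit_per_point() / 1e6:.0f}M")
    for points in (1, 3, 5, 10):
        print(f"{points:>2} points gained: {years_to_recoup(points):.1f} years to recoup")
```

Under these simplified assumptions, each point is worth about $180M in profit, so the $5.4 billion price tag corresponds to roughly 30 "point-years" of gains. That is why the deal only looks like a no-brainer if the share gains are both sizable and sustained.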

Step 2 in Becoming Intel: Find Something to do with Older Fabs

Slowly but surely, AMD has been following in Intel’s footsteps, aiming to improve wherever possible. We saw the first hints of this trend with the grand opening of Fab 36 in Dresden, and the more recent commitment to build a fab in New York. AMD wants to get its manufacturing business in shape, which is necessary in order to really go after Intel.

A secondary part of that requirement is that you need to have something to manufacture at older fabs before you upgrade them to help extend the value of your investment. By acquiring ATI, chipsets and even some GPUs can be manufactured at older fabs before they need to be transitioned to newer technologies (e.g. making chipsets at Fab 30 on 90nm while CPUs are made at Fab 36 at 65nm).

Once the New York fab is operational, AMD could have two state of the art fabs running the smallest manufacturing processes, with one lagging behind to handle chipset and GPU production. The lagging fab would change between all three fabs, as they would each be on a staggered upgrade timeline - much like how Intel manages to keep its fabs full. For example, Intel's Fab 11X in New Mexico is a 90nm 300mm fab that used to make Intel's flagship Pentium 4/D processors, but now it's being transitioned to make chipsets alongside older 90nm CPUs while newer 65nm CPUs are being made at newly upgraded fabs.

Presently, AMD has no plans to change the way ATI GPUs and chipsets are manufactured. ATI's business model of using TSMC/UMC for manufacturing will not change for at least the next 1 - 2 years, after which AMD will simply do what makes sense.

What if GPUs and CPUs Become One

If GPUs do eventually become one with CPUs as some are predicting, then the ATI acquisition would be a great source of IP for AMD. For Intel, getting access to IP from companies like ATI isn’t too difficult, because Intel has a fairly extensive IP portfolio that other companies need access to in order to survive (e.g. Intel Bus license). The two companies would simply strike a cross-licensing agreement, and suddenly Intel gets what it wants while the partner gets to help Intel sell more CPUs.

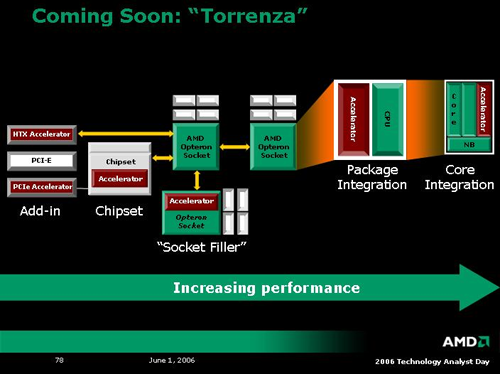

AMD doesn’t quite have the strength of Intel in that department, but by acquiring ATI it would be fairly well prepared for merging CPUs and GPUs. The process doesn't have to be that extreme, however. Remember AMD's Torrenza announcement back at its June 2006 analyst day? Part of the strategy included putting various types of "accelerators" either in a HyperTransport slot or on-package with an AMD CPU, not necessarily on-die.

Conveniently, the "accelerator" blocks are all colored red in AMD's diagram, but you can see many areas where ATI's IP could be used here. AMD could put ATI's Avivo engine in the chipsets for HTPC or CE applications, and you could find an ATI GPU in an HTX slot or integrated on the CPU package.

We're moving to quad-core CPUs next year, and there is definitely some debate about how useful that will truly be for the home computer user. Beyond quad cores, what do CPU manufacturers do to continue to sell product? Ramping up clock speeds is becoming more difficult, and while two cores definitely show some promise, and four cores can be useful, it's really difficult to imagine a computing environment at this point where the typical user needs four or more CPU cores. Long-term, throwing more cores on could give way to putting a GPU into the core, and given the nearly infinitely parallel nature of graphics it becomes a bit easier to make use of additional transistors. Remember that ATI and NVIDIA both have flagship products with over 300M transistors, while AMD is currently using about half that for the 2x512K X2 chips. Core 2 4M is close to 300M transistors, but a large number of those are devoted to cache, and doubling cache quickly has diminishing returns.

A Great Way of Penetrating the CE Market

Intel had a huge showing at this year’s Consumer Electronics Show (CES) in Vegas, making very clear its intentions to be a significant force in the CE market moving forward. AMD unfortunately has very little recognition or penetration in the CE market, but buying ATI would change all of that. Aside from the fact that ATI is in Microsoft’s Xbox 360, an item that Microsoft wants to be entrenched in the Digital Home, ATI silicon is also used in many digital televisions as well as cell phones. By acquiring ATI, AMD would be able to gain entry into the extremely lucrative CE market.

If the world of convergence devices truly does take off, AMD's acquisition of ATI would pay off as it would give AMD the starting exposure necessary to make even further moves into the CE market.

61 Comments

jjunos - Wednesday, August 2, 2006

I believe that the high % ATI is getting here is because of a shortage of chipsets that Intel was experiencing in the last quarter. As the article states, ATI's chipset marketshare increased by 400%.

So as such, I wouldn't necessarily take this breakdown as permanent future marketshares.

JarredWalton - Wednesday, August 2, 2006

I added some clarification (the quality commentary). Basically, Intel is the king of Intel platform chipsets, and NVIDIA rules the AMD platform. ATI sells more total chipsets than NVIDIA at present, but a lot of those go into laptops, and ATI chipset performance on Intel platforms has never been stellar. Then again, VIA chipset performance on Intel platforms has never been great, and they still provide 15% of all chipsets sold.

Of course, the budget sector ships a TON of systems, relatively speaking. That really skews the numbers. Low average profit, lower quality, lower performance, but lots of market share. That's how Intel remains the #1 GPU provider. I'd love to see numbers showing chipset sales if we remove all low-end "budget" configurations. I'm not sure if VIA or SiS would even show up on the charts if we only count systems that cost over $750.

jones377 - Wednesday, August 2, 2006

Before Nvidia bought ULi they had about 5% marketshare IIRC. And I bet of that current 9% Nvidia share, ULi still represents a good portion (probably on the order of 3-4%) and it's all in the low-end. For some reason, Nvidia really sucks at striking OEM deals compared to ATI, which has historically been very good at it. The fact that Intel is selling motherboards with ATI chipsets is really helping ATI though.

According to my previous link, ATI has 28% of the AMD platform market despite being a relative newcomer compared to Nvidia there. I think even without this buyout, ATI would have continued to grow this share; now it is a certainty. I think Nvidia also realised this a while back because they started pushing their chipsets for the Intel platform much more.

Still, Nvidia will have an uphill struggle in the long term. If Intel chooses a new chipset partner in the future (they might just go at it alone instead), they are just as likely to pick SiS or VIA (though I doubt they will go VIA) over Nvidia, and SiS already has a massive marketshare advantage over Nvidia there (well, since Nvidia has almost none, everyone has). So while ATI will likely lose most/all of their Intel chipset marketshare eventually, I doubt Nvidia will gobble up all of that. They will face stiff competition from Intel, SiS and VIA in that order. The one bright spot for Nvidia is that they should continue to hold on to the profitable high-end chipset market for the AMD platform and grow it for the Intel platform. Still, overall this market is very small.

And let's not even mention the mobile market... Intel has that one all gobbled up for itself with the Centrino brand and this AMD/ATI deal will ensure that AMD will have something similar soon. Given the high power consumption of Nvidia chipsets making them already unsuited for the mobile market, even if they come out with an optimised mobile chipset, their window of opportunity is all but gone there now.

Sunrise089 - Wednesday, August 2, 2006

In terms of style, I don't think the initial part of the article (the part with each company's slant) was very clear. Was it pure PR speak (which is how the NVIDIA part read) or AT's targeted analysis (how the Intel part read)?

Second, I think quotes like this:

"Having each company operate entirely independently makes no sense, since we've already discussed that it's what these two can do together that makes this acquisition so interesting."

continue to show you guys are great tech experts, but may also suggest that you guys aren't the best business writers on the web (not saying I am either of course). A lot of companies are acquired by another company solely for the purpose of making money, not any sort of integration or creation of a competitive advantage. If a company is perceived to be undervalued, and another company feels it's currently in a good financial situation, it's a smart move to spend the $$$ on an acquisition if you feel the acquired company's long term growth may out-pace that of the currently wealthier company. Yahoo and Google do this sort of thing all the time, and big companies like Berkshire Hathaway do it as well. Do you think Warren Buffett really wanted to invest in Gillette to allow his employees to obtain cheaper shaving products? No, he simply felt he had money available and buying a share of Gillette would net him money in the long term.

If the ATI/AMD merger only creates the possibility of major collaboration between the two companies in the future (basically as a hedge for both companies against unexpected or uncertain changes in the marketplace) but ATI continues to turn a profit when seen as an individual corporate entity, then the acquisition was the correct thing to do so long as AMD had no better use for the $$$ it will spend on the purchase.

defter - Wednesday, August 2, 2006

If the deal goes through, ATI won't be an individual corporate entity. There will be just one company, and it will be called "AMD".

That's why Anand's comment makes sense. It would be quite silly e.g. for marketing teams to operate independently. Imagine: first AMD's team goes to OEM to sell the CPU. Then the "ATI" team goes to the same OEM to sell GPU/chipset. Isn't it much better to combine those teams so they can offer CPU+GPU/chipset to the OEM at once?

jjunos - Wednesday, August 2, 2006

I can't see ATI simply throwing away their name. They've spent way too much money and time building up their brand, why throw it away now?

Sunrise089 - Wednesday, August 2, 2006

I'm not sold on the usefulness of combining the marketing at all. Yes, to OEMs you would obviously do it, but why on the retail channel? ATI has massive brand recognition, AMD has none in the GPU marketplace. Even if the teams are the same people, using the ATI name would not at all be a ridiculous notion. Auto manufacturers do this all the time: Ford owns Mazda, and for economies of scale purposes builds the Escape and Tribute at the same plant. Then when selling to a rental company, they would both be sold by corporate fleet sales, but when sold to the public Ford and Mazda products are marketed completely independently of one another.

Once again, even if both companies are owned by AMD it is not impossible to still keep the two divisions fairly distinct, and that's where my "when seen as an individual corporate entity" comment came from.

johnsonx - Wednesday, August 2, 2006

Obviously you are referring to ATI and NVIDIA. The 3d revolution certainly did spawn NVIDIA, but my recollection says that ATI has been around far longer than that. I think I still have an ATI Mach-32 EISA-bus graphics card in a box somewhere, and that was hardly ATI's first product. ATI products even predate the 2D graphics accelerator, and even predate VGA if I recall correctly (anyone see any 9-pin monitor plugs lately?). I do suppose your statement is correct in the sense that there were far more graphics chip players in the market 'back then'; today there really are just two giants and about 3 also-rans (Matrox, SiS, XGI). ATI was certainly one of the big players 'back then'; indeed it took me (and ATI too, for that matter) quite some time to figure out that in the 3D Market that ATI was a mere also-ran themselves for awhile; the various 3D Rage chips were rather uncompetitive vs the Voodoo and TNT series of the times.

No offense to you kids who write for AT, but I actually remember the pre-3D days. I sold and serviced computers with Hercules Monochrome graphics adapters, IBM CGA and EGA cards, etc. The advent of VGA was a *BIG DEAL*, and it took quite some time before it was at all common, as it was VERY expensive. I remember many of ATI's early VGA cards had mouse ports on them too (and shipped with a mouse), since the likely reason to even want VGA was to run Aldus Pagemaker which of course required a mouse (at least some versions of it used a run-time version of Windows 1.0... there was also a competing package that used GEM, but I digress).

To make a long story short, in turn by making it even longer, ATI was hardly 'spawned' by the 3D revolution.

now I'll just sit back and wait for the 'yeah, well I remember farther back than you!' flames, along with the 'shut up geezer, no one cares about ancient history!' flames.

Wesley Fink - Wednesday, August 2, 2006

Not everyone at AT is a kid. My 3 children are all older than our CEO, and Gary Key has been around in the notebook business since it started. If I recall I was using a CP/M-based Cromemco when Bill Gates was out pushing DOS as a cheaper alternative to expensive CP/M. I also had every option possible in my earlier TI-99/4A expansion box. There may even be a Sinclair in a box in the attic - next to the first Apple.

You are correct in that ATI pre-dates 3-D and had been around eons before nVidia burst on the scene with their TNT. I'm teaching this to my grandchildren so they won't grow up assuming - like some of our readers - that anyone older than 25 is computer illiterate. All my kids are in the Computer Business and they all still call me for advice.

Gary Key - Thursday, August 3, 2006

I am older than dirt. I remember building and selling Heath H8 kits to pay for college expenses. The days of programming in Benton Harbor Basic and then moving up to HDOS and CP/M were exciting times, LOL. My first Computer Science course allowed me to learn the basics to program the H10 paper tape reader and punch unit and sell a number of units into the local Safeway stores with the H9 video/modem (1200 baud) kit. A year later I upgraded everyone with the H-17 drive units, dual 5.25" floppy drives ($975) that required 16k ($375) of RAM to operate (base machine had 4k of RAM).

Anyway, NVIDIA first started with the infamous NV1 (VRAM) or STG2000 (DRAM) cards that featured 2D/3D graphics and an advanced audio engine (it far exceeded Creative Labs' offerings). Of course Microsoft failed to support Quadratic Texture Maps in the first version of Direct3D, which effectively killed the cards. I remember having to dispose of several thousand Diamond EDGE 3D cards at a former company. They rebounded of course with the RIVA 128 (after spending a lot of time on the ill-fated NV2 for Sega, but it paid the bills) and the rest is history.

While ATI pre-dated most graphics manufacturers, they were still circling the drain from a consumer viewpoint and also starting to lose OEM contracts (except for limited Rage Pro sales due to multimedia performance) in 1997 when they acquired Tseng Labs. Thanks to those engineers, the Rage 128 became a big OEM hit in 1998/1999. Driver performance was still terrible on the consumer 3D side even though the hardware was competitive, but it led to yet another OEM hit, the Radeon 64. The biggest break came in 2000 when they acquired ArtX, and a couple of years later we had the R300, aka Radeon 9700, and the rest is history. If S3 had not failed so badly with driver support and buggy hardware releases in late 1998 with the Savage 3D, ATI very well could have gone the way of Tseng, Trident, and others, as S3 was taking in significant OEM revenue from the Trio and ViRGE series chipsets.

Enough old fart history for tonight, back to work..... :)