The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

Fundamental Windows 10 Issues: Priority and Focus

Normally, software can assume that all cores on a computer are equal, such that any thread can go anywhere and expect the same performance. As we’ve already discussed, the new Alder Lake design of performance cores and efficiency cores means that not everything is equal, and the system has to know where to put each workload for maximum effect.

To this end, Intel created Thread Director, which acts as the ultimate information depot for what is happening on the CPU. It knows which threads are where, what each of the cores can do, how compute-heavy or memory-heavy each thread is, and where the thermal hot spots and voltages fit in. With that information, it sends data to the operating system about how the threads are operating, with suggested actions, or which threads can be promoted/demoted if something new comes in. The operating system scheduler is then the ringmaster: it combines the Thread Director information with what it knows about the user (what software is in the foreground, which threads are tagged as low priority), and it is the operating system that actually orchestrates the whole process.

Intel has said that Windows 11 does all of this. The only thing Windows 10 doesn’t have is insight into the efficiency of the cores on the CPU. It assumes the efficiency is equal, but the performance differs – so instead of ‘performance vs efficiency’ cores, Windows 10 sees it more as ‘high performance vs low performance’. Intel says the net result of this will be seen only in run-to-run variation: there’s more of a chance of a thread spending some time on the low performance cores before being moved to high performance, and so anyone benchmarking multiple runs will see more variation on Windows 10 than Windows 11. But ultimately, the peak performance should be identical.

However, there are a couple of flaws.

At Intel’s Innovation event last week, we learned that the operating system will de-emphasise any workload that is not in user focus. For an office or mobile workload, this makes sense: if you’re in Excel, for example, you want Excel on the performance cores, while those 60 Chrome tabs you have open are background tasks for the efficiency cores. The same goes for email, Netflix, or video games: what you are using there and then matters most, and everything else doesn’t really need the CPU.

However, this breaks down with more professional workflows. Intel gave the example of a content creator who exports a video and, while that is processing, goes to edit some images. This puts the video export on the efficiency cores, while the image editor gets the performance cores. In my experience, the limiting factor in that scenario is the video export, not the image editor: what should take one unit of time on the P-cores suddenly takes 2-3x as long on the E-cores while I’m doing something else. This extends to anyone who multi-tasks during a heavy workload, such as programmers waiting for the latest compile. Under this philosophy, the user would have to keep the important window in focus at all times. Beyond this, any software that spawns heavy compute threads in the background, without ever being able to take focus, would also be placed on the E-cores.
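To make the cost concrete, here is a toy Python model of the focus rule as Intel described it. It is not Windows’ actual scheduler logic: `Task`, `place()`, and `export_time_range()` are hypothetical names, and the 2-3x E-core slowdown is the rough estimate given above, not a measurement.

```python
from dataclasses import dataclass

# Rough E-core slowdown range from the "2-3x" estimate in the text;
# an assumption for illustration, not a measured figure.
E_CORE_SLOWDOWN_RANGE = (2.0, 3.0)


@dataclass
class Task:
    name: str
    in_focus: bool  # whether the task's window currently has user focus


def place(task: Task) -> str:
    """Toy focus-based policy: the focused task gets P-cores, everything else E-cores."""
    return "P" if task.in_focus else "E"


def export_time_range(p_core_time: float, task: Task) -> tuple[float, float]:
    """Estimated (best, worst) wall time for work that takes p_core_time on P-cores."""
    if place(task) == "P":
        return (p_core_time, p_core_time)
    low, high = E_CORE_SLOWDOWN_RANGE
    return (p_core_time * low, p_core_time * high)


export = Task("video export", in_focus=False)
editor = Task("image editor", in_focus=True)
print(place(export), place(editor))     # E P
print(export_time_range(10.0, export))  # (20.0, 30.0)
```

Under this model, a 10-minute export left running behind the image editor stretches to somewhere between 20 and 30 minutes.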

Personally, I think this is a crazy way to do things, especially on a desktop. Intel tells me there are three ways to stop this behaviour:

- Running dual monitors stops it

- Changing Windows Power Plan from Balanced to High Performance stops it

- There’s an option in the BIOS that, when enabled, lets Scroll Lock disable/park the E-cores, so nothing will be scheduled on them while Scroll Lock is active.

(For those interested in Alder Lake confusing some DRM packages like Denuvo, the Scroll Lock option can also be used in that instance to play older games.)
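The three workarounds can be summarised as a small decision function. This is a hypothetical sketch of the behaviour as Intel described it to us: `SystemState` and `background_core_type()` are illustrative names, and the `scroll_lock_parking` flag stands for “BIOS option enabled and Scroll Lock active”.

```python
from dataclasses import dataclass


@dataclass
class SystemState:
    monitors: int
    power_plan: str            # e.g. "Balanced" or "High Performance"
    scroll_lock_parking: bool  # hypothetical: BIOS option enabled AND Scroll Lock on


def background_core_type(state: SystemState) -> str:
    """Where unfocused heavy work may run: "E", "P", or "any" (no demotion)."""
    if state.scroll_lock_parking:
        # E-cores are parked, so nothing can be scheduled on them at all.
        return "P"
    if state.monitors >= 2 or state.power_plan == "High Performance":
        # Either override disables the focus-based demotion.
        return "any"
    # Default single-monitor, Balanced-plan case: background work goes to E-cores.
    return "E"


print(background_core_type(SystemState(1, "Balanced", False)))          # E
print(background_core_type(SystemState(1, "High Performance", False)))  # any
```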

For users that only have one window open at a time, or that aren’t relying on any serious all-core, time-critical workload, this won’t really matter. For anyone else, it’s a bit of a problem. And the problems don’t stop there, at least for Windows 10.

Knowing my luck, by the time this review goes out it might be fixed, but:

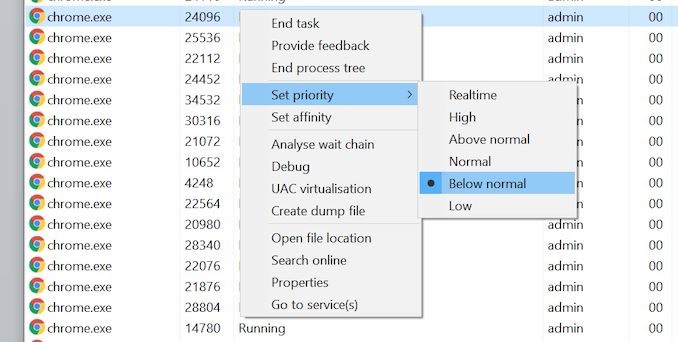

Windows 10 also uses a thread’s in-OS priority as a guide for core scheduling. Anyone who has played around with Task Manager will know there is an option to give a program a priority: Realtime, High, Above Normal, Normal, Below Normal, or Low (Idle). The default is Normal. Behind the scenes this is actually a number from 0 to 31, where Normal is 8.
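For reference, that 0-31 number comes from combining a process’s priority class with a thread’s relative priority level. The sketch below encodes Microsoft’s documented base-priority table for the six classes Task Manager exposes; it ignores the dynamic priority boosts Windows applies at runtime, and the dictionary and function names are our own.

```python
# Base priority of each process priority class (Task Manager names).
CLASS_BASE = {
    "Low": 4,  # IDLE_PRIORITY_CLASS
    "Below Normal": 6,
    "Normal": 8,
    "Above Normal": 10,
    "High": 13,
    "Realtime": 24,
}

# Offsets of the relative thread priority levels from the class base.
THREAD_OFFSET = {
    "Lowest": -2,
    "Below Normal": -1,
    "Normal": 0,
    "Above Normal": 1,
    "Highest": 2,
}


def base_priority(priority_class: str, thread_level: str) -> int:
    """Combine a process priority class and a thread level into a 0-31 base priority."""
    realtime = priority_class == "Realtime"
    # Idle and Time Critical map to fixed values rather than an offset.
    if thread_level == "Idle":
        return 16 if realtime else 1
    if thread_level == "Time Critical":
        return 31 if realtime else 15
    return CLASS_BASE[priority_class] + THREAD_OFFSET[thread_level]


print(base_priority("Normal", "Normal"))        # 8
print(base_priority("Normal", "Below Normal"))  # 7
```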

Some software will naturally give itself a lower priority, usually 7 (Below Normal), to tell the operating system either ‘I’m not important’ or ‘I’m a heavy workload and I want the user to still have a responsive system’. The second case is a problem on Windows 10, because with Alder Lake it will schedule that workload on the E-cores. So even though it is a heavy workload, it gets moved to the E-cores and runs slower than it would if it were simply spread across all cores at a lower priority. This happens regardless of whether the program is in focus or not.
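A minimal sketch of the Windows 10 behaviour described above, assuming a simple threshold at Normal (8) and the Core i9-12900K’s 8 P-core + 8 E-core layout. It models the symptom, not Microsoft’s scheduler code; `cores_available()` is a hypothetical helper, and its `in_focus` argument is accepted but ignored because focus makes no difference here.

```python
P_CORES, E_CORES = 8, 8  # Core i9-12900K: 8 P-cores + 8 E-cores
NORMAL_PRIORITY = 8      # base priority of a Normal thread in a Normal process


def cores_available(base_priority: int, in_focus: bool = True) -> dict:
    """Toy Windows 10 model: below-Normal threads are confined to the E-cores.

    in_focus is deliberately ignored: the priority rule applies whether
    or not the program has focus.
    """
    if base_priority < NORMAL_PRIORITY:
        return {"P": 0, "E": E_CORES}
    return {"P": P_CORES, "E": E_CORES}


print(cores_available(7))  # {'P': 0, 'E': 8}
print(cores_available(8))  # {'P': 8, 'E': 8}
```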

Of the normal benchmarks we run, this issue flared up mainly with rendering tasks like Cinebench, Corona, and POV-Ray, but it also happened with y-cruncher and KeyShot (a visualization tool). Speaking to others, it appears that Chrome sometimes has a similar issue. The only way to fix these programs was to go into Task Manager and either (a) change the priority to Normal or higher, or (b) change the affinity to only the P-cores. Software such as Process Lasso can be used to make sure that every time these programs are loaded, the priority is bumped up to Normal.
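Option (b) is an affinity mask: one bit per logical processor. The sketch below builds that mask. It assumes Windows numbers the 12900K’s 16 P-core threads as logical processors 0-15 and the 8 E-cores as 16-23; check the actual numbering on your own system before relying on it. `affinity_mask()` is a hypothetical helper.

```python
def affinity_mask(cpus) -> int:
    """Windows-style affinity bitmask: bit n set means logical processor n is allowed."""
    mask = 0
    for cpu in cpus:
        mask |= 1 << cpu
    return mask


# ASSUMED Core i9-12900K numbering (verify on your own system):
# 8 P-cores x 2 threads -> logical processors 0-15,
# 8 E-cores (no Hyper-Threading) -> logical processors 16-23.
P_CORE_CPUS = range(0, 16)
E_CORE_CPUS = range(16, 24)

print(f"{affinity_mask(P_CORE_CPUS):X}")  # FFFF
print(f"{affinity_mask(E_CORE_CPUS):X}")  # FF0000
```

Under that assumption, the P-core-only mask is FFFF, the hex form accepted by cmd’s `start /affinity` switch (which can be combined with `/normal` to set the priority class). With the third-party psutil package, the CPU list itself could instead be passed to `psutil.Process(pid).cpu_affinity()`.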

474 Comments


Spunjji - Friday, November 5, 2021

N7 is a little denser than Intel's 10nm-class process: 15-20% in comparable product lines (e.g. Renoir vs. Ice Lake, Lakefield vs. Zen 3 compute chiplet). There is no indication that Intel 7 is denser than previous iterations of 10nm. N7 also appears to have better power characteristics. It's difficult to tell, though, because Intel are pushing much harder on clock speeds than AMD and have a wider core design, both of which would increase power draw even on an identical process.

Blastdoor - Thursday, November 4, 2021

I’m a little surprised by the low level of attention to performance/watt in this review. ArsTechnica gave a bit more info in that regard, and Alder Lake looks terrible on performance/watt. If Intel had achieved this performance with similar efficiency to AMD, I would have bought Intel stock today.

But the efficiency numbers here are truly awful. I can see why this is being released as an enthusiast desktop processor -- that's the market where performance/watt matters least. In the mobile and data center markets (i.e., the big markets), these efficiency numbers are deal breakers. AMD appears to have nothing to fear from Intel in the markets that matter most.

meacupla - Thursday, November 4, 2021

Yeah, the power consumption of the 12900K is quite bad. From other reviews, it's pretty clear that the highest-end air cooling is not enough for the 12900K, and you will need a thick 280mm or 360mm water cooler to keep it cool.

Ian Cutress - Thursday, November 4, 2021

I think there are some issues with temperature readings on ADL. A lot of software reports 100C with only 3 P-cores loaded, but even with all cores loaded, the CPU doesn't de-clock at that temp. My MSI AIO has a temperature display, and it only showed 75C at load. I've got questions out in a few places - I think Intel switched some of the thermal monitoring stuff inside and people are polling the wrong things. Other press are showing 100C quite easily too. I'm asking MSI how their AIO had 75C at load, but I'm still waiting on an answer. An ASUS rep said that 75-80C should be normal under load. So why everything is saying 100C I have no idea.

Blastdoor - Thursday, November 4, 2021

Note that the ArsTechnica review looks at power draw from the wall, so unaffected by sensor issues.

jamesjones44 - Thursday, November 4, 2021

They also show the 5900x somehow drawing more power than a 5950x at full load. While I'm sure Intel is drawing more power, I question their testing methods given we know there is very little chance of a fully loaded 5950x drawing less than a 5900x unless they won or lost the CPU lottery.

TheinsanegamerN - Thursday, November 4, 2021

TechSpot and TPU also show that, and it has been explained before that the 5950x gets the premium dies and runs at a lower core voltage than the 5900x, so it pulls less power despite having more cores.

haukionkannel - Thursday, November 4, 2021

The 5950x uses better chips than the 5900x... that is the reason for the power usage!

vegemeister - Saturday, November 6, 2021

5950X can hit the current limit when all cores are loaded, so the power consumption folds back.

meacupla - Thursday, November 4, 2021

75C reading from the AIO, presumably a reading from the base plate, is quite hot, I must say.