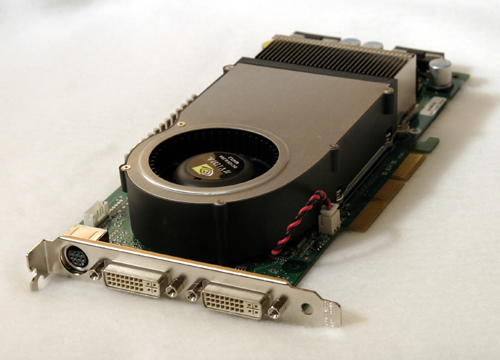

NVIDIA GeForce 6800 Ultra: The Next Step Forward

by Derek Wilson on April 14, 2004 8:42 AM EST - Posted in GPUs

Introduction

Today has been a long time in coming, especially for NVIDIA. It has been almost 2 years since they were on top of the industry, and they definitely want their position back. After the troubles of the NV3x line of cards, NVIDIA has really been pushing to squeeze as much performance as possible out of their CineFX architecture.

In the pursuit of performance, they have produced a massive GPU capable of pushing a great many pixels. We are also seeing the introduction of a handful of DX 9.0c (Shader Model 3.0) features. Of course, as with everything, there are pros and cons. Do the ups outweigh the downs?

First, let's look at the new features we can expect from this generation of graphics cards; then we'll take a look at the cost.

77 Comments


Marsumane - Wednesday, April 14, 2004

This card owns... Anyone know when it ships to retail stores? Guesses even?

SpaceRanger - Wednesday, April 14, 2004

I'd like to see what ATI comes up with before I make my decision. I rushed to judgement back when the GF4 TI4600 came out and regretted making the quick call to buy. If I don't have to get a new PSU for the ATI solution, I'll consider it, even if its performance is 5-10 FPS slower. Adding 100 bucks for a PSU on top of the already costly 500 for the card doesn't justify the expenditure.

gordon151 - Wednesday, April 14, 2004

AtaStrumf is so right. More than likely you'll be able to buy the X800s before you can buy this.

Shinei - Wednesday, April 14, 2004

Well, I'm sold. Yeah, that sounds fanboyish, but this thing is a solid performer and doesn't require me to completely replace my display drivers... Even if ATI wins by five FPS and has a lens flare in a forgotten corner of a screenshot that you have to stare at for ten minutes to spot, my money is going to NV40, assuming the prices come down a little. ;)

Speaking of DX9/PS2.0, what about a Max Payne 2 benchmark? I'm curious what NV40 can do in that game with everything maxed out... :)

skiboysteve - Wednesday, April 14, 2004

I love AnandTech's deep technical reviews, but y'all did nowhere near enough testing. The Xbit article does a hell of a lot more testing, 48 pages! http://www.xbitlabs.com/articles/video/display/nv4...

the card fucking rapes everything.

The Anand tests don't show nearly the rape the Xbit ones do...

AtaStrumf - Wednesday, April 14, 2004

I find it really funny when people say that they will wait until ATi releases their X800 to make their buying decisions. It's not like you can run out and BUY this card right now or tomorrow. Of course you will wait. You don't really have a choice :)

ChronoReverse - Wednesday, April 14, 2004

The Tech Report tested the total power draw of this thing, and it drew only slightly more than the 5950 (both of which draw more than the 9800XT). So it seems the PSU recommendation isn't actually necessary (and my Enermax's enhanced 12V lines will handle it easily).

Pete - Wednesday, April 14, 2004

mkruer #27, all the reviews I've read mention $500 for the 6800U, and $299 for a 12-pipe 128MB 6800.

DerekWilson - Wednesday, April 14, 2004

#27, the 6800 Ultra (which we tested) will be priced at $500.

The 6800 (with 12 pipes rather than 16) will be priced at $300.

Pete - Wednesday, April 14, 2004

quikah #26: Far Cry comparison screens are at HOCP: http://hardocp.com/article.html?art=NjA2LDU=

Apparently PS3 wasn't enabled, but the 6800U looks better than the 5950U running PS2. It's still uglier than the 9800XT, sadly. Banding abounds, both here and in FiringSquad's Lock-On screens. Puzzling, really. If the 6800U really runs FP32 as fast as FP16 within memory limits, I wonder if all it will take to get IQ on a level with ATi is forcing the 6800U to run the ATi path or removing the NV3x path's _pp hints.