Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM ESTCPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze every little bit of performance out. Sometimes rendering programs end up being heavily memory dependent as well - when you have that many threads flying about with a ton of data, having low latency memory can be key to everything. Here we take a few of the usual rendering packages under Windows 10, as well as a few new interesting benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

Corona 1.3: link

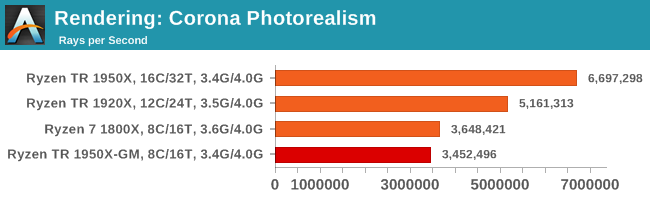

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple - shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and offers results in terms of time and rays per second. The official benchmark tables list user submitted results in terms of time, however I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up being very staggered based on thread count.

Corona loves threads. Game Mode goes behind the 1800X due to frequency.

Blender 2.78: link

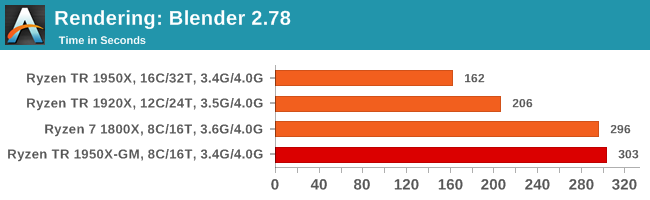

For a render that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. Being one of the bigger open source tools out there, it means both AMD and Intel work actively to help improve the codebase, for better or for worse on their own/each other's microarchitecture.

Blender loves threads.

LuxMark v3.1: Link

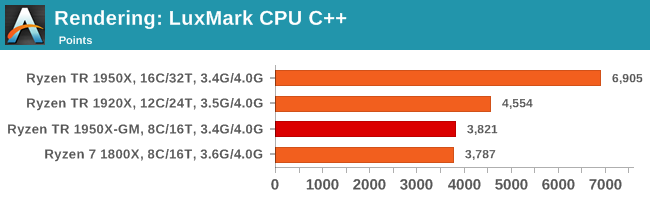

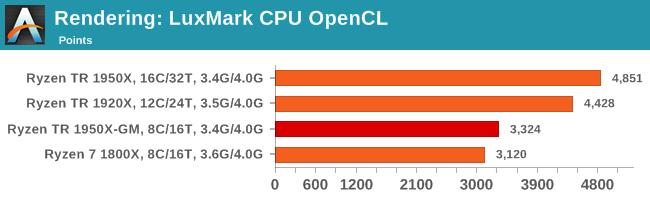

As a synthetic, LuxMark might come across as somewhat arbitrary as a renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from seeing the comparison in each coding mode for cores and IPC, we also get to see the difference in performance moving from a C++ based code-stack to an OpenCL one with a CPU as the main host.

Like Blender, LuxMark is all about the thread count. Ray tracing is very nearly a textbook case for easy multi-threaded scaling, although a couple of things pop up in the OpenCL version. Aside from the scores being lower, the jump from 1920X to 1950X isn't that great, and the quad-channel DRAM of the 1950X in Game Mode puts it over the 1800X.

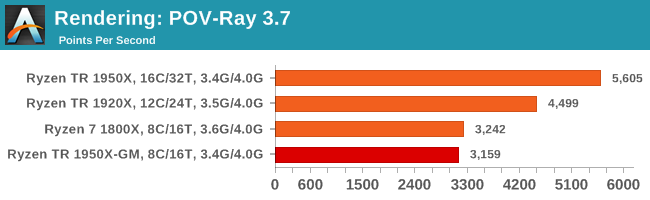

POV-Ray 3.7.1b4: link

Another regular benchmark in most suites, POV-Ray is another ray-tracer but has been around for many years. It just so happens that during the run up to AMD's Ryzen launch, the code base started to get active again with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that was happening, but given time we will see where the POV-Ray code ends up and adjust in due course.

POV-Ray loves threads.

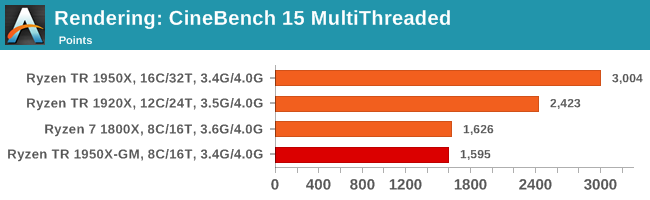

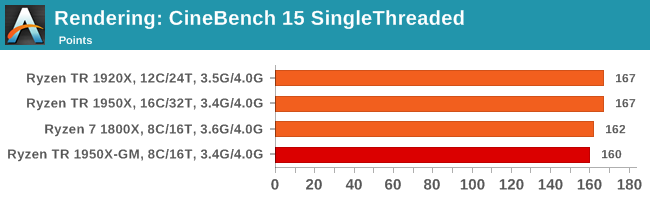

Cinebench R15: link

The latest version of CineBench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency gives performance in ST, whereas having good scaling and many cores is where the MT test wins out.

Multithreaded results are as expected, and single thread seems to benefit a bit from more DRAM channels, although 200 MHz is enough to put the 1800X over the 1950X in Game Mode.

104 Comments

View All Comments

Lieutenant Tofu - Friday, August 18, 2017 - link

"... we get an interesting metric where the 1950X still comes out on top due to the core counts, but because the 1920X has fewer cores per CCX, it actually falls behind the 1950X in Game Mode and the 1800X despite having more cores. "Would you mind elaborating on this? How does the proportion of cores per CCX affect performance?

JasonMZW20 - Sunday, August 20, 2017 - link

The only thing I can think of is CCX cache locality. Given a choice, you want more cores per CCX to keep data on that CCX rather than using cross-communication between CCXes through L2/L3. Once you have to communicate with the other CCX, you automatically incur a higher average latency penalty, which in some cases, is also a performance penalty (esp. if data keeps moving between the two CCXes).Lieutenant Tofu - Friday, August 18, 2017 - link

On the compile test (prev page):"... we get an interesting metric where the 1950X still comes out on top due to the core counts, but because the 1920X has fewer cores per CCX, it actually falls behind the 1950X in Game Mode and the 1800X despite having more cores. "

Would you mind elaborating on this? How does the proportion of cores per CCX affect performance?

rhoades-brown - Friday, August 18, 2017 - link

This gaming mode intrigues me greatly- the article states that the PCIe lanes and memory controller is still enabled, but the cores are turned off as shown in this diagram:http://images.anandtech.com/doci/11697/kevin_lensi...

If these are two complete processors on one package (as the diagrams and photos show), what impact does having gaming mode enabled and a PCIe device connected to the PCIe controller on the 'inactive' side? The NUMA memory latency seems to be about 1.35 surely this must affect the PCIe devices too- further how much bandwidth is there between the two processors? Opteron processors use HyperTransport for communication, do these do the same?

I work in the server world and am used to NUMA systems- for two separate processor packages in a 2 socket system, cross-node memory access times is normally 1.6x that of local memory access. For ESXi hosts, we also have particular PCIe slots that we place hardware in, to ensure that the different controllers are spread between PCIe controllers ensuring the highest level of availability due to hardware issue and peek performance (we are talking HBAs, Ethernet adapters, CNAs here). Although, hardware reliability is not a problem in the same way in a Threadripper environment, performance could well be.

I am intrigued to understand how this works in practice. I am considering building one of these systems out for my own home server environment- I yet to see any virtualisation benchmarks.

versesuvius - Friday, August 18, 2017 - link

So, what is a "Game"? Uses DirectX? Makes people act stupidly? Is not capable of using what there is? Makes available hardware a hindrance to smooth computing? Looks like a lot of other apps (that are not "Game") can benefit from this "Gaming Mode".msroadkill612 - Friday, August 18, 2017 - link

A shame no Vega GPU in the mix :(It may have revealed interesting synergies between sibling ryzen & vega processors as a bonus.

BrokenCrayons - Friday, August 18, 2017 - link

The only interesting synergy you'd get from a Threadripper + Vega setup is an absurdly high electrical demand and an angry power supply. Nothing makes less sense than throwing a 180W CPU plus a 295W GPU at a job that can be done with a 95W CPU and a 180W GPU just as well in all but a few many-threaded workloads (nevermind the cost savings on the CPU for buying Ryzen 7 or a Core i7).versesuvius - Friday, August 18, 2017 - link

I am not sure if I am getting it right, but apparently if the L3 cache on the first Zen core is full and the core has to go to the second core's L3 cache there is an increase in latency. But if the second core is power gated and does not take any calls, then the increase in latency is reduced. Is it logical to say that the first core has to clear it with the second core before it accesses the second core's cache and if the second core is out it does not have to and that checking with the second core does not take place and so latency is reduced? Moving on if the data is not in the second core's cache then the first core has to go to DRAM accessing which supposedly does not need clearance from the second core. Or does it always need to check first with the second core and then access even the DRAM?BlackenedPies - Friday, August 18, 2017 - link

Would Threadripper be bottlenecked by dual channel RAM due to uneven memory access between dies? Is the optimal 2 DIMM setup one per die channel or two on one die?Fisko - Saturday, August 19, 2017 - link

Anyone working on daily basis just to view and comment pdf won't use acrobat DC. Exception can be using OCR for pdf. Pdfxchange viewer uses more threads and opens pdf files much faster than Adobe DC. I regularly open files from 25 to 80 mb of CAD pdf files and difference is enormous.