The AMD Radeon R9 Fury Review, Feat. Sapphire & ASUS

by Ryan Smith on July 10, 2015 9:00 AM EST

Grand Theft Auto V

The final game in our review of the R9 Fury is our most recent addition, Grand Theft Auto V. The latest edition of Rockstar’s venerable series of open world action games, Grand Theft Auto V was originally released to the last-gen consoles back in 2013. However, thanks to a rather significant facelift for the current-gen consoles and PCs, along with the ability to greatly turn up rendering distances and add other features like MSAA and more realistic shadows, the end result is a game that is still among the most stressful of our benchmarks when all of its features are turned up. Furthermore, in a move rather uncharacteristic of most open world action games, Grand Theft Auto V also includes a very comprehensive benchmark mode, giving us a great chance to look into the performance of an open world action game.

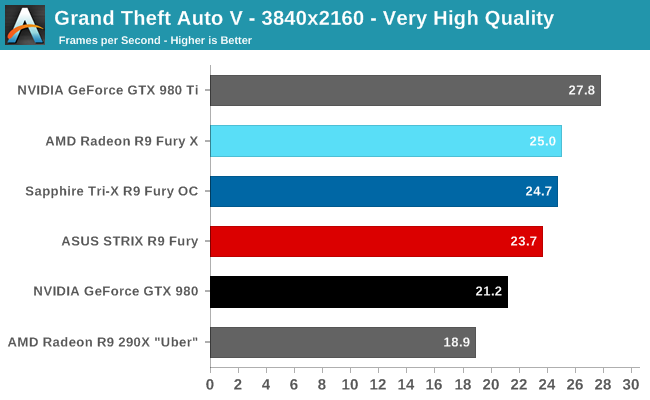

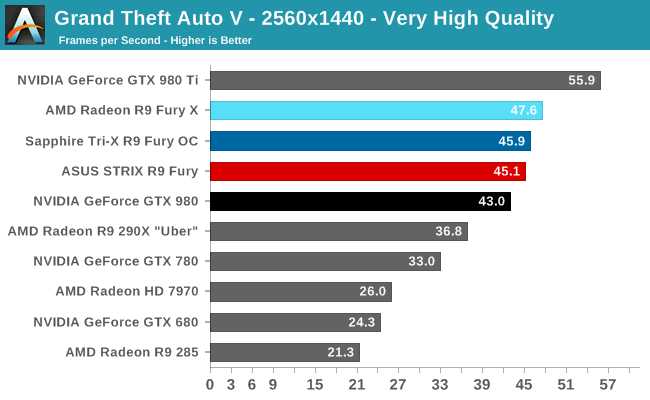

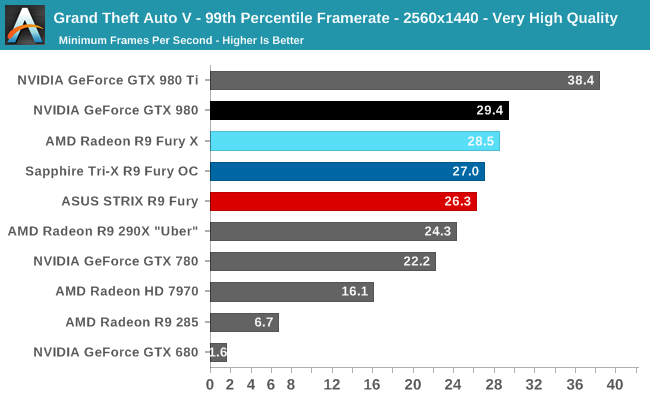

A quick note about settings: as Grand Theft Auto V doesn't have pre-defined settings tiers, I want to spell out what settings we're using. For "Very High" quality we have all of the primary graphics settings turned up to their highest setting, with the exception of grass, which is at its own very high setting. Meanwhile 4x MSAA is enabled for direct views and reflections. This setting also involves turning on some of the advanced rendering features - the game's long shadows, high resolution shadows, and high definition flight streaming - but not increasing the view distance any further.

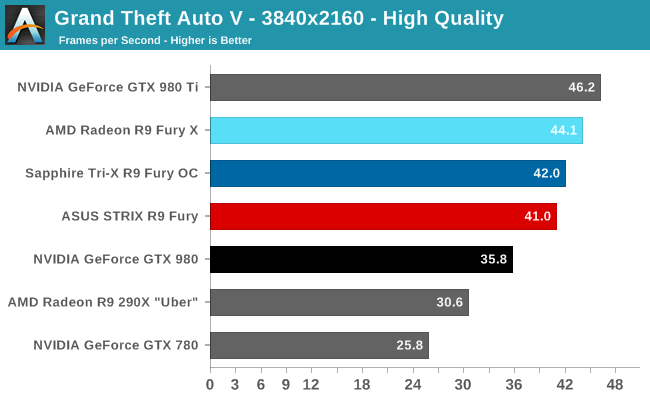

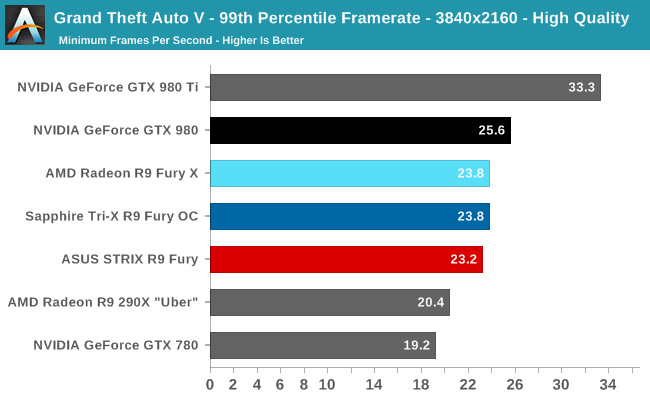

Otherwise for "High" quality we take the same basic settings but turn off all MSAA, which significantly reduces the GPU rendering and VRAM requirements.

Closing out our gaming benchmarks, the R9 Fury is once again in the lead, besting the GTX 980 by as much as 15%. However GTA V also serves as a reminder that the R9 Fury doesn’t have quite enough power to game at 4K without compromises. And if we do shift back to 1440p, a more comfortable resolution for this card, AMD’s lead is down to just 5%. At that point the R9 Fury isn’t quite covering its price advantage.

Meanwhile compared to the R9 Fury X, we close out roughly where we started. The R9 Fury trails the more powerful R9 Fury X by 5-7% depending on the resolution, a difference that has more to do with GPU clockspeeds than the cut-down CU count. Overall the gap between the two cards has been remarkably consistent and surprisingly narrow.

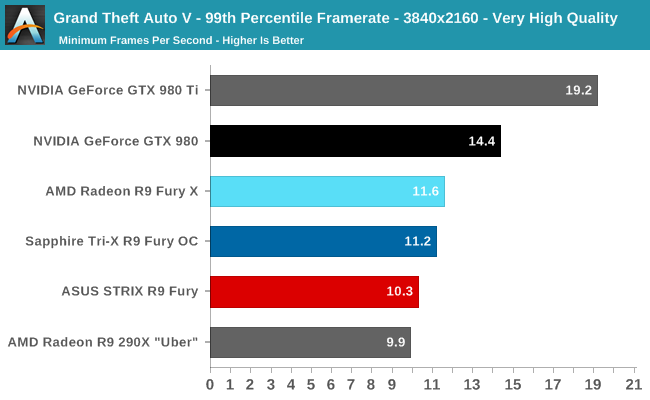

99th percentile framerates however are simply not in AMD’s favor here. Despite AMD’s driver optimizations and the fact that the GTX 980 only has 4GB of VRAM, the R9 Fury X could not pull ahead of the GTX 980, so the R9 Fury understandably fares worse. Even at 1440p the R9 Fury cards can’t quite muster 30fps, though in all fairness even the GTX 980 falls just short of this mark as well.
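For readers less familiar with the metric, the following is a minimal sketch of how a 99th percentile framerate can be derived from per-frame render times. It assumes a nearest-rank percentile and uses made-up frame times; the function names and sample data are illustrative only, and this is not necessarily the exact method behind the charts above.

```python
import math


def average_fps(frame_times_ms):
    """Average framerate: frames rendered divided by total elapsed seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_99_fps(frame_times_ms):
    """Framerate implied by the 99th percentile frame time (nearest-rank)."""
    ordered = sorted(frame_times_ms)
    # 1-based nearest rank: the frame time that 99% of frames meet or beat.
    rank = math.ceil(len(ordered) * 99 / 100)
    slow_frame_ms = ordered[rank - 1]
    return 1000.0 / slow_frame_ms


# Hypothetical frame times in milliseconds, NOT measurements from this review:
# 98 smooth frames at 20 ms plus 2 stutters at 40 ms.
frame_times_ms = [20.0] * 98 + [40.0] * 2

print(round(average_fps(frame_times_ms), 1))
print(round(percentile_99_fps(frame_times_ms), 1))
```

With this sample data the average works out to roughly 49fps while the 99th percentile figure is 25fps, which illustrates how a handful of slow frames can drag the percentile result well below the average.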

288 Comments

nightbringer57 - Friday, July 10, 2015 - link

Intel kept it in stock for a while but it didn't sell. So the management decided to get rid of it, gave it away to a few colleagues (Dell, HP, many OEMs used BTX for quite a while, both because it was a good user lock-down solution and because the drawbacks of BTX didn't matter in OEM computers, while the advantages were still there) and no one ever heard of it on the retail market again?

nightbringer57 - Friday, July 10, 2015 - link

Damn those not-editable comments... I forgot to add: with the switch from the NetBurst/Prescott architecture to Conroe (and its followers), CPU cooling became much less of a hassle for mainstream models so Intel did not have anything left to gain from the effort put into BTX.

xenol - Friday, July 10, 2015 - link

It survived in OEMs. I remember cracking open Dell computers in the latter half of the 2000s and finding out they were BTX.

yuhong - Friday, July 10, 2015 - link

I wonder if a BTX2 standard that fixes the problems of original BTX is a good idea.

onewingedangel - Friday, July 10, 2015 - link

With the introduction of HBM, perhaps it's time to move to socketed GPUs.

It seems ridiculous for the industry standard spec to devote so much space to the comparatively low-power CPU whilst the high-power GPU has to fit within the confines of (multiple) pci-e expansion slots.

Is it not time to move beyond the confines of ATX?

DanNeely - Friday, July 10, 2015 - link

Even with the smaller PCB footprint allowed by HBM; filling up the area currently taken by expansion cards would only give you room for a single GPU + support components in an mATX sized board (most of the space between the PCIe slots and edge of the mobo is used for other stuff that would need to be kept not replaced with GPU bits); and the tower cooler on top of it would be a major obstruction for any non-GPU PCIe cards you might want to put into the system.

soccerballtux - Friday, July 10, 2015 - link

man, the convenience of the socketed GPU is great, but just think of how much power we could have if it had its own dedicated card!

meacupla - Friday, July 10, 2015 - link

The clever design trend, or at least what I think is clever, is where the GPU+CPU heatsinks are connected together, so that, instead of many smaller heatsinks trying to cool one chip each, you can have one giant heatsink doing all the work, which can result in less space, as opposed to volume, being occupied by the heatsink.

You can see this sort of design on high end gaming laptops, Mac Pro, and custom water cooling builds. The only catch is, they're all expensive. Laptops and Mac Pro are, pretty much, completely proprietary, while custom water cooling requires time and effort.

If all ATX mobos and GPUs had their core and heatsink mounting holes in the exact same spot, it would be much easier to design a 'universal multi-core heatsink' that you could just attach to everything that needs it.

Peichen - Saturday, July 11, 2015 - link

That's quite a good idea. With heat-pipes, distance doesn't really matter so if there is a CPU heatsink that can extend 4x 8mm/10mm heatpipes over the videocard to cool the GPU, it would be far quieter than the 3x 90mm fan coolers on videocards now.

FlushedBubblyJock - Wednesday, July 15, 2015 - link

330 watts transferred to the low lying motherboard, with PINS attached to amd's core failure next...

Slap that monster heat onto the motherboard, then you can have a giant green plastic enclosure like Dell towers to try to move that heat outside the case... oh, plus a whole 'nother giant VRM setup on the motherboard... yeah they sure will be doing that soon ... just lay down that extra 50 bucks on every motherboard with some 6X VRM's just in case amd fanboy decides he wants to buy the megawatter amd rebranded chip...

Yep, NOT HAPPENING !