The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

by Ryan Smith on July 20, 2016 8:45 AM EST

Asynchronous Concurrent Compute: Pascal Gets More Flexible

Continuing our dive into the Pascal architecture, while Pascal did not make any fundamental execution changes to the CUDA cores, the same is not true for how work is allocated/scheduled on the CUDA cores. In fact, next to the addition of GDDR5X, I’d consider the changes to work scheduling to be the other great change to the overall Pascal core architecture. With Pascal, NVIDIA has significantly improved their ability to allocate and balance workloads, which in turn has ramifications in several different scenarios. But for the AnandTech audience the greatest significance is going to be in what it means for work concurrency when using asynchronous compute.

However, to understand just what NVIDIA has done here, we’re going to have to first take a step back and try to unravel the ball of yarn that is asynchronous compute, concurrency, and load balancing on prior NVIDIA architectures. From a technical perspective, NVIDIA has slowly evolved their work queue execution abilities over time. Consumer Kepler (GK10x) could only handle a single work queue, while Big Kepler (GK110/GK210) added HyperQ, which introduced a 32-queue setup, but one that could only be used with pure compute workloads. For HPC users this was a big deal, but for consumer use cases there was no support for mixing HyperQ compute queues with a graphics queue.

NVIDIA GPU Queue Engine Support

| GPU | Graphics/Mixed Mode | Pure Compute Mode | Scheduling |
| --- | --- | --- | --- |
| Pascal (1000 Series) | 1 Graphics + 31 Compute | 32 Compute | Dynamic! |
| Maxwell 2 (900 Series) | 1 Graphics + 31 Compute | 32 Compute | Static |
| Maxwell 1 (750 Series) | 1 Graphics | 32 Compute | Static |
| Kepler GK110 (780/Titan) | 1 Graphics | 32 Compute | Static |
| Kepler GK10x (600/700 Series) | 1 Graphics | 1 Compute | N/A |

Moving to Maxwell, Maxwell 1 was a repeat of Big Kepler, offering HyperQ without any way to mix it with graphics. It was only with Maxwell 2 that NVIDIA finally gained the ability to mix compute queues with graphics mode, allowing for the single graphics queue to be joined with up to 31 compute queues, for a total of 32 queues.

From a technical perspective, this is all that is needed to offer a basic level of asynchronous compute support: expose multiple queues so that asynchronous jobs can be submitted. Past that, it’s up to the driver/hardware to handle the situation as it sees fit; true async execution is not guaranteed. Frustratingly, then, NVIDIA never enabled true concurrency via asynchronous compute on Maxwell 2 GPUs, despite stating that it was technically possible. For a while NVIDIA didn’t go into great detail as to why they were holding off, but it was always implied that this was for performance reasons, and that using async compute on Maxwell 2 would more likely than not reduce performance rather than improve it.

There’s a maxim in the consumer electronics industry that if you want to know what’s wrong with the current product, wait for the next one to be released. And in the case of the Pascal launch, this definitely ended up being true. Now that Pascal is upon us and NVIDIA has fixed what ailed Maxwell 2, we finally know why NVIDIA held off on enabling concurrency with asynchronous compute on Maxwell 2 all this time.

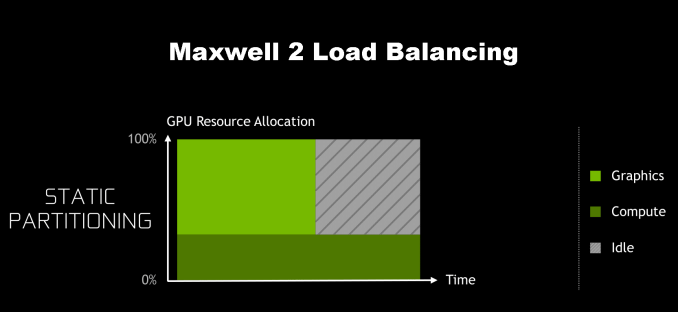

The issue, as it turns out, is that while Maxwell 2 supported a sufficient number of queues, how Maxwell 2 allocated work wasn’t very friendly for async concurrency. Under Maxwell 2 and earlier architectures, GPU resource allocation had to be decided ahead of execution. Maxwell 2 could vary how the SMs were partitioned between the graphics queue and the compute queues, but it couldn’t dynamically alter them on-the-fly. As a result, it was very easy on Maxwell 2 to hurt performance by partitioning poorly, leaving SM resources idle because they couldn’t be used by the other queues.

NVIDIA’s theoretical example involves the graphics queue running out of work before the compute queue, though in practice either one can happen, and either one would be similarly bad. There are a number of caveats in this example – among other things, this assumes that other new work can’t be started until both queues are finished – so please don’t consider this a catch-all for how concurrency under asynchronous compute works, but it does cover the most basic and common case, where a compute workload is closely tied to a graphics workload.
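To put rough numbers on NVIDIA’s example, here is a toy model of static partitioning. It is a sketch under stated assumptions, not a description of how Maxwell 2’s hardware or driver actually works: work is measured in hypothetical "SM-milliseconds", each queue’s work divides perfectly across the SMs assigned to it, and, per the caveat above, nothing new starts until both queues finish. The function name `static_partition` and all of the numbers are illustrative.

```python
from __future__ import annotations

# Toy model of static SM partitioning between a graphics queue and a compute
# queue (an illustrative assumption, not NVIDIA's actual scheduler).
# Work is measured in SM-milliseconds: W SM-ms spread over k SMs takes W / k ms.


def static_partition(num_sms: int, gfx_sms: int,
                     gfx_work: float, compute_work: float) -> tuple[float, float]:
    """Return (frame_time_ms, idle_sm_ms) for a fixed graphics/compute split."""
    compute_sms = num_sms - gfx_sms
    if gfx_sms <= 0 or compute_sms <= 0:
        raise ValueError("each queue needs at least one SM")
    gfx_time = gfx_work / gfx_sms
    compute_time = compute_work / compute_sms
    # Nothing new starts until both queues are done, so the slower
    # partition sets the frame time.
    frame_time = max(gfx_time, compute_time)
    # SM time that was reserved for a queue but never used by it.
    idle = num_sms * frame_time - (gfx_work + compute_work)
    return frame_time, idle


if __name__ == "__main__":
    # Hypothetical: 16 SMs, 120 SM-ms of graphics work, 40 SM-ms of compute work.
    for gfx_sms in (12, 8):
        frame, idle = static_partition(16, gfx_sms, 120.0, 40.0)
        print(f"{gfx_sms} graphics SMs: {frame:.1f} ms frame, {idle:.1f} SM-ms idle")
```

With a 12/4 split both queues finish together at 10 ms with nothing idle; guess 8/8 instead and the frame stretches to 15 ms while 80 SM-ms sit unused on the compute side, which is exactly the "partitioning poorly" failure described above.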

Meanwhile, not shown in these simple graphical examples is that for async’s concurrent execution abilities to be beneficial at all, there need to be idle-time bubbles to begin with. Throwing compute into the mix doesn’t accomplish anything if the graphics queue can sufficiently saturate the entire GPU. As a result, making async concurrency work on Maxwell 2 is a tall order at best, as you first need execution bubbles to fill, and even then you need to determine your partitions almost perfectly ahead of time.
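The bubble argument can also be sketched numerically. In this hypothetical model (again not any vendor’s real scheduler), the graphics queue runs for `gfx_time` ms while keeping only a fraction `occupancy` of the SMs busy; compute work is assumed to soak up the idle SM time concurrently with perfect efficiency, and whatever doesn’t fit runs afterward. The `async_speedup` name and the efficiency assumption are illustrative.

```python
def async_speedup(num_sms: int, gfx_time: float, occupancy: float,
                  compute_work: float) -> float:
    """Speedup of overlapped vs. back-to-back graphics + compute (toy model).

    occupancy: fraction of SMs the graphics queue keeps busy on its own (0..1).
    compute_work: compute work in SM-milliseconds.
    Assumes compute fills idle SM time with perfect efficiency.
    """
    if not 0.0 <= occupancy <= 1.0:
        raise ValueError("occupancy must be between 0 and 1")
    # Idle SM time left behind by the graphics queue: the "bubbles".
    bubble_capacity = (1.0 - occupancy) * num_sms * gfx_time
    # Without concurrency, compute runs on the whole GPU after graphics ends.
    serial = gfx_time + compute_work / num_sms
    # With concurrency, compute fills the bubbles first; any remainder runs after.
    leftover = max(0.0, compute_work - bubble_capacity)
    concurrent = gfx_time + leftover / num_sms
    return serial / concurrent


if __name__ == "__main__":
    for occ in (1.0, 0.9, 0.8):
        print(f"graphics occupancy {occ:.0%}: {async_speedup(16, 10.0, occ, 32.0):.2f}x")
```

At 100% graphics occupancy the result is exactly 1.00x: there is nothing to fill, so concurrency buys nothing. The gain only appears as occupancy drops below 100%.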

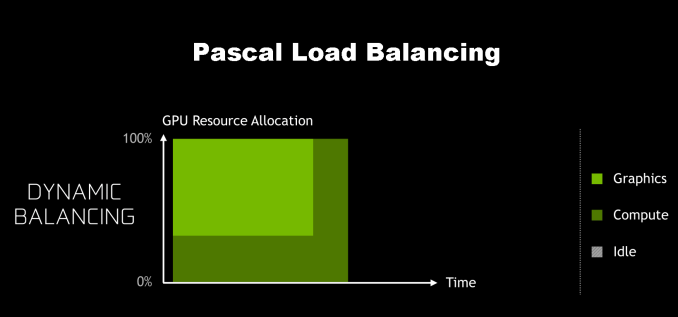

Getting back to Pascal then, Pascal finally fixes the resource allocation issue. For Pascal, NVIDIA has implemented a dynamic load balancing system to replace Maxwell 2’s static partitions. Now if the queues end up unbalanced and one of the queues runs out of work early, the driver and work schedulers can step in and fill up the remaining time with work from the other queues.
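A small discrete simulation shows why this matters. The sketch below is a toy list-scheduling model, not a description of Pascal’s actual hardware or driver: each SM pulls fixed-length tasks from a home queue. In static mode an SM whose queue has drained simply sits idle for the rest of the frame; in dynamic mode it pulls work from the other queue instead. The function `makespan` and the task sizes are hypothetical.

```python
import heapq
from collections import deque


def makespan(num_sms: int, gfx_sms: int, gfx_tasks: list[float],
             compute_tasks: list[float], dynamic: bool) -> float:
    """Time until both queues drain (toy model, not NVIDIA's real scheduler).

    SMs [0, gfx_sms) have the graphics queue as their home queue; the rest
    have the compute queue. Statically, an SM only ever runs its home queue.
    Dynamically, an SM whose home queue is empty takes work from the other one.
    """
    queues = {"gfx": deque(gfx_tasks), "compute": deque(compute_tasks)}
    # Heap of (time the SM becomes free, SM index, home queue name).
    sms = [(0.0, i, "gfx" if i < gfx_sms else "compute") for i in range(num_sms)]
    heapq.heapify(sms)
    finish = 0.0
    while sms:
        now, sm, home = heapq.heappop(sms)
        other = "compute" if home == "gfx" else "gfx"
        if queues[home]:
            queue = queues[home]
        elif dynamic and queues[other]:
            queue = queues[other]  # rebalance instead of idling
        else:
            continue  # nothing this SM may run: it idles until the frame ends
        done = now + queue.popleft()
        finish = max(finish, done)
        heapq.heappush(sms, (done, sm, home))
    return finish


if __name__ == "__main__":
    gfx, compute = [1.0] * 8, [1.0] * 2  # hypothetical 1 ms tasks
    print("static: ", makespan(4, 2, gfx, compute, dynamic=False))
    print("dynamic:", makespan(4, 2, gfx, compute, dynamic=True))
```

With four SMs split 2/2, the static version finishes at 4.0 ms, with the two compute SMs idle from 1 ms onward; letting those SMs pick up graphics tasks brings the frame down to 3.0 ms.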

In concept it sounds simple, and in practice it should make a large difference to how beneficial async compute can be on NVIDIA’s architectures. Adding more work to create concurrency to fill execution bubbles only works if the queue scheduling itself doesn’t create bubbles, and this was Maxwell 2’s Achilles’ heel that Pascal has addressed.

At the same time, however, I feel it’s important to note that the scheduling change alone won’t (and can’t) guarantee that Pascal will see significant gains from async compute across the board. Async compute itself is a catch-all term – there are lots of things you can do with asynchronous work submission/execution – so the use of async doesn’t mean that a game is making significant use of concurrency. Furthermore, the concurrency is still based on filling execution bubbles, which means that there need to be bubbles to fill in the first place. In other words, the greatest gains from async will come from scenarios where, for whatever reason, the graphics queue and its synchronous shaders can’t completely saturate the GPU on its own.

Right now I think it’s going to prove significant that while NVIDIA introduced dynamic scheduling in Pascal, they also didn’t make the architecture significantly wider than Maxwell 2. As we discussed earlier in how Pascal has been optimized, it’s a slightly wider but mostly higher clocked successor to Maxwell 2. As a result there’s not too much additional parallelism needed to fill out GP104; relative to GM204, you only need 25% more threads, a relatively small jump for a generation. This means that while NVIDIA has made Pascal far more accommodating to asynchronous concurrent execution, there’s still no guarantee that any specific game will find bubbles to fill. Thus far there’s little evidence to indicate that NVIDIA’s been struggling to fill out their GPUs with Maxwell 2, and with Pascal only being a bit wider, it may not behave much differently in that regard.
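The 25% figure falls straight out of the chip configurations. The arithmetic below uses full GM204 (GTX 980, 16 SMs) and full GP104 (GTX 1080, 20 SMs); both have 128 CUDA cores and up to 2048 resident threads per SM. Treating resident threads as "the parallelism needed to fill the chip" is a simplifying assumption.

```python
# Full GM204 (GTX 980) vs. full GP104 (GTX 1080).
SM_COUNTS = {"GM204": 16, "GP104": 20}
CUDA_CORES_PER_SM = 128    # same on Maxwell 2 and GP104
MAX_THREADS_PER_SM = 2048  # max resident threads per SM on both chips

gm_cores = SM_COUNTS["GM204"] * CUDA_CORES_PER_SM
gp_cores = SM_COUNTS["GP104"] * CUDA_CORES_PER_SM
gm_threads = SM_COUNTS["GM204"] * MAX_THREADS_PER_SM
gp_threads = SM_COUNTS["GP104"] * MAX_THREADS_PER_SM

print(f"CUDA cores: {gm_cores} -> {gp_cores} (+{gp_cores / gm_cores - 1:.0%})")
print(f"Resident threads: {gm_threads} -> {gp_threads} (+{gp_threads / gm_threads - 1:.0%})")
```

Both measures give the same 1.25x ratio, since the two per-SM figures used here are identical on the two chips and only the SM count grew.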

Meanwhile, because this is a question that I’m frequently asked, I will make a very high level comparison to AMD. Ever since the transition to unified shader architectures, AMD has always favored higher ALU counts; Fiji had more ALUs than GM200, mainstream Polaris 10 has nearly as many ALUs as high-end GP104, etc. All other things held equal, this means there are more chances for execution bubbles in AMD’s architectures, and consequently more opportunities to exploit concurrency via async compute. We’re still very early into the Pascal era – the first game supporting async on Pascal, Rise of the Tomb Raider, was just patched in last week – but on the whole I don’t expect NVIDIA to benefit from async by as much as we’ve seen AMD benefit. At least not with well-written code.
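For a rough sense of the ALU gap, the snippet below compares full-chip shader counts as shipped: Fiji (Radeon R9 Fury X) at 4096 stream processors versus GM200 (GeForce GTX Titan X) at 3072 CUDA cores, and Polaris 10 (Radeon RX 480) at 2304 versus GP104 (GeForce GTX 1080) at 2560. Raw ALU count is only a proxy, per the "all other things held equal" caveat above; it says nothing about clock speeds or how well each design keeps its ALUs fed.

```python
# Full-chip shader ALU counts as shipped.
ALUS = {
    "Fiji": 4096,        # Radeon R9 Fury X
    "GM200": 3072,       # GeForce GTX Titan X (Maxwell)
    "Polaris 10": 2304,  # Radeon RX 480
    "GP104": 2560,       # GeForce GTX 1080
}

for amd, nvidia in (("Fiji", "GM200"), ("Polaris 10", "GP104")):
    ratio = ALUS[amd] / ALUS[nvidia]
    print(f"{amd} vs {nvidia}: {ratio:.2f}x the ALUs")
```

Fiji comes out at 1.33x GM200, while mainstream Polaris 10 lands at 0.90x of high-end GP104, i.e. "nearly as many" ALUs.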

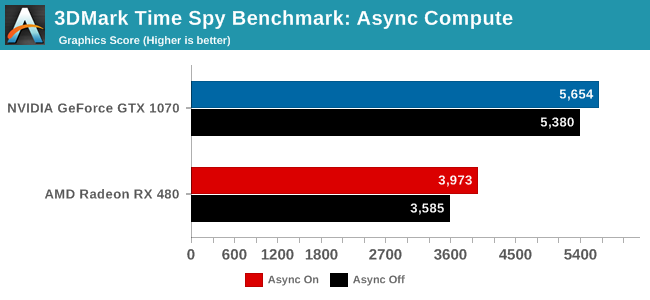

Otherwise, for the time being, the one good benchmark we have here is 3DMark Time Spy, which was released last week. The ground-up DirectX 12 benchmark attempts to heavily overlap rendering passes to fill those aforementioned execution bubbles.

Taking a quick run of the benchmark, on a relative basis we see a 10.8% gain from using async compute plus concurrency for the RX 480, and a 5.4% gain for the GTX 1070. This is but one benchmark (and technically not even a game at that), but for what it’s worth this is the kind of trend I’m expecting to see in future games as they get better about exploiting workload concurrency via async compute.
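For clarity, a "relative gain" like the ones above is the async-on score divided by the async-off score, minus one. The scores in the example below are hypothetical placeholders chosen to reproduce a 10.8% gain, not actual Time Spy results, and `relative_gain` is an illustrative helper name.

```python
def relative_gain(score_async_on: float, score_async_off: float) -> float:
    """Fractional improvement from enabling async compute (0.108 means +10.8%)."""
    if score_async_off <= 0:
        raise ValueError("baseline score must be positive")
    return score_async_on / score_async_off - 1.0


if __name__ == "__main__":
    # Hypothetical scores, not measured results.
    print(f"{relative_gain(4432, 4000):.1%}")
```

Because the gain is relative, it can be compared across cards with very different absolute scores, which is why the RX 480 and GTX 1070 figures are quoted this way.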

Finally, getting back to the subject of dynamic scheduling, I’ve spent some time mulling over what’s probably the obvious question: if dynamic scheduling is so great, why didn’t NVIDIA do this sooner? It’s not a question I have an answer to, but I strongly suspect it’s another one of those tradeoffs that’s rooted in balancing costs and benefits. Dynamic scheduling requires greater management of hazards that simply weren’t an issue with static scheduling, as now you need to handle everything involved with suddenly switching an SM to a different queue. Meanwhile NVIDIA more than likely paid a die space penalty for implementing dynamic scheduling. GPUs continually sit on the fence between being an ultra-fast statically scheduled array of ALUs and an ultra-flexible, somewhat smaller array of ALUs, and GPU vendors get to sit in the middle trying to figure out which side to lean towards in order to deliver the best performance for workloads that are 2-5 years down the line. It is, if you’ll pardon the pun, a careful balancing act for everyone involved.

200 Comments


eddman - Wednesday, July 20, 2016

That puts a lid on the comments that Pascal is basically a Maxwell die-shrink. It's obviously based on Maxwell but the addition of dynamic load balancing and preemption clearly elevates it to a higher level.

Still, seeing that using async with Pascal doesn't seem to be as effective as GCN, the question is how much of a role will it play in DX12 games in the next 2 years. Obviously async isn't be-all and end-all when it comes to performance but can Pascal keep up as a whole going forward or not.

I suppose we won't know until more DX12 games are out that are also optimized properly for Pascal.

javishd - Wednesday, July 20, 2016

Overwatch is extremely popular right now, it deserves to be a staple in gaming benchmarks.

jardows2 - Wednesday, July 20, 2016

Except that it really is designed as an e-sport style game, and can run very well with low-end hardware, so isn't really needed for reviewing flagship cards. In other words, if your primary desire is to find a card that will run Overwatch well, you won't be looking at spending $200-$700 for the new video cards coming out.

Ryan Smith - Wednesday, July 20, 2016

And this is why I really wish Overwatch was more demanding on GPUs. I'd love to use it and DOTA 2, but 100fps at 4K doesn't tell us much of use about the architecture of these high-end cards.

Scali - Wednesday, July 20, 2016

Thanks for the excellent write-up, Ryan!

Especially the parts on asynchronous compute and pre-emption were very thorough.

A lot of nonsense was being spread about nVidia's alleged inability to do async compute in DX12, especially after Time Spy was released, and actually showed gains from using multiple queues.

Your article answers all the criticism, and proves the nay-sayers wrong.

Some of them went so far in their claims that they said nVidia could not even do graphics and compute at the same time. Even Maxwell v2 could do that.

I would say you have written the definitive article on this matter.

The_Assimilator - Wednesday, July 20, 2016

Sadly that won't stop the clueless AMD fanboys from continuing to harp on that NVIDIA "doesn't have async compute" or that it "doesn't work". You've gotta feel for them though, NVIDIA's poor performance in a single tech demo... written with assistance from AMD... is really all the red camp has to go on. Because they sure as hell can't compete in terms of performance, or power usage, or cooler design, or adhering to electrical specifications...

tipoo - Wednesday, July 20, 2016

Pretty sure critique was of Maxwell. Pascal's async was widely advertised. It's them saying "don't worry, Maxwell can do it" to questions about it not having it, and then when Pascal is released, saying "oh yeah, performance would have tanked with it on Maxwell", that bugs people as it should.

Scali - Wednesday, July 20, 2016

Nope, a lot of critique on Time Spy was specifically *because* Pascal got gains from the async render path. People said nVidia couldn't do it, so FutureMark must be cheating/bribed.

darkchazz - Thursday, July 21, 2016

It won't matter much though because they won't read anything in this article or Futuremark's statement on Async use in Time Spy.

And they will keep linking some forum posts that claim nvidia does not support Async Compute.

Nothing will change their minds that it is a rigged benchmark and the developers got bribed by nvidia.

Scali - Friday, July 22, 2016

Yea, not even this official AMD post will: http://radeon.com/radeon-wins-3dmark-dx12/