The Intel 6th Gen Skylake Review: Core i7-6700K and i5-6600K Tested

by Ian Cutress on August 5, 2015 8:00 AM EST

The Skylake CPU Architecture

As with any new Intel architecture, the devil is in the details. Previously at AnandTech we have been able to provide deep dives into what exactly is going on in the belly of the beast, although the launch of Skylake has posed a fair share of problems.

Normally we rely on a certain amount of openness from the processor/SoC manufacturer in providing low-level details that we can verify and/or explain. In the past, this information has typically been provided ahead of launch through several meetings and consultations with the engineers. Some things we can probe ourselves, but others remain a black box. The black-box nature of some elements, such as Qualcomm’s Adreno graphics, means that they will remain a mystery until Pandora’s box is opened.

In the lead-up to the launch of Intel’s Skylake platform, architecture details have been both thin on the ground and thin in the air, even for fundamental details such as the EU counts of the integrated graphics or the reasons behind the change in processor naming scheme. In almost all cases we’ve been told to wait until Intel’s Developer Forum (IDF) in mid-August, mainly because today's launch is not the full-stack Skylake launch, which will take place later in the quarter. Both Ryan and I will be at IDF taking fastidious notes and asking questions for everyone, but for now a good portion of our analysis comes from sources other than Intel. While we trust that information, we can't fully verify it as we normally would.

As a result, the details on the following few pages have been formed through investigation, discussion and collaboration outside the normal channels, and may be updated as more information is discovered or confirmed. Some of this information is mirrored in our other coverage in order to offer a complete picture in each article as well. After IDF we plan to put together a more detailed architecture piece as a fundamental block in analyzing our end results.

The CPU

As bad as it sounds, the best image of the underlying processor architecture is the block diagram:

From a CPU connectivity standpoint, we discussed the DDR3L/DDR4 dual memory controller design on the previous page, so we won’t go over it again here. On the PCI-Express graphics side, the Skylake processors have sixteen PCIe 3.0 lanes for devices attached directly to the processor, as with Intel's previous-generation processors. With basic motherboard design these can be split as a single PCIe 3.0 x16, as x8/x8, or as x8/x4/x4. (Note that this differs from early reports of Skylake having 20 PCIe 3.0 lanes for GPUs. It does not.)

With this, SLI will work up to x8/x8. If a motherboard supports x8/x4/x4 and a PCIe card is placed in the bottom slot, SLI will not work, because only one GPU will have eight lanes: NVIDIA requires a minimum of PCIe x8 per card to enable SLI. CrossFire has no such limitation, which makes the possible configurations interesting. Below we discuss how the chipset has 20 (!) PCIe 3.0 lanes, arranged as five sets of four, and these could be used for graphics cards as well. That means a motherboard could offer x8/x8 from the CPU plus a PCIe 3.0 x4 slot from the chipset and, with all three slots populated, support either two-way SLI (on the two x8 cards) or three-way CrossFire.
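The lane rules above can be written down as a small check. This is a minimal sketch that assumes only the rules stated in this section: NVIDIA SLI needs at least a x8 link per card, CrossFire has no such minimum, and the CPU's sixteen lanes split as x16, x8/x8 or x8/x4/x4. The function names and the split table are hypothetical, written for illustration, and are not any vendor's tool.

```python
# Hypothetical helpers illustrating the multi-GPU lane rules described above.
# Assumptions (from the text): SLI needs >= x8 per card; CrossFire has no minimum.

# CPU-side splits of the sixteen PCIe 3.0 lanes named in the text.
CPU_LANE_SPLITS = {
    "x16": [16],
    "x8/x8": [8, 8],
    "x8/x4/x4": [8, 4, 4],
}

SLI_MIN_LANES = 8  # NVIDIA's per-card minimum, per the text


def max_sli_gpus(slot_widths):
    """Number of cards that can join SLI, or 0 if SLI cannot be enabled.

    Only cards on links of at least x8 are eligible, and at least two
    eligible cards are needed.
    """
    eligible = [w for w in slot_widths if w >= SLI_MIN_LANES]
    return len(eligible) if len(eligible) >= 2 else 0


def max_crossfire_gpus(slot_widths):
    """Number of cards that can join CrossFire, or 0 for a single card."""
    return len(slot_widths) if len(slot_widths) >= 2 else 0


if __name__ == "__main__":
    # Every CPU split uses exactly the sixteen available lanes.
    for name, widths in CPU_LANE_SPLITS.items():
        assert sum(widths) == 16, name

    # x8/x8 from the CPU plus one x4 slot from the chipset, all populated.
    populated = CPU_LANE_SPLITS["x8/x8"] + [4]
    print("SLI cards:", max_sli_gpus(populated))
    print("CrossFire cards:", max_crossfire_gpus(populated))
```

With x8/x8 plus the chipset's x4 slot, this reports two cards for SLI and three for CrossFire, matching the outcome described above. With cards in the first two slots of an x8/x4/x4 board, `max_sli_gpus([8, 4])` returns 0, because only one card meets the x8 minimum.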

DMI 3.0

The processor is connected to the chipset by the four-lane DMI 3.0 interface. DMI 3.0 is an upgrade over the previous generation's DMI 2.0: it raises the speed from 5.0 GT/s (2GB/sec) to 8.0 GT/s (~3.93GB/sec), essentially moving DMI from PCIe 2.0 to PCIe 3.0 signaling. However, it requires the motherboard traces between the CPU and chipset to be shorter (7 inches rather than 8 inches) to maintain signal speed and integrity. The extra bandwidth also enables one of the biggest upgrades to the system, chipset connectivity, as shown below in the HSIO section.
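The quoted bandwidth figures follow from the transfer rate, the lane count and the line encoding. The sketch below assumes DMI 2.0 and 3.0 use the same encodings as PCIe 2.0 (8b/10b) and PCIe 3.0 (128b/130b), in line with the PCIe 2 to PCIe 3 comparison above. It ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function name is hypothetical.

```python
def link_bandwidth_gb_s(gt_per_s, lanes, payload_bits, line_bits):
    """Raw one-direction bandwidth in GB/s (10^9 bytes/s) after line encoding.

    gt_per_s:     transfers per second per lane, in GT/s (one bit per transfer)
    lanes:        number of lanes in the link
    payload_bits: data bits per encoded block (8 for 8b/10b, 128 for 128b/130b)
    line_bits:    bits on the wire per encoded block (10 or 130)
    """
    bits_per_s = gt_per_s * 1e9 * lanes * payload_bits / line_bits
    return bits_per_s / 8 / 1e9


dmi2 = link_bandwidth_gb_s(5.0, 4, 8, 10)     # 5.0 GT/s x4, 8b/10b
dmi3 = link_bandwidth_gb_s(8.0, 4, 128, 130)  # 8.0 GT/s x4, 128b/130b

print(f"DMI 2.0: {dmi2:.2f} GB/s")
print(f"DMI 3.0: {dmi3:.2f} GB/s")
```

This gives 2.00 GB/s and about 3.94 GB/s, which matches the ~3.93GB/sec quoted above to within rounding. The encoding change matters as much as the clock increase. 8b/10b spends 20% of the wire bits on encoding, while 128b/130b spends about 1.5%. As a result, a 1.6x increase in transfer rate yields nearly double the usable bandwidth.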

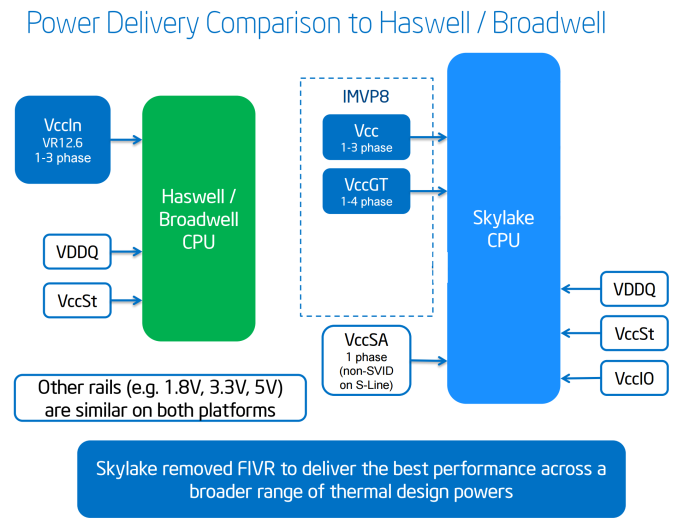

CPU Power Arrangements

Moving on to power arrangements, with Skylake the situation changes compared to Haswell. Prior to Haswell, voltage regulation was performed on the motherboard, which then supplied the required voltages to the processor. This was deemed inefficient for power consumption, so for the Haswell/Broadwell processors Intel created a fully integrated voltage regulator (FIVR) to reduce both motherboard cost and power consumption. This had an unintended side-effect: while it was more efficient (good for mobile platforms), it also acted as a source of heat inside the CPU at high frequencies. As a result, overclocking was limited by temperatures, and variation in FIVR quality led to a large variation in results. For Skylake on the desktop, voltage regulation moves back into the hands of the motherboard manufacturers. This should allow for cooler processors, depending on how the silicon behaves, but it will result in slightly more expensive motherboards.
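To see why an on-die regulator adds heat to the CPU itself, consider where the regulator's conversion losses end up. This is a back-of-envelope sketch with made-up numbers: the 90 W load and 90% efficiency are hypothetical assumptions, not measured FIVR or motherboard VRM figures, and the functions are illustrative only.

```python
def regulator_loss_w(load_w, efficiency):
    """Power lost as heat in a voltage regulator delivering load_w watts."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return load_w / efficiency - load_w


def package_heat_w(core_load_w, vr_efficiency, vr_on_die):
    """Heat the CPU package must shed.

    With an integrated regulator (as on Haswell/Broadwell) its losses are
    dissipated inside the package; with a motherboard regulator (as on
    desktop Skylake) they are dissipated on the board instead.
    """
    loss = regulator_loss_w(core_load_w, vr_efficiency)
    return core_load_w + loss if vr_on_die else core_load_w


# Hypothetical example values, chosen only for illustration.
CORE_LOAD_W = 90.0
EFFICIENCY = 0.90

print(f"Regulator loss: {regulator_loss_w(CORE_LOAD_W, EFFICIENCY):.1f} W")
print(f"Package heat, on-die VR:      {package_heat_w(CORE_LOAD_W, EFFICIENCY, True):.1f} W")
print(f"Package heat, motherboard VR: {package_heat_w(CORE_LOAD_W, EFFICIENCY, False):.1f} W")
```

The sketch deliberately uses the same efficiency in both cases. It says nothing about which regulator is more efficient. It only shows where the loss is dissipated, which is the overclocking concern described above.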

One sign of this is that some motherboards will go back to having a large number of multiplexed power phases. Some manufacturers will use this as a differentiating point, although the usefulness of such designs is sometimes questionable.

477 Comments


nsteussy - Tuesday, August 11, 2015

Ian, under the Linux benchmarks you have the graphic for the NAMD Mol Dynamics twice but none for the NPB fluid dynamics. That said, that is quite the nice bump for NAMD (~24% from a 4770 to a 6700K). Very tempting.

Visual - Wednesday, August 12, 2015

I kinda don't like how you keep repeating the generic benchmark descriptions before each graph. I'd prefer if they were hidden by default, visible on hover or toggled by clicking an info button or similar, or at the very least formatted a bit differently from the actual article text.

I'd also like it if you had some comments on the actual results, at least where there are some peculiarities in them.

Case in point: Why is the 5775C IGP so much better in some games?

mapesdhs - Wednesday, August 12, 2015

Agree re comments on results, eg. why does the 2600K dip so badly for Shadow of Mordor @ 4K with the GTX 770? It doesn't happen with the 980, but if the dip was a VRAM issue @ 4K then the 3770K shouldn't be so close to the other CPUs. Weird...

wyssin - Wednesday, August 12, 2015

Has anyone published a review comparing i7-6700k with other cpus all overclocked to, say, 4.5 GHz? For those who typically run an overclocked system, it's not an apples-to-apples comparison to put the new entry up against the older all at stock settings.

So to make the best-informed decision, it would be very useful to be able to see head-to-head trials at both (1) stock settings and (2) overclocked to a speed they can all reasonably manage (apparently around 4.4 or 4.5 GHz).

I have the same problems with the Guru3D review and the Gamestar.de review that were mentioned in earlier comments.

Oxford Guy - Thursday, August 13, 2015

The key is to pick a speed that the worst overclocking examples would be able to get to with reasonable voltage. That takes the luck of the draw out of the scenario.

beatsbyden - Thursday, August 13, 2015

Not much improvement. Only worth the money if you're coming from an i5.

Darkvengence - Thursday, August 13, 2015

This lack of CPU power needed in gaming is only temporary. Once you have photorealistic graphics in 4K, you're going to need crazy powerful GPUs, which need feeding by beastly CPUs, and our current CPUs will seem like dinosaurs in comparison. That is of course a fair few years away, but one day it will happen. I'm glad current CPUs are not being taxed by today's games, and will be even less so with DX12. That gives my first-gen MSI Nightblade with a 4790K more life, as you can't change the motherboard; it's all custom front-panel connectors and stuff. I used to have an i7 920, and I have to say that is still a good CPU, especially for single-GPU systems. I really like Sandy Bridge though; very impressive for its age. But older CPUs lose out mainly by being tied to older chipsets, so you lose newer connectors and bus speeds for hardware, though.

gasparmx - Thursday, November 19, 2015

I think you're kinda wrong. The point of DX12 is to depend less on the CPU; NVIDIA says that in the future you probably won't need a beast CPU to play 4K games.

djscrew - Friday, August 14, 2015

I'm so disappointed in SB/DDR4. After all this wait, the IPC gains with discrete graphics are negative? WTF Intel. I guess my Nehalem system will survive another generation, or maybe three? No compelling reason to upgrade. It's such a shame, because I was really looking forward to building a $3k rig. I think I'll shop for a nice 4K panel instead.

Ninjawithagun - Friday, August 14, 2015

And just when I was about to purchase the 6600K and a Z170 mini-ITX motherboard as an upgrade to my 4690K and Z97i Plus motherboard...man, am I glad I ran across this article. Saved myself about $600 for a useless upgrade!