The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Introduction to FreeSync and Adaptive Sync

The first time anyone talked about adaptive refresh rates for monitors – specifically applying the technique to gaming – was when NVIDIA demoed G-SYNC back in October 2013. The idea seemed so logical that I had to wonder why no one had tried to do it before. Certainly there are hurdles to overcome, e.g. what to do when the frame rate is too low, or too high; getting a panel that can handle adaptive refresh rates; supporting the feature in the graphics drivers. Still, it was an idea that made a lot of sense.

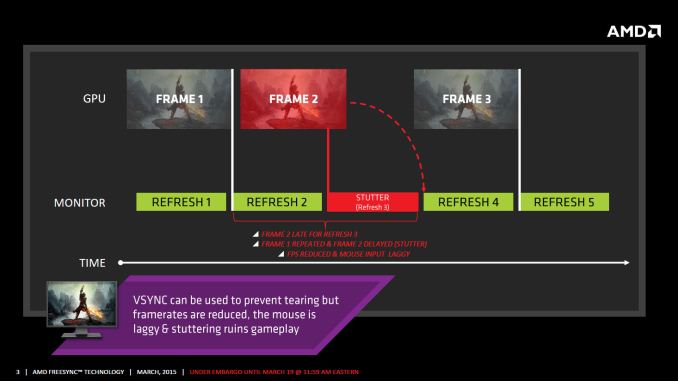

The impetus behind adaptive refresh is to overcome visual artifacts and stutter caused by the normal way of updating the screen. Briefly, the display is updated with new content from the graphics card at set intervals, typically 60 times per second. While that’s fine for normal applications, when it comes to games there are often cases where a new frame isn’t ready in time, causing a stall or stutter in rendering. Alternatively, the screen can be updated as soon as a new frame is ready, but that often results in tearing – where the top part of the screen shows the previous frame and the bottom part shows the next frame (or frames in some cases).
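To make the trade-off concrete, here is a minimal Python sketch (an illustration only, not how any driver or display actually schedules frames) that compares when frames reach the screen on a fixed 60Hz display with VSync against a display that refreshes as soon as a frame is ready. The function names, the 144Hz panel ceiling, and the steady 20ms render time are all assumptions made for the example, and the model ignores details such as the GPU stalling while it waits for the next refresh.

```python
import math


def vsync_present_times(render_ms, refresh_hz=60.0):
    """Fixed refresh with VSync: a finished frame waits for the next refresh tick.

    Simplified model: frames render back to back and the GPU never stalls.
    """
    interval = 1000.0 / refresh_hz
    done = 0.0
    presents = []
    for r in render_ms:
        done += r
        # Round up to the next refresh boundary; the small epsilon keeps a frame
        # that finishes exactly on a boundary from slipping to the following one.
        ticks = math.ceil(done / interval - 1e-9)
        presents.append(ticks * interval)
    return presents


def adaptive_present_times(render_ms, max_hz=144.0):
    """Adaptive refresh: show each frame when it is ready, limited only by the
    panel's fastest refresh interval (hypothetical 144Hz ceiling)."""
    min_interval = 1000.0 / max_hz
    done = 0.0
    last = -min_interval
    presents = []
    for r in render_ms:
        done += r
        last = max(done, last + min_interval)
        presents.append(last)
    return presents


def gaps(times):
    """Time between consecutive frames appearing on screen, in milliseconds."""
    return [b - a for a, b in zip(times, times[1:])]


if __name__ == "__main__":
    frames = [20.0] * 8  # a steady 50 fps, slightly slower than 60Hz
    print("fixed 60Hz gaps:", [round(g, 1) for g in gaps(vsync_present_times(frames))])
    print("adaptive gaps:  ", [round(g, 1) for g in gaps(adaptive_present_times(frames))])
```

Under these assumptions a game running at a perfectly steady 50 fps still shows uneven pacing on the fixed 60Hz display: most frames appear 16.7ms apart, but every so often one is held for 33.3ms, which is the stutter described above. With adaptive refresh the on-screen gaps simply match the 20ms render time.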

Neither input lag/stutter nor image tearing is desirable, so NVIDIA set about creating a solution: G-SYNC. Perhaps the most difficult aspect for NVIDIA wasn’t creating the core technology but rather getting display partners to create and sell what would ultimately be a niche product – G-SYNC requires an NVIDIA GPU, so that rules out a large chunk of the market. Not surprisingly, the result was that G-SYNC took a bit of time to reach the market as a mature solution, with the first displays that supported the feature requiring modification by the end user.

Over the past year we’ve seen more G-SYNC displays ship that no longer require user modification, which is great, but pricing of the displays so far has been quite high. At present the least expensive G-SYNC displays are 1080p144 models that start at $450; similar displays without G-SYNC cost about $200 less. Higher spec displays like the 1440p144 ASUS ROG Swift cost $759 compared to other WQHD displays (albeit not 120/144Hz capable) that start at less than $400. And finally, 4Kp60 displays without G-SYNC cost $400-$500 whereas the 4Kp60 Acer XB280HK will set you back $750.
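For a rough sense of scale, the price gaps above can be worked out in a few lines of Python. This is only back-of-the-envelope arithmetic on the figures quoted in the previous paragraph; the `premium` helper is a name made up for this example, and the non-G-SYNC baselines are the approximate values from the text (for instance, $250 stands in for "about $200 less" than $450, and $400 is the upper bound for the WQHD comparison, so the real gap there is at least what is shown).

```python
def premium(with_gsync, without_gsync):
    """Return (extra dollars, extra percent) of a G-SYNC display over a baseline."""
    extra = with_gsync - without_gsync
    return extra, 100.0 * extra / without_gsync


# (label, G-SYNC price, approximate non-G-SYNC baseline) taken from the text above
comparisons = [
    ("1080p144", 450, 250),                        # "about $200 less"
    ("1440p144 ROG Swift vs. WQHD", 759, 400),     # baseline is "less than $400"
    ("4Kp60 XB280HK vs. $500 4K", 750, 500),       # top of the $400-$500 range
    ("4Kp60 XB280HK vs. $400 4K", 750, 400),       # bottom of the range
]

if __name__ == "__main__":
    for label, gsync_price, baseline in comparisons:
        extra, pct = premium(gsync_price, baseline)
        print(f"{label}: +${extra} ({pct:.0f}% over baseline)")
```

Keep in mind that the ROG Swift comparison is not like for like, since the cheaper WQHD displays are not 120/144Hz capable.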

When AMD demonstrated their alternative adaptive refresh rate technology and cleverly called it FreeSync, it was a clear jab at the added cost of G-SYNC displays. As with G-SYNC, it has taken some time from the initial announcement to actual shipping hardware, but AMD has worked with the VESA group to implement FreeSync as an open standard that’s now part of DisplayPort 1.2a, and they aren’t getting any royalties from the technology. That’s the “Free” part of FreeSync, and while it doesn’t necessarily guarantee that FreeSync enabled displays will cost the same as non-FreeSync displays, the initial pricing looks quite promising.

There may be some additional costs associated with making a FreeSync display, though mostly these costs come in the way of using higher quality components. The major scaler companies – Realtek, Novatek, and MStar – have all built FreeSync (DisplayPort Adaptive Sync) into their latest products, and since most displays require a scaler anyway there’s no significant price increase. But if you compare a FreeSync 1440p144 display to a “normal” 1440p60 display of similar quality, the support for higher refresh rates inherently increases the price. So let’s look at what’s officially announced right now before we continue.

350 Comments

chizow - Monday, March 23, 2015 - link

Yes..the scaler that AMD worked with scaler makers to design, using the Spec that AMD designed and pushed through VESA lol. Again it is hilarious that AMD and their fanboys are now blaming the scaler and monitor makers already. This really bodes well for future development of FreeSync panels. /sarcasm

Viva La Open Standards!!!! /shoots_guns_in_air_in_fanboy_fiesta

So the AMD fanboys like you can't keep shoving your head in the sand and blaming Nvidia for your problems. Time to start holding AMD accountable, something AMD fanboys should've done years ago.

https://www.youtube.com/watch?v=-ylLnT2yKyA

ddarko - Thursday, March 19, 2015 - link

Is this where you have pulled the fanciful notion that Nvidia can't support FreeSync?

http://techreport.com/news/25867/amd-could-counter...

That the display controller in Nvidia video cards doesn't support variable refresh intervals? First of all, that's an AMD executive's speculation on why Nvidia has to use an external module. It's never been confirmed to be true by Nvidia. If this is the source of your claim, then it's laughable - you're taking as gospel what an AMD exec says he thinks are the hardware capabilities of Nvidia cards. Whatever.

Second, even if it was true for argument's sake, that still means Freesync is open while Gsync is closed because Nvidia can add display controller hardware support without anyone's approval or licensing fee. AMD or Intel cannot do the same with Gsync. It's really that simple.

Really, grasping at straws only weakens your arguments. Everyone understands what open and closed means and your attempts to creatively redefine them are a failure. The need to add hardware does not make a standard closed - USB 3.1 is an open standard even though vendors must add new chips to support it. It is open because every vendor can add those chips without license fee to anyone else. Freesync is open - Nvidia, AMD or Intel. Gsync is not. Case closed.

chizow - Friday, March 20, 2015 - link

Hey, AMD designed the spec, they should certainly know better than anyone what can and cannot support it, especially given MANY OF THEIR OWN RELEVANT GCN cards CANNOT support FreeSync. I mean if it was such a trivial matter to support FreeSync, why can't any of AMD's GCN1.0 cards support it? Good question huh?

2nd part, for argument's sake, I honestly hope Nvidia supports FreeSync, because they can just keep supporting G-Sync as their premium option allowing their users to use both monitors. Would be bad news for AMD however, as that would be even 1 less reason to buy a Radeon card.

Crunchy005 - Friday, March 20, 2015 - link

Not really, Radeon cards compete well with Nvidia; the two leapfrog and both offer better value depending on what time you look at what is available. Also the older GCN1.0 cards most likely don't have the hardware to support it, like the unconfirmed Nvidia story above, I myself am assuming here. Nvidia created a piece of hardware that will get them more money that has to be added into a monitor that would support older cards. Hard to change an architecture that's already released. Nvidia did well by making it the way they did, it offered a larger selection of cards to use even if it was a higher price. But now that there is an open standard things will shift and the next gen from AMD, I'm sure, will all support FreeSync, broadening the available cards for FreeSync. The fact that G-Sync still has a very limited amount of very expensive monitors makes it a tough argument that it is in any way winning, especially when by next month FreeSync will have just as many options at a lower price. You just can't ever admit that AMD possibly did something well and that Nvidia is going to be fighting a very steep uphill battle to compete on this currently niche technology.

Also, let's add the fact that your article works against you.

"The question now is: why is this happening and does it have anything to do with G-Sync or FreeSync?...It could be panel technology, it could be VRR technology or it could be settings in the monitor itself. We will be diving more into the issue as we spend more time with different FreeSync models.

For its part, AMD says that ghosting is an issue it is hoping to lessen on FreeSync monitors by helping partners pick the right components (Tcon, scalars, etc.) and to drive a “fast evolution” in this area."

Even the article you posted and used for your argument says it is not freeSync but the monitor technology itself. You have a knack for trying to target anything wrong against AMD when it is the monitor manufacturer that has caused this problem.

chizow - Friday, March 20, 2015 - link

So you do agree, it is very well possible Nvidia can't actually support FreeSync for the same reasons most of AMD's own GPUs can't support it then? Is AMD just choosing to screw their customers by not supporting their own spec? Maybe they are trying to force you to buy a new AMD card so you can't see how bad FreeSync is at low FPS?

Last bit of first paragraph. LOL, it's like AMD fans just don't get it. Nvidia, the market leader that holds ~70% of the dGPU market (check any metric, JPR, Steam survey, your next LAN party, online guild, whatever) that supports 100% of their GPUs since 2012 with G-Sync and has at least 8 panels on the market has the uphill battle while AMD, the one that is fighting for relevance, supports only a fraction of their ~30% dGPU share with FreeSync, has half the monitors, and ultimately, has the worst solution. But somehow Nvidia has the uphill battle in this niche market? AMD fans willing to actually buy expensive niche equipment is as niche as it gets lol.

And last part, uh, no. They are asking a rhetorical question they hope to answer and they do identify G-Sync and FreeSync as possible culprits with a follow-on catchall with VRR, but as I said the test is simple enough on these panels, do VSync ON/OFF and FreeSync and see under what conditions the panel ghosts. I would be shocked if they ghost with standard fixed refresh, so if the problem only exhibits itself with FreeSync enabled, it is clearly a problem inherent with FreeSync.

Also, if you read the comments by PCPer's co-editor Allyn, you will see he also believes it is a problem with FreeSync.

Crunchy005 - Saturday, March 21, 2015 - link

Well if that is true, not saying it is, then that would be an issue with the VRR technology built into the monitors scaler, not a free sync issues. Free sync uses an open standard technology so it is not freesync itself but the tech in the monitor, and how the manufacturers scaler handles the refresh rates. Also your argument that is falls apart at low refresh rates is again not really an AMD issue. The freesync implementation can go down as low as 9hz. But since freesync relies on the manufacturer to make a monster that can go that low there is a limitation there. Obviously an open standard will have more issue than a more closed proprietary system, ie. Mac vs windows. Freesync has to support different hardware and dealers across the board and not the exact same proprietary thing every time.

The fact that the amount of monitors available for gsync in 18 months has only reached 8 models should tell you something there. Obviously manufacturers don't want to build monitors that cost them more to build that they have to sell at a higher price for a feature that is not selling much at all yet. But hey the fact that they have a new standard being built into newonitors that can be supported by several large brand names and if cost then almost nothing extra to build, and it can be used with non display port devices? Well that opens a much larger customer base for those monitors. Smart business there.

I'm sure as time goes on monitor manufacturers will build higher quality parts that will solve the ghosting issue, but the fact remains that AMD was able to accomplish essentially the same thing as Nvidia using an open standard that is working it's way into monitors. Also those monitors are still able to target a larger audience. Proprietary never wins out in cases like this.

Gsync is not enough of a benefit over freesync to justify the cost and in time it will be more widely adopted as companies who make monitors stop wanting to pay the extra to Nvidia. Although bent has a hybrid monitor for gsync, I could see a hybrid gsync/freesync monitor in the future(graphics agnostic, sort of).

Crunchy005 - Saturday, March 21, 2015 - link

Wow spelling errors gallore, this I wrote on my phone so ya auto correct is stupid.

chizow - Saturday, March 21, 2015 - link

The fact the number of G-Sync panels is only 8 reinforces what Nvidia has said all along, doing it right is hard, and instead of just hoping Mfgs. pick the right equipment (while throwing them under the bus like AMD is doing), Nvidia actually fine tunes their G-Sync module to every single panel that gets G-Sync certified. This takes engineering time and resources.

And how are you sure things will get better? It took AMD 15 months to produce what we see today and it is clearly riddled with problems that do not plague G-Sync. What if the sales of these panels are a flop and there is a high RMA rate attached to them due to the ghosting issues we have seen? Do you think AMD's typical "hey its an open standard, THEY messed it up" motto is doing any favors as they are now already blaming their Mfg. partners for this problem? Honestly, take a step back and watch history repeat itself. AMD once again releases a half-baked, half-supported solution, and then when problems arise, they don't take accountability for it.

Also, it sounds like you are acknowledging these problems do exist, so would you, in good conscience recommend that someone buy these, or wait for FreeSync panels 2.0 if they are indeed problems tied to hardware? Just wondering?

And how is G-Sync not worth the premium, given it does exactly as it said it would from Day 1, and has for some 18 months now without exhibiting these very same issues? Do you really think an extra $150 on a $600+ part is really going to make the difference if one solution provides what you want TODAY vs. the other that is a completely unknown commodity?

Just curious.

Crunchy005 - Monday, March 23, 2015 - link

It has one issue that you have pointed out, ghosting, so not riddled with problems. Also AMD had to wait for the standard to get into DisplayPort 1.2a; they had it working in 2013, but until VESA got it into DisplayPort they couldn't ship monitors that supported it, hence the 15 months you say it took to 'develop'.

So far all the reviews I have seen on FreeSync have been great, so yes. The only one that even mentions ghosting is the one that you posted and I'm sure that it's only noticeable with high speed cameras. Obviously the ghosting is not a big enough issue that it was even worth mentioning when under normal use it isn't noticeable, and you still get smooth playback on the monitor, same as G-Sync.

Last, yes $150 is 25% increase on $600 there so that is a significant increase in price, very relevant because some people have a thing called a budget.

chizow - Monday, March 23, 2015 - link

No, it's not the only issue, ghosting is just the most obvious and most problematic, but you also get flickering at low FPS because FreeSync does not have the onboard frame buffer to just double frames as needed to prevent this kind of pixel decay and flickering you see with FreeSync. It's really funny because these are all things (G-Sync module as smart scaler and frame/lookaside buffer) that AMD quizzically wondered why Nvidia needed extra hardware. Market leader, market follower, just another great example.

Another issue apparently is there is a perceivable difference in smoothness as the AMD driver kicks in and out of Vsync On/Off mode in its supported frame bands. This again, is mentioned in these previews but not covered in depth. My guess is because press had limited access to the machines again for these previews and they were done in controlled test environments with their own shipped versions only arriving around the time the embargo was lifted.

But yes you and anyone else should certainly wait for follow-up reviews because the ghosting and flickering was certainly visible without any need of high speed cameras

https://www.youtube.com/watch?v=-ylLnT2yKyA

25% increase is nothing if you want a working solution today, but yes a $600 investment in what AMD has shown would be a dealbreaker and waste of money imo, so if you are in the AMD camp or also need to spend another $300 on an AMD card for FreeSync you will certainly want to wait a bit longer.